Scraping movie reviews to a txt file

import requests
import bs4

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/84.0.4147.89 Safari/537.36'}

# Build the URLs of the first six comment pages (20 comments per page).
urls = []
for i in range(6):
    url = 'https://movie.douban.com/subject/25986180/comments?start='
    url = url + str(i * 20) + '&limit=20&sort=new_score&status=P'
    print(url)
    urls.append(url)

# Append every comment to the text file, one comment per line.
with open(r"C:\Users\84468\Desktop\movie.txt", 'a', encoding='utf-8') as f:
    for url in urls:
        response = requests.get(url, headers=headers)
        content = response.text
        soup = bs4.BeautifulSoup(content, 'html.parser')
        # Each review sits in a <div class="comment"> block.
        comment_divs = soup.find_all('div', attrs={'class': 'comment'})
        for tag in comment_divs:
            try:
                comment = tag.find('p', attrs={'class': ""}).text.strip()
            except AttributeError:
                # find() returned None: no matching <p> in this block, skip it.
                continue
            f.write(comment + '\n')

Running the script appends each comment to movie.txt, one per line.
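The page URLs in the scraper are assembled by string concatenation. As an alternative, here is a minimal sketch that builds the same query strings with the standard library's `urlencode`. The helper name `build_comment_urls` and its parameters are hypothetical and not part of the original script; the base URL and query parameters are copied from it.

```python
from urllib.parse import urlencode

# Base URL taken from the scraper above.
BASE_URL = 'https://movie.douban.com/subject/25986180/comments'


def build_comment_urls(pages=6, page_size=20):
    """Return the URLs of the first `pages` comment pages (hypothetical helper)."""
    urls = []
    for page in range(pages):
        # urlencode joins the key/value pairs with '&' and escapes them if needed.
        query = urlencode({
            'start': page * page_size,
            'limit': page_size,
            'sort': 'new_score',
            'status': 'P',
        })
        urls.append(f'{BASE_URL}?{query}')
    return urls
```

Calling `build_comment_urls()` should yield the same six URLs that the loop in the scraper prints, starting at `start=0` and ending at `start=100`.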

Word cloud code:

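import matplotlib.pyplot as plt
import jieba
from wordcloud import WordCloud

# Read the scraped comments back from the txt file.
with open(r'C:\Users\84468\Desktop\movie.txt', 'r', encoding='utf-8') as f:
    text = f.read()

# Segment the Chinese text with jieba (accurate mode by default).
# cut() returns a generator of words; without segmentation the
# Chinese word cloud cannot be generated correctly.
cut_text = jieba.cut(text)

# Join the words with a separator to form one string; otherwise
# the word cloud cannot be drawn.
result = " ".join(cut_text)

# font_path points to a font that contains Chinese glyphs (FangSong).
wc = WordCloud(background_color='white', width=500, height=300,
               max_font_size=50, min_font_size=10,
               font_path=r"c:\windows\fonts\simfang.ttf").generate(result)

plt.imshow(wc, interpolation='bilinear')
plt.axis("off")
plt.show()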

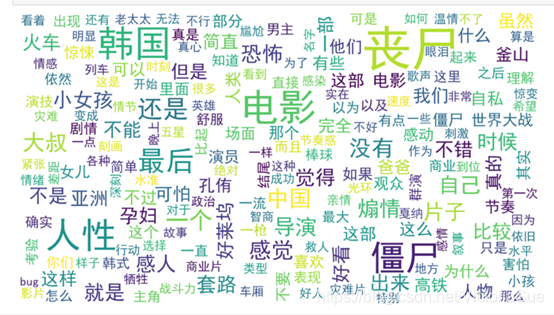

结果如下:
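Word clouds of raw comments tend to be dominated by particles and single characters. The following is a minimal sketch, not part of the original post, of counting segmented words while skipping stop words and very short tokens. The function `count_words`, the tiny `STOPWORDS` set, and the sample tokens are all hypothetical; in practice the tokens would come from `jieba.cut(text)`.

```python
from collections import Counter

# Hypothetical stop-word list, for illustration only.
STOPWORDS = {'的', '了', '是', '我', '也', '都', '很'}


def count_words(tokens, stopwords=STOPWORDS, min_length=2):
    """Count tokens, skipping stop words and tokens shorter than min_length."""
    counts = Counter()
    for token in tokens:
        word = token.strip()  # drop surrounding whitespace and newlines
        if len(word) < min_length or word in stopwords:
            continue
        counts[word] += 1
    return counts


# Sample tokens standing in for the output of jieba.cut(text).
tokens = ['电影', '的', '剧情', '很', '好', '电影', '\n', '剧情', '电影']
frequencies = count_words(tokens)
print(frequencies.most_common(2))  # [('电影', 3), ('剧情', 2)]
```

The resulting counts could then be passed to `WordCloud.generate_from_frequencies` in place of `generate(result)`, so that only the filtered words are drawn.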
