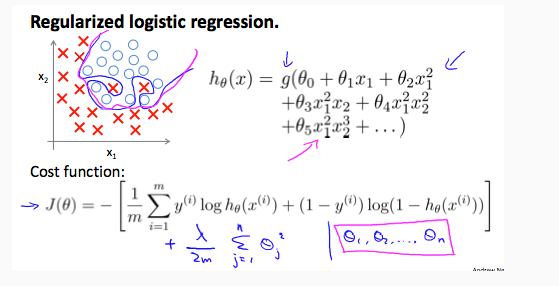

Regularized Logistic Regression

We can regularize logistic regression in a similar way that we regularize linear regression. As a result, we can avoid overfitting. The following image shows how the regularized function, displayed by the pink line, is less likely to overfit than the non-regularized function represented by the blue line:

Cost Function

Recall that our cost function for logistic regression was:

J(θ)=−1m∑mi=1[y(i) log(hθ(x(i)))+(1−y(i)) log(1−hθ(x(i)))]

We can regularize this equation by adding a term to the end:

| J(θ)=−1m∑mi=1[y(i) log(hθ(x(i)))+(1−y(i)) log(1−hθ(x(i)))]+λ2m∑nj=1θ2j |

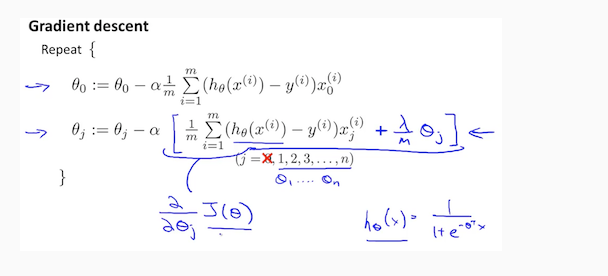

The second sum, ∑nj=1θ2j means to explicitly exclude the bias term, θ0 . I.e. the θ vector is indexed from 0 to n (holding n+1 values, θ0 through θn ), and this sum explicitly skips θ0 , by running from 1 to n, skipping 0. Thus, when computing the equation, we should continuously update the two following equations:

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?