Writing a Scrapy Spider to Crawl the Douban Rankings

Step 1: Create the Scrapy project

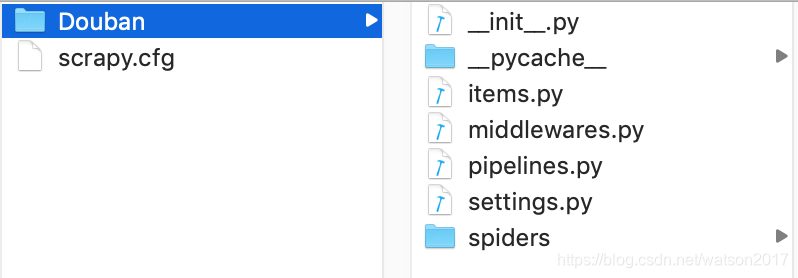

scrapy startproject Douban命令执行后,会创建一个Douban文件夹,结构如下

Step 2: Write the items file, defining a field for each piece of data to scrape

    # -*- coding: utf-8 -*-
    import scrapy


    class DoubanItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        title = scrapy.Field()      # movie title
        movieInfo = scrapy.Field()  # descriptive text for the movie
        star = scrapy.Field()       # rating
        quote = scrapy.Field()      # one-line quote
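The fields are declared up front because a Scrapy Item acts like a dict that only accepts its declared keys. The sketch below imitates that behaviour in plain Python. `StrictRecord` is a hypothetical stand-in written for illustration, not Scrapy's actual implementation, and the values are placeholders.

```python
class StrictRecord(dict):
    """Hypothetical stand-in for a Scrapy Item: a dict limited to declared keys."""

    # Same field names as DoubanItem.
    fields = ("title", "movieInfo", "star", "quote")

    def __setitem__(self, key, value):
        # Reject keys that were not declared, as scrapy.Item does.
        if key not in self.fields:
            raise KeyError(f"{type(self).__name__} does not support field: {key}")
        super().__setitem__(key, value)


record = StrictRecord()
record["title"] = "placeholder title"  # accepted: declared field

try:
    record["year"] = "placeholder"     # rejected: not a declared field
    rejected = False
except KeyError:
    rejected = True

print(dict(record), rejected)
```

A misspelled field name in the spider therefore fails loudly instead of silently producing an extra column in the output.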

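Step 3: Write the spider file

From inside the Douban directory, create a basic spider class with the following command:

    # doubanPos is the spider name; douban.com is the domain the spider is restricted to
    scrapy genspider doubanPos "douban.com"

The command creates a doubanPos.py file in the spiders folder. Edit it as follows: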

    # -*- coding: utf-8 -*-
    import scrapy

    from Douban.items import DoubanItem


    class DoubanposSpider(scrapy.Spider):
        """
        Crawl Douban movies, e.g. https://movie.douban.com/top250?start=50&filter=
        """
        # Spider name
        name = 'doubanPos'
        # Domains the spider is allowed to crawl
        allowed_domains = ['douban.com']
        url = 'https://movie.douban.com/top250'
        # Start URL
        start_urls = [url]

        def parse(self, response):
            movies = response.xpath('//div[@class="info"]')
            for eachmv in movies:
                # Create a fresh item per movie so yielded items do not share state
                item = DoubanItem()
                item['title'] = eachmv.xpath('div[@class="hd"]/a/span/text()').extract()[0]
                movieInfo = eachmv.xpath('div[@class="bd"]/p/text()').extract()
                # Join the stripped text fragments into a single string
                item['movieInfo'] = "".join(infostr.strip() for infostr in movieInfo)
                item['star'] = eachmv.xpath('div[@class="bd"]/div[@class="star"]/span[@class="rating_num"]/text()').extract()[0]
                # An entry without a quote yields an empty string instead of raising IndexError
                item['quote'] = eachmv.xpath('div[@class="bd"]/p[@class="quote"]/span/text()').extract_first(default="")
                yield item

            # Request the next page and handle its response with self.parse
            nexturl = response.xpath('//span[@class="next"]/link/@href').extract()
            if nexturl:
                nexturl = nexturl[0]
                yield scrapy.Request(self.url + nexturl, callback=self.parse)

Step 4: Write the pipelines file

    # -*- coding: utf-8 -*-
    import json


    class DoubanPipeline(object):
        """
        Purpose: save item data
        """
        def __init__(self):
            # Opened in binary mode, so text is encoded to UTF-8 before writing
            self.jsonfile = open("douban.json", "wb")

        def process_item(self, item, spider):
            # One JSON object per line, each followed by a comma
            text = json.dumps(dict(item), ensure_ascii=False) + ",\n"
            self.jsonfile.write(text.encode("utf-8"))
            return item

        def close_spider(self, spider):
            self.jsonfile.close()
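Because the pipeline ends every line with a comma, douban.json as a whole is not a single valid JSON document, but it can be read back line by line. The following is a minimal sketch of one way to do that with the standard library. `load_items` is a hypothetical helper, and the sample records are placeholders written in the same format that process_item uses.

```python
import json
import os
import tempfile


def load_items(path):
    """Parse a file in which every line is a JSON object followed by a comma."""
    items = []
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if not line:
                continue
            # Drop the trailing comma appended by the pipeline.
            items.append(json.loads(line.rstrip(",")))
    return items


# Placeholder records, written the same way process_item writes them.
sample = [
    {"title": "示例电影", "star": "9.0"},
    {"title": "Example", "star": "8.5"},
]
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "douban.json")
    with open(path, "wb") as f:
        for rec in sample:
            text = json.dumps(rec, ensure_ascii=False) + ",\n"
            f.write(text.encode("utf-8"))
    loaded = load_items(path)

print(loaded == sample)
```

Passing ensure_ascii=False keeps Chinese titles readable in the file, which is why the text has to be encoded as UTF-8 on write and decoded as UTF-8 on read.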

Step 5: Configure the settings file (main settings)

    DEFAULT_REQUEST_HEADERS = {
        'User-Agent': 'Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)',
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    }

    ITEM_PIPELINES = {
        'Douban.pipelines.DoubanPipeline': 300,
    }

Run the following command to start the crawl:

    # doubanPos is the spider name
    scrapy crawl doubanPos

Scrapy tutorial: https://scrapy-chs.readthedocs.io/zh_CN/0.24/intro/overview.html
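While the crawl runs, the spider builds each next-page URL by appending the href of the next-page link (a query string such as ?start=25&filter=) to the base URL. Below is a minimal standard-library sketch of that step, assuming the href has this query-only form. `next_page_url` is a hypothetical helper, and urljoin is swapped in for the spider's plain string concatenation because it also copes with an absolute href.

```python
from urllib.parse import urljoin

BASE_URL = "https://movie.douban.com/top250"


def next_page_url(base, href):
    """Resolve a next-page href (relative or absolute) against the base URL."""
    return urljoin(base, href)


# Query-only href: the path of the base URL is kept and the query is replaced.
relative = next_page_url(BASE_URL, "?start=25&filter=")

# Absolute href: returned unchanged.
absolute = next_page_url(BASE_URL, "https://movie.douban.com/top250?start=50&filter=")

print(relative)
print(absolute)
```

Inside a Scrapy callback, response.urljoin(href) performs the same resolution against the URL of the current response.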
