Kafka

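1 Introduction

Kafka is a high-throughput distributed publish-subscribe messaging system that can handle all of the activity-stream data of a consumer-scale website. For details, see the Apache Kafka documentation.

2 Quick start

2.1 Download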

https://www.apache.org/dyn/closer.cgi?path=/kafka/1.0.0/kafka_2.11-1.0.0.tgz

tar -xzf kafka_2.11-1.0.0.tgz

cd kafka_2.11-1.0.0/

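2.2 Start ZooKeeper

The steps below assume a ZooKeeper ensemble is already running; the examples use one at 192.168.10.11, ports 2181 to 2183.

2.3 Start the Kafka server

2.3.1 Edit config/server.properties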

zookeeper.connect=192.168.10.11:2181,192.168.10.11:2182,192.168.10.11:2183

Change zookeeper.connect to the address of your own ZooKeeper ensemble, in the form zookeeper.connect=ip:port[,ip:port]
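The ip:port[,ip:port] format can be checked before the broker is started. The following is a minimal sketch rather than anything Kafka provides: the class ZkConnectString and its parse method are hypothetical names, and the sketch assumes that no chroot path (such as /kafka) is appended to the connect string.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper (not a Kafka API): splits a zookeeper.connect value
// of the form ip:port[,ip:port] into host/port pairs and validates each one.
public class ZkConnectString {

    // Returns one {host, port} pair per comma-separated entry.
    public static List<String[]> parse(String connect) {
        List<String[]> endpoints = new ArrayList<>();
        for (String entry : connect.split(",")) {
            String trimmed = entry.trim();
            int colon = trimmed.lastIndexOf(':');
            // Reject entries with no host or no port.
            if (colon <= 0 || colon == trimmed.length() - 1) {
                throw new IllegalArgumentException("expected ip:port but got '" + trimmed + "'");
            }
            String host = trimmed.substring(0, colon);
            // NumberFormatException is a subclass of IllegalArgumentException.
            int port = Integer.parseInt(trimmed.substring(colon + 1));
            if (port < 1 || port > 65535) {
                throw new IllegalArgumentException("port out of range in '" + trimmed + "'");
            }
            endpoints.add(new String[] {host, Integer.toString(port)});
        }
        return endpoints;
    }

    public static void main(String[] args) {
        String connect = "192.168.10.11:2181,192.168.10.11:2182,192.168.10.11:2183";
        for (String[] ep : parse(connect)) {
            System.out.println("host=" + ep[0] + " port=" + ep[1]);
        }
    }
}
```

Running main prints one host/port line per ZooKeeper node; a malformed entry such as a bare IP address raises IllegalArgumentException instead of reaching the broker.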

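2.3.2 Start

bin/kafka-server-start.sh config/server.properties

The broker listens on port 9092 by default; this can be changed in the configuration file. During startup the log shows Kafka creating a number of znodes in ZooKeeper: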

[2017-12-15 11:35:20,632] INFO Creating /controller (is it secure? false) (kafka.utils.ZKCheckedEphemeral)

[2017-12-15 11:35:20,650] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)

[2017-12-15 11:35:20,700] INFO [TransactionCoordinator id=0] Starting up. (kafka.coordinator.transaction.TransactionCoordinator)

[2017-12-15 11:35:20,702] INFO [TransactionCoordinator id=0] Startup complete. (kafka.coordinator.transaction.TransactionCoordinator)

[2017-12-15 11:35:20,703] INFO [Transaction Marker Channel Manager 0]: Starting (kafka.coordinator.transaction.TransactionMarkerChannelManager)

[2017-12-15 11:35:20,848] INFO Creating /brokers/ids/0 (is it secure? false) (kafka.utils.ZKCheckedEphemeral)

[2017-12-15 11:35:20,889] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)

[2017-12-15 11:35:20,891] INFO Registered broker 0 at path /brokers/ids/0 with addresses: EndPoint(localhost,9092,ListenerName(PLAINTEXT),PLAINTEXT) (kafka.utils.ZkUtils)

Check in ZooKeeper:

cd $ZOOKEEPER_DIR  # your own ZooKeeper installation directory

./bin/zkCli.sh -server 192.168.10.11:2181  # connect with the ZooKeeper CLI client

List the root znodes:

ls /

[cluster, controller, controller_epoch, brokers, admin, isr_change_notification, consumers, log_dir_event_notification, latest_producer_id_block, config]

2.4 Create a topic

# create a topic named 'test'

bin/kafka-topics.sh --create --zookeeper 192.168.10.11:2181 --replication-factor 1 --partitions 1 --topic test

Broker log output:

[2017-12-15 11:48:03,335] INFO Created log for partition [test,0] in /tmp/kafka-logs with properties {compression.type -> producer, message.format.version -> 1.0-IV0, file.delete.delay.ms -> 60000, max.message.bytes -> 1000012, min.compaction.lag.ms -> 0, message.timestamp.type -> CreateTime, min.insync.replicas -> 1, segment.jitter.ms -> 0, preallocate -> false, min.cleanable.dirty.ratio -> 0.5, index.interval.bytes -> 4096, unclean.leader.election.enable -> false, retention.bytes -> -1, delete.retention.ms -> 86400000, cleanup.policy -> [delete], flush.ms -> 9223372036854775807, segment.ms -> 604800000, segment.bytes -> 1073741824, retention.ms -> 604800000, message.timestamp.difference.max.ms -> 9223372036854775807, segment.index.bytes -> 10485760, flush.messages -> 9223372036854775807}. (kafka.log.LogManager)

[2017-12-15 11:48:03,336] INFO [Partition test-0 broker=0] No checkpointed highwatermark is found for partition test-0 (kafka.cluster.Partition)

[2017-12-15 11:48:03,340] INFO Replica loaded for partition test-0 with initial high watermark 0 (kafka.cluster.Replica)

[2017-12-15 11:48:03,342] INFO [Partition test-0 broker=0] test-0 starts at Leader Epoch 0 from offset 0. Previous Leader Epoch was: -1 (kafka.cluster.Partition)

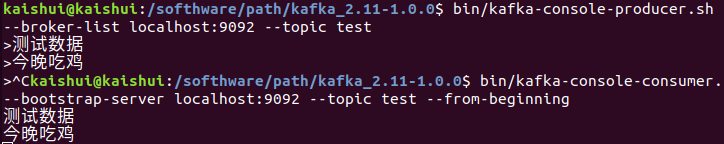

2.5 Send messages to the topic

bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test

2.6 Start a consumer

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning

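2.7 Start a Kafka cluster

So far only a single broker is running; in production it is better to run a cluster. An odd number of nodes is the usual recommendation for the ZooKeeper ensemble, which depends on a majority quorum; the Kafka broker count itself does not need to be odd.

Make two more copies of the default configuration: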

cp config/server.properties config/server-1.properties

cp config/server.properties config/server-2.properties

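2.7.1 Edit the configuration

config/server-1.properties:

# must be unique for every broker in the cluster
broker.id=1

# port this broker listens on
listeners=PLAINTEXT://:9093

# data (log segment) directory; the shipped server.properties sets log.dirs, which takes precedence over log.dir
log.dirs=/tmp/kafka-logs-1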

config/server-2.properties:

broker.id=2

listeners=PLAINTEXT://:9094

log.dirs=/tmp/kafka-logs-2
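Each extra broker differs from the default configuration in only three settings, so the overrides can be derived from the broker id. The sketch below is illustrative only: BrokerConfigs and overridesFor are hypothetical names, the rule "port = 9092 + broker id" is an assumption taken from the values used here, and the data directory is written under log.dirs, the key that the shipped server.properties sets.

```java
import java.util.Properties;

// Hypothetical helper (not part of Kafka): derives the three per-broker
// settings used in this walkthrough from the broker id.
public class BrokerConfigs {

    static final int BASE_PORT = 9092; // port of broker 0 in this walkthrough

    public static Properties overridesFor(int brokerId) {
        Properties p = new Properties();
        p.setProperty("broker.id", Integer.toString(brokerId));
        // Assumption: every broker runs on the same host, one port apart.
        p.setProperty("listeners", "PLAINTEXT://:" + (BASE_PORT + brokerId));
        p.setProperty("log.dirs", "/tmp/kafka-logs-" + brokerId);
        return p;
    }

    public static void main(String[] args) {
        for (int id = 1; id <= 2; id++) {
            Properties p = overridesFor(id);
            System.out.println("# config/server-" + id + ".properties");
            for (String key : new String[] {"broker.id", "listeners", "log.dirs"}) {
                System.out.println(key + "=" + p.getProperty(key));
            }
        }
    }
}
```

The printed lines are meant to be pasted over the matching keys in each copied file, not appended as duplicates.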

2.7.2 Start the two new brokers

bin/kafka-server-start.sh config/server-1.properties &

bin/kafka-server-start.sh config/server-2.properties &

2.7.3 Create a new topic in the cluster, for comparison with the single-node case

bin/kafka-topics.sh --create --zookeeper 192.168.10.11:2181 --replication-factor 3 --partitions 1 --topic my-replicated-topic

2.7.4 Describe the topic

bin/kafka-topics.sh --describe --zookeeper 192.168.10.11:2181 --topic my-replicated-topic

Topic:my-replicated-topic PartitionCount:1 ReplicationFactor:3 Configs:

Topic: my-replicated-topic Partition: 0 Leader: 0 Replicas: 0,1,2 Isr: 0,1,2

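leader : the broker responsible for all reads and writes for the given partition; the followers replicate from it

replicas : the broker.id of every broker assigned a replica of this partition, whether or not it is the leader or even currently alive

isr : the in-sync replicas, i.e. the subset of replicas that are currently alive and caught up with the leader

2.7.4.1 Now describe the topic 'test' that was created on the single node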

bin/kafka-topics.sh --describe --zookeeper 192.168.10.11:2181 --topic test

Topic:test PartitionCount:1 ReplicationFactor:1 Configs:

Topic: test Partition: 0 Leader: 0 Replicas: 0 Isr: 0

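It lives only on broker 0, with no replicas elsewhere in the cluster, because it was created with --replication-factor 1 while only one broker was running.

2.7.4.2 Test with a console producer and consumer

bin/kafka-console-producer.sh --broker-list localhost:9092 --topic my-replicated-topic

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --from-beginning --topic my-replicated-topic

The behaviour is no different from the single-node case.

2.7.4.3 Simulate a crash of broker.id = 0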

netstat -nap | grep 9092

tcp6 0 0 :::9092 :::* LISTEN 11020/java

kill -9 11020

2.7.4.4 Describe the topic my-replicated-topic again

bin/kafka-topics.sh --describe --zookeeper 192.168.10.11:2181 --topic my-replicated-topic

Topic:my-replicated-topic PartitionCount:1 ReplicationFactor:3 Configs:

Topic: my-replicated-topic Partition: 0 Leader: 1 Replicas: 0,1,2 Isr: 1,2

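Leadership has moved to broker 1 and broker 0 has dropped out of Isr, but broker 0 is still listed under Replicas, because that list is the replica assignment and does not reflect liveness.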
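The gap between the Replicas and Isr fields of a describe line can also be computed rather than read by eye. This is a rough sketch, not a Kafka API: DescribeLine and outOfSync are hypothetical names, and the parsing assumes the field layout shown in the output above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper (not a Kafka API): reports which assigned replicas
// are missing from the in-sync set on one line of --describe output.
public class DescribeLine {

    // Captures the comma-separated broker ids after "Replicas:" and "Isr:".
    private static final Pattern FIELDS =
            Pattern.compile("Replicas:\\s*([\\d,]+)\\s+Isr:\\s*([\\d,]*)");

    public static List<String> outOfSync(String line) {
        Matcher m = FIELDS.matcher(line);
        if (!m.find()) {
            throw new IllegalArgumentException("no Replicas/Isr fields in: " + line);
        }
        List<String> missing = new ArrayList<>(Arrays.asList(m.group(1).split(",")));
        // Whatever is assigned but not in sync stays in the list.
        missing.removeAll(Arrays.asList(m.group(2).split(",")));
        return missing;
    }

    public static void main(String[] args) {
        String line = "Topic: my-replicated-topic Partition: 0 Leader: 1 Replicas: 0,1,2 Isr: 1,2";
        System.out.println("replicas not in sync: " + outOfSync(line)); // prints [0]
    }
}
```

For the line captured after broker 0 was killed, the result is the single id 0; for the earlier output, where Isr was 0,1,2, the list is empty.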

3 Java Kafka client example

3.1 Add the Maven dependencies

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>1.0.0</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>1.0.0</version>

</dependency>

3.2 Sending messages with a producer

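import java.util.Properties;
import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.Producer;
import org.apache.kafka.clients.producer.ProducerRecord;

public class DemoProducer {
    public static void main(String[] args) throws Exception {
        Properties props = new Properties();
        // Kafka brokers used for the initial connection
        props.put("bootstrap.servers", "192.168.10.12:9092,192.168.10.12:9093,192.168.10.12:9094");
        // wait until all in-sync replicas have acknowledged each record
        props.put("acks", "all");
        props.put("retries", 0);
        props.put("batch.size", 16384);
        props.put("linger.ms", 1);
        // logical name of this client, used in broker logs and metrics
        props.put("client.id", "DemoProducer");
        props.put("buffer.memory", 33554432);
        // serializers for record keys and values
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        Producer<String, String> producer = new KafkaProducer<>(props);
        for (int i = 0; i < 10; i++) {
            // send() is asynchronous; close() below flushes anything still buffered
            producer.send(new ProducerRecord<>("my-topic", Integer.toString(i), Integer.toString(i)));
        }
        producer.close();
    }
}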

For the configuration options, see the KafkaProducer javadocs.

3.3 Consumer

import java.util.Arrays;
import java.util.Properties;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

public class DemoConsumer {
    public static void main(String[] args) {
        Properties props = new Properties();
        // Kafka brokers used for the initial connection
        props.put("bootstrap.servers", "192.168.10.12:9092,192.168.10.12:9093,192.168.10.12:9094");
        // consumer group this consumer belongs to
        props.put("group.id", "DemoConsumer");
        // commit offsets automatically once per second
        props.put("enable.auto.commit", "true");
        props.put("auto.commit.interval.ms", "1000");
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
        // topics to subscribe to
        consumer.subscribe(Arrays.asList("my-topic"));
        while (true) {
            // wait at most 100 ms for new records
            ConsumerRecords<String, String> records = consumer.poll(100);
            for (ConsumerRecord<String, String> record : records) {
                System.out.printf("test offset = %d, key = %s, value = %s%n", record.offset(), record.key(), record.value());
            }
        }
    }
}
