我尝试在SparkSQL中执行groupby,但大部分行都丢失了。

spark.sql(

"""

| SELECT

| website_session_id,

| MIN(website_pageview_id) as min_pv_id

|

| FROM website_pageviews

| GROUP BY website_session_id

| ORDER BY website_session_id

|

|

|""".stripMargin).show(10,truncate = false)

+------------------+---------+

|website_session_id|min_pv_id|

+------------------+---------+

|1 |1 |

|10 |15 |

|100 |168 |

|1000 |1910 |

|10000 |20022 |

|100000 |227964 |

|100001 |227966 |

|100002 |227967 |

|100003 |227970 |

|100004 |227973 |

+------------------+---------+

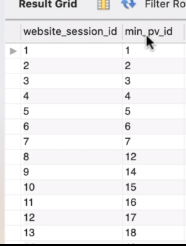

MySQL中的相同查询会得到如下所示的结果:

最好的方法是什么,以便在查询中获取所有行。

我已经检查了其他与此相关的答案,比如连接以获取所有行等,但是我想知道是否有其他方法可以像MySQL一样得到结果?

3684

3684

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?