1. The Leaf Framework

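Meituan's Leaf is an ID-generation framework built for generating unique IDs in distributed systems. For details, see the official repository, GitHub - Meituan-Dianping/Leaf: Distributed ID Generate Service. This article strips Leaf's FreeMarker part out and uses Spring Boot auto-configuration to package Leaf as a starter, so that, like any other starter, you only add the dependency and call the API directly.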

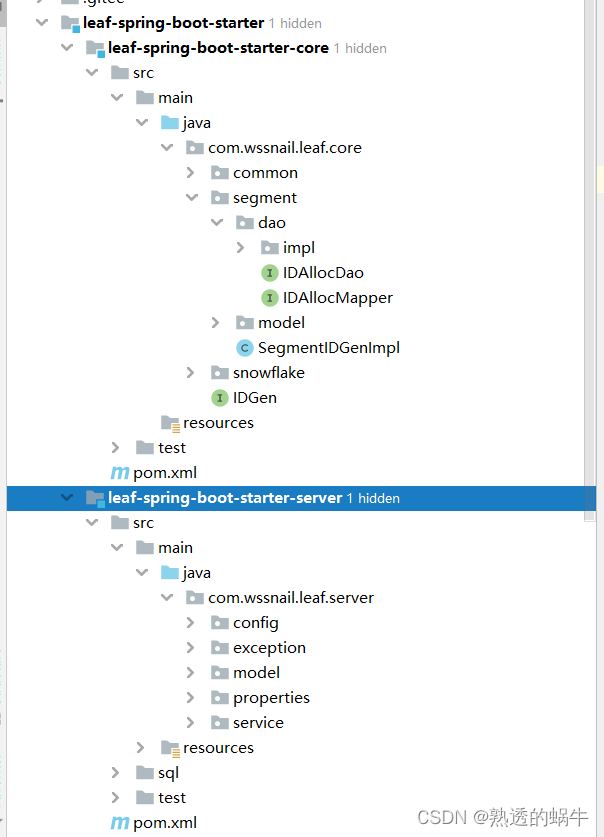

2. Wrapping Leaf as Your Own Starter

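The project is split into two modules, leaf-spring-boot-starter-core and leaf-spring-boot-starter-server, each covered below.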

2.1 Parts that need to be modified

In leaf-spring-boot-starter-core, IDAllocDaoImpl must reference your own mapper interface:

```java
package com.wssnail.leaf.core.segment.dao.impl;

import com.wssnail.leaf.core.segment.dao.IDAllocDao;
import com.wssnail.leaf.core.segment.dao.IDAllocMapper;
import com.wssnail.leaf.core.segment.model.LeafAlloc;
import org.apache.ibatis.mapping.Environment;
import org.apache.ibatis.session.Configuration;
import org.apache.ibatis.session.SqlSession;
import org.apache.ibatis.session.SqlSessionFactory;
import org.apache.ibatis.session.SqlSessionFactoryBuilder;
import org.apache.ibatis.transaction.TransactionFactory;
import org.apache.ibatis.transaction.jdbc.JdbcTransactionFactory;

import javax.sql.DataSource;
import java.util.List;

/**
 * @description: The statement IDs used here must match the path of your own mapper interface,
 * because MyBatis executes the mapper interface's methods through a proxy and registers each
 * statement as "<mapper fully qualified name>.<method name>".
 * @author xiaojie
 * @date 2022/8/28 17:17
 * @version 1.0
 */
public class IDAllocDaoImpl implements IDAllocDao {

    // Derived from the class itself, so it follows whatever package IDAllocMapper lives in
    private static final String NAMESPACE = IDAllocMapper.class.getName() + ".";

    private final SqlSessionFactory sqlSessionFactory;

    public IDAllocDaoImpl(DataSource dataSource) {
        TransactionFactory transactionFactory = new JdbcTransactionFactory();
        Environment environment = new Environment("development", transactionFactory, dataSource);
        Configuration configuration = new Configuration(environment);
        // Registers the statements declared on IDAllocMapper under NAMESPACE
        configuration.addMapper(IDAllocMapper.class);
        sqlSessionFactory = new SqlSessionFactoryBuilder().build(configuration);
    }

    @Override
    public List<LeafAlloc> getAllLeafAllocs() {
        // try-with-resources closes the session even if the query throws
        try (SqlSession sqlSession = sqlSessionFactory.openSession(false)) {
            return sqlSession.selectList(NAMESPACE + "getAllLeafAllocs");
        }
    }

    @Override
    public LeafAlloc updateMaxIdAndGetLeafAlloc(String tag) {
        try (SqlSession sqlSession = sqlSessionFactory.openSession()) {
            // Advance max_id by the tag's step, then read the row back in the same transaction
            sqlSession.update(NAMESPACE + "updateMaxId", tag);
            LeafAlloc result = sqlSession.selectOne(NAMESPACE + "getLeafAlloc", tag);
            sqlSession.commit();
            return result;
        }
    }

    @Override
    public LeafAlloc updateMaxIdByCustomStepAndGetLeafAlloc(LeafAlloc leafAlloc) {
        try (SqlSession sqlSession = sqlSessionFactory.openSession()) {
            // Same as above, but with the step carried in leafAlloc
            sqlSession.update(NAMESPACE + "updateMaxIdByCustomStep", leafAlloc);
            LeafAlloc result = sqlSession.selectOne(NAMESPACE + "getLeafAlloc", leafAlloc.getKey());
            sqlSession.commit();
            return result;
        }
    }

    @Override
    public List<String> getAllTags() {
        try (SqlSession sqlSession = sqlSessionFactory.openSession(false)) {
            return sqlSession.selectList(NAMESPACE + "getAllTags");
        }
    }
}
```
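Why the mapper path matters: MyBatis turns each call on a mapper interface into a lookup by statement ID, built from the interface's fully qualified name plus the method name, so a stale package name in the DAO above points at a statement that was never registered. The plain-JDK sketch below shows that mechanism with java.lang.reflect.Proxy. It is a simplified model, not MyBatis's own MapperProxy; DemoMapper and the statements map are hypothetical stand-ins.

```java
import java.lang.reflect.Proxy;
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

public class MapperProxySketch {

    // Hypothetical mapper interface standing in for IDAllocMapper
    public interface DemoMapper {
        String getLeafAlloc(String tag);
    }

    // Builds a proxy that resolves every call to "<interface FQN>.<method name>"
    // and runs the "statement" registered under that ID.
    @SuppressWarnings("unchecked")
    public static <T> T createMapper(Class<T> type, Map<String, Function<Object[], Object>> statements) {
        return (T) Proxy.newProxyInstance(
                type.getClassLoader(),
                new Class<?>[]{type},
                (proxy, method, args) -> {
                    String statementId = type.getName() + "." + method.getName();
                    Function<Object[], Object> statement = statements.get(statementId);
                    if (statement == null) {
                        // A wrong package in the ID ends up here
                        throw new IllegalArgumentException("Mapped statement not found: " + statementId);
                    }
                    return statement.apply(args);
                });
    }

    public static void main(String[] args) {
        Map<String, Function<Object[], Object>> statements = new HashMap<>();
        // Registered under the interface's real name, as MyBatis does for mapper methods
        statements.put(DemoMapper.class.getName() + ".getLeafAlloc", a -> "alloc for " + a[0]);

        DemoMapper mapper = createMapper(DemoMapper.class, statements);
        System.out.println(mapper.getLeafAlloc("order")); // prints: alloc for order
    }
}
```

Deriving the ID prefix from the Class object, as in the DAO, keeps the two sides in sync automatically.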

2.2 The leaf-spring-boot-starter-server module

a. Create the configuration properties class

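```java
package com.wssnail.leaf.server.properties;

import lombok.Data;
import org.springframework.boot.context.properties.ConfigurationProperties;

/**
 * @author xiaojie
 * @version 1.0
 * @description: configuration properties, bound from keys under "wssnail.mt.leaf"
 * @date 2022/8/26 0:18
 */
@Data
@ConfigurationProperties(prefix = "wssnail.mt.leaf")
public class LeafProperties {

    // Whether segment mode is enabled; defaults to false
    private Boolean segmentEnable = false;

    // JDBC URL of the database used by segment mode
    private String jdbcUrl;

    // Database username
    private String jdbcUsername;

    // Database password
    private String jdbcPassword;

    // Whether snowflake mode is enabled; defaults to false
    private Boolean snowflakeEnable = false;

    // Port this node registers in ZooKeeper (Leaf's leaf.snowflake.port); it is passed to
    // SnowflakeIDGenImpl as the service port, not as the ZooKeeper port. Defaults to 2181
    private Integer snowflakePort = 2181;

    // ZooKeeper connection address (host or host:port)
    private String snowflakeZkAddress = "127.0.0.1";
}
```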
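Spring Boot's relaxed binding lets the fields of LeafProperties be set with kebab-case keys under the prefix, for example wssnail.mt.leaf.segment-enable=true in application.properties. The sketch below, assuming only the JDK, derives those recommended keys from the field names; PropertyKeys and toKebabCase are hypothetical helpers for illustration, not part of Spring.

```java
import java.util.ArrayList;
import java.util.List;

public class PropertyKeys {

    // Converts a camelCase field name to the kebab-case form Spring Boot recommends,
    // e.g. "snowflakeZkAddress" -> "snowflake-zk-address"
    public static String toKebabCase(String fieldName) {
        StringBuilder sb = new StringBuilder();
        for (char c : fieldName.toCharArray()) {
            if (Character.isUpperCase(c)) {
                sb.append('-').append(Character.toLowerCase(c));
            } else {
                sb.append(c);
            }
        }
        return sb.toString();
    }

    // Prepends the @ConfigurationProperties prefix to each converted field name
    public static List<String> keys(String prefix, String... fieldNames) {
        List<String> result = new ArrayList<>();
        for (String name : fieldNames) {
            result.add(prefix + "." + toKebabCase(name));
        }
        return result;
    }

    public static void main(String[] args) {
        // Field names copied from LeafProperties
        // first line printed: wssnail.mt.leaf.segment-enable
        for (String key : keys("wssnail.mt.leaf",
                "segmentEnable", "jdbcUrl", "jdbcUsername", "jdbcPassword",
                "snowflakeEnable", "snowflakePort", "snowflakeZkAddress")) {
            System.out.println(key);
        }
    }
}
```

Each printed key is what a consuming application would set, e.g. wssnail.mt.leaf.jdbc-url for jdbcUrl.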

b. Create the auto-configuration class

```java
package com.wssnail.leaf.server.config;

import com.alibaba.druid.pool.DruidDataSource;
import com.wssnail.leaf.server.exception.InitException;
import com.wssnail.leaf.server.properties.LeafProperties;
import com.wssnail.leaf.server.service.SegmentService;
import com.wssnail.leaf.server.service.SnowflakeService;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.context.properties.EnableConfigurationProperties;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

import java.sql.SQLException;

/**
 * @author xiaojie
 * @version 1.0
 * @description: auto-configuration class. For Spring Boot to pick it up automatically it must be
 * listed in META-INF/spring.factories (Boot 2.x) or in
 * META-INF/spring/org.springframework.boot.autoconfigure.AutoConfiguration.imports (Boot 2.7+)
 * @date 2022/8/26 0:19
 */
@Configuration
@EnableConfigurationProperties(LeafProperties.class)
public class LeafAutoConfig {

    @Autowired
    private LeafProperties leafProperties;

    // Druid connection pool used by segment mode; Spring calls its close() on shutdown
    @Bean
    DruidDataSource dataSource() throws SQLException {
        DruidDataSource dataSource = new DruidDataSource();
        dataSource.setUrl(leafProperties.getJdbcUrl());
        dataSource.setUsername(leafProperties.getJdbcUsername());
        dataSource.setPassword(leafProperties.getJdbcPassword());
        dataSource.init();
        return dataSource;
    }

    @Bean
    SnowflakeService snowflakeService() throws InitException {
        return new SnowflakeService(leafProperties);
    }

    // The pool is injected as a parameter instead of calling dataSource() directly
    @Bean
    SegmentService segmentService(DruidDataSource dataSource) throws InitException {
        return new SegmentService(dataSource, leafProperties);
    }
}
```

c. Modify the service classes for segment-based and snowflake ID generation

```java
package com.wssnail.leaf.server.service;

import com.alibaba.druid.pool.DruidDataSource;
import com.wssnail.leaf.core.IDGen;
import com.wssnail.leaf.core.common.Result;
import com.wssnail.leaf.core.common.ZeroIDGen;
import com.wssnail.leaf.core.segment.SegmentIDGenImpl;
import com.wssnail.leaf.core.segment.dao.IDAllocDao;
import com.wssnail.leaf.core.segment.dao.impl.IDAllocDaoImpl;
import com.wssnail.leaf.server.exception.InitException;
import com.wssnail.leaf.server.properties.LeafProperties;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

// The explicit logger replaces Lombok's @Slf4j, which would have declared a second one
public class SegmentService {

    private static final Logger logger = LoggerFactory.getLogger(SegmentService.class);

    private IDGen idGen;
    private DruidDataSource dataSource;
    private LeafProperties leafProperties;

    public SegmentService(DruidDataSource dataSource, LeafProperties leafProperties) throws InitException {
        this.dataSource = dataSource;
        this.leafProperties = leafProperties;
        if (leafProperties.getSegmentEnable()) {
            // Config Dao
            IDAllocDao dao = new IDAllocDaoImpl(dataSource);
            // Config ID Gen
            idGen = new SegmentIDGenImpl();
            ((SegmentIDGenImpl) idGen).setDao(dao);
            if (idGen.init()) {
                logger.info("Segment Service Init Successfully");
            } else {
                throw new InitException("Segment Service Init Fail");
            }
        } else {
            // Segment mode disabled: fall back to the placeholder generator
            idGen = new ZeroIDGen();
            logger.info("Zero ID Gen Service Init Successfully");
        }
    }

    public Result getId(String key) {
        return idGen.get(key);
    }

    // Returns the segment generator, or null when segment mode is disabled
    public SegmentIDGenImpl getIdGen() {
        if (idGen instanceof SegmentIDGenImpl) {
            return (SegmentIDGenImpl) idGen;
        }
        return null;
    }
}
```
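Segment mode reserves IDs from the database in batches: each updateMaxIdAndGetLeafAlloc call advances a tag's max_id by its step, and the IDs in [max_id - step, max_id) are then handed out from memory without touching the database. The sketch below models that in plain Java under simplifying assumptions: SegmentSketch and its map-based "table" are hypothetical, and only one segment is kept per tag, whereas Leaf's SegmentIDGenImpl also preloads a second buffer in the background.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.StringJoiner;

public class SegmentSketch {

    // One row of the hypothetical allocation table: current max id and step for a tag
    static final class Row {
        long maxId;
        final int step;
        Row(long maxId, int step) { this.maxId = maxId; this.step = step; }
    }

    // In-memory stand-in for the database table
    private final Map<String, Row> table = new HashMap<>();
    // Per-tag reserved range as {next, end}, where end is exclusive
    private final Map<String, long[]> segments = new HashMap<>();

    public void addTag(String tag, long initialMaxId, int step) {
        table.put(tag, new Row(initialMaxId, step));
    }

    // Mimics updateMaxIdAndGetLeafAlloc: advance max_id by step and return the row
    private Row updateMaxIdAndGet(String tag) {
        Row row = table.get(tag);
        if (row == null) {
            throw new IllegalArgumentException("Unknown tag: " + tag);
        }
        row.maxId += row.step;
        return row;
    }

    public synchronized long nextId(String tag) {
        long[] seg = segments.get(tag);
        if (seg == null || seg[0] >= seg[1]) {
            // First call or range used up: reserve the next batch from the "database"
            Row row = updateMaxIdAndGet(tag);
            seg = new long[]{row.maxId - row.step, row.maxId};
            segments.put(tag, seg);
        }
        return seg[0]++;
    }

    public static void main(String[] args) {
        SegmentSketch gen = new SegmentSketch();
        gen.addTag("order", 1, 3); // max_id = 1, step = 3
        StringJoiner out = new StringJoiner(" ");
        for (int i = 0; i < 5; i++) {
            out.add(String.valueOf(gen.nextId("order")));
        }
        System.out.println(out); // prints: 1 2 3 4 5
    }
}
```

With step 3 the "database" is touched only twice for five IDs; a larger step trades fewer round trips for bigger gaps when a node restarts with part of its range unused.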

```java
package com.wssnail.leaf.server.service;

import com.wssnail.leaf.core.IDGen;
import com.wssnail.leaf.core.common.Result;
import com.wssnail.leaf.core.common.ZeroIDGen;
import com.wssnail.leaf.core.snowflake.SnowflakeIDGenImpl;
import com.wssnail.leaf.server.exception.InitException;
import com.wssnail.leaf.server.properties.LeafProperties;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

public class SnowflakeService {

    private static final Logger logger = LoggerFactory.getLogger(SnowflakeService.class);

    private IDGen idGen;
    private LeafProperties leafProperties;

    public SnowflakeService(LeafProperties leafProperties) throws InitException {
        this.leafProperties = leafProperties;
        if (leafProperties.getSnowflakeEnable()) {
            String zkAddress = leafProperties.getSnowflakeZkAddress();
            int port = leafProperties.getSnowflakePort();
            idGen = new SnowflakeIDGenImpl(zkAddress, port);
            if (idGen.init()) {
                logger.info("Snowflake Service Init Successfully");
            } else {
                throw new InitException("Snowflake Service Init Fail");
            }
        } else {
            // Snowflake mode disabled: fall back to the placeholder generator
            idGen = new ZeroIDGen();
            logger.info("Zero ID Gen Service Init Successfully");
        }
    }

    public Result getId(String key) {
        return idGen.get(key);
    }
}
```
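Snowflake mode, by contrast, needs no database: each ID packs a timestamp, a worker ID and a per-millisecond sequence into one 64-bit long, and Leaf uses ZooKeeper to give each node a distinct worker ID. The sketch below shows the classic Twitter-style layout (41-bit timestamp offset, 10-bit worker ID, 12-bit sequence) with the worker ID passed in directly. SnowflakeSketch is hypothetical, the epoch constant is an assumption, and a backwards clock simply throws instead of being handled the way SnowflakeIDGenImpl does.

```java
public class SnowflakeSketch {

    private static final long EPOCH = 1288834974657L; // assumed custom epoch in ms
    private static final int WORKER_ID_BITS = 10;
    private static final int SEQUENCE_BITS = 12;
    private static final long MAX_WORKER_ID = (1L << WORKER_ID_BITS) - 1; // 1023
    private static final long SEQUENCE_MASK = (1L << SEQUENCE_BITS) - 1;  // 4095

    private final long workerId;
    private long lastTimestamp = -1L;
    private long sequence = 0L;

    public SnowflakeSketch(long workerId) {
        if (workerId < 0 || workerId > MAX_WORKER_ID) {
            throw new IllegalArgumentException("workerId out of range: " + workerId);
        }
        this.workerId = workerId;
    }

    public synchronized long nextId() {
        long now = System.currentTimeMillis();
        if (now < lastTimestamp) {
            throw new IllegalStateException("Clock moved backwards");
        }
        if (now == lastTimestamp) {
            sequence = (sequence + 1) & SEQUENCE_MASK;
            if (sequence == 0) {
                // 4096 IDs already issued this millisecond: spin until the next one
                while (now <= lastTimestamp) {
                    now = System.currentTimeMillis();
                }
            }
        } else {
            sequence = 0L;
        }
        lastTimestamp = now;
        // timestamp offset | worker id | sequence
        return ((now - EPOCH) << (WORKER_ID_BITS + SEQUENCE_BITS))
                | (workerId << SEQUENCE_BITS)
                | sequence;
    }

    // Extract the parts of an ID again
    public static long workerIdOf(long id) {
        return (id >>> SEQUENCE_BITS) & MAX_WORKER_ID;
    }

    public static long sequenceOf(long id) {
        return id & SEQUENCE_MASK;
    }

    public static void main(String[] args) {
        SnowflakeSketch gen = new SnowflakeSketch(5);
        long a = gen.nextId();
        long b = gen.nextId();
        System.out.println(a + " < " + b + ", worker " + workerIdOf(b));
    }
}
```

Because the timestamp occupies the high bits, IDs from one node increase over time, which is why a duplicate worker ID or a clock rollback are the two failure cases ZooKeeper registration and clock checks exist to prevent.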
