王者荣耀英雄信息爬取

分析

入口页面地址

https://pvp.qq.com/web201605/herolist.shtml

第一步获取所有英雄的列表

可以看到英雄列表是在源码中可以被找到的

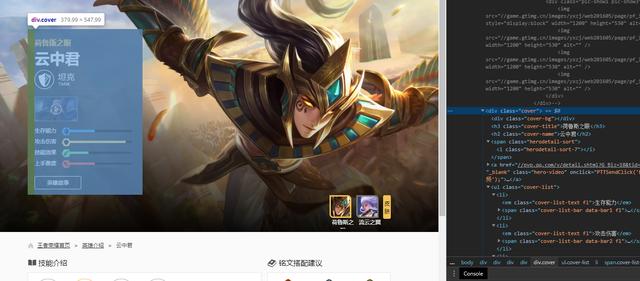

第二步 获取英雄的各种信息

英雄的基本信息放在一个class = "cover"的div中 我们主要采集英雄的名称 和 技能介绍

技能部分都在 class=" zk-con3 zk-con" 中 中的 ul中

image-20200525103312535.png

爬取英雄列表

创建工程

scrapy startproject wzrycd wzry创建爬虫

scrapy genspider wzry_spider pvp.qq.com修改配置

# 不遵循robots协议 因为站点没有这个文件 爬虫会直接略过ROBOTSTXT_OBEY = False# 添加请求头DEFAULT_REQUEST_HEADERS = { 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8', 'Accept-Language': 'en', 'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36'}# 下载延迟DOWNLOAD_DELAY = 1修改初始页面

start_urls = ['https://pvp.qq.com/web201605/herolist.shtml']创建爬取列表方法(测试)

def parse(self, response): print("=" * 50) print(response) print("=" * 50)运行爬虫

scrapy crawl wzry_spider2020-05-25 11:08:53 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:60232020-05-25 11:08:54 [scrapy.core.engine] DEBUG: Crawled (200) (referer: None)==================================================<200 https://pvp.qq.com/web201605/herolist.shtml>==================================================2020-05-25 11:08:54 [scrapy.core.engine] INFO: Closing spider (finished)2020-05-25 11:08:54 [scrapy.statscollectors] INFO: Dumping Scrapy stats:{'downloader/request_bytes': 315,成功返回结果

爬取所有英雄链接方法

class WzrySpiderSpider(scrapy.Spider): name = 'wzry_spider' allowed_domains = ['pvp.qq.com'] start_urls = ['https://pvp.qq.com/web201605/herolist.shtml'] # 起始url base_url = "https://pvp.qq.com/web201605/" # url 前缀 def parse(self, response): print("=" * 50) # print(response.body) hero_list = response.xpath("//ul[@class='herolist clearfix']//li") for hero in hero_list: url = self.base_url + hero.xpath("./a/@href").get() print(url) # yield scrapy.Request(url) print("=" * 50)https://pvp.qq.com/web201605/herodetail/114.shtmlhttps://pvp.qq.com/web201605/herodetail/113.shtmlhttps://pvp.qq.com/web201605/herodetail/112.shtmlhttps://pvp.qq.com/web201605/herodetail/111.shtmlhttps://pvp.qq.com/web201605/herodetail/110.shtmlhttps://pvp.qq.com/web201605/herodetail/109.shtmlhttps://pvp.qq.com/web201605/herodetail/108.shtmlhttps://pvp.qq.com/web201605/herodetail/107.shtmlhttps://pvp.qq.com/web201605/herodetail/106.shtmlhttps://pvp.qq.com/web201605/herodetail/105.shtml==================================================爬取英雄详情页面

- 获得基本信息

# 基本信息块hero_info = response.xpath("//div[@class='cover']")hero_name = hero_info.xpath(".//h2[@class='cover-name']/text()").get()print(hero_name)# 分类sort_num = hero_info.xpath(".//span[@class='herodetail-sort']/i/@class").get()[-1:]print(sort_num)# 生存能力viability = hero_info.xpath(".//ul/li[1]/span/i/@style").get()[6:]print("生存能力:" + viability)# 伤害aggressivity = hero_info.xpath(".//ul/li[2]/span/i/@style").get()[6:]print("攻击能力:" + aggressivity)effect = hero_info.xpath(".//ul/li[3]/span/i/@style").get()[6:]print("技能影响" + effect)difficulty = hero_info.xpath(".//ul/li[4]/span/i/@style").get()[6:]print("上手难度:" + difficulty)- 获取技能信息

skill_list = response.xpath("//div[@class='skill-show']/div[@class='show-list']") for skill in skill_list: skill_name = skill.xpath("./p[@class='skill-name']/b/text()").get() if not skill_name: continue # 冷却时间 cooling = skill.xpath("./p[@class='skill-name']/span[1]/text()").get().split(":")[1].strip().split('/') # 消耗 consume = skill.xpath("./p[@class='skill-name']/span[1]/text()").get().split(":")[1].strip().split('/') # "".strip() # 如果这个技能是空的就 continue # 技能介绍 skill_desc = skill.xpath("./p[@class='skill-desc']/text()").get() new_skill = { "name": skill_name, "cooling": cooling, "consume": consume, "desc": skill_desc } new_hero = HeroInfo(name=hero_name, sort_num=sort_num, viability=viability, aggressivity=aggressivity, effect=effect, difficulty=difficulty, skills_list=new_skill) yield new_hero - 英雄信息item类

class HeroInfo(scrapy.Item): # 存字符串 name = scrapy.Field() sort_num = scrapy.Field() viability = scrapy.Field() aggressivity = scrapy.Field() effect = scrapy.Field() difficulty = scrapy.Field() # 存字典 skills_list = scrapy.Field()- 储存 pipelines.py文件

需要修改 ITEM_PIPELINES 配置 加入这个处理类

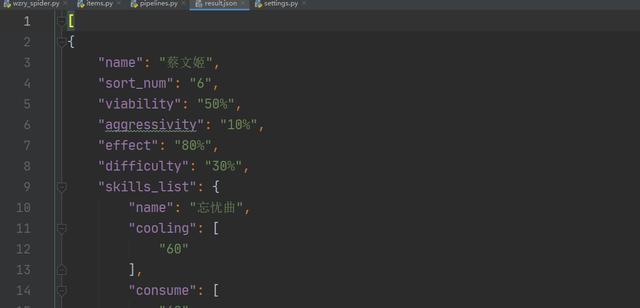

# -*- coding: utf-8 -*-from scrapy.exporters import JsonItemExporterclass WzryPipeline: def __init__(self): # 打开文件并实例化JsonItemExporter self.fp = open('result.json', 'wb') self.save_json = JsonItemExporter(self.fp, encoding="utf-8", ensure_ascii=False, indent=4) # 开始写入 self.save_json.start_exporting() def open_spider(self, spider): pass def close_spider(self, spider): # 结束写入 self.save_json.finish_exporting() # 关闭文件 self.fp.close() def process_item(self, item, spider): # 写入item self.save_json.export_item(item) return item运行爬虫

scrapy crawl wzry_spider结果

image-20200525150137203.png

全部代码

wzry_spider.py

# -*- coding: utf-8 -*-import scrapyfrom wzry.items import HeroInfoclass WzrySpiderSpider(scrapy.Spider): name = 'wzry_spider' allowed_domains = ['pvp.qq.com'] start_urls = ['https://pvp.qq.com/web201605/herolist.shtml'] # 起始url base_url = "https://pvp.qq.com/web201605/" # url 前缀 def parse(self, response): # print(response.body) hero_list = response.xpath("//ul[@class='herolist clearfix']//li") for hero in hero_list: url = self.base_url + hero.xpath("./a/@href").get() yield scrapy.Request(url, callback=self.get_hero_info) def get_hero_info(self, response): # 基本信息块 global new_skill hero_info = response.xpath("//div[@class='cover']") hero_name = hero_info.xpath(".//h2[@class='cover-name']/text()").get() # 分类 sort_num = hero_info.xpath(".//span[@class='herodetail-sort']/i/@class").get()[-1:] # 生存能力 viability = hero_info.xpath(".//ul/li[1]/span/i/@style").get()[6:] # 伤害 aggressivity = hero_info.xpath(".//ul/li[2]/span/i/@style").get()[6:] effect = hero_info.xpath(".//ul/li[3]/span/i/@style").get()[6:] difficulty = hero_info.xpath(".//ul/li[4]/span/i/@style").get()[6:] # 技能列表 skill_list = response.xpath("//div[@class='skill-show']/div[@class='show-list']") for skill in skill_list: skill_name = skill.xpath("./p[@class='skill-name']/b/text()").get() if not skill_name: continue # 冷却时间 cooling = skill.xpath("./p[@class='skill-name']/span[1]/text()").get().split(":" "")[1].strip().split('/') # 消耗 consume = skill.xpath("./p[@class='skill-name']/span[1]/text()").get().split(":" "")[1].strip().split('/') # "".strip() # 如果这个技能是空的就 continue # 技能介绍 skill_desc = skill.xpath("./p[@class='skill-desc']/text()").get() new_skill = { "name": skill_name, "cooling": cooling, "consume": consume, "desc": skill_desc } new_hero = HeroInfo(name=hero_name, sort_num=sort_num, viability=viability, aggressivity=aggressivity, effect=effect, difficulty=difficulty, skills_list=new_skill) yield new_heroitems.py

# -*- coding: utf-8 -*-# Define here the models for your scraped items## See documentation in:# https://docs.scrapy.org/en/latest/topics/items.htmlimport scrapyclass WzryItem(scrapy.Item): # define the fields for your item here like: # name = scrapy.Field() passclass HeroInfo(scrapy.Item): # 存字符串 name = scrapy.Field() sort_num = scrapy.Field() viability = scrapy.Field() aggressivity = scrapy.Field() effect = scrapy.Field() difficulty = scrapy.Field() # 存字典 skills_list = scrapy.Field()pipelines.py

# -*- coding: utf-8 -*-# Define your item pipelines here## Don't forget to add your pipeline to the ITEM_PIPELINES setting# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.htmlfrom scrapy.exporters import JsonItemExporterclass WzryPipeline: def __init__(self): self.fp = open('result.json', 'wb') self.save_json = JsonItemExporter(self.fp, encoding="utf-8", ensure_ascii=False, indent=4) self.save_json.start_exporting() def open_spider(self, spider): pass def close_spider(self, spider): self.save_json.finish_exporting() self.fp.close() def process_item(self, item, spider): self.save_json.export_item(item) return item

784

784

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?