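Hue Installation and Deployment (CentOS 7.2)

2017-09-13 11:17:03 小强签名设计

Category: Big Data Ecosystem

Copyright notice: This is an original article by the author, released under the CC 4.0 BY-SA license. When reposting, please include a link to the original source and this notice.

Original link: https://blog.csdn.net/m0_37739193/article/details/77963240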

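I. Introduction to Hue

http://blog.csdn.net/liangyihuai/article/details/54137163

II. Installing and Deploying Hue

1. As root, install the required system packages with yum (Internet access is needed)

yum -y install ant asciidoc cyrus-sasl-devel cyrus-sasl-gssapi gcc gcc-c++ krb5-devel libtidy libxml2-devel libxslt-devel openldap-devel python-devel sqlite-devel openssl-devel mysql-devel gmp-devel

(1) During installation, yum found no libtidy package. According to what I found online, libtidy is only needed for unit tests, so the missing package does not affect the installation.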

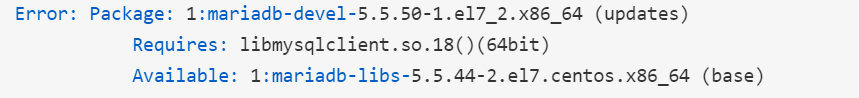

(2) If installing mysql-devel fails with an error, see https://blog.csdn.net/weixin_39689084/article/details/102627542 for a solution.

[hadoop@h153 ~]$ tar -zxvf hue-3.9.0-cdh5.5.2.tar.gz

[hadoop@h153 ~]$ cd hue-3.9.0-cdh5.5.2

2. Build from source

[hadoop@h153 hue-3.9.0-cdh5.5.2]$ make apps

3. Edit the hue.ini configuration file

[hadoop@h153 hue-3.9.0-cdh5.5.2]$ vi desktop/conf/hue.ini

[desktop]

# Secret key used to encrypt stored session data

secret_key=dfsahjfhflsajdhfljahl

# Time zone name

time_zone=Asia/Shanghai

# Enable or disable debug mode.

django_debug_mode=false

# Enable or disable backtrace for server error

http_500_debug_mode=false

# This should be the hadoop cluster admin

## default_hdfs_superuser=hdfs

default_hdfs_superuser=root

# Apps to disable

#app_blacklist=impala,security,rdbms,jobsub,pig,hbase,sqoop,zookeeper,metastore,indexer

[[database]]

# Database engine type

engine=mysql

# Database host

host=hadoop101

# Database port

port=3306

# Database user name

user=hue

# Database password

password=123456

# Database name

name=hue

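Note: secret_key is used to encrypt your cookies, which makes Hue more secure. Find the secret_key entry and set it to an arbitrary string (the official recommendation is 30 to 60 characters).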
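Rather than typing a string by hand, the key can be generated. The following is a minimal sketch, not part of Hue itself: the function name generate_secret_key is hypothetical, and limiting the key to letters and digits is my own assumption, made to avoid characters that might need escaping in hue.ini.

```python
import secrets
import string


def generate_secret_key(length=50):
    """Return a random alphanumeric string suitable as a secret_key value.

    The 30-60 range mirrors the recommendation quoted above; the
    alphanumeric alphabet is an assumption, not a Hue requirement.
    """
    if not 30 <= length <= 60:
        raise ValueError("length should be between 30 and 60 characters")
    alphabet = string.ascii_letters + string.digits
    # secrets.choice draws from the OS's cryptographically secure RNG.
    return "".join(secrets.choice(alphabet) for _ in range(length))
```

Calling print("secret_key=" + generate_secret_key()) produces a line that can be pasted into the [desktop] section.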

4. Start Hue

[hadoop@h153 hue-3.9.0-cdh5.5.2]$ build/env/bin/supervisor

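Fixing KeyError: "Couldn't get user id for user hue" after installing Hue

This error occurs when Hue was installed as root and build/env/bin/supervisor is then started as root.

With the cause known, the fix is simple. First, create a regular user and set a password for it. If the password is too simple, the system prints a warning, which can be ignored.

Next, change the owner of the extracted Hue directory with chown -R <user> <path>.

Finally, switch to that user with su, change to the Hue directory, and run the command that starts Hue.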

[root@hadoop101 hue-3.9.0]# su hue

[hue@hadoop101 hue-3.9.0]$ build/env/bin/supervisor

[INFO] Not running as root, skipping privilege drop

starting server with options:

{'daemonize': False,

'host': 'h153',

'pidfile': None,

'port': 8888,

'server_group': 'hue',

'server_name': 'localhost',

'server_user': 'hue',

'ssl_certificate': None,

'ssl_cipher_list': 'ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-DSS-AES128-GCM-SHA256:kEDH+AESGCM:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA:ECDHE-ECDSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-DSS-AES128-SHA256:DHE-RSA-AES256-SHA256:DHE-DSS-AES256-SHA:DHE-RSA-AES256-SHA:AES128-GCM-SHA256:AES256-GCM-SHA384:AES128-SHA256:AES256-SHA256:AES128-SHA:AES256-SHA:AES:CAMELLIA:DES-CBC3-SHA:!aNULL:!eNULL:!EXPORT:!DES:!RC4:!MD5:!PSK:!aECDH:!EDH-DSS-DES-CBC3-SHA:!EDH-RSA-DES-CBC3-SHA:!KRB5-DES-CBC3-SHA',

'ssl_private_key': None,

'threads': 40,

'workdir': None}
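The KeyError message above suggests that a lookup of the hue system account failed. Before starting the supervisor, that can be checked with a small sketch like the one below. It assumes the lookup behaves like Python's pwd.getpwnam, which raises KeyError for an unknown account; the helper name user_exists is hypothetical.

```python
import pwd


def user_exists(name):
    """Return True if a local account with this name can be looked up."""
    try:
        pwd.getpwnam(name)  # raises KeyError when the account is unknown
        return True
    except KeyError:
        return False
```

For example, user_exists("hue") returning False would indicate that the hue user still needs to be created.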

5. Open Hue in a browser (http://192.168.205.153:8888)

Switching the UI to Chinese

(1) Edit the settings file

vi /opt/soft/hadoop/hue-3.10.0/desktop/core/src/desktop/settings.py

LANGUAGE_CODE = 'zh_CN'

#LANGUAGE_CODE = 'en-us'

LANGUAGES = [

('en-us', _('English')),

('zh_CN', _('Simplified Chinese')),

]

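(2) Rebuild

make apps

(3) Start Hue

build/env/bin/supervisor

On first login, whatever user name and password you enter become the superuser account, so remember them. Using the same user name as your Hadoop user is recommended.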

III. Integrating Hue with Hadoop 2.x

1. Add the following to hdfs-site.xml

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ vi etc/hadoop/hdfs-site.xml

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

2. Add the following to core-site.xml

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ vi etc/hadoop/core-site.xml

<property>

<name>hadoop.proxyuser.hue.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hue.groups</name>

<value>*</value>

</property>

3. Edit Hue's hue.ini

###########################################################################

# Settings to configure your Hadoop cluster.

###########################################################################

[hadoop]

# Configuration for HDFS NameNode

# ------------------------------------------------------------------------

[[hdfs_clusters]]

# HA support by using HttpFs

[[[default]]]

# Enter the filesystem uri

fs_defaultfs=hdfs://h153:8020

# NameNode logical name.

## logical_name=

# Use WebHdfs/HttpFs as the communication mechanism.

# Domain should be the NameNode or HttpFs host.

# Default port is 14000 for HttpFs.

webhdfs_url=http://h153:50070/webhdfs/v1

# Change this if your HDFS cluster is Kerberos-secured

## security_enabled=false

# In secure mode (HTTPS), if SSL certificates from YARN Rest APIs

# have to be verified against certificate authority

## ssl_cert_ca_verify=True

# Directory of the Hadoop configuration

## hadoop_conf_dir=$HADOOP_CONF_DIR when set or '/etc/hadoop/conf'

hadoop_hdfs_home=/home/hadoop/hadoop-2.6.0-cdh5.5.2

hadoop_bin=/home/hadoop/hadoop-2.6.0-cdh5.5.2/bin

hadoop_conf_dir=/home/hadoop/hadoop-2.6.0-cdh5.5.2/etc/hadoop

# Configuration for YARN (MR2)

# ------------------------------------------------------------------------

[[yarn_clusters]]

[[[default]]]

# Enter the host on which you are running the ResourceManager

resourcemanager_host=h153

# The port where the ResourceManager IPC listens on

## resourcemanager_port=8032

# Whether to submit jobs to this cluster

submit_to=True

# Resource Manager logical name (required for HA)

## logical_name=

# Change this if your YARN cluster is Kerberos-secured

## security_enabled=false

# URL of the ResourceManager API

resourcemanager_api_url=http://h153:8088

# URL of the ProxyServer API

proxy_api_url=http://h153:8088

# URL of the HistoryServer API

history_server_api_url=http://h153:19888

# In secure mode (HTTPS), if SSL certificates from YARN Rest APIs

# have to be verified against certificate authority

## ssl_cert_ca_verify=True

# HA support by specifying multiple clusters

# e.g.

# [[[ha]]]

# Resource Manager logical name (required for HA)

## logical_name=my-rm-name

4. Restart Hadoop (HDFS and YARN) and start the JobHistory server

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ sbin/stop-all.sh

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ sbin/start-all.sh

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

5. Restart the Hue server

[hadoop@h153 hue-3.9.0-cdh5.5.2]$ build/env/bin/supervisor

6. Check the results

[hadoop@h153 ~]$ hadoop fs -ls /

drwxr-xr-x - hadoop supergroup 0 2017-09-11 18:50 /hbase

drwxr-xr-x - hadoop supergroup 0 2017-09-11 19:07 /input

drwxr-xr-x - hadoop supergroup 0 2017-09-11 19:08 /output

drwx------ - hadoop supergroup 0 2017-09-11 20:14 /tmp

drwxr-xr-x - hadoop supergroup 0 2017-09-11 20:14 /user

[hadoop@h153 ~]$ hadoop fs -cat /input/he.txt

hello world

hello hadoop

hello hive
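Hue reaches HDFS through the WebHDFS REST endpoint configured in webhdfs_url, so the same listing can also be requested over HTTP. Here is a minimal sketch that only builds the request URL. The helper name webhdfs_url is hypothetical, and the host, port, and user are the values used in this post, so adjust them for your cluster.

```python
from urllib.parse import quote, urlencode


def webhdfs_url(base, path, op, user):
    """Build a WebHDFS REST URL such as .../webhdfs/v1/input?op=LISTSTATUS."""
    # user.name selects the HDFS user when the cluster is not Kerberos-secured.
    query = urlencode({"op": op, "user.name": user})
    return f"{base.rstrip('/')}/{quote(path.lstrip('/'))}?{query}"


# Values taken from the hue.ini shown earlier in this post.
url = webhdfs_url("http://h153:50070/webhdfs/v1", "/input", "LISTSTATUS", "hadoop")
print(url)
```

Opening the printed URL with curl or a browser should return a JSON listing of /input when dfs.webhdfs.enabled is true.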

IV. Integrating Hue with Hive

1. Edit hue.ini (find the [beeswax] section; it is called [beeswax] rather than [hive] for historical reasons)

[beeswax]

# Host where HiveServer2 is running.

# If Kerberos security is enabled, use fully-qualified domain name (FQDN).

hive_server_host=h153

# Port where HiveServer2 Thrift server runs on.

hive_server_port=10000

# Hive configuration directory, where hive-site.xml is located

hive_conf_dir=/home/hadoop/hive-1.1.0-cdh5.5.2/conf

# Timeout in seconds for thrift calls to Hive service

server_conn_timeout=120

# Choose whether to use the old GetLog() thrift call from before Hive 0.14 to retrieve the logs.

# If false, use the FetchResults() thrift call from Hive 1.0 or more instead.

## use_get_log_api=false

# Set a LIMIT clause when browsing a partitioned table.

# A positive value will be set as the LIMIT. If 0 or negative, do not set any limit.

## browse_partitioned_table_limit=250

# The maximum number of partitions that will be included in the SELECT * LIMIT sample query for partitioned tables.

## sample_table_max_partitions=10

# A limit to the number of rows that can be downloaded from a query.

# A value of -1 means there will be no limit.

# A maximum of 65,000 is applied to XLS downloads.

## download_row_limit=1000000

# Hue will try to close the Hive query when the user leaves the editor page.

# This will free all the query resources in HiveServer2, but also make its results inaccessible.

## close_queries=false

# Thrift version to use when communicating with HiveServer2.

# New column format is from version 7.

## thrift_version=7

2. Configure the metastore server in Hive's hive-site.xml

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ vi conf/hive-site.xml

<configuration>

<property>

<name>hive.metastore.uris</name>

<value>thrift://h153:9083</value>

</property>

</configuration>

3. Change the permissions of /tmp in HDFS

[hadoop@h153 hadoop-2.6.0-cdh5.5.2]$ hdfs dfs -chmod -R 777 /tmp

4. Create a table and load data

[hadoop@h153 hui]$ vi student.txt

101 zhangsan

102 lisi

103 laowu

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ ./bin/hive

hive> create table student (id int, name string) row format delimited fields terminated by ' ' ;

hive> load data local inpath '/home/hadoop/hui/student.txt' into table student ;

hive> select * from student ;

OK

101 zhangsan

102 lisi

103 laowu

Time taken: 1.627 seconds, Fetched: 3 row(s)

hive> exit;

5. Start the metastore server (start it first), then HiveServer2

Run in the foreground

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ bin/hive --service metastore

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ bin/hive --service hiveserver2

Run in the background, discarding log output

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ nohup bin/hive --service metastore 1>/dev/null 2>&1 &

[hadoop@h153 hive-1.1.0-cdh5.5.2]$ nohup bin/hive --service hiveserver2 1>/dev/null 2>&1 &
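Because the background commands discard their output, it is not obvious whether both services actually came up. Here is a rough sketch of a TCP reachability check. The helper names and the SERVICES mapping are hypothetical, and the ports are the ones used in this post (9083 for the metastore, 10000 for HiveServer2).

```python
import socket

# Host and ports as configured in this post; adjust for your environment.
SERVICES = {
    "metastore": ("h153", 9083),
    "hiveserver2": ("h153", 10000),
}


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, or host name not resolvable
        return False


def check_services(services):
    """Map each service name to whether its port accepts connections."""
    return {name: port_open(host, port) for name, (host, port) in services.items()}
```

Running print(check_services(SERVICES)) a few seconds after starting the services shows which of them are listening. An open port only means the process is accepting connections, not that Hive queries will succeed.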

6. Verify that the configuration works

An error appeared when connecting to Hive.

Fix: yum -y install cyrus-sasl-plain cyrus-sasl-devel cyrus-sasl-gssapi

V. Integrating Hue with ZooKeeper

1. Edit the ZooKeeper settings in hue.ini

[zookeeper]

[[clusters]]

[[[default]]]

# Zookeeper ensemble. Comma separated list of Host/Port.

# e.g. localhost:2181,localhost:2182,localhost:2183

host_ports=h153:2181,h154:2181,h155:2181
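A typo in host_ports is easy to miss, so it can help to split the value into hosts and ports before restarting Hue. This is a small sketch and not Hue's own parser. The function name parse_host_ports is hypothetical, and falling back to 2181 when no port is given is my own assumption.

```python
def parse_host_ports(value, default_port=2181):
    """Split 'h1:2181,h2:2181' into [('h1', 2181), ('h2', 2181)]."""
    servers = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue  # tolerate trailing commas
        host, sep, port = item.partition(":")
        # int() raises ValueError on a malformed port, which surfaces typos early.
        servers.append((host, int(port) if sep else default_port))
    return servers


print(parse_host_ports("h153:2181,h154:2181,h155:2181"))
```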

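2. Restart the Hue server

[hadoop@h153 hue-3.9.0-cdh5.5.2]$ build/env/bin/supervisor

3. Check the result (the connection is somewhat unstable, so retry a few times if it fails)

VI. Integrating Hue with HBase

1. Proxy user authorization

For background on what ProxyUser does in Hadoop, see: https://my.oschina.net/OttoWu/blog/806814

Add the following to Hadoop's core-site.xml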

<property>

<name>hadoop.proxyuser.hue.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hue.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hbase.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hbase.groups</name>

<value>*</value>

</property>
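With several proxyuser entries in core-site.xml, it is easy to add hosts for a user and forget groups. The sketch below lists the users that have both entries. The function name configured_proxy_users and the SAMPLE string are hypothetical; in practice you would read the text of your own core-site.xml.

```python
import xml.etree.ElementTree as ET

# Shortened sample; a real core-site.xml contains many more properties.
SAMPLE = """<configuration>
  <property><name>hadoop.proxyuser.hue.hosts</name><value>*</value></property>
  <property><name>hadoop.proxyuser.hue.groups</name><value>*</value></property>
  <property><name>hadoop.proxyuser.hbase.hosts</name><value>*</value></property>
</configuration>"""


def configured_proxy_users(core_site_xml):
    """Return the users that have both a .hosts and a .groups entry."""
    seen = {}
    for prop in ET.fromstring(core_site_xml).iter("property"):
        parts = (prop.findtext("name") or "").strip().split(".")
        if (len(parts) == 4 and parts[:2] == ["hadoop", "proxyuser"]
                and parts[3] in ("hosts", "groups")):
            seen.setdefault(parts[2], set()).add(parts[3])
    return sorted(u for u, keys in seen.items() if keys == {"hosts", "groups"})


print(configured_proxy_users(SAMPLE))
```

In the sample, hbase is left out of the result because its groups entry is missing.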

2. Edit the HBase settings in hue.ini

[hbase]

# Comma-separated list of HBase Thrift servers for clusters in the format of '(name|host:port)'.

# Use full hostname with security.

# If using Kerberos we assume GSSAPI SASL, not PLAIN.

hbase_clusters=(Cluster|h153:9090)

hbase_conf_dir=/usr/local/app/hbase-1.2.0/conf
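The '(name|host:port)' format of hbase_clusters is unusual, so here is a rough sketch that splits such a value into its parts. It is not Hue's own parser, and both the regular expression and the function name parse_hbase_clusters are my own assumptions based on the comment in hue.ini.

```python
import re

# Matches one "(name|host:port)" entry; entries are separated by commas.
CLUSTER_RE = re.compile(r"\(\s*([^|()]+?)\s*\|\s*([^:()]+?)\s*:\s*(\d+)\s*\)")


def parse_hbase_clusters(value):
    """Turn '(Cluster|h153:9090)' into [('Cluster', 'h153', 9090)]."""
    return [(name, host, int(port)) for name, host, port in CLUSTER_RE.findall(value)]


print(parse_hbase_clusters("(Cluster|h153:9090)"))
```

An empty result for a non-empty value is a hint that the parentheses, the | separator, or the port is malformed.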

3. Start HBase

[hadoop@h153 hbase-1.0.0-cdh5.5.2]$ bin/start-hbase.sh

4. Hue communicates with HBase through hbase-thrift, so start the Thrift server

[hadoop@h153 hbase-1.0.0-cdh5.5.2]$ bin/hbase-daemon.sh start thrift

5. Create a table

[hadoop@h153 hbase-1.0.0-cdh5.5.2]$ bin/hbase shell

hbase(main):002:0> create 'hehe','cf'

6. Check the results

References:

http://blog.csdn.net/youfashion/article/details/51002563

http://blog.csdn.net/eason_oracle/article/details/52153061

http://www.cnblogs.com/zlslch/p/6820387.html
