1. What it is

When Docker starts, it creates a virtual bridge named docker0 on the host.

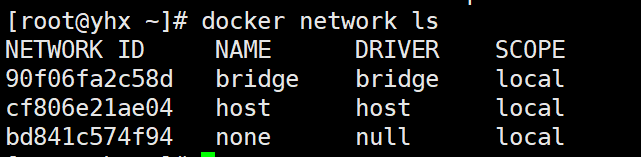

Docker creates three networks by default: bridge, host, and none.
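To check the default networks on your own host, a minimal sketch along these lines may help. It assumes the docker CLI is installed and the daemon is running; the function name list_networks is made up for illustration.

```shell
# Print every Docker network as "name: driver".
# list_networks is a hypothetical helper name, not part of Docker.
list_networks() {
  docker network ls --format '{{.Name}}: {{.Driver}}'
}
```

On a fresh installation, calling list_networks should show bridge, host, and none (the none network uses the null driver), plus any networks you created yourself.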

2. Common commands

[root@yhx ~]# docker network --help

Usage: docker network COMMAND

Manage networks

Commands:

connect Connect a container to a network

create Create a network

disconnect Disconnect a container from a network

inspect Display detailed information on one or more networks

ls List networks

prune Remove all unused networks

rm Remove one or more networks

Run 'docker network COMMAND --help' for more information on a command.

[root@yhx ~]#
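The subcommands above can be strung together roughly as follows. This is only a sketch: demo_net and demo_box are placeholder names, and the alpine image with a sleep command is an assumption chosen to keep the container alive for a few minutes.

```shell
# Walk through the docker network subcommands in order.
# demo_net and demo_box are placeholder names chosen for this sketch.
network_lifecycle() {
  local net="demo_net" box="demo_box"
  docker network create "$net"                  # create a user-defined bridge network
  docker run -d --name "$box" alpine sleep 300  # container starts on the default bridge
  docker network connect "$net" "$box"          # attach the running container to demo_net
  docker network inspect "$net"                 # subnet, gateway, attached containers
  docker network disconnect "$net" "$box"       # detach it again
  docker rm -f "$box"                           # remove the container
  docker network rm "$net"                      # remove the now-unused network
}
```

Run network_lifecycle on a test host only, since it creates and removes real resources.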

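3. What it is for

- Interconnection and communication between containers, plus port mapping

- When a container's IP changes, other containers can still reach it by service name, so communication is not affected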

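4. Docker network modes

4.1 bridge: the most commonly used

Docker assigns and configures an IP for each container and attaches the container to the docker0 virtual bridge. This is the default mode.

By default the Docker service creates a docker0 bridge, which has an internal interface also named docker0 (the corresponding Docker network is listed under the name bridge). At the kernel level it connects the other physical or virtual network interfaces, which places all containers and the local host on the same network. Docker assigns the docker0 interface an IP address and subnet mask by default, so the host and the containers can communicate with each other through the bridge.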

Notes:

Check how the host-side veth interfaces pair with each container's eth0.

- Start two ubuntu containers, u1 and u3:

[root@yhx ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

8ac172115e59 ubuntu "bash" 18 minutes ago Up 18 minutes u3

07cb57ae46e0 ubuntu "bash" 24 minutes ago Up 24 minutes u1

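- Run ip addr on the host: there are two veth interfaces, with indexes 69 and 73. Interface 69 is paired with 68 (@if68) and 73 is paired with 72 (@if72).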

[root@yhx ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:16:3e:0e:c6:3f brd ff:ff:ff:ff:ff:ff

inet 172.16.162.115/20 brd 172.16.175.255 scope global dynamic eth0

valid_lft 314731169sec preferred_lft 314731169sec

inet6 fe80::216:3eff:fe0e:c63f/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:d6:4e:f4:4a brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:d6ff:fe4e:f44a/64 scope link

valid_lft forever preferred_lft forever

69: veth4d0c3bc@if68: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether ea:28:74:3a:6f:19 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::e828:74ff:fe3a:6f19/64 scope link

valid_lft forever preferred_lft forever

73: veth408256d@if72: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether 1e:36:d4:de:95:40 brd ff:ff:ff:ff:ff:ff link-netnsid 2

inet6 fe80::1c36:d4ff:fede:9540/64 scope link

valid_lft forever preferred_lft forever

- Enter the u1 container and run ip addr: its eth0 has index 68 and is paired with 69 (@if69), which matches the pairing we expected.

root@07cb57ae46e0:/# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

68: eth0@if69: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.3/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

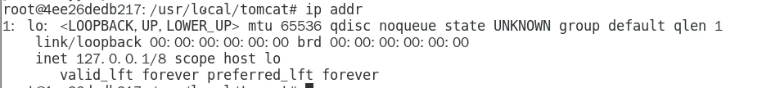
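Reading the @ifN suffixes by eye gets tedious with many containers. As a rough sketch, the pairing can be extracted with awk. The function name veth_pairs is made up, and the parsing assumes the `index: name@ifN:` line layout shown in the ip addr output above.

```shell
# Read `ip addr` (or `ip link`) output on stdin and print, for every
# interface whose name carries an @ifN suffix, its own index and its peer's index.
# veth_pairs is a hypothetical helper name.
veth_pairs() {
  awk '$2 ~ /@if[0-9]+:$/ {
    idx = $1;  sub(/:$/, "", idx)      # strip the trailing colon from the index
    name = $2; sub(/:$/, "", name)     # strip the trailing colon from the name
    split(name, parts, "@if")          # parts[1] = interface name, parts[2] = peer index
    printf "%s (index %s) <-> peer index %s\n", parts[1], idx, parts[2]
  }'
}
```

Used as `ip addr | veth_pairs` on the host, the two veth lines shown earlier would come out as `veth4d0c3bc (index 69) <-> peer index 68` and `veth408256d (index 73) <-> peer index 72`. Inside u1 the same filter would report eth0 with index 68 and peer index 69.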

4.2 host:

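The container does not create its own virtual network interface or configure its own IP. It uses the host's IP and ports directly to communicate with the outside world, so no extra NAT is needed.

Notes

Looking at a container whose network mode is host:

Start the container tomcat83 with the network mode set to host.

Check the container's network settings: the IP address and gateway are empty.

Running ip addr inside tomcat83 is not demonstrated here; the output should be the same as on the host.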
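The host-mode steps could look roughly like this. The container name tomcat83 comes from the text; the plain tomcat image and the function name host_mode_demo are assumptions for this sketch.

```shell
# Start a container in host mode and print its network details.
# host_mode_demo is a hypothetical helper name; the image tag is an assumption.
host_mode_demo() {
  docker run -d --network host --name tomcat83 tomcat
  # The network mode should read "host"
  docker inspect --format '{{.HostConfig.NetworkMode}}' tomcat83
  # IPAddress and Gateway inside the "host" entry should be empty strings
  docker inspect --format '{{json .NetworkSettings.Networks.host}}' tomcat83
}
```

Note that publishing ports with -p has no effect in host mode: Docker discards the mapping with a warning, and the service listens on the host's ports directly.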

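4.3 none: rarely used

The container has its own network namespace, but no network configuration is applied to it: no veth pair, no bridge attachment, no IP.

Networking is disabled; only the lo interface exists (127.0.0.1, the local loopback).

In none mode Docker performs no network configuration for the container, so it has no network interface, IP, or routes other than lo. Adding an interface and configuring an IP is left to us.

Start tomcat4 in none mode and check docker inspect and ip addr. The JSON excerpt after the run command comes from docker inspect tomcat4: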

[root@yhx ~]# docker run -d --network none --name=tomcat4 tomcat

"MacAddress": "",

"Networks": {

"none": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "bd841c574f94b9d3b4e351e2825b2b2327b501abd3c0501c7c1aeadb0edd2cf8",

"EndpointID": "66e81a0958cb654135b8c8e5ba559d13fcd0d789775c0ffc46a99a5a11eef88e",

"Gateway": "",

"IPAddress": "",

"IPPrefixLen": 0,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "",

"DriverOpts": null

}

}
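To confirm from the command line that only lo exists, something like the following could be used. It is a sketch under assumptions: none_mode_check is a made-up name, and it reads /proc/net/dev instead of running ip addr because the ip tool may not be installed in the image.

```shell
# Print the network mode and the interface list of a container started with --network none.
# none_mode_check is a hypothetical helper; the default name tomcat4 follows the text.
none_mode_check() {
  local name="${1:-tomcat4}"
  docker inspect --format '{{.HostConfig.NetworkMode}}' "$name"  # expected to print: none
  docker exec "$name" cat /proc/net/dev                          # expected to list only lo
}
```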

4.4 container:

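A newly created container does not create its own network interface or configure its own IP. Instead it shares the IP, port range, and so on with a specified existing container.

Apart from networking, the two containers remain isolated from each other, for example in their file systems and process lists.

Demo:

Using the alpine image:

- Start a1 normally:

[root@yhx ~]# docker run -it --name a1 alpine

- Start a2 on top of a1's network:

[root@yhx ~]# docker run -it --network container:a1 --name a2 alpine

- Check ip addr in both containers: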

[root@yhx ~]# docker exec -it a1 /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

78: eth0@if79: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

[root@yhx ~]# docker exec -it a2 /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

78: eth0@if79: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

- Stop a1 and check a2: with a1 gone, a2 is left with only lo, as in none mode

[root@yhx ~]# docker exec -it a2 /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

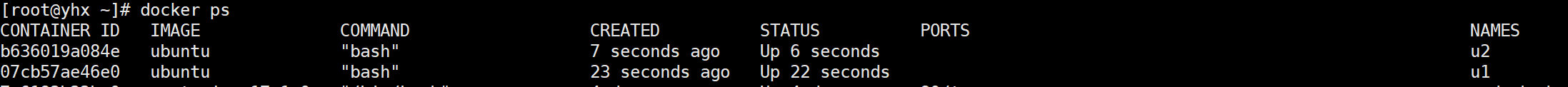

5. How container IP assignments change

5.1 Start two ubuntu containers, u1 and u2

5.2 Inspect u1's network:

docker inspect u1

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "90f06fa2c58d7da39b1ad65bd25dfd303e9310937b0b34f7b04baea3a506523a",

"EndpointID": "481cc3219089426b21e4026faac77c5f7ea091d55abc5a53b91adbdf50ee340d",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.3",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:03",

"DriverOpts": null

}

}

5.3 Inspect u2's network:

docker inspect u2

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "90f06fa2c58d7da39b1ad65bd25dfd303e9310937b0b34f7b04baea3a506523a",

"EndpointID": "4f932a898102c07bb101421875c1247c559d060690a59e544988727fd5953cce",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.4",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:04",

"DriverOpts": null

}

}

5.4 Kill u2, start u3, and inspect u3's network:

docker inspect u3

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "90f06fa2c58d7da39b1ad65bd25dfd303e9310937b0b34f7b04baea3a506523a",

"EndpointID": "1d1eb71449357164bb12fa43a5c12254582e75bf8923c9713a8682b38935faee",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.4",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:04",

"DriverOpts": null

}

}

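u3 received 172.17.0.4, the address u2 had held before. So if an IP address is hard-coded and that container suddenly dies, its IP can be taken over by another container, and the hard-coded address then leads to errors.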

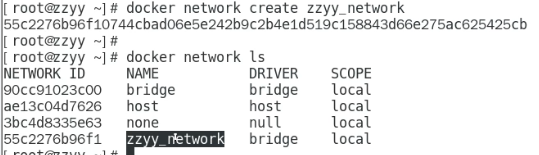
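Rather than scanning the full docker inspect JSON each time, the addresses can be pulled out with a format template. A minimal sketch, where container_ips is a made-up helper name:

```shell
# Print "name ip" for each container name passed as an argument.
# container_ips is a hypothetical helper name.
container_ips() {
  local name
  for name in "$@"; do
    printf '%s %s\n' "$name" \
      "$(docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$name")"
  done
}
```

Calling `container_ips u1 u2` before killing u2, and `container_ips u1 u3` after starting u3, makes the reuse of the address easy to spot.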

6. User-defined networks

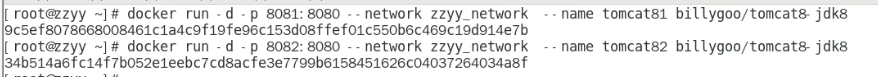

- Start two tomcat containers, 81 and 82.

- Enter each container, check its IP, and ping the other container's IP: this works without problems

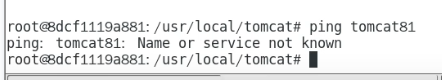

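- Ping by service name: this does not work

6.1 A user-defined bridge network: zzyy_network

- Start the tomcat containers on the network we just created

- Enter a container and ping the other one by service name: the ping succeeds

6.2 Conclusion

A user-defined network maintains the mapping between host names and IPs by itself, through Docker's embedded DNS, so both the IP and the name are reachable.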
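The whole experiment can be sketched as a short function. The network name zzyy_network comes from the text; the container names tomcat81 and tomcat82, the plain tomcat image, and the use of getent in place of ping are assumptions (ping may not be installed in the image, and getent is assumed to be present).

```shell
# Create a user-defined bridge network, start two containers on it,
# and resolve one container's name from inside the other.
# custom_network_demo and the container names are placeholders for this sketch.
custom_network_demo() {
  docker network create zzyy_network
  docker run -d --network zzyy_network --name tomcat81 tomcat
  docker run -d --network zzyy_network --name tomcat82 tomcat
  # Name lookup goes through Docker's embedded DNS on user-defined networks
  docker exec tomcat81 getent hosts tomcat82
}
```

On the default bridge the same lookup would be expected to fail, which matches the failed ping by service name described earlier.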
