Before using URL to crawl web pages, let's first look at what a URL is and which common methods the class offers.

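Definition of URL:

public final class URL extends Object implements Serializable

From this declaration we can see that the class is marked final, so it cannot be subclassed, and that it implements Serializable, so its instances can be serialized, that is, written to a stream.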
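To make the parts of a URL concrete, here is a minimal sketch of how the class separates the query string from the anchor (fragment). The class name UrlPartsSketch, the helper queryAndRef, and the example.com addresses are made up for illustration. The point it shows is that the order of `?` and `#` matters: whatever follows the first `#` is treated as the fragment.

```java
import java.net.MalformedURLException;
import java.net.URL;

public class UrlPartsSketch {
    // Hypothetical helper: returns "query|fragment" for the given URL string.
    // A missing part shows up as the text "null" after string concatenation.
    static String queryAndRef(String spec) throws MalformedURLException {
        URL url = new URL(spec);
        return url.getQuery() + "|" + url.getRef();
    }

    public static void main(String[] args) throws MalformedURLException {
        // Query before fragment: both parts are recognised.
        System.out.println(queryAndRef("https://www.example.com/index.html?user=demo#top"));
        // Fragment before '?': the "?user=demo" text becomes part of the fragment.
        System.out.println(queryAndRef("https://www.example.com/index.html#top?user=demo"));
    }
}
```

The first call prints `user=demo|top` and the second prints `null|top?user=demo`, so parameters placed after the `#` are not visible through getQuery().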

Let's walk through the commonly used methods with the following example.

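    import java.io.BufferedReader;
    import java.io.BufferedWriter;
    import java.io.FileOutputStream;
    import java.io.IOException;
    import java.io.InputStreamReader;
    import java.io.OutputStreamWriter;
    import java.net.URL;

    public class TestURL {
        public static void main(String[] args) throws IOException {
            // https: HTTP over TLS, default port 443; www.baidu.com: the host (domain name).
            // Everything after '#' is the anchor (fragment). Parameters are normally passed after '?',
            // but here the '?' comes after the '#', so "?username=..." is parsed as part of the
            // fragment rather than as a query string. That is why getFile() prints only /index.html.
            URL url = new URL("https://www.baidu.com/index.html#aa?username=bjsxt&pwd=123456");
            System.out.println("Protocol: " + url.getProtocol());
            System.out.println("Host: " + url.getHost());
            System.out.println("Port: " + url.getPort()); // -1 when the URL does not specify a port
            System.out.println("File: " + url.getFile());
            System.out.println("Default port: " + url.getDefaultPort());
            System.out.println("Path: " + url.getPath());

            /*
             * 1. Fetch a resource from the network: www.baidu.com
             * 2. Save it to a local file
             */
            URL url1 = new URL("https://www.baidu.com");
            // Wrap the byte stream in buffered character streams; try-with-resources closes both.
            try (BufferedReader br = new BufferedReader(
                         new InputStreamReader(url1.openStream(), "UTF-8"));
                 BufferedWriter bw = new BufferedWriter(
                         new OutputStreamWriter(new FileOutputStream("index.html"), "UTF-8"))) {
                // Read and write line by line
                String line;
                while ((line = br.readLine()) != null) {
                    System.out.println(line);
                    bw.write(line);
                    bw.newLine();
                }
            }
        }
    }

Output:

    Protocol: https
    Host: www.baidu.com
    Port: -1
    File: /index.html
    Default port: 443
    Path: /index.html

index.html: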

<!DOCTYPE html>

<!--STATUS OK-->

<html>

<head>

<meta http-equiv=content-type content=text/html;charset=utf-8>

<meta http-equiv=X-UA-Compatible content=IE=Edge>

<meta content=always name=referrer>

<link rel=stylesheet type=text/css

href=https://ss1.bdstatic.com/5eN1bjq8AAUYm2zgoY3K/r/www/cache/bdorz/baidu.min.css>

<title>百度一下,你就知道</title>

</head>

<body link=#0000cc>

<div id=wrapper>

<div id=head>

<div class=head_wrapper>

<div class=s_form>

<div class=s_form_wrapper>

<div id=lg>

<img hidefocus=true src=//www.baidu.com/img/bd_logo1.png

width=270 height=129>

</div>

<form id=form name=f action=//www.baidu.com/s class=fm>

<input type=hidden name=bdorz_come value=1> <input

type=hidden name=ie value=utf-8> <input type=hidden

name=f value=8> <input type=hidden name=rsv_bp value=1>

<input type=hidden name=rsv_idx value=1> <input

type=hidden name=tn value=baidu><span

class="bg s_ipt_wr"><input id=kw name=wd class=s_ipt

value maxlength=255 autocomplete=off autofocus=autofocus></span><span

class="bg s_btn_wr"><input type=submit id=su value=百度一下

class="bg s_btn" autofocus></span>

</form>

</div>

</div>

<div id=u1>

<a href=http://news.baidu.com name=tj_trnews class=mnav>新闻</a> <a

href=https://www.hao123.com name=tj_trhao123 class=mnav>hao123</a>

<a href=http://map.baidu.com name=tj_trmap class=mnav>地图</a> <a

href=http://v.baidu.com name=tj_trvideo class=mnav>视频</a> <a

href=http://tieba.baidu.com name=tj_trtieba class=mnav>贴吧</a>

<noscript>

<a

href=http://www.baidu.com/bdorz/login.gif?login&tpl=mn&u=http%3A%2F%2Fwww.baidu.com%2f%3fbdorz_come%3d1

name=tj_login class=lb>登录</a>

</noscript>

<script>document.write('<a href="http://www.baidu.com/bdorz/login.gif?login&tpl=mn&u=' + encodeURIComponent(window.location.href + (window.location.search === "" ? "?" : "&") + "bdorz_come=1") + '" name="tj_login" class="lb">登录</a>');

</script>

<a href=//www.baidu.com/more / name=tj_briicon class=bri

style="display: block;">更多产品</a>

</div>

</div>

</div>

<div id=ftCon>

<div id=ftConw>

<p id=lh>

<a href=http://home.baidu.com>关于百度</a> <a href=http://ir.baidu.com>About

Baidu</a>

</p>

<p id=cp>

©2017 Baidu <a href=http://www.baidu.com/duty />使用百度前必读</a>

<a href=http://jianyi.baidu.com / class=cp-feedback>意见反馈</a> 京ICP证030173号

<img src=//www.baidu.com/img/gs.gif>

</p>

</div>

</div>

</div>

</body>

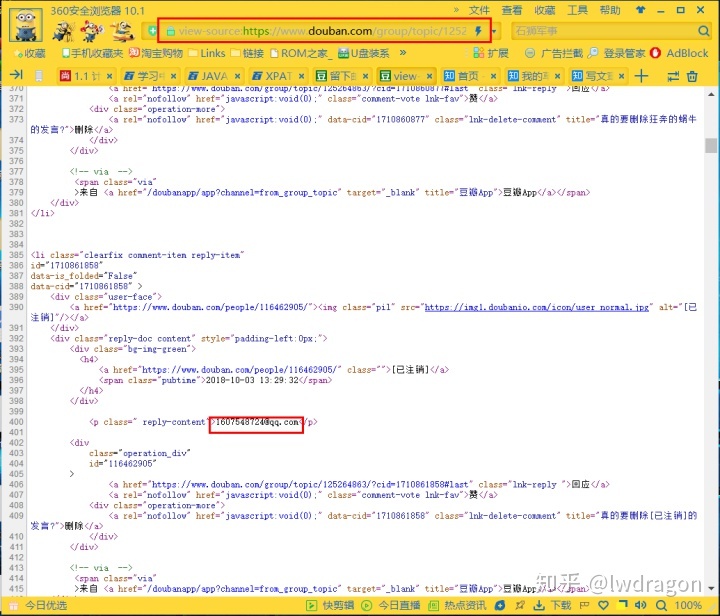

</html>通过上面的案例可以发现我们可以通过url的openstream方法可以获取一个网站的源码文件。所以我么设想是不是可以利用在一个网站中获取邮箱呢。
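Before searching a page for anything, it can help to have its whole source as one String. The following is a minimal sketch of that step, not a production crawler: the class name PageSource and the method read are hypothetical, UTF-8 is assumed as the page encoding, and a local temporary file stands in for a real web page so the example runs without network access.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

public class PageSource {
    // Hypothetical helper: reads everything behind the URL as UTF-8 text,
    // ending every line with '\n'.
    static String read(URL url) throws IOException {
        StringBuilder sb = new StringBuilder();
        try (BufferedReader br = new BufferedReader(
                new InputStreamReader(url.openStream(), StandardCharsets.UTF_8))) {
            String line;
            while ((line = br.readLine()) != null) {
                sb.append(line).append('\n');
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) throws IOException {
        // A temp file plays the role of the web page; a file: URL also supports openStream().
        Path tmp = Files.createTempFile("page", ".html");
        Files.write(tmp, "<html>contact: demo@example.com</html>".getBytes(StandardCharsets.UTF_8));
        System.out.print(read(tmp.toUri().toURL()));
        Files.delete(tmp);
    }
}
```

Passing an https URL to read works the same way, because openStream() hides the difference between a local file and a remote page.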

Looking at the two images above, you probably already have an idea.

Let's get to the point. The code is as follows:

    package com.bjsxt.url;

    import java.io.BufferedReader;
    import java.io.IOException;
    import java.io.InputStreamReader;
    import java.net.URL;
    import java.nio.charset.StandardCharsets;
    import java.util.regex.Matcher;
    import java.util.regex.Pattern;

    public class TestURL {
        public static void main(String[] args) throws IOException {
            URL url = new URL("https://www.douban.com/group/topic/125264863/");
            // \w matches a letter, digit, or underscore; \. matches a literal dot.
            // In a Java string literal each backslash has to be doubled.
            String strReg = "[\\w.]+@[\\w.]+\\.[\\w]+";
            Pattern pattern = Pattern.compile(strReg);
            // Read the page source line by line; try-with-resources closes the reader.
            try (BufferedReader br = new BufferedReader(
                    new InputStreamReader(url.openStream(), StandardCharsets.UTF_8))) {
                String str;
                while ((str = br.readLine()) != null) {
                    Matcher matcher = pattern.matcher(str);
                    // Print every e-mail address found on this line
                    while (matcher.find()) {
                        System.out.println(matcher.group());
                    }
                }
            }
        }
    }

Output:

maming1989@126.com

1607548724@qq.com

516110546@qq.com

919706245@qq.com

jennifer012@yeah.net

1120978510@qq.com

Spencer721@163.com

chaiyya@163.com

735281660@qq.com

511611285@qq.com

2066192310@qq.com

2913980130@qq.com

18846190597@163.com

1134906240@qq.com

1937894408@qq.com

76224365@qq.com

pjiyan@outlook.com

17603227720@163.com

15967242526@163.com

mac1023@sina.com

1790443368@qq.com

putao891011@163.com

tsuabasayi@qq.com

1115656777@qq.com

zuoyexiongzhi@163.com

912333880@qq.com

t_sdam@163.com

771479801@qq.com

1975805867@qq.com

1435540787@qq.com

623025701@qq.com

401023798@qq.com

1451235943@qq.com

1253099965@qq.com

719886686@qq.com

1696063991@qq.com

1599603841@qq.com

910798479@qq.com

1084928422@qq.cm

754432791@qq.com

743512682@qq.com

441948935@qq.com

2778529189@qq.com

1552445663@qq.com

future_Michael@Sina.com

lazarro1002@gmail.com

228775969@qq.com

344615525@qq.com

815989503@qq.com

2913980130@qq.com

18825073461@qq.com

1286553850@qq.com

zui0134589@163.com

1921174267@qq.com

1635036743@qq.com

415630920@qq.com

1224469333@qq.com

2541971289@qq.com

li813549282@icloud.com

2261610491@qq.com

657860729@qq.com

623554043@qq.com

985663493@qq.com

fionaning95@foxmail.com

1923660403@qq.com

362372580@qq.com

931390596@qq.com

327144892@qq.com

244553797@qq.com

2547429661@qq.com

maming1989@126.com

maming1989@126.com

aby501@163.com

1451739284@qq.com

xunzhaoweizhi9@163.com

26545527@qq.com

2548491967@qq.com

1075202642@qq.com

544109270@qq.com

1527856122@qq.com

showhr129@163.com

imchenchang@163.com

imchenchang@sina.com

wuyuanmimei@yahoo.com

932674418@QQ.com

814402278@qq.com

619681588@qq.com

1259554862@qq.com

1196994728@qq.com

15295933537@163.com

794233892@qq.coom

847941713@qq.com

business@douban.com

That is all there is to the crawler.
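The list above contains repeats (maming1989@126.com, for example, appears three times) because every match is printed as soon as it is found. One possible refinement, sketched here under assumptions, is to collect the matches in a LinkedHashSet so that each address is kept once in first-seen order. The class name UniqueEmails, the method extract, and the sample text are made up for illustration.

```java
import java.util.LinkedHashSet;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class UniqueEmails {
    // Simple e-mail pattern: word characters or dots, '@', then a dotted domain.
    private static final Pattern EMAIL = Pattern.compile("[\\w.]+@[\\w.]+\\.\\w+");

    // Hypothetical helper: returns each distinct match once, in first-seen order.
    static Set<String> extract(String text) {
        Set<String> found = new LinkedHashSet<>();
        Matcher m = EMAIL.matcher(text);
        while (m.find()) {
            found.add(m.group());
        }
        return found;
    }

    public static void main(String[] args) {
        String sample = "mail a@example.com or b@example.org, again a@example.com";
        System.out.println(extract(sample)); // [a@example.com, b@example.org]
    }
}
```

In the crawler, the same set could be filled inside the read loop and printed once after the loop ends.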

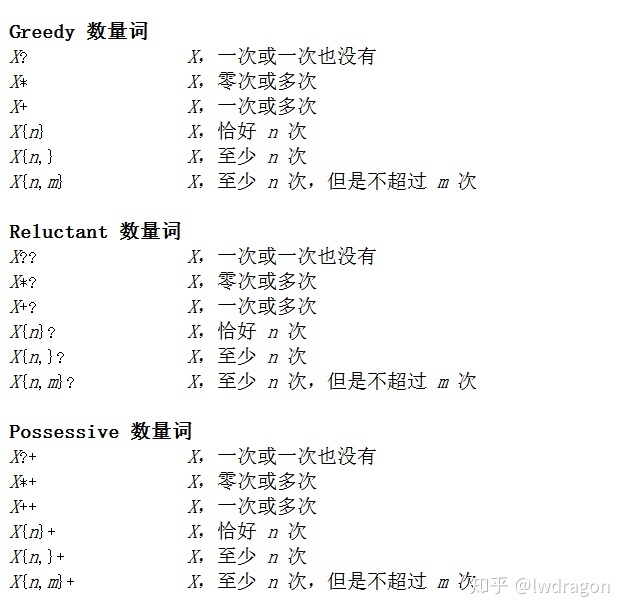

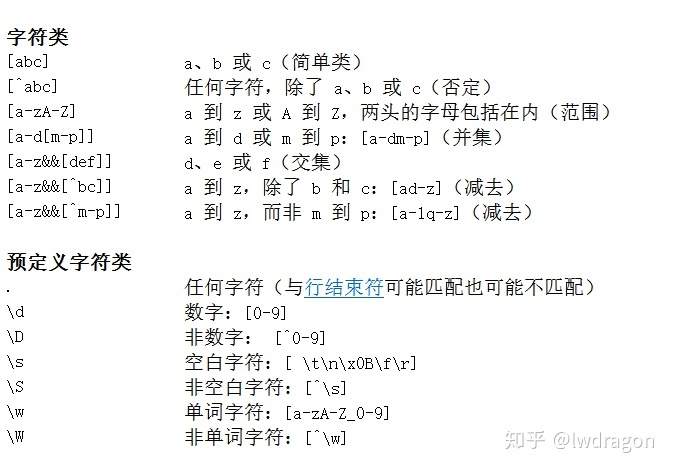

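You may not be familiar with regular expressions yet; for now it is enough to know how to use them.

You only need to understand how the pattern in strReg is put together.

Here are some regular expressions: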
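As a small illustration, the sketch below checks a handful of patterns one piece at a time and then combines them into an e-mail pattern of the form `[\w.]+@[\w.]+\.\w+`. The class name RegexPieces, the helper fullMatch, and the sample inputs are made up for this example; Pattern.matches succeeds only when the whole input matches the pattern.

```java
import java.util.regex.Pattern;

public class RegexPieces {
    // Hypothetical wrapper so each line below reads as "pattern, input".
    static boolean fullMatch(String regex, String input) {
        return Pattern.matches(regex, input);
    }

    public static void main(String[] args) {
        // \w matches one letter, digit, or underscore; + means "one or more".
        System.out.println(fullMatch("\\w+", "user_01"));        // true
        // Inside [...] a dot is a literal dot, so [\w.]+ also accepts "first.last".
        System.out.println(fullMatch("[\\w.]+", "first.last"));  // true
        // Outside brackets, an unescaped dot matches any single character...
        System.out.println(fullMatch("a.c", "a@c"));             // true
        // ...while \. matches only a real dot.
        System.out.println(fullMatch("a\\.c", "a@c"));           // false
        // The pieces combined into an e-mail pattern.
        System.out.println(fullMatch("[\\w.]+@[\\w.]+\\.\\w+", "someone@example.com")); // true
    }
}
```

The backslashes are what give `\w` and `\.` their special meaning; without them, `[w.]` matches only the letter w or a dot, and a bare `.` matches any character.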

------------------百战卓越, day 018
