目录

1.背景:

需要在生产上,复制一张表单并且重新命名,此表单数据量为两千万条,存在索引。

2.解决方案 :

- 使用Navicat直接CV复制,然后修改表名。

复制表单完成时间无法直观看出,还会出现卡顿。这种类似于下面的sql,速度慢。

INSERT into 新表 from SELECT * FROM 旧表- 导出sql文件,重新命名,再导入数据库。

数据量太大,文件即使能导出,上百兆的文件,打开都很费劲,更不用说一键修改表名。

- 拆分表单数据为excel,再分批次导入(以大化小)。

查询快,写入文件速度快。从文件导入比执行sql快。

- 代码批量插入,拆分数据,分批次导入。

查询快,相比上面省略导出excel环节,代码执行批量插入。请求事务比较集中,一查就得插入。

3.操作步骤:

A.导出excel:

原理:

本文将数据进行拆分,每30000条数据,存一个excel文件。

代码解释:

每次查询30000条数据,然后进行写入excel操作

完整代码:

import org.apache.poi.xssf.usermodel.XSSFCell;

import org.apache.poi.xssf.usermodel.XSSFRow;

import org.apache.poi.xssf.usermodel.XSSFSheet;

import org.apache.poi.xssf.usermodel.XSSFWorkbook;

import org.junit.jupiter.api.Test;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.util.ObjectUtils;

import tk.mybatis.mapper.entity.Example;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.lang.reflect.Field;

import java.util.ArrayList;

import java.util.List;

import java.util.UUID;

@SpringBootTest

class Tests {

private static final List heaList;

private static final int baseNum = 30000;

@Autowired

private CampaignTargetMapper dao;

static {

heaList = new ArrayList();

heaList.add("id");

heaList.add("pk_id");

heaList.add("code");

heaList.add("camp_Key");

heaList.add("camp_code");

heaList.add("consumer_key");

heaList.add("customer_id");

heaList.add("customer_type");

heaList.add("status");

heaList.add("channel");

heaList.add("load_number");

heaList.add("brand_code");

heaList.add("country");

heaList.add("sent_date");

heaList.add("job_id");

// heaList.add("create_time");

// heaList.add("modify_time");

}

//写入文件路径:

private static final String filePath = "D:\\备份\\test2\\";

private static int page_no = 128;

@Test

void contextLoads() throws IllegalAccessException {

copyTableData();

}

public void copyTableData() throws IllegalAccessException {

int ratioNum = 30000;

//target总数

Integer targetCount = dao.selectCount(null);

//按2万等分

Integer num = targetCount / ratioNum;

for (int i = 191; i <= num; i++) {

Example example = new Example(CampaignTarget.class);

Example.Criteria criteria = example.createCriteria();

int firstCount = i == 1 ? 1 : (i-1) * ratioNum+1;

criteria.andBetween("id", firstCount, i * ratioNum);

List<CampaignTarget> list = dao.selectByExample(example);

if (ObjectUtils.isEmpty(list)){

continue;

}

String uuid = UUID.randomUUID().toString();

//表名:

String biaoming = "数据元管理表" + uuid.substring(0, 4);

exportToExcel(biaoming, list);

}}

/*

* sheetName:表名

* heaaList:表头

* contentList:表内容

* filePath:写入文件地址

* */

public static void exportToExcel(String sheetName, List<CampaignTarget> contentList) throws IllegalAccessException {

//创建sheet页

XSSFWorkbook workbook = new XSSFWorkbook();

XSSFSheet sheet = workbook.createSheet(sheetName);

createTitle(sheet);

int k = 1;

for (int i = 0; i < contentList.size(); i++) {

//反射得到所有属性

Class cls = contentList.get(i).getClass();

Field[] fields = cls.getDeclaredFields();

if (k > baseNum) {

k = 1;

}

XSSFRow row1 = sheet.createRow(k);

k++;

int n = 0;

for (Field field : fields) {

String name = field.getName();

//打开私有访问

field.setAccessible(true);

XSSFCell cell = row1.createCell(n);

System.out.println(name);

System.out.println(contentList.get(i));

String value = field.get(contentList.get(i)) == null ? null : field.get(contentList.get(i)).toString();

cell.setCellValue(value);

n++;

}

if (i!=0 && (i+1) % baseNum == 0) {

String basePath = filePath;

page_no++;

basePath += "cam_" + page_no + ".xlsx";

//写入文件

File file = new File(basePath);

try {

//将文件保存到指定位置

workbook.write(new FileOutputStream(file));

System.out.println("写入文件成功");

} catch (IOException e) {

e.printStackTrace();

}

//创建sheet页

// workbook = new XSSFWorkbook();

// sheet = workbook.createSheet(sheetName);

// createTitle(sheet);

}

}

}

public static void createTitle(XSSFSheet sheet) {

XSSFRow row = sheet.createRow(0);

for (int i = 0; i < heaList.size(); i++) {

XSSFCell cell = row.createCell(i);

cell.setCellValue(heaList.get(i).toString());

}

}

}

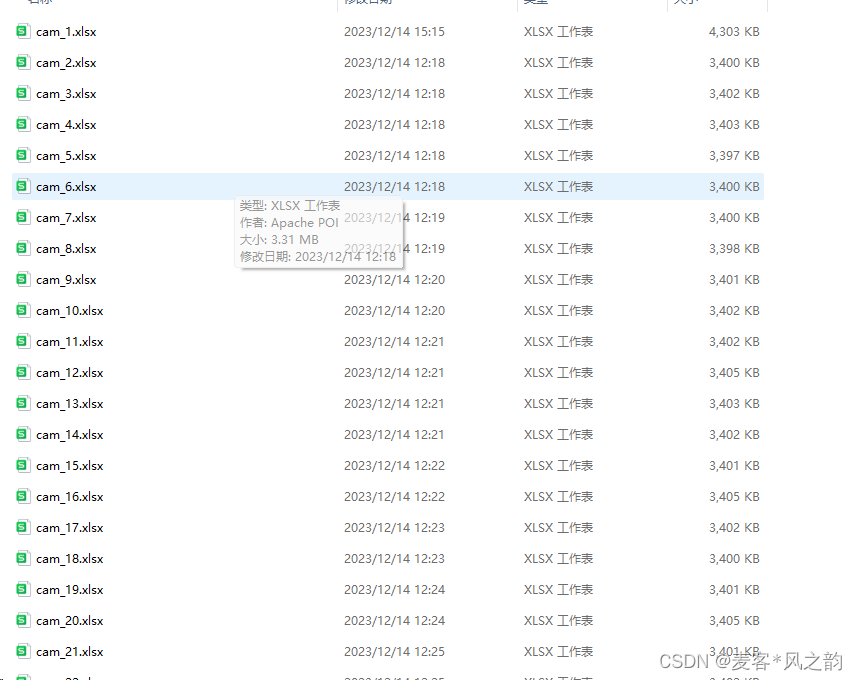

结果展示:

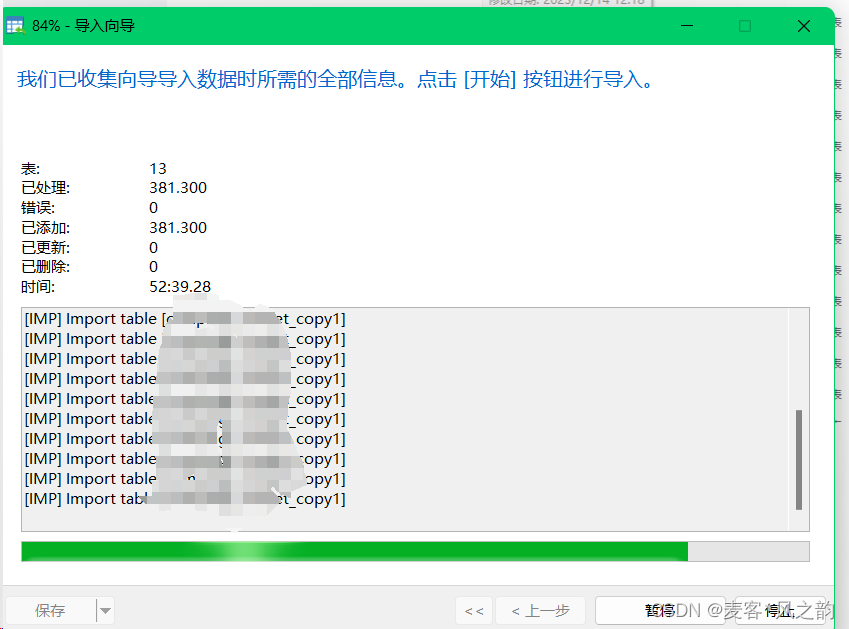

新表录入:

先要手动创建新表结构,然后使用Navicat进行excel导入。

B.批量插入:

原理:

先从原表中查询30000条数据,在给旧表中使用批量插入进行数据插入。

完整代码:

需要手动创建新表

public void copyTableData() {

int ratioNum = 30000;

//target总数

Integer targetCount = dao.selectCount(null);

//按2万等分

Integer num = targetCount / ratioNum;

//最后剩余

int remainder = targetCount % ratioNum;

for (int i = 0; i <= num; i++) {

Example example = new Example(CampaignTarget.class);

Example.Criteria criteria = example.createCriteria();

int firstCount = i == 1 ? 1 : (i-1) * ratioNum+1;

criteria.andBetween("id", firstCount, i * ratioNum);

List<CampaignTarget> list = dao.selectByExample(example);

if (ObjectUtils.isEmpty(list)){

continue;

}

List<CampaignTargetCopy1> targetTws = BeanPropertyUtil.copyList(list, CampaignTargetCopy1.class);

if (!ObjectUtils.isEmpty(targetTws)) {

dao1.insertList(targetTws);

}

}

//最后剩余

if (remainder != 0) {

Example example = new Example(CampaignTarget.class);

Example.Criteria criteria = example.createCriteria();

criteria.andBetween("id", num * ratioNum+1, targetCount);

List<CampaignTarget> list = dao.selectByExample(example);

if (!ObjectUtils.isEmpty(list)) {

List<CampaignTargetCopy1> targets = BeanPropertyUtil.copyList(list, CampaignTargetCopy1.class);

if (!ObjectUtils.isEmpty(targets)) {

dao1.insertList(targets);

}

}

}

}

文章讲述了在处理大量数据时,如何在生产环境中快速复制并重命名表单,避免导出大文件问题。作者提出使用Navicat复制表并结合代码批量插入,将数据拆分为每30000条存入一个Excel文件,提高了效率和性能。

文章讲述了在处理大量数据时,如何在生产环境中快速复制并重命名表单,避免导出大文件问题。作者提出使用Navicat复制表并结合代码批量插入,将数据拆分为每30000条存入一个Excel文件,提高了效率和性能。

674

674

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?