FLINK

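In stream processing, Flink very often reads its input from Kafka, and this is one of the most widely used combinations. Kafka continuously feeds data to Flink, and Flink processes the data and then writes it to various databases. Let's look at how Flink reads data from Kafka.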

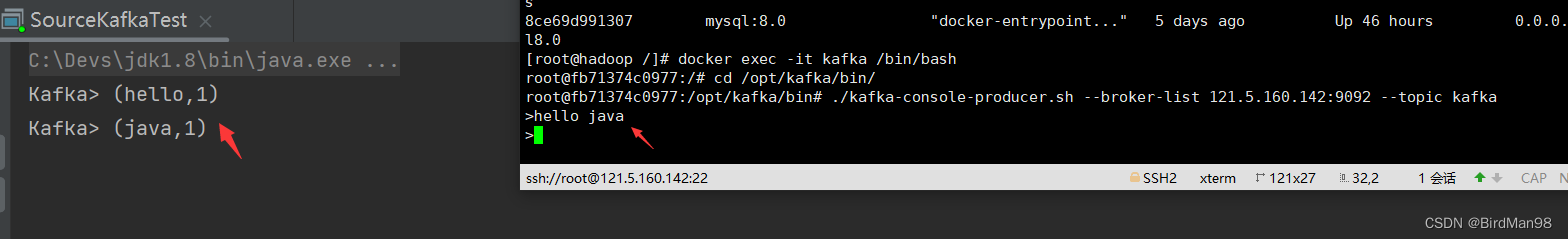

Result

Kafka

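Before you start, install Kafka and start a producer that generates data. For details, see these two articles

Note: the Kafka topic must be recreated.

Once everything is configured, you can write the code.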

POM

Add the Flink Kafka connector dependency. For the rest of the dependency configuration, see

    <!-- kafka -->
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-connector-kafka_${scala.binary.version}</artifactId>
        <version>${flink.version}</version>
    </dependency>
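
The dependency above relies on two Maven properties, `flink.version` and `scala.binary.version`. A minimal sketch of how they might be declared in the same `pom.xml` is shown here. The version numbers are assumptions picked for illustration (a Flink 1.13.x release built for Scala 2.12), so align them with whatever the other Flink dependencies in your project already use.

```xml
<properties>
    <!-- assumed values; keep them in sync with the other Flink dependencies -->
    <flink.version>1.13.6</flink.version>
    <scala.binary.version>2.12</scala.binary.version>
</properties>
```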

Code

The flow is the same as before: create the streaming environment -> get the data source -> process the data -> write out the data

    package org.example.flink.level2;

    import org.apache.flink.api.common.serialization.SimpleStringSchema;
    import org.apache.flink.api.common.typeinfo.Types;
    import org.apache.flink.api.java.tuple.Tuple2;
    import org.apache.flink.streaming.api.datastream.DataStreamSource;
    import org.apache.flink.streaming.api.datastream.KeyedStream;
    import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;
    import org.apache.flink.util.Collector;

    import java.util.Arrays;
    import java.util.Properties;

    public class SourceKafkaTest {

        public static void main(String[] args) throws Exception {
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            env.setParallelism(1);

            // Kafka configuration
            Properties properties = new Properties();
            properties.setProperty("bootstrap.servers", "121.5.160.142:9092");
            properties.setProperty("group.id", "consumer-group");
            properties.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.setProperty("auto.offset.reset", "latest");

            // Read the data stream from the Kafka topic named "kafka"
            DataStreamSource<String> stream = env.addSource(new FlinkKafkaConsumer<String>(
                    "kafka",
                    new SimpleStringSchema(),
                    properties
            ));

            // Split each line into words
            SingleOutputStreamOperator<Tuple2<String, Long>> wordAndOne = stream
                    .flatMap((String line, Collector<String> words) -> {
                        Arrays.stream(line.split(" ")).forEach(words::collect);
                    })
                    // Declare the element type produced by the split
                    .returns(Types.STRING)
                    // Map every word (duplicates included) to a 2-tuple (word, 1), e.g. (hello,1)
                    .map(word -> Tuple2.of(word, 1L))
                    // Declare the generic types of the returned 2-tuple
                    .returns(Types.TUPLE(Types.STRING, Types.LONG));

            // Group by the word in the first tuple field
            KeyedStream<Tuple2<String, Long>, String> wordAndOneKS = wordAndOne
                    .keyBy(t -> t.f0);

            // Sum the counts in the second tuple field
            SingleOutputStreamOperator<Tuple2<String, Long>> result = wordAndOneKS
                    .sum(1);

            result.print("Kafka");

            env.execute();
        }
    }
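
The Kafka configuration in the job hard-codes the broker address. As a small sketch, the same `Properties` object could be built from command-line arguments instead. `KafkaProps` and `fromArgs` are hypothetical names, and the `localhost:9092` default is an assumption. The `key.deserializer` and `value.deserializer` entries are left out here because, as far as I know, `FlinkKafkaConsumer` installs its own byte-array deserializers and relies on the `DeserializationSchema` (here `SimpleStringSchema`) to decode records.

```java
import java.util.Properties;

/** Hypothetical helper: builds the consumer Properties without hard-coding the broker. */
final class KafkaProps {

    private KafkaProps() {}

    /**
     * Reads "host:port" from args[0] and the group id from args[1],
     * falling back to illustrative defaults when an argument is missing.
     */
    static Properties fromArgs(String[] args) {
        String bootstrap = args.length > 0 ? args[0] : "localhost:9092"; // assumed default
        String groupId = args.length > 1 ? args[1] : "consumer-group";

        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrap);
        props.setProperty("group.id", groupId);
        // Only takes effect when the group has no committed offset yet.
        props.setProperty("auto.offset.reset", "latest");
        return props;
    }

    public static void main(String[] args) {
        // Print the resulting configuration, one key=value pair per line.
        fromArgs(args).forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

In the job, `KafkaProps.fromArgs(args)` would then take the place of the hand-built `properties` object passed to the consumer.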

Run the main function and produce some data into the Kafka topic; the console then reads and processes the data in real time.
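
To make the console output easier to predict, here is a plain-Java stand-in for the `flatMap` -> `map` -> `keyBy` -> `sum(1)` chain. It uses no Flink at all: `RunningWordCount` is a hypothetical class, and a `Map` plays the role of Flink's keyed state. The point it illustrates is that a keyed `sum` emits an updated running total for every incoming word rather than one final count. The `Kafka> ` prefix mirrors what I would expect `print("Kafka")` to produce with parallelism 1.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Plain-Java stand-in for the job's word-count chain; no Flink involved. */
final class RunningWordCount {

    private RunningWordCount() {}

    /** Returns one output line per word, carrying that word's running total so far. */
    static List<String> process(List<String> lines) {
        Map<String, Long> totals = new LinkedHashMap<>(); // plays the role of keyed state
        List<String> printed = new ArrayList<>();
        for (String line : lines) {
            for (String word : line.split(" ")) { // same split as the job
                long count = totals.merge(word, 1L, Long::sum);
                printed.add("Kafka> (" + word + "," + count + ")");
            }
        }
        return printed;
    }

    public static void main(String[] args) {
        // Two messages, as if typed into a Kafka console producer.
        process(Arrays.asList("hello world", "hello flink")).forEach(System.out::println);
    }
}
```

For the two messages `hello world` and `hello flink`, this prints `(hello,1)`, `(world,1)`, `(hello,2)`, `(flink,1)`, each with the `Kafka> ` prefix. Note that `split(" ")` yields empty tokens when a message contains consecutive spaces, so those would be counted as a word too.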
