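Over the past few days I have been writing Flink jobs that connect to MySQL through two connectors, mysql-cdc and the JDBC connector. Writing Kafka data into MySQL works, but when I read from MySQL and write into a table backed by the print connector, no data comes out, and no error is reported either.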

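Update (2021-01-27)

It turned out that the field types declared in Flink did not match the MySQL ones: the column is INT in MySQL, but I had declared it as BIGINT in Flink. I initially assumed the two would be compatible, but that mismatch caused this problem. After aligning the types according to the official documentation, everything works.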
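The fix in the update is to declare each column with the Flink type that the JDBC connector's documentation maps the MySQL type to, not a type that merely looks "wide enough". The sketch below reads a table's column types over plain JDBC and prints the Flink type to declare next to each one. The class name `FlinkDdlTypeCheck` and its command-line arguments are hypothetical; the mapping covers only integer types and is transcribed from the data type mapping table in the Flink JDBC connector docs, so verify it against the docs for your Flink version.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.Locale;

// Hypothetical diagnostic: prints each MySQL column's type next to the Flink SQL
// type assigned to it by the JDBC connector's data type mapping table.
// Only integer types are covered; extend the switch for other columns.
final class FlinkDdlTypeCheck {

    static String flinkTypeFor(String mysqlType) {
        String raw = mysqlType.toLowerCase(Locale.ROOT).trim();
        if (raw.equals("tinyint(1)")) {
            return "BOOLEAN";
        }
        // Drop display widths and collapse spaces: "int(11) unsigned" -> "int unsigned"
        String t = raw.replaceAll("\\(\\d+\\)", "").replaceAll("\\s+", " ").trim();
        switch (t) {
            case "tinyint":
                return "TINYINT";
            case "smallint":
            case "tinyint unsigned":
                return "SMALLINT";
            case "int":
            case "integer":
            case "mediumint":
            case "smallint unsigned":
                return "INT";
            case "bigint":
            case "int unsigned":
                return "BIGINT";
            case "bigint unsigned":
                return "DECIMAL(20, 0)";
            default:
                throw new IllegalArgumentException("type not covered by this sketch: " + mysqlType);
        }
    }

    // "WHERE 1 = 0" returns no rows but still carries the column metadata.
    static String probeQuery(String table) {
        return "SELECT * FROM `" + table.replace("`", "``") + "` WHERE 1 = 0";
    }

    public static void main(String[] args) throws SQLException {
        if (args.length != 4) {
            System.err.println("usage: FlinkDdlTypeCheck <jdbc-url> <user> <password> <table>");
            return;
        }
        // Needs mysql-connector-java on the classpath; DriverManager discovers it itself.
        try (Connection conn = DriverManager.getConnection(args[0], args[1], args[2]);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(probeQuery(args[3]))) {
            ResultSetMetaData md = rs.getMetaData();
            for (int i = 1; i <= md.getColumnCount(); i++) {
                String mysqlType = md.getColumnTypeName(i);
                String flinkType;
                try {
                    flinkType = flinkTypeFor(mysqlType);
                } catch (IllegalArgumentException e) {
                    flinkType = "? (check the mapping table)";
                }
                System.out.printf("%-10s MySQL %-16s -> Flink %s%n", md.getColumnName(i), mysqlType, flinkType);
            }
        }
    }
}
```

For example, `java FlinkDdlTypeCheck 'jdbc:mysql://<host>:3307/canaltest?useSSL=false' root <password> user_pid` should list the INT columns described in the update with `INT` as the Flink type, which is what the `mysql_pid` and `cdc_pid` DDL should declare instead of `bigint`.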

Environment

MySQL 5.7.27

Flink 1.11.2

pom.xml

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.abc.hd</groupId>
    <artifactId>FlinkTest</artifactId>
    <version>1.0-SNAPSHOT</version>

    <repositories>
        <!-- <repository>-->
        <!--     <id>cloudera</id>-->
        <!--     <url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>-->
        <!-- </repository>-->
    </repositories>

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-mapreduce-client-core</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-common</artifactId>
            <version>1.11.2</version>
            <!-- <scope>provided</scope>-->
        </dependency>
        <dependency>
            <!-- To use canal-json with Kafka, the program needs the following dependency -->
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-connector-kafka_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-api-java-bridge_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-api-scala-bridge_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-connector-jdbc_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>5.1.46</version>
        </dependency>
        <dependency>
            <groupId>com.alibaba.ververica</groupId>
            <artifactId>flink-connector-mysql-cdc</artifactId>
            <version>1.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-planner-blink_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-scala_2.11</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>1.11.2</version>
            <!-- <scope>provided</scope>-->
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-json</artifactId>
            <version>1.11.2</version>
        </dependency>
        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>1.2.74</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.6.1</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>
```

The code is as follows:

```java
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.table.api.EnvironmentSettings;
import org.apache.flink.table.api.TableResult;
import org.apache.flink.table.api.bridge.java.StreamTableEnvironment;

public class mysql2mysql {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment streamEnv = StreamExecutionEnvironment.getExecutionEnvironment();
        EnvironmentSettings envSettings = EnvironmentSettings.newInstance().useBlinkPlanner().inStreamingMode().build();
        StreamTableEnvironment tableEnv = StreamTableEnvironment.create(streamEnv, envSettings);

        // Kafka source table (JSON records)
        String kafka_abc = "CREATE TABLE kafka_abc (\n" +
                " id bigint,\n" +
                " pid bigint,\n" +
                " PRIMARY KEY (id) NOT ENFORCED\n" +
                ") WITH (\n" +
                " 'connector' = 'kafka',\n" +
                " 'topic' = 'test_abc',\n" +
                " 'properties.bootstrap.servers' = '****:9092,****:9092,****:9092',\n" +
                " 'properties.group.id' = 'testGroup',\n" +
                " 'format' = 'json',\n" +
                " 'scan.startup.mode' = 'earliest-offset',\n" +
                " 'json.ignore-parse-errors' = 'true',\n" +
                " 'json.fail-on-missing-field' = 'false'\n" +
                ")";

        // MySQL table user_pid through the JDBC connector.
        // NOTE: in MySQL these columns are INT (see the 2021-01-27 update);
        // declaring them bigint here is the mismatch that made the reads come back empty.
        String mysql_pid = "CREATE TABLE mysql_pid (\n" +
                " id bigint,\n" +
                " pid bigint\n" +
                // " ,PRIMARY KEY (id) NOT ENFORCED\n" +
                ") WITH (\n" +
                " 'connector' = 'jdbc',\n" +
                " 'url' = 'jdbc:mysql://*****:3307/canaltest?useUnicode=true&characterEncoding=utf8&useSSL=false&serverTimezone=UTC',\n" +
                " 'username' = 'root',\n" +
                " 'password' = '123',\n" +
                " 'driver' = 'com.mysql.jdbc.Driver',\n" +
                " 'table-name' = 'user_pid'\n" +
                ")";

        // Print sink: rows are written to stderr with the prefix "pppp"
        String table_print = "CREATE TABLE table_print (\n" +
                " id bigint,\n" +
                " pid bigint\n" +
                ") WITH (\n" +
                " 'connector' = 'print',\n" +
                " 'print-identifier' = 'pppp',\n" +
                " 'standard-error' = 'true'\n" +
                ")";

        // The same MySQL table through mysql-cdc (the same INT vs bigint note applies)
        String cdc_pid = "CREATE TABLE cdc_pid (\n" +
                " id bigint,\n" +
                " pid bigint\n" +
                // " ,PRIMARY KEY (id) NOT ENFORCED\n" +
                ") WITH (\n" +
                " 'connector' = 'mysql-cdc',\n" +
                " 'server-id' = '100123',\n" +
                " 'hostname' = '****',\n" +
                " 'port' = '3307',\n" +
                " 'username' = 'root',\n" +
                " 'password' = '123',\n" +
                " 'database-name' = 'canaltest',\n" +
                " 'table-name' = 'user_pid'\n" +
                ")";

        tableEnv.executeSql(kafka_abc);
        tableEnv.executeSql(table_print);
        tableEnv.executeSql(mysql_pid);
        tableEnv.executeSql(cdc_pid);

        // Reading from Kafka and printing: works
        // String sql_kakfa2print = "insert into table_print select id, pid from kafka_abc";
        // tableEnv.executeSql(sql_kakfa2print);

        // Writing from Kafka to MySQL: works
        // String kafka2mysqlPid = "insert into mysql_pid select id, pid from kafka_abc";
        // tableEnv.executeSql(kafka2mysqlPid);

        // Reading from MySQL via JDBC: no error, but no data comes out
        String mysqlPid2Print = "insert into table_print select id, pid from mysql_pid";
        tableEnv.executeSql(mysqlPid2Print);

        // Reading from MySQL via mysql-cdc: no error, but no data comes out
        // String cdcPid2Print = "insert into table_print select id, pid from cdc_pid";
        // tableEnv.executeSql(cdcPid2Print);
    }
}
```

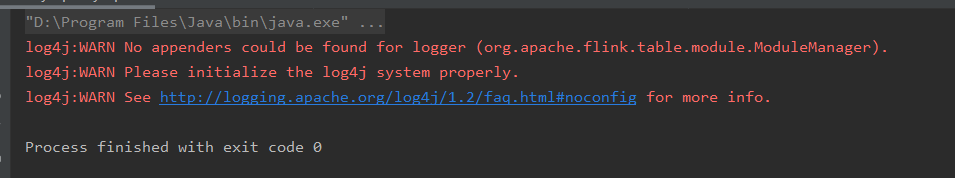

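Reading from MySQL through the JDBC connector or through mysql-cdc:

neither approach reads any data. The Java program reports no error either; it simply exits normally.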

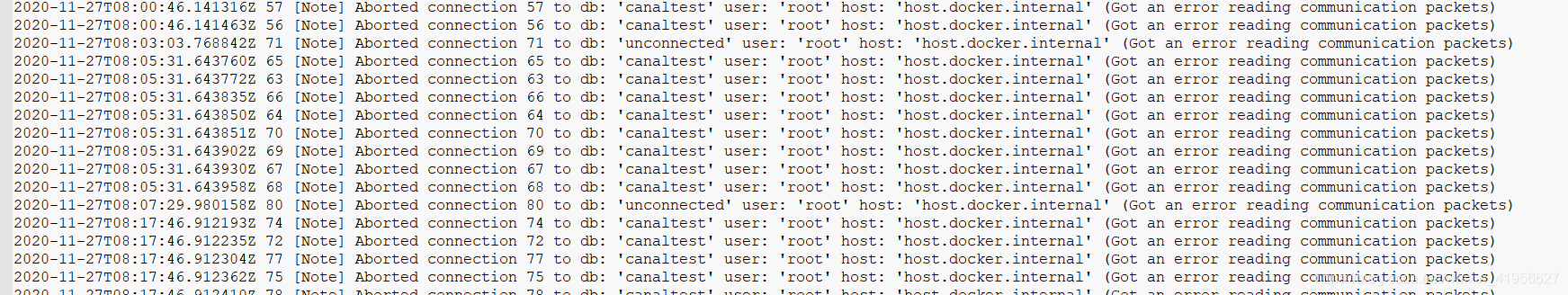

However, the MySQL error log does contain related entries. They appear only when reading, never when writing:

```text
2020-11-27T08:07:29.980158Z 80 [Note] Aborted connection 80 to db: 'unconnected' user: 'root' host: 'host.docker.internal' (Got an error reading communication packets)
2020-11-27T08:17:46.912193Z 74 [Note] Aborted connection 74 to db: 'canaltest' user: 'root' host: 'host.docker.internal' (Got an error reading communication packets)
```
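To check whether these aborts line up with the read attempts (for example, by comparing their timestamps with the times the job starts and exits), each note can be split into its fields. This is a minimal sketch: the class `AbortedConnectionNote` is hypothetical, and its pattern is written against the two log lines above rather than against a documented log grammar. `db: 'unconnected'` in the first line means the client had not selected a default database on that connection.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: splits a MySQL 5.7 "Aborted connection" note into its fields.
final class AbortedConnectionNote {
    private static final Pattern NOTE = Pattern.compile(
            "^(\\S+)\\s+\\d+\\s+\\[\\w+\\]\\s+Aborted connection (\\d+) to db: '([^']*)'"
            + " user: '([^']*)' host: '([^']*)' \\((.*)\\)$");

    final String timestamp;
    final long connectionId;
    final String db;      // "unconnected" when no default database was selected
    final String user;
    final String host;
    final String reason;

    private AbortedConnectionNote(Matcher m) {
        timestamp = m.group(1);
        connectionId = Long.parseLong(m.group(2));
        db = m.group(3);
        user = m.group(4);
        host = m.group(5);
        reason = m.group(6);
    }

    // Returns null for lines that are not "Aborted connection" notes.
    static AbortedConnectionNote parse(String line) {
        Matcher m = NOTE.matcher(line.trim());
        return m.matches() ? new AbortedConnectionNote(m) : null;
    }

    public static void main(String[] args) {
        AbortedConnectionNote n = parse("2020-11-27T08:07:29.980158Z 80 [Note] Aborted connection 80 to db: "
                + "'unconnected' user: 'root' host: 'host.docker.internal' (Got an error reading communication packets)");
        System.out.println(n.timestamp + " conn=" + n.connectionId + " db=" + n.db + " reason=" + n.reason);
    }
}
```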

Switching the MySQL JDBC driver (mysql-connector-java) in the pom to an 8.x version shows the same behavior.
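When moving to Connector/J 8.x, the `'driver'` option in the JDBC DDL should change as well: 8.x renamed the driver class to `com.mysql.cj.jdbc.Driver`, and the old `com.mysql.jdbc.Driver` name is kept only as a deprecated alias. A small sketch of choosing the option value from the version in the pom; `MySqlDriverOption` is a hypothetical name, `8.0.22` is just an example version, and only the 5.1 and 8.x lines mentioned in this post are handled.

```java
// Hypothetical helper: chooses the value for the Flink JDBC connector's 'driver'
// option from the mysql-connector-java version declared in the pom.
final class MySqlDriverOption {

    static String driverClassFor(String connectorVersion) {
        int major = Integer.parseInt(connectorVersion.split("\\.")[0]);
        if (major == 5) {
            return "com.mysql.jdbc.Driver";     // Connector/J 5.1.x
        }
        if (major >= 8) {
            return "com.mysql.cj.jdbc.Driver";  // Connector/J 8.x (old name deprecated)
        }
        throw new IllegalArgumentException("unsupported Connector/J version: " + connectorVersion);
    }

    // Renders the option line as it appears inside the WITH (...) clause.
    static String driverOption(String connectorVersion) {
        return "'driver' = '" + driverClassFor(connectorVersion) + "'";
    }

    public static void main(String[] args) {
        System.out.println(driverOption("5.1.46"));  // 'driver' = 'com.mysql.jdbc.Driver'
        System.out.println(driverOption("8.0.22"));  // 'driver' = 'com.mysql.cj.jdbc.Driver'
    }
}
```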

Question: Flink can write to MySQL but cannot read from it, which puzzles me. If anyone knows the cause, I would appreciate an explanation. Thanks!
