MSELoss()

Usage

import torch

import torch.nn.functional as F

a=torch.tensor([[0,0.5,2,3],[0,1,2,3],[0,1,2,3]],dtype=torch.float32)

b=torch.tensor([[1,0.3,3,4],[0,1.2,2.2,3],[0,1,2,5]],dtype=torch.float32)

print(a.shape,b.shape)

c=F.mse_loss(a, b, reduction='sum')

d=0.5*F.mse_loss(a, b, reduction='mean')  # a factor of 0.5 is commonly applied

print(c)

print(d)

Result:

torch.Size([3, 4]) torch.Size([3, 4])

tensor(7.1200)

tensor(0.2967)
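To see where these two numbers come from, the reductions can be reproduced by hand. This is a minimal sketch that reuses the tensors from the example; the names `sq_err`, `manual_sum`, and `manual_mean` are illustrative and not part of PyTorch.

```python
import torch
import torch.nn.functional as F

a = torch.tensor([[0, 0.5, 2, 3], [0, 1, 2, 3], [0, 1, 2, 3]], dtype=torch.float32)
b = torch.tensor([[1, 0.3, 3, 4], [0, 1.2, 2.2, 3], [0, 1, 2, 5]], dtype=torch.float32)

sq_err = (a - b) ** 2        # element-wise squared error, shape [3, 4]
manual_sum = sq_err.sum()    # what reduction='sum' computes
manual_mean = sq_err.mean()  # what reduction='mean' computes: the sum divided by all 12 elements

print(manual_sum, F.mse_loss(a, b, reduction='sum'))
print(0.5 * manual_mean, 0.5 * F.mse_loss(a, b, reduction='mean'))
```

Note that 'mean' divides by the total number of elements (12 here), not by the batch size (3).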

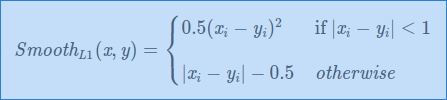

Smooth L1 Loss(Huber)

Formula
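For reference, this is the piecewise definition that PyTorch documents for smooth L1 loss at its default setting `beta = 1`, where $x = a_i - b_i$ is the element-wise difference. The symbol names are chosen here for illustration.

$$
\mathrm{smooth}_{L1}(x) =
\begin{cases}
0.5\,x^2 & \text{if } |x| < 1 \\
|x| - 0.5 & \text{otherwise}
\end{cases}
$$

The per-element values are then summed or averaged according to `reduction`.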

Usage

import torch

import torch.nn.functional as F

a=torch.tensor([[0,0.5,2,3],[0,1,2,3],[0,1,2,3]],dtype=torch.float32)

b=torch.tensor([[1,0.3,3,4],[0,1.2,2.2,3],[0,1,2,5]],dtype=torch.float32)

print(a.shape,b.shape)

c=F.smooth_l1_loss(a, b, reduction='sum')

d=F.smooth_l1_loss(a, b, reduction='mean')

print(c)

print(d)

Result:

torch.Size([3, 4]) torch.Size([3, 4])

tensor(3.0600)

tensor(0.2550)
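The same result can be rebuilt element by element, which makes the two branches of the loss visible. This is a rough sketch assuming the default `beta=1`; `diff` and `per_elem` are illustrative names.

```python
import torch
import torch.nn.functional as F

a = torch.tensor([[0, 0.5, 2, 3], [0, 1, 2, 3], [0, 1, 2, 3]], dtype=torch.float32)
b = torch.tensor([[1, 0.3, 3, 4], [0, 1.2, 2.2, 3], [0, 1, 2, 5]], dtype=torch.float32)

diff = (a - b).abs()
# Quadratic branch for small errors (|x| < 1), linear branch otherwise.
per_elem = torch.where(diff < 1, 0.5 * diff ** 2, diff - 0.5)

print(per_elem.sum(), F.smooth_l1_loss(a, b, reduction='sum'))
print(per_elem.mean(), F.smooth_l1_loss(a, b, reduction='mean'))
```

Compared with MSE on the same data, the largest error (|x| = 2) contributes 1.5 instead of 4, which is why this loss is less sensitive to outliers.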

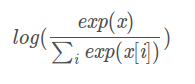

torch.nn.LogSoftmax()

This API transforms the predictions: it applies softmax first and then takes the log.

Code:

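import torch

pred=torch.tensor([[1.0,2.0,3.0],[0.5,0.5,0.5]],dtype=torch.float32)

pred_softmax=torch.nn.functional.softmax(pred,dim=1)

pred_logsoftmax=torch.log(pred_softmax)  # log of the softmax output

pred_logsoftmax1=torch.nn.functional.log_softmax(pred,dim=1)  # same result in a single call

print(pred, pred_softmax, pred_logsoftmax, pred_logsoftmax1, sep="\n")

Output: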

# original tensor

tensor([[1.0000, 2.0000, 3.0000],

[0.5000, 0.5000, 0.5000]])

# original tensor after softmax

tensor([[0.0900, 0.2447, 0.6652],

[0.3333, 0.3333, 0.3333]])

# log of the softmax output

tensor([[-2.4076, -1.4076, -0.4076],

[-1.0986, -1.0986, -1.0986]])

# log_softmax applied directly

tensor([[-2.4076, -1.4076, -0.4076],

[-1.0986, -1.0986, -1.0986]])
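Since log(softmax(x)) simplifies algebraically to x minus the log of the summed exponentials, the same values can also be obtained without forming the softmax at all. A small sketch of that identity, using `torch.logsumexp`; the name `manual` is illustrative.

```python
import torch
import torch.nn.functional as F

pred = torch.tensor([[1.0, 2.0, 3.0], [0.5, 0.5, 0.5]], dtype=torch.float32)

# log_softmax(x)_j = x_j - log(sum_k exp(x_k)), computed per row (dim=1)
manual = pred - torch.logsumexp(pred, dim=1, keepdim=True)
builtin = F.log_softmax(pred, dim=1)

print(manual)
print(torch.allclose(manual, builtin))
```

Working in log space this way avoids taking the log of a very small softmax value, which is one reason to prefer `log_softmax` over calling `softmax` and `log` separately.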

torch.nn.NLLLoss()

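A negative log likelihood loss for multi-class classification.

Each label value is used as an index into the corresponding row of pred, and the selected entry is negated.

Code:

pred=torch.tensor([[1.0,2.0,3.0],[0.5,0.5,0.5]],dtype=torch.float32)

pred_logsoftmax=torch.nn.functional.log_softmax(pred,dim=1)

label=torch.LongTensor([0,1])

# apply nll_loss to the log_softmax output; the default reduction is the mean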

loss=torch.nn.functional.nll_loss(pred_logsoftmax,label)

loss1=torch.nn.functional.cross_entropy(pred,label)

print(pred)

print(label)

print(pred_logsoftmax)

print(loss)

print(loss1)

Output:

tensor([[1.0000, 2.0000, 3.0000],

[0.5000, 0.5000, 0.5000]])

tensor([0, 1])

tensor([[-2.4076, -1.4076, -0.4076],  # element 0 of this row is selected and negated

[-1.0986, -1.0986, -1.0986]])  # element 1 of this row is selected and negated

tensor(1.7531)  # computed as [-(-2.4076) + (-(-1.0986))] / 2 = 1.7531

tensor(1.7531)

With the reduction changed to a sum:

loss=torch.nn.functional.nll_loss(pred_logsoftmax,label,reduction="sum")

print(loss)

tensor(3.5062)
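The indexing step described above can be written out explicitly with `gather`. This is a minimal sketch on the same inputs; `picked`, `manual_mean`, and `manual_sum` are illustrative names.

```python
import torch
import torch.nn.functional as F

pred = torch.tensor([[1.0, 2.0, 3.0], [0.5, 0.5, 0.5]], dtype=torch.float32)
log_probs = F.log_softmax(pred, dim=1)
label = torch.tensor([0, 1])

# For each row i, pick the log-probability at column label[i].
picked = log_probs.gather(1, label.unsqueeze(1)).squeeze(1)  # shape [2]

manual_mean = -picked.mean()  # matches the default reduction='mean'
manual_sum = -picked.sum()    # matches reduction='sum'

print(manual_mean, F.nll_loss(log_probs, label))
print(manual_sum, F.nll_loss(log_probs, label, reduction='sum'))
```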

torch.nn.CrossEntropyLoss()

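It combines torch.nn.LogSoftmax() and torch.nn.NLLLoss() in a single call.

Code:

# pre.shape = [3, 2]: batch_size=3, cls_num=2

# label.shape = [3]: each element is the class label of one sample

pre=torch.tensor([[-1.0,1.0],[2.0,-2.0],[-3.0,3.0]])  # predicted logits; the values must be floats (note the .0), otherwise an error is raised

label=torch.LongTensor([1,0,1])

# F.cross_entropy(pre, label) gives the same result as torch.nn.CrossEntropyLoss(), with no need to instantiate a module first

lossfn=torch.nn.CrossEntropyLoss()

loss=lossfn(pre,label)

print("loss ",loss)  # loss  tensor(0.0492)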
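That cross entropy is log_softmax followed by nll_loss can be checked directly on these inputs. A short sketch; `two_step` and `one_step` are illustrative names.

```python
import torch
import torch.nn.functional as F

pre = torch.tensor([[-1.0, 1.0], [2.0, -2.0], [-3.0, 3.0]])
label = torch.tensor([1, 0, 1])

# Two explicit steps: log-probabilities first, then negative log likelihood.
two_step = F.nll_loss(F.log_softmax(pre, dim=1), label)
# One call on the raw logits.
one_step = torch.nn.CrossEntropyLoss()(pre, label)

print(two_step, one_step)
```

In practice this means the model should output raw logits when CrossEntropyLoss is used; applying softmax beforehand would normalize twice.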

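The functional form:

a=torch.tensor([[-1.0,1.0],[2.0,-2.0],[-3.0,3.0]])  # the .0 is required

b=torch.LongTensor([1,0,1])

loss=F.cross_entropy(a,b)

If the logits are written as integers (without the .0), the tensor is created as a LongTensor and the call fails with:

RuntimeError: "log_softmax_lastdim_kernel_impl" not implemented for 'torch.LongTensor'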
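The dtype issue behind this error can be inspected and worked around by casting. This is a sketch under the assumption that the logits arrive as integers; `int_logits` is an illustrative name.

```python
import torch
import torch.nn.functional as F

# Python integers produce an int64 (Long) tensor, which log_softmax does not accept.
int_logits = torch.tensor([[-1, 1], [2, -2], [-3, 3]])
label = torch.tensor([1, 0, 1])
print(int_logits.dtype)

# Casting to float before the call gives the same loss as writing -1.0, 1.0, ...
loss = F.cross_entropy(int_logits.float(), label)
print(loss)
```

The labels, in contrast, are class indices and should stay as an integer (Long) tensor.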
