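General approach:

canal pulls change data from MySQL (by reading its binlog) and holds it on the node where canal runs, while also exposing a TCP service. We only need to write a client that connects to this service, pulls the data, and decides the format in which it is written to Kafka. That is enough to meet the requirement of writing data to Kafka in protobuf format.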

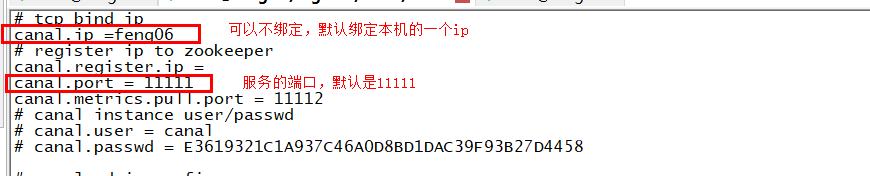

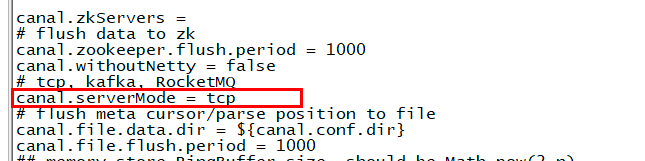
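The flow described above (pull a batch from canal, encode each row, write the bytes to Kafka) can be sketched without either library. This is a minimal, stdlib-only illustration and not the client used in this post: `ChangeSource`, `ByteSink`, `QueueSource`, and `drain` are hypothetical names, the source is an in-memory queue rather than canal, and UTF-8 encoding stands in for protobuf serialization.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;
import java.util.Queue;
import java.util.function.Function;

// Hypothetical stand-ins only: none of these types come from canal or Kafka.
final class PullEncodeForwardSketch {

    /** Stand-in for the canal TCP client: hands out change rows in batches. */
    interface ChangeSource {
        List<String> pull(int batchSize); // returns an empty list when nothing is pending
    }

    /** Stand-in for a Kafka producer configured with a byte[] value serializer. */
    interface ByteSink {
        void send(String topic, byte[] value);
    }

    /** In-memory source backed by a queue, for demonstration only. */
    static final class QueueSource implements ChangeSource {
        private final Queue<String> pending = new ArrayDeque<>();

        QueueSource(List<String> rows) {
            pending.addAll(rows);
        }

        @Override
        public List<String> pull(int batchSize) {
            List<String> batch = new ArrayList<>();
            while (batch.size() < batchSize && !pending.isEmpty()) {
                batch.add(pending.poll());
            }
            return batch;
        }
    }

    /** Pulls batches until the source is empty; returns the number of rows forwarded. */
    static int drain(ChangeSource source, Function<String, byte[]> encoder,
                     ByteSink sink, String topic, int batchSize) {
        int forwarded = 0;
        List<String> batch;
        while (!(batch = source.pull(batchSize)).isEmpty()) {
            for (String row : batch) {
                sink.send(topic, encoder.apply(row)); // encode, then hand the bytes to the sink
                forwarded++;
            }
        }
        return forwarded;
    }

    public static void main(String[] args) {
        ChangeSource source = new QueueSource(List.of("row-1", "row-2", "row-3"));
        List<byte[]> sent = new ArrayList<>();
        // UTF-8 bytes stand in for the byte[] a protobuf message would produce.
        int n = drain(source, s -> s.getBytes(StandardCharsets.UTF_8),
                (topic, value) -> sent.add(value), "orderdetail", 2);
        System.out.println(n + " rows forwarded");
    }
}
```

A real client keeps polling indefinitely and talks to canal and Kafka over the network; the sketch stops once the in-memory source is empty so that it can be run on its own.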

1. Configure canal (/bigdata/canal/conf/canal.properties), then start canal; this opens a TCP service.

2. Write the client code that pulls the data

PbOfCanalToKafka

    package cn._51doit.flink.canal;

    import cn._51doit.proto.OrderDetailProto;
    import com.alibaba.google.common.base.CaseFormat;
    import com.alibaba.otter.canal.client.CanalConnector;
    import com.alibaba.otter.canal.client.CanalConnectors;
    import com.alibaba.otter.canal.protocol.CanalEntry;
    import com.alibaba.otter.canal.protocol.Message;
    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.ProducerRecord;

    import java.net.InetSocketAddress;
    import java.util.HashMap;
    import java.util.List;
    import java.util.Map;
    import java.util.Properties;

    public class PbOfCanalToKafka {

        public static void main(String[] args) throws Exception {
            // Connect to the TCP service exposed by canal (destination "example")
            CanalConnector canalConnector = CanalConnectors.newSingleConnector(
                    new InetSocketAddress("192.168.57.12", 11111), "example", "canal", "canal123");

            // 1. Configure the Kafka producer
            Properties props = new Properties();
            // Kafka broker addresses
            props.setProperty("bootstrap.servers", "feng05:9092,feng06:9092,feng07:9092");
            props.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            // Values are raw bytes, so that serialized protobuf messages can be sent as-is
            props.setProperty("value.serializer", "org.apache.kafka.common.serialization.ByteArraySerializer");
            KafkaProducer<String, byte[]> producer = new KafkaProducer<>(props);

            while (true) {
                // Establish the connection
                canalConnector.connect();
                // Subscribe to the orderdetail table in the doit database
                canalConnector.subscribe("doit.orderdetail");
                // Fetch a batch of up to 10 entries
                Message message = canalConnector.get(10);
                if (message.getEntries().size() &
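The imports include `CaseFormat`, a Guava utility for converting between naming conventions, but the part of the listing that uses it is not shown. One plausible use, and this is only an assumption, is turning lower_underscore MySQL column names into the lowerCamel names that protobuf-generated Java builders use for their fields. The stdlib-only sketch below approximates that conversion for lowercase input; `ColumnNameSketch` and `lowerUnderscoreToLowerCamel` are hypothetical names and are not part of canal, Guava, or the original client.

```java
// Hypothetical helper: approximates a lower_underscore to lowerCamel conversion
// using only the standard library. Not taken from the original client.
final class ColumnNameSketch {

    /** Converts a name such as "order_id" to "orderId". */
    static String lowerUnderscoreToLowerCamel(String name) {
        StringBuilder out = new StringBuilder(name.length());
        boolean upperNext = false;
        for (int i = 0; i < name.length(); i++) {
            char c = name.charAt(i);
            if (c == '_') {
                upperNext = true;          // drop the underscore, capitalize what follows
            } else if (upperNext) {
                out.append(Character.toUpperCase(c));
                upperNext = false;
            } else {
                out.append(c);
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(lowerUnderscoreToLowerCamel("order_id"));
        System.out.println(lowerUnderscoreToLowerCamel("create_time"));
    }
}
```

With names in this form, each column value could be placed in a map keyed by field name before the protobuf message is built; how the original code actually does this is not visible in the listing.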
