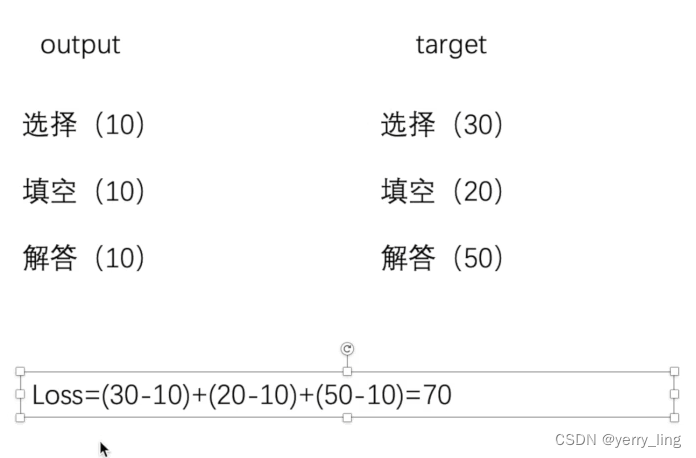

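What is loss?

1. It measures the gap between the actual output and the target.

2. It provides the basis for updating the output (backpropagation): the gradient, grad.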

L1Loss and MSELoss

An example

x: 1, 2, 3  y: 1, 2, 5

Calculation:

L1Loss = (0 + 0 + 2) / 3 ≈ 0.667

MSE = (0 + 0 + 2^2) / 3 ≈ 1.333
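The arithmetic above can be checked by hand without PyTorch. This is a minimal sketch in plain Python; the variable names are chosen here for illustration only.

```python
# Worked example from the text: x = [1, 2, 3], y = [1, 2, 5]
x = [1.0, 2.0, 3.0]
y = [1.0, 2.0, 5.0]

# L1 loss: mean of the absolute differences
l1 = sum(abs(a - b) for a, b in zip(x, y)) / len(x)

# MSE: mean of the squared differences
mse = sum((a - b) ** 2 for a, b in zip(x, y)) / len(x)

print(round(l1, 4))   # 0.6667
print(round(mse, 4))  # 1.3333
```

Both values are averages over the three elements, which matches the default behaviour of the PyTorch loss classes used in this post.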

    import torch
    from torch import nn
    from torch.nn import L1Loss

    # Define the tensors; the dtype must be floating point, not int
    inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
    targets = torch.tensor([1, 2, 5], dtype=torch.float32)

    # 1 sample, 1 channel, height x width = 1 x 3
    inputs = torch.reshape(inputs, (1, 1, 1, 3))
    targets = torch.reshape(targets, (1, 1, 1, 3))

    loss = L1Loss()
    result = loss(inputs, targets)
    print(result)      # tensor(0.6667)

    loss_mse = nn.MSELoss()
    result_mse = loss_mse(inputs, targets)
    print(result_mse)  # tensor(1.3333)

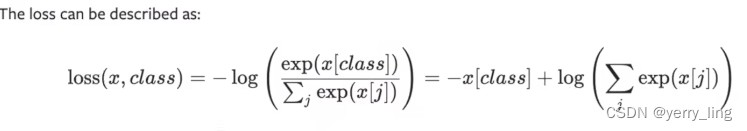
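Both losses average over all elements by default. As a small sketch that goes beyond what the post covers, the `reduction` argument of `nn.L1Loss` and `nn.MSELoss` switches this to a plain sum; the variable names below are illustrative.

```python
import torch
from torch import nn

inputs = torch.tensor([1.0, 2.0, 3.0])
targets = torch.tensor([1.0, 2.0, 5.0])

# reduction="mean" is the default: average over all elements
l1_mean = nn.L1Loss(reduction="mean")(inputs, targets)

# reduction="sum": add up the per-element errors instead of averaging
l1_sum = nn.L1Loss(reduction="sum")(inputs, targets)
mse_sum = nn.MSELoss(reduction="sum")(inputs, targets)

print(l1_mean.item(), l1_sum.item(), mse_sum.item())
```

With the sample data, the summed L1 loss is 0 + 0 + 2 and the summed squared error is 0 + 0 + 4.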

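Cross entropy

A loss function commonly used for classification.

Example:

Suppose we have a classification problem with three classes:

cat, dog, frog

(0, 1, 2) are their class indices (the target is an integer label here, not a one-hot vector)

output [0.1, 0.2, 0.3]

If the target is 1, meaning the dog class, then x[class], the output at that index, is 0.2.

Plugging into the formula: loss(x, class) = -x[class] + log(Σ_j exp(x[j])) = -0.2 + log(exp(0.1) + exp(0.2) + exp(0.3)) ≈ 1.1019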
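The formula can be evaluated by hand before calling PyTorch. The sketch below uses only the standard `math` module; it also shows that the same number is the negative log of the softmax probability of the target class. The variable names are illustrative.

```python
import math

logits = [0.1, 0.2, 0.3]  # raw network outputs for the three classes
target = 1                # class index of the target

# loss(x, class) = -x[class] + log(sum_j exp(x[j]))
log_sum_exp = math.log(sum(math.exp(v) for v in logits))
loss = -logits[target] + log_sum_exp

# Equivalent view: negative log of the softmax probability of the target class
prob = math.exp(logits[target]) / sum(math.exp(v) for v in logits)
loss_via_softmax = -math.log(prob)

print(round(loss, 4))              # 1.1019
print(round(loss_via_softmax, 4))  # 1.1019
```

Because the softmax is applied inside the loss, the network output passed to the loss is the raw scores, not probabilities.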

#交叉熵

input_data = torch.tensor([0.1,0.2,0.3])

target_data = torch.tensor([1])

#batch_size=1 有3个类别

input_data = torch.reshape(input_data,(1,3))

loss_cross = nn.CrossEntropyLoss()

result_cross = loss_cross(input_data,target_data)

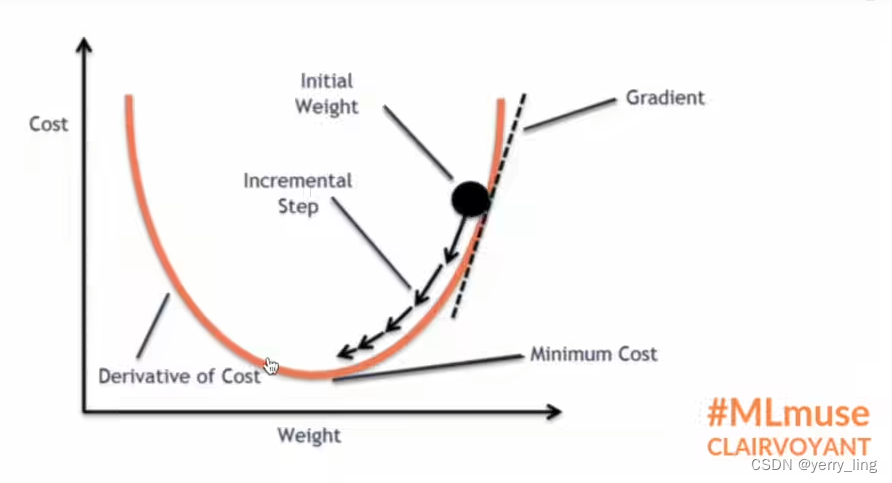

print(result_cross)反向传播

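Calling backward() on the loss produces a grad for every parameter; these gradients are used to adjust the parameters toward the minimum loss.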
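To make the role of grad concrete, here is a minimal sketch on the earlier three-element example. It treats the values themselves as a trainable tensor `w` (an assumption made for illustration, not something from the post), runs `backward()`, and takes one manual gradient-descent step with a step size of 0.1.

```python
import torch
from torch import nn

# Treat the "prediction" itself as a trainable tensor (illustrative setup)
w = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)
target = torch.tensor([1.0, 2.0, 5.0])
loss_fn = nn.MSELoss()

old_loss = loss_fn(w, target)
grad_before = w.grad   # None: no gradient exists until backward() is called

old_loss.backward()    # fills w.grad with d(loss)/d(w)
print(w.grad)          # equals 2 * (w - target) / 3, i.e. [0, 0, -4/3]

# One manual gradient-descent step: move against the gradient
with torch.no_grad():
    w -= 0.1 * w.grad

new_loss = loss_fn(w, target)
print(old_loss.item(), new_loss.item())  # the loss goes down after the step
```

An optimizer such as `torch.optim.SGD` automates the update step for all parameters of a network.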

Optimizer

Here the optimizer is applied to the network structure from the previous blog post.

    import torch
    import torchvision
    from torch import nn
    from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
    from torch.utils.data import DataLoader

    dataset = torchvision.datasets.CIFAR10("./dataset", train=False,
                                           transform=torchvision.transforms.ToTensor(),
                                           download=True)
    dataloader = DataLoader(dataset, batch_size=1)

    # Network structure
    class Test(nn.Module):
        def __init__(self):
            super(Test, self).__init__()
            self.module1 = Sequential(
                Conv2d(3, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 64, 5, padding=2),
                MaxPool2d(2),
                Flatten(),
                Linear(1024, 64),
                Linear(64, 10)
            )

        def forward(self, x):
            x = self.module1(x)
            return x

    loss = nn.CrossEntropyLoss()
    test = Test()
    optim = torch.optim.SGD(test.parameters(), lr=0.01)

    # Train for 20 epochs
    for epoch in range(20):
        running_loss = 0.0
        for data in dataloader:
            imgs, targets = data
            outputs = test(imgs)
            result_loss = loss(outputs, targets)
            optim.zero_grad()       # clear the gradients left over from the previous step
            result_loss.backward()  # compute the gradient of the loss for every parameter
            optim.step()            # update the parameters using those gradients
            print(result_loss)
            # accumulate a Python float so the autograd graph is not kept alive
            running_loss = running_loss + result_loss.item()
        print(running_loss)         # total loss over this epoch
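The CIFAR10 loop needs a dataset download and takes a while to run. The same zero_grad / backward / step pattern can be tried on a tiny synthetic problem first. In the sketch below, the linear model, the data (y = 3x + 1), the learning rate, and the step count are all assumptions chosen for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Synthetic data: 20 points on the line y = 3x + 1
x = torch.linspace(-1, 1, 20).unsqueeze(1)
y = 3 * x + 1

model = nn.Linear(1, 1)
loss_fn = nn.MSELoss()
optim = torch.optim.SGD(model.parameters(), lr=0.1)

losses = []
for step in range(100):
    pred = model(x)
    result_loss = loss_fn(pred, y)
    optim.zero_grad()        # clear old gradients
    result_loss.backward()   # compute new gradients
    optim.step()             # update weight and bias
    losses.append(result_loss.item())

print(losses[0], losses[-1])  # the loss at the last step is far below the first
```

If `optim.zero_grad()` is left out, gradients from earlier steps accumulate in each parameter's `grad` and the updates no longer follow the current loss.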

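This post introduced the role of loss functions in deep learning, including how L1Loss and MSELoss are computed and how cross entropy is used for classification. It also showed, through a working example with backpropagation and an optimizer (SGD), how to minimize the loss and train a neural network.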
