Using the Python requests module: GET, POST, file upload, and image scraping

1 Spoofing the browser fingerprint (User-Agent)

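import requests

url = "http://1.1.1.154/pythonSpider/index.html"

# Replace the default python-requests User-Agent with a crawler-style string (here Baiduspider).
headers = {
    "User-Agent": "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"
}

req = requests.Session()
res = req.get(url=url, headers=headers)

# Print the headers that were actually sent, to confirm the User-Agent was replaced.
print(res.request.headers)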

2 Sending a GET request

import requests

url = "http://1.1.1.154/test/1.php"

headers = {
    "User-Agent": "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"
}

req = requests.Session()

# params is URL-encoded and appended to the URL as the query string (?id=csm).
params = {
    'id': 'csm'
}

res = req.get(url=url, headers=headers, params=params)
print(res.text)
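To make the effect of `params` visible without sending anything, here is a minimal standard-library sketch of the URL that a GET request with these parameters ends up targeting. The helper name `build_get_url` is hypothetical and used only for illustration; requests does this encoding internally, and the final URL can be inspected on a real response via `res.url`.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

def build_get_url(base_url, params):
    """Append params to base_url as a URL-encoded query string (illustrative helper)."""
    return f"{base_url}?{urlencode(params)}"

full_url = build_get_url("http://1.1.1.154/test/1.php", {"id": "csm"})
print(full_url)

# Parse the query string back into a dict to check the round trip.
print(parse_qs(urlsplit(full_url).query))
```

This sketch assumes the base URL has no query string of its own; if it does, the new parameters would need to be joined with `&` instead of `?`.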

3 Sending a POST request

import requests

url = "http://1.1.1.154/test/1.php"

headers = {
    "User-Agent": "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"
}

req = requests.Session()

# data is sent in the request body as form fields.
data = {
    'id': 'csm'
}

res = req.post(url=url, headers=headers, data=data)
print(res.text)

4 File upload

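import requests
import bs4

url = "http://1.1.1.154/DVWA-2.0.1/vulnerabilities/upload/"

# The Cookie carries an existing DVWA session with the security level set to low.
headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:109.0) Gecko/20100101 Firefox/117.0",
    "Cookie": "security=low; PHPSESSID=cbg23venv9cp8hl2et169ct1tu",
    "Referer": "http://1.1.1.154/DVWA-2.0.1/vulnerabilities/upload/"
}

req = requests.Session()

# Ordinary form fields sent alongside the file.
data = {
    'MAX_FILE_SIZE': 100000,
    'Upload': 'Upload'
}

# files maps the form field name to (file name, file content, declared Content-Type).
files = {
    'uploaded': ('2.php', b'<?php phpinfo(); ?>', 'image/jpeg')
}

res = req.post(url=url, headers=headers, data=data, files=files)

# Parse the response and pull out the <pre> elements, which hold the upload result.
html = bs4.BeautifulSoup(res.text, 'lxml')
pre = html.find_all('pre')
print(pre)

pre = pre[0].text
print(pre)

# Everything before the first space is taken as the relative path of the uploaded file.
path = pre[0:pre.find(' ')]
print(f"path:{url}{path}")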
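The script above prints the page URL with the relative path simply appended, so any `../` segments stay in the output. As a hedged alternative, the sketch below resolves the relative path into a normalized absolute URL with `urllib.parse.urljoin`. The helper name `uploaded_file_url` is hypothetical, and the sample message is an assumed example of what the `<pre>` text might contain, not output captured from the server.

```python
from urllib.parse import urljoin

def uploaded_file_url(page_url, message):
    """Resolve the relative path at the start of message against page_url."""
    # Keep only the text before the first space, as the upload script does.
    relative_path = message.split(" ", 1)[0]
    return urljoin(page_url, relative_path)

page_url = "http://1.1.1.154/DVWA-2.0.1/vulnerabilities/upload/"
# Assumed sample of the <pre> text; the real wording depends on the server.
sample_message = "../../hackable/uploads/2.php succesfully uploaded!"

print(uploaded_file_url(page_url, sample_message))
```

`urljoin` collapses each `../` against the directory part of the page URL, which is the same resolution a browser would apply to the concatenated string.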

5 Handling server timeouts

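import requests

url = "http://1.1.1.154/test/1.php"

headers = {
    "User-Agent": "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"
}

req = requests.Session()

# 'sleep' is presumably read by the test page to delay its response by that many seconds.
data = {
    'id': 'csm',
    'sleep': '5'
}

try:
    # timeout=5: stop waiting if the server does not respond within 5 seconds.
    res = req.post(url=url, headers=headers, data=data, timeout=5)
except requests.exceptions.Timeout:
    print('Server timed out!')
else:
    print(res.text)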

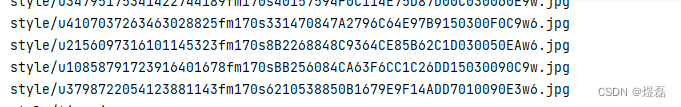

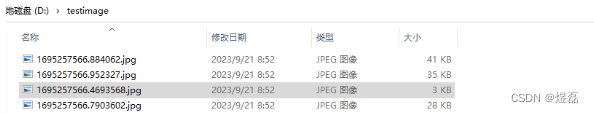

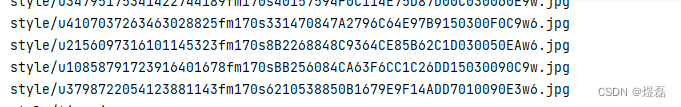

6 Scraping image files

import requests
import re
import time

url = "http://192.168.225.204:9000/pythonSpider/index.html"

def get_html(url):
    # Fetch the page and return the raw response body as bytes.
    res = requests.get(url=url)
    return res.content

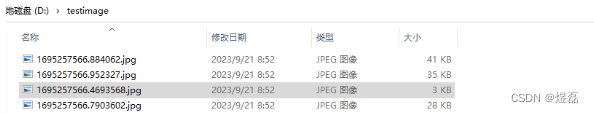

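def download(path):
    # Fetch the image bytes and save them under a timestamp-based file name.
    # The D:/testimage/ directory must already exist.
    resp = requests.get(url=path).content
    img_path = f"D:/testimage/{time.time()}.jpg"
    with open(img_path, "wb") as f:
        f.write(resp)

def get_img_path(url):
    html = get_html(url)
    # Collect relative image paths of the form style/<name>.jpg from the page source.
    img_urls = re.findall(r"style/\w*\.jpg", html.decode())
    for img_url in img_urls:
        print(img_url)
        # Absolute URL = directory of the page URL + relative image path.
        path = url[0:url.rfind('/') + 1] + img_url
        download(path)

get_img_path(url=url)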
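The extraction step can be tried offline before pointing the scraper at a live page. The sketch below applies the same regular expression to a small HTML string and builds absolute URLs with `urllib.parse.urljoin` instead of string slicing. The helper name `extract_image_urls` and the sample HTML are hypothetical, chosen only to illustrate the technique.

```python
import re
from urllib.parse import urljoin

def extract_image_urls(page_url, html):
    """Find style/<name>.jpg paths in html and resolve them against page_url."""
    relative_paths = re.findall(r"style/\w*\.jpg", html)
    return [urljoin(page_url, p) for p in relative_paths]

page_url = "http://192.168.225.204:9000/pythonSpider/index.html"
# Hypothetical page source; the real page layout is not shown in the article.
sample_html = '<img src="style/u1.jpg"><img src="style/u2.jpg"><img src="logo.png">'

for image_url in extract_image_urls(page_url, sample_html):
    print(image_url)
```

Note that `\w*` only matches letters, digits, and underscores, so a file name containing a hyphen or a dot would be truncated or missed by this pattern.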
