To access HDFS as a Hadoop client from Windows, you first need to install the Hadoop Windows dependencies (winutils) locally.

Download: https://gitee.com/fulsun/winutils-1/tree/master

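After downloading, extract the package to a local directory whose path contains no Chinese characters. Set that directory as the system variable `%HADOOP_HOME%` and add its `bin` subdirectory to `PATH`. Then run winutils to complete the installation.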
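You can check the setup with a small standalone program. The sketch below assumes the extracted directory contains `bin\winutils.exe`. The class `WinutilsCheck` is hypothetical and is not part of Hadoop. It only inspects the directory layout. It does not check whether the winutils build matches the hadoop-client version. As a conservative stand-in for "no Chinese characters", it rejects any non-ASCII character in the path.

```java
import java.io.File;

/**
 * Hypothetical helper (not part of Hadoop): sanity-checks the Windows setup
 * by inspecting HADOOP_HOME. It does not verify the winutils version.
 */
public class WinutilsCheck {

    /** Returns a description of the first problem found, or null if the layout looks correct. */
    static String validate(String hadoopHome) {
        if (hadoopHome == null || hadoopHome.isEmpty()) {
            return "HADOOP_HOME is not set";
        }
        // Conservative approximation of "no Chinese characters": reject any non-ASCII character
        for (char c : hadoopHome.toCharArray()) {
            if (c > 127) {
                return "HADOOP_HOME contains non-ASCII characters: " + hadoopHome;
            }
        }
        File home = new File(hadoopHome);
        if (!home.isDirectory()) {
            return "HADOOP_HOME is not a directory: " + hadoopHome;
        }
        // Assumed layout: %HADOOP_HOME%\bin\winutils.exe
        File winutils = new File(new File(home, "bin"), "winutils.exe");
        if (!winutils.isFile()) {
            return "missing " + winutils.getPath();
        }
        return null;
    }

    public static void main(String[] args) {
        String problem = validate(System.getenv("HADOOP_HOME"));
        System.out.println(problem == null ? "HADOOP_HOME looks OK" : "Problem: " + problem);
    }
}
```

Run it from the same environment as the IDE or the tests. Environment variables changed in the system settings are only visible to processes started afterwards.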

I. Creating the HDFS client

1) Maven configuration (`pom.xml`)

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.xxx</groupId>
    <artifactId>HDFSdemo</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
    </properties>

    <dependencies>
        <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-client -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.1.3</version>
        </dependency>

        <!-- https://mvnrepository.com/artifact/org.slf4j/slf4j-log4j12 -->
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.25</version>
            <scope>test</scope>
        </dependency>

        <!-- https://mvnrepository.com/artifact/junit/junit -->
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.13.2</version>
        </dependency>
    </dependencies>
</project>
```

2) Initializing and closing the FileSystem

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;   // used by the test methods added to this class
import org.junit.After;
import org.junit.Before;
import org.junit.Test;              // used by the test methods added to this class

import java.io.IOException;
import java.net.URI;

public class HdfsClient {

    private FileSystem fs;

    @Before
    public void configFileSystem() throws Exception {
        // Network address of the NameNode
        URI uri = new URI("hdfs://192.168.10.102:8020");
        Configuration config = new Configuration();
        // Client-side setting: overrides dfs.replication from the configuration files
        config.set("dfs.replication", "1");
        // Access HDFS as this user
        String username = "atguigu";
        fs = FileSystem.get(uri, config, username);
    }

    @After
    public void closeFileSystem() throws IOException {
        fs.close();
    }
}
```

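3) Using the FileSystem on the cluster: creating a directory and uploading a local file

Add the following test method to `HdfsClient`: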

```java
@Test
public void doSome() throws IOException {
    // Create /from_client01 in HDFS (missing parent directories are created as well)
    fs.mkdirs(new Path("/from_client01"));
}
```
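The heading also mentions uploading a local file, but the method above only creates a directory. Below is a minimal sketch of the upload using `FileSystem.copyFromLocalFile(delSrc, overwrite, src, dst)`. It assumes the same NameNode address, user, and replication setting as `HdfsClient`. The class name `HdfsUploadSketch`, the helper `upload`, and the local path `D:/data/hello.txt` are hypothetical.

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

import java.io.IOException;
import java.net.URI;

public class HdfsUploadSketch {

    /** Copies localFile into hdfsDir and returns the path of the uploaded file. */
    static Path upload(FileSystem fs, String localFile, String hdfsDir) throws IOException {
        Path src = new Path(localFile);
        Path dstDir = new Path(hdfsDir);
        // Make sure the target is an existing directory, so the file is copied into it
        fs.mkdirs(dstDir);
        // delSrc = false keeps the local file; overwrite = true replaces an existing target
        fs.copyFromLocalFile(false, true, src, dstDir);
        return new Path(dstDir, src.getName());
    }

    public static void main(String[] args) throws Exception {
        Configuration config = new Configuration();
        config.set("dfs.replication", "1");
        // FileSystem implements Closeable, so try-with-resources closes it
        try (FileSystem fs = FileSystem.get(new URI("hdfs://192.168.10.102:8020"), config, "atguigu")) {
            // Hypothetical local file on the Windows machine
            Path uploaded = upload(fs, "D:/data/hello.txt", "/from_client01");
            System.out.println("uploaded " + uploaded + ", exists = " + fs.exists(uploaded));
        }
    }
}
```

Inside `HdfsClient`, the same call fits in a `@Test` method that uses the shared `fs` field. There, the `@After` method takes care of closing.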

4) Modifying Hadoop configuration from the client side

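First, any program that communicates with the NameNode is a Hadoop client. This includes commands sent to the NameNode from a local Linux shell (for example `hdfs dfs`).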

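Second, Hadoop configuration values take precedence in this order: settings made on the client > user-defined configuration files (`*-site.xml`) > default configuration (`*-default.xml`).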

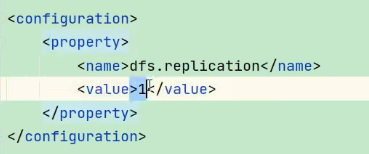
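The precedence rule amounts to "the first layer that defines a key wins". The toy model below shows this with plain maps. It illustrates the rule only and does not reflect how Hadoop's `Configuration` class is implemented. The class and method names are hypothetical. The value `2` for the user-defined file is made up. The value `3` is the `dfs.replication` default shipped in `hdfs-default.xml`.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;

/** Toy model of the precedence rule: layers are ordered from highest to lowest priority. */
public class ConfigPrecedence {

    static String resolve(String key, List<Map<String, String>> layersHighToLow) {
        for (Map<String, String> layer : layersHighToLow) {
            String value = layer.get(key);
            if (value != null) {
                return value; // first layer that defines the key wins
            }
        }
        return null; // key is not defined in any layer
    }

    public static void main(String[] args) {
        Map<String, String> clientCode = Collections.singletonMap("dfs.replication", "1");
        Map<String, String> siteXml = Collections.singletonMap("dfs.replication", "2"); // made-up value
        Map<String, String> defaults = Collections.singletonMap("dfs.replication", "3");
        Map<String, String> none = Collections.emptyMap();

        // The client-side value wins
        System.out.println(resolve("dfs.replication", Arrays.asList(clientCode, siteXml, defaults))); // prints 1
        // Without a client-side value, the user-defined file wins
        System.out.println(resolve("dfs.replication", Arrays.asList(none, siteXml, defaults))); // prints 2
    }
}
```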

Example: change the replication factor that Hadoop uses by default (`dfs.replication`, default 3) to 1.

Method 1:

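Place an `hdfs-site.xml` that sets `dfs.replication` to 1 in the project's `resources` directory, so that it is on the client's classpath.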

Method 2:

Set the parameter on the `Configuration` object that is passed in when the `FileSystem` is initialized:

```java
config.set("dfs.replication", "1");
```
