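Spark Shell is mostly used for testing and verifying programs. In production, you typically write the program in an IDE, package it as a jar, and submit the jar to the cluster. The most common setup is a Maven project, with Maven managing the jar dependencies.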

The basic WordCount example is used here to demonstrate the workflow:

I. Write the WordCount program

1. Create a Maven project named WordCount in IDEA and add the dependencies

<dependencies>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.11</artifactId>
        <version>2.4.0</version>
    </dependency>
</dependencies>

<build>
    <finalName>wordcount0731</finalName>
    <plugins>
        <plugin>
            <groupId>net.alchim31.maven</groupId>
            <artifactId>scala-maven-plugin</artifactId>
            <version>3.2.2</version>
            <executions>
                <execution>
                    <goals>
                        <goal>compile</goal>
                        <goal>testCompile</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-assembly-plugin</artifactId>
            <version>3.0.0</version>
            <configuration>
                <archive>
                    <manifest>
                        <mainClass>xsluo.WordCountScala</mainClass>
                    </manifest>
                </archive>
                <descriptorRefs>
                    <descriptorRef>jar-with-dependencies</descriptorRef>
                </descriptorRefs>
            </configuration>
            <executions>
                <execution>
                    <id>make-assembly</id>
                    <phase>package</phase>
                    <goals>
                        <goal>single</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
    </plugins>
</build>

2. Write the code

Scala code:

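package xsluo

import org.apache.spark.{SparkConf, SparkContext}

object WordCountScala {
  def main(args: Array[String]): Unit = {
    // local[*] runs the job on the local machine using all available cores
    val conf = new SparkConf().setAppName("WC").setMaster("local[*]")
    val sc = new SparkContext(conf)
    val rdd = sc.textFile("hdfs://192.168.2.100:9000/test/words.log")

    val array = rdd.flatMap(_.split(" ")) // split each line into words
      .map((_, 1))                        // pair each word with a count of 1
      .reduceByKey(_ + _)                 // sum the counts per word
      .map(x => (x._2, x._1))             // swap to (count, word) so the count becomes the key
      .sortByKey()                        // sort by count, ascending
      .map(x => (x._2, x._1))             // swap back to (word, count)
      .take(10)                           // keep the first 10 pairs

    for (elem <- array) {
      println(elem)
    }
    sc.stop()
  }
}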

Java code:

package xsluo;

import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.FlatMapFunction;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.api.java.function.PairFunction;
import org.apache.spark.api.java.function.VoidFunction;
import scala.Tuple2;

import java.util.Arrays;
import java.util.Iterator;

public class WordCountJava {
    public static void main(String[] args) {
        SparkConf sparkConf = new SparkConf().setAppName("wordCountApp").setMaster("local[*]");
        JavaSparkContext sc = new JavaSparkContext(sparkConf);
        JavaRDD<String> lines = sc.textFile("hdfs://192.168.2.100:9000/test/words.log");

        // Split each line into words
        JavaRDD<String> words = lines.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public Iterator<String> call(String line) throws Exception {
                return Arrays.asList(line.split(" ")).iterator();
            }
        });

        // Pair each word with a count of 1
        JavaPairRDD<String, Integer> pairs = words.mapToPair(new PairFunction<String, String, Integer>() {
            @Override
            public Tuple2<String, Integer> call(String word) throws Exception {
                return new Tuple2<>(word, 1);
            }
        });

        // Sum the counts per word
        JavaPairRDD<String, Integer> wordCounts = pairs.reduceByKey(new Function2<Integer, Integer, Integer>() {
            @Override
            public Integer call(Integer v1, Integer v2) throws Exception {
                return v1 + v2;
            }
        });

        // Print every (word, count) pair
        wordCounts.foreach(new VoidFunction<Tuple2<String, Integer>>() {
            @Override
            public void call(Tuple2<String, Integer> o) throws Exception {
                System.out.println(o._1 + " : " + o._2);
            }
        });

        sc.stop();
    }
}

II. Start Hadoop (HDFS + YARN) and the Spark services

The processes are as follows:

Prepare the test data:

[hadoop@weekend110 spark-2.4.0-bin-hadoop2.7]$ hdfs dfs -cat /test/words.log
20/07/31 05:54:54 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
aaa bbb ccc ddd
hahaha wwww
fff aaa ggg
ggg bbb

III. Run and debug

1. Local debugging:

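Debugging a Spark program locally requires the local submission mode, in which the local machine is the runtime environment and acts as both Master and Worker (the driver and the executor threads run in a single local JVM). You can then set breakpoints and debug directly at run time.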

For example, set an extra property when creating the SparkConf to indicate local execution:

val conf = new SparkConf().setAppName("WC").setMaster("local[*]")

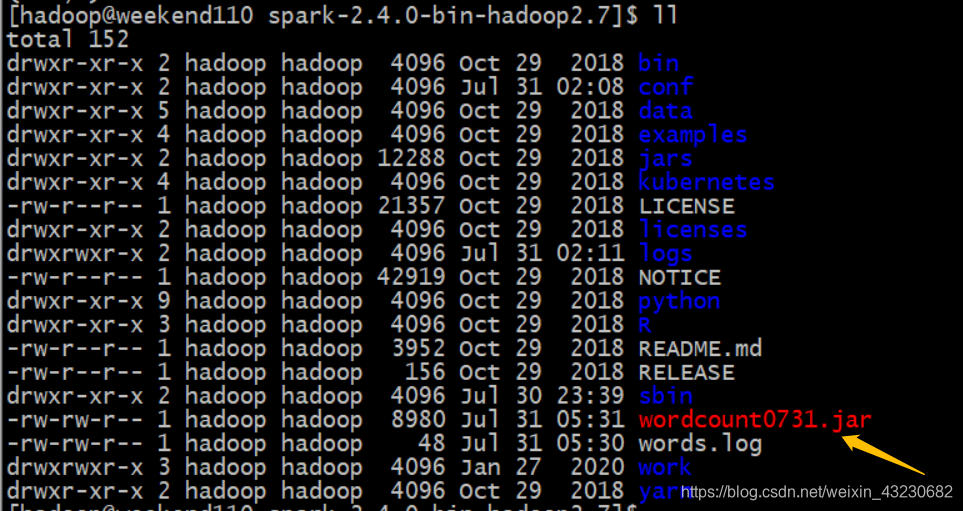
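One way to keep a single code path for both IDE runs and cluster submission is to fall back to `local[*]` only when no master was supplied from outside. The following is a minimal sketch in plain Java, not the author's method: `MasterSelector` and `chooseMaster` are hypothetical names, and it assumes that spark-submit exposes `--master` to the driver JVM as the `spark.master` system property (the same property that `new SparkConf()` normally picks up).

```java
// Hypothetical helper, not part of the original project.
final class MasterSelector {

    // Prefer the master supplied at submit time; fall back to local mode for IDE runs.
    static String chooseMaster(String submittedMaster) {
        if (submittedMaster == null || submittedMaster.trim().isEmpty()) {
            return "local[*]";
        }
        return submittedMaster.trim();
    }

    public static void main(String[] args) {
        // Assumption: spark-submit sets the "spark.master" system property in the
        // driver JVM; when the program is started from the IDE the property is unset.
        String master = chooseMaster(System.getProperty("spark.master"));
        System.out.println("master = " + master);
        // In the job itself this value would replace the hardcoded string, e.g.
        // new SparkConf().setAppName("WC").setMaster(master)
    }
}
```

With this in place, a run from the IDE would use `local[*]`, while a run started with `--master yarn` would keep `yarn`, provided the assumption about the system property holds in your deployment.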

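2. Package the project, upload the jar to the cluster, and submit it to YARN with spark-submit. Properties set on the SparkConf in code take precedence over spark-submit flags, so remove the hardcoded setMaster("local[*]") before packaging; otherwise the job still runs in local mode despite --master yarn.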

[hadoop@weekend110 spark-2.4.0-bin-hadoop2.7]$ bin/spark-submit --class xsluo.WordCountScala --master yarn wordcount0731.jar
20/07/31 05:32:19 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
(wwww,1)
(ddd,1)
(fff,1)
(hahaha,1)
(ccc,1)
(bbb,2)
(ggg,2)
(aaa,2)
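The counts printed above can be cross-checked without a cluster. The following is a minimal sketch in plain Java (no Spark) that counts the words in the same four sample lines and orders them by ascending count, mirroring the swap, sortByKey, swap steps of the Scala pipeline. The class `LocalWordCountCheck` and its methods are hypothetical and not part of the original project.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical helper class, not part of the original project.
final class LocalWordCountCheck {

    // Split each line on single spaces and count the occurrences of every word.
    static Map<String, Long> count(List<String> lines) {
        return lines.stream()
                .flatMap(line -> Arrays.stream(line.split(" ")))
                .collect(Collectors.groupingBy(w -> w, TreeMap::new, Collectors.counting()));
    }

    // Order the entries by ascending count, like sortByKey() on (count, word) pairs.
    static List<Map.Entry<String, Long>> sortedByCount(Map<String, Long> counts) {
        return counts.entrySet().stream()
                .sorted(Map.Entry.comparingByValue())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // The contents of /test/words.log shown earlier
        List<String> lines = Arrays.asList(
                "aaa bbb ccc ddd", "hahaha wwww", "fff aaa ggg", "ggg bbb");
        for (Map.Entry<String, Long> e : sortedByCount(count(lines))) {
            System.out.println("(" + e.getKey() + "," + e.getValue() + ")");
        }
    }
}
```

The order among words with the same count may differ from the Spark output, because sortByKey only orders by the count; the counts themselves should match.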
