Node selectors

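When you create a Pod, the scheduler (kube-scheduler) decides where it runs, and by default it may land on any worker node that can fit it. What if you want the Pod to run on one specific node, or only on nodes that share some characteristic?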

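Use the nodeName or nodeSelector field in the Pod spec to control which node the Pod lands on.

nodeName: pins the Pod to one specific node

Upload tomcat.tar.gz to k8snode1 and k8snode2, then import the image manually on each node: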

ctr -n=k8s.io images import tomcat.tar.gz

Upload busybox.tar.gz to k8snode1 and k8snode2, then import the image manually on each node:

ctr -n=k8s.io images import busybox.tar.gz

vim pod-node.yaml

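apiVersion: v1
kind: Pod
metadata:
  name: demo-pod
  namespace: default
  labels:
    app: myapp
    env: dev
spec:
  # Bind the Pod directly to this node; the scheduler is bypassed.
  nodeName: k8snode1
  containers:
  - name: tomcat-pod-java
    ports:
    - containerPort: 8080
    image: tomcat:8.5-jre8-alpine
    imagePullPolicy: IfNotPresent
  - name: busybox
    image: busybox:latest
    # Use the manually imported image; a :latest tag otherwise defaults to Always.
    imagePullPolicy: IfNotPresent
    command:
    - "/bin/sh"
    - "-c"
    - "sleep 3600"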

kubectl apply -f pod-node.yaml

Check which node the Pod was scheduled to:

kubectl get pods -o wide

NAME       READY   STATUS    RESTARTS   NODE
demo-pod   2/2     Running   0          k8snode1

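nodeSelector: schedules the Pod onto nodes that carry the given labels

If a Pod manifest sets both nodeName and nodeSelector, both conditions must hold: nodeName bypasses the scheduler and binds the Pod to that node directly, so if that node does not carry the labels in nodeSelector, the Pod will not run.

Label a node. Here k8snode2 gets the label disk=ceph: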

kubectl label nodes k8snode2 disk=ceph

In the Pod definition, require a node that carries the disk=ceph label:

vim pod-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: demo-pod-1
  namespace: default
  labels:
    app: myapp
    env: dev
spec:
  nodeSelector:
    disk: ceph
  containers:
  - name: tomcat-pod-java
    ports:
    - containerPort: 8080
    image: tomcat:8.5-jre8-alpine
    imagePullPolicy: IfNotPresent

kubectl apply -f pod-1.yaml

Check which node the Pod was scheduled to:

kubectl get pods -o wide

NAME         READY   STATUS    RESTARTS   NODE
demo-pod-1   1/1     Running   0          k8snode2
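The matching rule behind nodeSelector can be sketched in a few lines of Python. This is only a model of the rule (every key/value pair in the selector must be present on the node), not the scheduler's actual code; the label sets and the function name matches_node_selector are assumptions made for illustration.

```python
def matches_node_selector(node_labels: dict, node_selector: dict) -> bool:
    """Return True when every key/value pair in node_selector is on the node."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


# Hypothetical label sets mirroring the example above: only k8snode2 has disk=ceph.
nodes = {
    "k8snode1": {"kubernetes.io/hostname": "k8snode1"},
    "k8snode2": {"kubernetes.io/hostname": "k8snode2", "disk": "ceph"},
}

selector = {"disk": "ceph"}
eligible = [name for name, labels in nodes.items() if matches_node_selector(labels, selector)]
print(eligible)  # ['k8snode2']
```

An empty selector matches every node, which corresponds to a Pod that sets no nodeSelector at all.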

When the experiment is finished, delete the Pods created in the default namespace with kubectl delete pods <pod-name>:

kubectl delete pods demo-pod demo-pod-1

Remove the label from the node:

kubectl label nodes k8snode2 disk-

Affinity

Node affinity

Node affinity scheduling uses the nodeAffinity field. The built-in documentation describes it:

kubectl explain pods.spec.affinity

KIND:     Pod
VERSION:  v1

RESOURCE: affinity <Object>

DESCRIPTION:
     If specified, the pod's scheduling constraints

     Affinity is a group of affinity scheduling rules.

FIELDS:
   nodeAffinity <Object>      # node affinity
   podAffinity <Object>       # pod affinity
   podAntiAffinity <Object>   # pod anti-affinity

kubectl explain pods.spec.affinity.nodeAffinity

KIND:     Pod
VERSION:  v1

RESOURCE: nodeAffinity <Object>

DESCRIPTION:
     Describes node affinity scheduling rules for the pod.

     Node affinity is a group of node affinity scheduling rules.

FIELDS:
   preferredDuringSchedulingIgnoredDuringExecution <[]Object>
   requiredDuringSchedulingIgnoredDuringExecution <Object>

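preferred means the scheduler tries to find a node that satisfies the affinity rule defined here, but the rule is not mandatory. This is soft affinity.

required means a node must satisfy the affinity rule defined here. This is a hard condition: hard affinity.

Hard affinity with requiredDuringSchedulingIgnoredDuringExecution

Upload myapp-v1.tar.gz to k8snode1 and k8snode2, then import the image manually on each node: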

ctr -n=k8s.io images import myapp-v1.tar.gz

vim pod-nodeaffinity-demo.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-node-affinity-demo
  namespace: default
  labels:
    app: myapp
    tier: frontend
spec:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: zone
            operator: In
            values:
            - foo
            - bar
  containers:
  - name: myapp
    image: docker.io/ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent

The rule reads: if any node has a zone label whose value is foo or bar, the Pod may be scheduled onto that node.

kubectl apply -f pod-nodeaffinity-demo.yaml

kubectl get pods -o wide | grep pod-node

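pod-node-affinity-demo 0/1 Pending 0 <none>

The Pending status shows that scheduling has not completed: no node has a zone label with the value foo or bar, and because this is hard affinity the condition must be met before the Pod can be scheduled.

Label k8snode1 with zone=foo, then check again: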

kubectl label nodes k8snode1 zone=foo

kubectl get pods -o wide

pod-node-affinity-demo 1/1 Running 0 k8snode1
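The behavior seen above (Pending before the label, Running after it) follows from how the required rule is evaluated. The sketch below models that evaluation in Python, assuming the documented semantics that multiple nodeSelectorTerms are ORed and the matchExpressions inside one term are ANDed. The function names and the empty starting label sets are assumptions for illustration, and the Gt and Lt operators are left out.

```python
def expression_matches(labels, expr):
    """Evaluate one matchExpressions entry against a node's labels."""
    key, op = expr["key"], expr["operator"]
    values = expr.get("values", [])
    if op == "In":
        return key in labels and labels[key] in values
    if op == "NotIn":
        return labels.get(key) not in values
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    raise ValueError(f"operator not covered by this sketch: {op}")


def node_is_feasible(labels, node_selector_terms):
    """Terms are ORed; the expressions inside one term are ANDed."""
    return any(
        all(expression_matches(labels, e) for e in term["matchExpressions"])
        for term in node_selector_terms
    )


# The rule from pod-nodeaffinity-demo.yaml: zone In (foo, bar).
terms = [{"matchExpressions": [{"key": "zone", "operator": "In", "values": ["foo", "bar"]}]}]

# Hypothetical node labels before and after `kubectl label nodes k8snode1 zone=foo`.
before = {"k8snode1": {}, "k8snode2": {}}
after = {"k8snode1": {"zone": "foo"}, "k8snode2": {}}


def feasible_nodes(nodes):
    return [name for name, labels in nodes.items() if node_is_feasible(labels, terms)]


print(feasible_nodes(before))  # [] -> the Pod stays Pending
print(feasible_nodes(after))   # ['k8snode1']
```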

Delete the Pod created from pod-nodeaffinity-demo.yaml:

kubectl delete -f pod-nodeaffinity-demo.yaml

Soft affinity with preferredDuringSchedulingIgnoredDuringExecution

vim pod-nodeaffinity-demo-2.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-node-affinity-demo-2
  namespace: default
  labels:
    app: myapp
    tier: frontend
spec:
  containers:
  - name: myapp
    image: docker.io/ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent
  affinity:
    nodeAffinity:
      preferredDuringSchedulingIgnoredDuringExecution:
      - preference:
          matchExpressions:
          - key: zone1
            operator: In
            values:
            - foo1
            - bar1
        weight: 10
      - preference:
          matchExpressions:
          - key: zone2
            operator: In
            values:
            - foo2
            - bar2
        weight: 20

kubectl apply -f pod-nodeaffinity-demo-2.yaml

kubectl get pods -o wide |grep demo-2

pod-node-affinity-demo-2 1/1 Running 0 k8snode1

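This shows that with soft affinity the Pod still runs, even though no node carries the zone1 or zone2 label.

Node affinity describes the relationship between a Pod and nodes: the conditions a node should match when the Pod is scheduled.

When the test is done, delete the Pod created from pod-nodeaffinity-demo-2.yaml: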

kubectl delete -f pod-nodeaffinity-demo-2.yaml

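weight

weight is a relative weight in the range 1-100. For each node, the scheduler adds up the weights of the preferences that node satisfies, and nodes with a higher total are favored.

Suppose k8snode1 and k8snode2 are labeled as follows: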

kubectl label nodes k8snode1 zone1=foo1

kubectl label nodes k8snode2 zone2=foo2

vim pod-nodeaffinity-demo-2.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-node-affinity-demo-2
  namespace: default
  labels:
    app: myapp
    tier: frontend
spec:
  containers:
  - name: myapp
    image: docker.io/ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent
  affinity:
    nodeAffinity:
      preferredDuringSchedulingIgnoredDuringExecution:
      - preference:
          matchExpressions:
          - key: zone1
            operator: In
            values:
            - foo1
            - bar1
        weight: 10
      - preference:
          matchExpressions:
          - key: zone2
            operator: In
            values:
            - foo2
            - bar2
        weight: 20

kubectl apply -f pod-nodeaffinity-demo-2.yaml

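Both k8snode1 and k8snode2 are eligible, and each carries one of the preferred labels. The zone2=foo2 preference has the higher weight (20 versus 10), so, other things being equal, the Pod is scheduled onto k8snode2 in preference to k8snode1.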
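The weight arithmetic can be sketched as follows. This models only the node-affinity part of scoring: the real scheduler normalizes this value and combines it with the scores of other plugins, so treat the result as an illustration of why k8snode2 wins here, not as the final node score. The function name preference_score is hypothetical, and only the In operator is handled.

```python
def preference_score(labels, preferred_terms):
    """Sum the weights of all preferences whose expressions the node satisfies."""
    total = 0
    for term in preferred_terms:
        expressions = term["preference"]["matchExpressions"]
        # Only the In operator is modeled; a missing label never matches.
        if all(labels.get(e["key"]) in e["values"] for e in expressions):
            total += term["weight"]
    return total


# The two preferences from pod-nodeaffinity-demo-2.yaml.
preferred = [
    {"weight": 10, "preference": {"matchExpressions": [
        {"key": "zone1", "operator": "In", "values": ["foo1", "bar1"]}]}},
    {"weight": 20, "preference": {"matchExpressions": [
        {"key": "zone2", "operator": "In", "values": ["foo2", "bar2"]}]}},
]

# Labels applied with `kubectl label nodes` in the text above.
nodes = {
    "k8snode1": {"zone1": "foo1"},
    "k8snode2": {"zone2": "foo2"},
}

scores = {name: preference_score(labels, preferred) for name, labels in nodes.items()}
print(scores)                       # {'k8snode1': 10, 'k8snode2': 20}
print(max(scores, key=scores.get))  # k8snode2
```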

Delete the Pod and the labels:

kubectl delete -f pod-nodeaffinity-demo-2.yaml

kubectl label nodes k8snode1 zone1-

kubectl label nodes k8snode2 zone2-

Check that no Pods are left over:

kubectl get pods -owide

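Pod affinity

Pod-to-Pod affinity scheduling comes in two forms:

- podAffinity: Pods prefer to be placed together. Related Pods are put in nearby locations, such as the same zone or the same rack, so they can communicate more efficiently. For example, with two data centers and a cluster of 1000 hosts across them, you may want nginx and tomcat deployed on nodes in the same place to improve communication efficiency.

- podAntiAffinity: Pods prefer to be kept apart. If you deploy two sets of applications, anti-affinity keeps them from affecting each other.

The first Pod is placed on whatever node the scheduler chooses, and that placement becomes the reference for deciding whether later Pods may run in the same location. This raises a question: how do we decide which nodes count as the same location and which do not? Pod affinity needs a criterion for that, and the criterion is a node label key (topologyKey). In the examples below it is the node name: nodes with the same name are the same location, and nodes with different names are different locations.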

kubectl explain pods.spec.affinity.podAffinity

KIND:     Pod
VERSION:  v1

RESOURCE: podAffinity <Object>

DESCRIPTION:
     Describes pod affinity scheduling rules (e.g. co-locate this pod in the
     same node, zone, etc. as some other pod(s)).

     Pod affinity is a group of inter pod affinity scheduling rules.

FIELDS:
   preferredDuringSchedulingIgnoredDuringExecution <[]Object>
   requiredDuringSchedulingIgnoredDuringExecution <[]Object>

requiredDuringSchedulingIgnoredDuringExecution: hard affinity

preferredDuringSchedulingIgnoredDuringExecution: soft affinity

kubectl explain pods.spec.affinity.podAffinity.requiredDuringSchedulingIgnoredDuringExecution

KIND:     Pod
VERSION:  v1

RESOURCE: requiredDuringSchedulingIgnoredDuringExecution <[]Object>

DESCRIPTION:

FIELDS:
   labelSelector <Object>
   namespaces <[]string>
   topologyKey <string> -required-

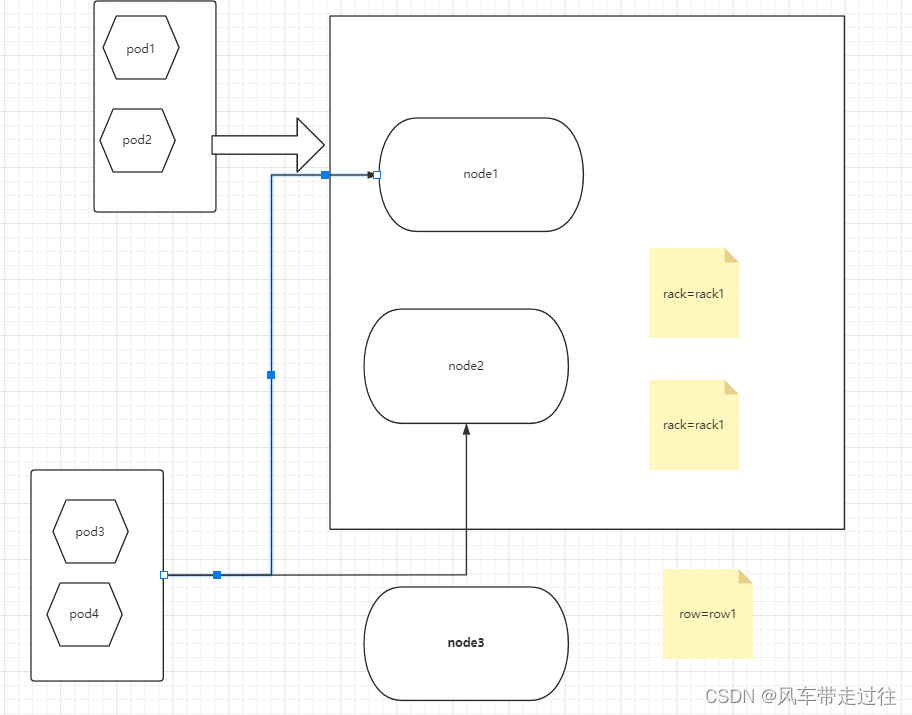

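topologyKey:

The key of the node label that defines a location (topology domain). This field is required.

How to decide whether two nodes are in the same location, given node labels such as:

rack=rack1

row=row1

With rack as the key, nodes that share the same rack value are the same location.

With row as the key, nodes that share the same row value are the same location.

labelSelector:

Affinity is always relative to other Pods, and labelSelector decides which ones: it selects the group of Pods that act as the affinity target.

namespaces:

labelSelector has to select Pods from some namespace, and namespaces says which. If namespaces is not set, the namespace of the Pod being created is used.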
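To make the idea of a location concrete, the sketch below groups nodes into topology domains by the value of a chosen label key. The node names and the rack/row labels are hypothetical, and topology_domains is a helper invented for this illustration, not a Kubernetes API.

```python
from collections import defaultdict


def topology_domains(nodes, topology_key):
    """Group node names by the value of the topology_key label."""
    domains = defaultdict(list)
    for name, labels in nodes.items():
        # A node without the label belongs to no domain for this key.
        if topology_key in labels:
            domains[labels[topology_key]].append(name)
    return dict(domains)


# Hypothetical cluster: three nodes in one row, spread over two racks.
nodes = {
    "node-a": {"rack": "rack1", "row": "row1"},
    "node-b": {"rack": "rack2", "row": "row1"},
    "node-c": {"rack": "rack2", "row": "row1"},
}

print(topology_domains(nodes, "rack"))  # {'rack1': ['node-a'], 'rack2': ['node-b', 'node-c']}
print(topology_domains(nodes, "row"))   # {'row1': ['node-a', 'node-b', 'node-c']}
```

The same three nodes form two locations under rack and a single location under row, which is why the choice of topologyKey changes the outcome of an affinity rule.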

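Define two Pods: the first is the reference, and the second follows it.

List the Pods in the default namespace and delete any that you see, so the namespace is empty:

kubectl get pods

The second Pod must land on the same node as a Pod labeled app2=myapp2: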

vim pod-required-affinity-demo-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-first
  labels:
    app2: myapp2
    tier: frontend
spec:
  containers:
  - name: myapp
    image: ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent

kubectl apply -f pod-required-affinity-demo-1.yaml

vim pod-required-affinity-demo-2.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-second
  labels:
    app: backend
    tier: db
spec:
  containers:
  - name: busybox
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    command: ["sh","-c","sleep 3600"]
  affinity:
    podAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - {key: app2, operator: In, values: ["myapp2"]}
        topologyKey: kubernetes.io/hostname

kubectl apply -f pod-required-affinity-demo-2.yaml

Wherever the first Pod is scheduled, the second Pod is scheduled too. This is pod affinity.

kubectl get pods -o wide

pod-first    Running   k8snode2
pod-second   Running   k8snode2
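The required podAffinity rule can be modeled as a filter: find the topology domains that already run a Pod matching the labelSelector, then keep only the nodes inside those domains. The Python sketch below assumes a simple equality selector and a hypothetical in-memory view of the cluster; pod_affinity_nodes is an illustrative name, not part of any Kubernetes client.

```python
def pod_affinity_nodes(nodes, pods, selector, topology_key):
    """Return the nodes that share a topology domain with a matching Pod."""
    domains = set()
    for pod in pods:
        if all(pod["labels"].get(k) == v for k, v in selector.items()):
            domains.add(nodes[pod["node"]].get(topology_key))
    domains.discard(None)  # a node without the label defines no domain
    return [name for name, labels in nodes.items() if labels.get(topology_key) in domains]


# Hypothetical state after pod-first has been scheduled onto k8snode2.
nodes = {
    "k8snode1": {"kubernetes.io/hostname": "k8snode1"},
    "k8snode2": {"kubernetes.io/hostname": "k8snode2"},
}
pods = [{"name": "pod-first", "node": "k8snode2", "labels": {"app2": "myapp2", "tier": "frontend"}}]

feasible = pod_affinity_nodes(nodes, pods, {"app2": "myapp2"}, "kubernetes.io/hostname")
print(feasible)  # ['k8snode2']
```

Because the topology key is the hostname, each node is its own domain, so pod-second can only follow pod-first onto k8snode2.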

Delete the test Pods:

kubectl delete -f pod-required-affinity-demo-1.yaml

kubectl delete -f pod-required-affinity-demo-2.yaml

Pod anti-affinity

Define two Pods: the first is the reference, and the second must be scheduled onto a different node.

vim pod-required-anti-affinity-demo-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-first
  labels:
    app1: myapp1
    tier: frontend
spec:
  containers:
  - name: myapp
    image: ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent

kubectl apply -f pod-required-anti-affinity-demo-1.yaml

vim pod-required-anti-affinity-demo-2.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-second
  labels:
    app: backend
    tier: db
spec:
  containers:
  - name: busybox
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    command: ["sh","-c","sleep 3600"]
  affinity:
    podAntiAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - {key: app1, operator: In, values: ["myapp1"]}
        topologyKey: kubernetes.io/hostname

kubectl apply -f pod-required-anti-affinity-demo-2.yaml

The two Pods are on different nodes. This is pod anti-affinity.

kubectl get pods -o wide

pod-first    Running   k8snode1
pod-second   Running   k8snode2

Delete the test Pods:

kubectl delete -f pod-required-anti-affinity-demo-1.yaml

kubectl delete -f pod-required-anti-affinity-demo-2.yaml

topologyKey: the topology key

kubectl label nodes k8snode2 zone=foo

kubectl label nodes k8snode1 zone=foo

vim pod-first-required-anti-affinity-demo-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-first
  labels:
    app3: myapp3
    tier: frontend
spec:
  containers:
  - name: myapp
    image: ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent

kubectl apply -f pod-first-required-anti-affinity-demo-1.yaml

vim pod-second-required-anti-affinity-demo-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-second
  labels:
    app: backend
    tier: db
spec:
  containers:
  - name: busybox
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    command: ["sh","-c","sleep 3600"]
  affinity:
    podAntiAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - {key: app3, operator: In, values: ["myapp3"]}
        topologyKey: zone

kubectl apply -f pod-second-required-anti-affinity-demo-1.yaml

kubectl get pods -o wide

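The output looks like this:

pod-first    Running   k8snode1
pod-second   Pending   <none>

The second Pod is Pending: both nodes carry zone=foo, so they are the same location, no node in a different location exists, and the anti-affinity rule is required. If required is changed to preferred in the anti-affinity rule, the Pod will run.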
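The difference between the two anti-affinity experiments comes down to the topology key. The sketch below models required anti-affinity as a filter that excludes every node in a domain already running a matching Pod, then evaluates the same cluster under both keys. The cluster state and the function name anti_affinity_nodes are assumptions for illustration, and treating a node without the topology label as allowed is a simplification of this sketch.

```python
def anti_affinity_nodes(nodes, pods, selector, topology_key):
    """Return the nodes outside every topology domain that runs a matching Pod."""
    blocked = set()
    for pod in pods:
        if all(pod["labels"].get(k) == v for k, v in selector.items()):
            blocked.add(nodes[pod["node"]].get(topology_key))
    blocked.discard(None)  # a node without the label defines no domain
    return [name for name, labels in nodes.items() if labels.get(topology_key) not in blocked]


# Hypothetical state: both nodes labeled zone=foo, pod-first running on k8snode1.
nodes = {
    "k8snode1": {"kubernetes.io/hostname": "k8snode1", "zone": "foo"},
    "k8snode2": {"kubernetes.io/hostname": "k8snode2", "zone": "foo"},
}
pods = [{"name": "pod-first", "node": "k8snode1", "labels": {"app3": "myapp3"}}]
selector = {"app3": "myapp3"}

print(anti_affinity_nodes(nodes, pods, selector, "zone"))                    # [] -> Pending
print(anti_affinity_nodes(nodes, pods, selector, "kubernetes.io/hostname"))  # ['k8snode2']
```

With zone as the key the whole cluster is one domain, so nothing is left for pod-second; with the hostname as the key only k8snode1 is excluded.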

kubectl delete -f pod-first-required-anti-affinity-demo-1.yaml

kubectl delete -f pod-second-required-anti-affinity-demo-1.yaml

Remove the labels:

kubectl label nodes k8snode1 zone-

kubectl label nodes k8snode2 zone-

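- podAffinity: pod affinity, which Pods this Pod prefers to be near

- podAntiAffinity: pod anti-affinity, which Pods this Pod prefers to avoid

- nodeAffinity: node affinity, which nodes this Pod prefers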
