Flink per-job submission fails with a "container exceeds memory limit" error

Submit the Flink job to YARN:

[hadoop@hadoop1 flink-on-yarn]$ bin/flink run -m yarn-cluster -p 2 -ys 2 -yjm 1024 -ytm 1024 -c org.apache.flink.examples.java.wordcount.WordCount examples/batch/WordCount.jar --input hdfs://mycluster/test/words.log --output hdfs://mycluster/test/out

The job fails with an error, as shown in the figure below:

Solution:

Configure yarn-site.xml:

<property>
  <name>yarn.nodemanager.resource.memory-mb</name>
  <value>6000</value>
</property>
<property>
  <name>yarn.scheduler.minimum-allocation-mb</name>
  <value>2048</value>
</property>
<property>
  <name>yarn.nodemanager.vmem-pmem-ratio</name>
  <value>2.1</value><!-- custom value -->
</property>
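
As a rough illustration of how these three yarn-site.xml values interact, the sketch below computes the container size YARN would grant for the 1024 MB requested with -yjm/-ytm, and the virtual-memory ceiling derived from yarn.nodemanager.vmem-pmem-ratio. The function names are hypothetical, and rounding up to a multiple of the minimum allocation is a simplification: the actual normalization depends on the scheduler and resource calculator in use.

```python
import math


def normalize_request_mb(requested_mb: int, min_allocation_mb: int) -> int:
    """Round a memory request up to a multiple of the scheduler minimum.

    Assumption: the scheduler increment equals
    yarn.scheduler.minimum-allocation-mb (a simplified model).
    """
    multiples = max(1, math.ceil(requested_mb / min_allocation_mb))
    return multiples * min_allocation_mb


def vmem_limit_mb(container_mb: int, vmem_pmem_ratio: float) -> float:
    """Virtual-memory ceiling for a container of the given physical size."""
    return container_mb * vmem_pmem_ratio


def containers_per_node(node_memory_mb: int, container_mb: int) -> int:
    """How many containers of this size fit on one NodeManager."""
    return node_memory_mb // container_mb


if __name__ == "__main__":
    # Values taken from the command line and yarn-site.xml in this post.
    container = normalize_request_mb(1024, 2048)
    print("container size (MB):", container)
    print("vmem limit (MB):", vmem_limit_mb(container, 2.1))
    print("containers per node:", containers_per_node(6000, container))
```

Under this model, a 1024 MB request becomes a 2048 MB container with a virtual-memory limit of roughly 4300 MB, and a 6000 MB NodeManager can host two such containers.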

Configure mapred-site.xml:

<property>
  <name>mapreduce.reduce.memory.mb</name>
  <value>4096</value>
</property>
<property>
  <name>mapreduce.map.memory.mb</name>
  <value>4096</value>
</property>
<property>
  <name>mapreduce.map.java.opts</name>
  <value>-Xmx3072m</value>
</property>
<property>
  <name>mapreduce.reduce.java.opts</name>
  <value>-Xmx3072m</value>
</property>

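Choose the memory values above according to the memory available on the hosts in your own cluster; every host in my cluster has 8 GB. Note that the heap sizes set in mapreduce.map.java.opts and mapreduce.reduce.java.opts must be smaller than mapreduce.map.memory.mb and mapreduce.reduce.memory.mb respectively.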
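
The heap-versus-container constraint can be checked mechanically. The following is a minimal sketch, not part of Hadoop: the helper names `xmx_mb` and `heap_fits` are hypothetical, and it only understands -Xmx values written with a k, m, or g suffix.

```python
import re


def xmx_mb(java_opts: str) -> int:
    """Extract the -Xmx heap size from a java.opts string, in MB.

    Assumption: the value carries a k/m/g suffix; a bare byte count
    (which the JVM also accepts) is rejected here for simplicity.
    """
    match = re.search(r"-Xmx(\d+)([kKmMgG])\b", java_opts)
    if match is None:
        raise ValueError("no -Xmx option with a k/m/g suffix found")
    value = int(match.group(1))
    unit = match.group(2).lower()
    if unit == "k":
        return value // 1024
    if unit == "g":
        return value * 1024
    return value


def heap_fits(java_opts: str, container_mb: int) -> bool:
    """True if the JVM heap is strictly smaller than the container size."""
    return xmx_mb(java_opts) < container_mb


if __name__ == "__main__":
    # Values from the mapred-site.xml settings in this post.
    print(heap_fits("-Xmx3072m", 4096))
```

With the settings above, the 3072 MB heap leaves 1024 MB of the 4096 MB container for non-heap JVM memory.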

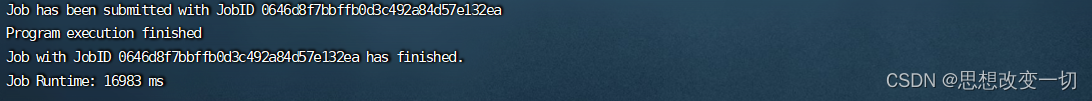

After applying this configuration, the job runs successfully:

Reference: HOW TO PLAN AND CONFIGURE YARN AND MAPREDUCE 2 IN HDP 2.0
