3. DataNode timeout parameter settings

Note that in the hdfs-site.xml configuration file, dfs.namenode.heartbeat.recheck-interval is specified in milliseconds, while dfs.heartbeat.interval is specified in seconds.

<property>

<name>dfs.namenode.heartbeat.recheck-interval</name>

<value>300000</value>

</property>

<property>

<name>dfs.heartbeat.interval</name>

<value>3</value>

</property>
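
To illustrate why the two units matter: the NameNode is commonly described as declaring a DataNode dead after 2 × dfs.namenode.heartbeat.recheck-interval + 10 × dfs.heartbeat.interval. The sketch below computes that timeout for the values configured above. The function name datanode_timeout_ms is hypothetical, and the formula is stated as an assumption about default behavior rather than something spelled out in this section.

```shell
# Hypothetical helper: compute the DataNode dead-node timeout in milliseconds.
# $1 = dfs.namenode.heartbeat.recheck-interval (milliseconds)
# $2 = dfs.heartbeat.interval (seconds)
datanode_timeout_ms() {
    # The heartbeat interval is in seconds, so convert it to milliseconds
    # before adding it to the recheck interval.
    echo $(( 2 * $1 + 10 * $2 * 1000 ))
}

datanode_timeout_ms 300000 3   # prints 630000, i.e. 10 minutes 30 seconds
```

Mixing up the units (for example, writing 300 instead of 300000 for the recheck interval) would shrink the timeout to roughly 30 seconds under this formula, so it is worth double-checking both values.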

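4. Commissioning a new DataNode

- Requirement

As the company's business grows, the data volume keeps increasing and the capacity of the existing DataNodes can no longer meet storage needs, so new DataNodes must be added dynamically to the existing cluster.

- Environment preparation

(1) Clone a new host, hadoop105, from the hadoop104 host

(2) Change the IP address and hostname

(3) Delete the files left over from the original HDFS file system (the data and logs directories under /opt/module/hadoop-2.7.2)

(4) Source the profile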

[hadoop@hadoop105 hadoop-2.7.2]$ source /etc/profile

- Steps for commissioning the new node

(1) Start the DataNode directly; it joins the cluster automatically

[hadoop@hadoop105 hadoop-2.7.2]$ sbin/hadoop-daemon.sh start datanode

[hadoop@hadoop105 hadoop-2.7.2]$ sbin/yarn-daemon.sh start nodemanager

(2) Upload a file from hadoop105

[hadoop@hadoop105 hadoop-2.7.2]$ hadoop fs -put /opt/module/hadoop-2.7.2/LICENSE.txt /

(3) If the data is unbalanced, rebalance the cluster with the following command

[hadoop@hadoop102 sbin]$ ./start-balancer.sh

starting balancer, logging to /opt/module/hadoop-2.7.2/logs/hadoop-atguigu-balancer-hadoop102.out

Time Stamp Iteration# Bytes Already Moved Bytes Left To Move Bytes Being Moved
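
The environment-preparation step of removing leftover HDFS state from the cloned host can be sketched as below. It assumes the data and logs directories sit directly under the Hadoop installation directory, as in this walkthrough. The function name clean_cloned_state is hypothetical. The cleanup is needed because the clone carries over the storage state of hadoop104, which would likely conflict with the original node if reused.

```shell
# Hypothetical helper: remove leftover HDFS data and logs from a cloned host.
# $1 = Hadoop installation directory (e.g. /opt/module/hadoop-2.7.2)
clean_cloned_state() {
    hadoop_home=$1
    # Refuse an empty or missing path so rm never operates on "/data"
    if [ -z "$hadoop_home" ] || [ ! -d "$hadoop_home" ]; then
        echo "not a directory: $hadoop_home" >&2
        return 1
    fi
    rm -rf "$hadoop_home/data" "$hadoop_home/logs"
}

# Usage on the new node, before starting the DataNode:
#   clean_cloned_state /opt/module/hadoop-2.7.2
```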

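5. Decommissioning old DataNodes (whitelist)

Hosts on the whitelist are allowed to access the NameNode; hosts not on the whitelist are removed from the cluster.

To configure the whitelist:

(1) Create a dfs.hosts file in the /opt/module/hadoop-2.7.2/etc/hadoop directory on the NameNode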

[hadoop@hadoop102 hadoop]$ pwd

/opt/module/hadoop-2.7.2/etc/hadoop

[hadoop@hadoop102 hadoop]$ touch dfs.hosts

[hadoop@hadoop102 hadoop]$ vi dfs.hosts

Add the following hostnames (do not add hadoop105)

hadoop102

hadoop103

hadoop104

(2) Add the dfs.hosts property to the hdfs-site.xml configuration file on the NameNode

<property>

<name>dfs.hosts</name>

<value>/opt/module/hadoop-2.7.2/etc/hadoop/dfs.hosts</value>

</property>

(3) Distribute the configuration file

[hadoop@hadoop102 hadoop]$ xsync hdfs-site.xml

(4) Refresh the NameNode

[hadoop@hadoop102 hadoop-2.7.2]$ hdfs dfsadmin -refreshNodes

Refresh nodes successful

(5) Refresh the ResourceManager

[hadoop@hadoop102 hadoop-2.7.2]$ yarn rmadmin -refreshNodes

17/06/24 14:17:11 INFO client.RMProxy: Connecting to ResourceManager at hadoop103/192.168.1.103:8033

(6) Check the result in the web browser

(7) If the data is unbalanced, rebalance the cluster with the following command

[hadoop@hadoop102 sbin]$ ./start-balancer.sh

starting balancer, logging to /opt/module/hadoop-2.7.2/logs/hadoop-atguigu-balancer-hadoop102.out

Time Stamp Iteration# Bytes Already Moved Bytes Left To Move Bytes Being Moved
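
The whitelist file from step (1) can also be generated by a script rather than edited by hand. The following is a minimal sketch; the function names write_whitelist and is_whitelisted are hypothetical, and the one-hostname-per-line layout follows the file contents shown above.

```shell
# Hypothetical helper: write a whitelist file with one hostname per line.
# $1 = path of the whitelist file, remaining arguments = allowed hostnames
write_whitelist() {
    file=$1
    shift
    # printf reuses the format for each argument, giving one host per line
    printf '%s\n' "$@" > "$file"
}

# Hypothetical helper: succeed only if the host appears as a whole line.
# $1 = path of the whitelist file, $2 = hostname to look up
is_whitelisted() {
    grep -qxF -- "$2" "$1"
}

# Usage (afterwards, distribute hdfs-site.xml and refresh the nodes as above):
#   write_whitelist /opt/module/hadoop-2.7.2/etc/hadoop/dfs.hosts hadoop102 hadoop103 hadoop104
#   is_whitelisted /opt/module/hadoop-2.7.2/etc/hadoop/dfs.hosts hadoop105 || echo "hadoop105 is excluded"
```

Matching whole lines with grep -x avoids treating a prefix such as hadoop10 as a hit for hadoop102.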

6. Decommissioning old DataNodes (blacklist)

1) Create a dfs.hosts.exclude file in the /opt/module/hadoop-2.7.2/etc/hadoop directory on the NameNode

[hadoop@hadoop102 hadoop]$ pwd

/opt/module/hadoop-2.7.2/etc/hadoop

[hadoop@hadoop102 hadoop]$ touch dfs.hosts.exclude

[hadoop@hadoop102 hadoop]$ vi dfs.hosts.exclude

Add the following hostname (the node to decommission)

hadoop105

2) Add the dfs.hosts.exclude property to the hdfs-site.xml configuration file on the NameNode

<property>

<name>dfs.hosts.exclude</name>

<value>/opt/module/hadoop-2.7.2/etc/hadoop/dfs.hosts.exclude</value>

</property>

3) Refresh the NameNode and the ResourceManager

[hadoop@hadoop102 hadoop-2.7.2]$ hdfs dfsadmin -refreshNodes

Refresh nodes successful

[hadoop@hadoop102 hadoop-2.7.2]$ yarn rmadmin -refreshNodes

17/06/24 14:55:56 INFO client.RMProxy: Connecting to ResourceManager at hadoop103/192.168.1.103:8033

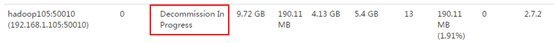

4) Check the web browser: the node's state is "decommission in progress", which means the DataNode is copying its blocks to other nodes.

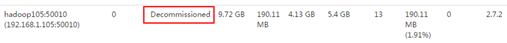

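5) Wait until the node's state becomes "decommissioned" (all blocks have been copied), then stop the DataNode and its NodeManager. Note: if the replication factor is 3 and the number of in-service nodes is less than or equal to 3, decommissioning cannot succeed; reduce the replication factor first.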

[hadoop@hadoop105 hadoop-2.7.2]$ sbin/hadoop-daemon.sh stop datanode

stopping datanode

[hadoop@hadoop105 hadoop-2.7.2]$ sbin/yarn-daemon.sh stop nodemanager

stopping nodemanager

6) If the data is unbalanced, rebalance the cluster with the following command

[hadoop@hadoop102 hadoop-2.7.2]$ sbin/start-balancer.sh

starting balancer, logging to /opt/module/hadoop-2.7.2/logs/hadoop-atguigu-balancer-hadoop102.out

Time Stamp Iteration# Bytes Already Moved Bytes Left To Move Bytes Being Moved
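
The note in step 5 about the replication factor can be turned into a quick pre-check before adding a node to dfs.hosts.exclude. This is a sketch under the assumption that exactly one node is being decommissioned; the function name can_decommission is hypothetical, and both numbers are supplied by hand rather than read from the cluster.

```shell
# Hypothetical helper: can one node be decommissioned without leaving
# fewer nodes than the replication factor?
# $1 = replication factor (dfs.replication), $2 = nodes currently in service
can_decommission() {
    replication=$1
    in_service=$2
    # After removing one node, at least $replication nodes must remain
    [ $(( in_service - 1 )) -ge "$replication" ]
}

if can_decommission 3 4; then
    echo "safe to decommission"
else
    echo "lower the replication factor first"
fi
# prints "safe to decommission"
```

With the four nodes used in this walkthrough (hadoop102 through hadoop105) and a replication factor of 3, removing hadoop105 leaves three nodes, which is just enough.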
