1. KNN Basics

1.1 Definition

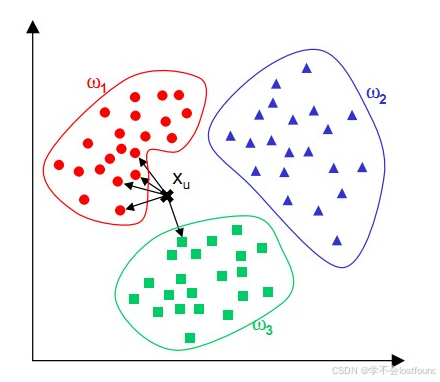

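KNN (K-Nearest Neighbor) is an instance-based learning method used for both classification and regression. It predicts the label or value of a data point by finding that point's nearest neighbors in the training data.

"K nearest neighbors" literally means the K closest neighbors: each sample can be represented by the K samples closest to it.

1.2 Core Idea: Birds of a Feather Flock Together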

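If the majority of a sample's K nearest neighbors in feature space belong to a certain class, the sample is assigned to that class as well and shares the characteristics of the samples in it. (For example, in the figure above, 4 of the 5 nearest neighbors of the point to be classified, a majority, belong to the same class, so that point is also assigned to that class.)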

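Because KNN decides the class from a limited number of nearby samples rather than by learning explicit boundaries between class regions, it is often better suited than boundary-based methods to datasets whose class regions cross or overlap heavily.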

2. KNN Workflow and Characteristics

2.1 Workflow

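(1) Choose K: decide the number of neighbors K.

(2) Compute distances: compute the distance between the point to be predicted and every point in the training data.

(3) Find the nearest neighbors: pick the K points with the smallest distances.

(4) Decision rule:

- Classification: assign the class that wins a majority vote among the K nearest neighbors.

- Regression: predict the mean (or a weighted mean) of the K nearest neighbors' target values.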
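The four steps above fit in a few lines of NumPy for a single query point. This is a minimal sketch, not sklearn's implementation. The function name `knn_predict_one`, its `task` switch and the toy data are all hypothetical. Distances are Euclidean, and a tie in the vote goes to whichever label `Counter.most_common` lists first.

```python
from collections import Counter

import numpy as np


def knn_predict_one(X_train, y_train, x_query, k=3, task="classification"):
    """Predict a single query point with plain KNN (illustrative sketch)."""
    # Step (1) is the choice of k, passed in as a parameter
    # Step (2): Euclidean distance from the query to every training point
    distances = np.sqrt(((X_train - x_query) ** 2).sum(axis=1))
    # Step (3): indices of the k smallest distances
    nearest = np.argsort(distances)[:k]
    neighbor_targets = y_train[nearest]
    # Step (4): majority vote for classification, plain mean for regression
    if task == "classification":
        return Counter(neighbor_targets.tolist()).most_common(1)[0][0]
    return float(neighbor_targets.mean())


# Hypothetical toy data: two well-separated clusters of 2-D points
X_train = np.array([[1.0, 1.0], [1.2, 0.8], [0.9, 1.1], [5.0, 5.0], [5.2, 4.9]])
y_class = np.array([0, 0, 0, 1, 1])                 # class labels
y_value = np.array([10.0, 12.0, 11.0, 50.0, 52.0])  # regression targets

query = np.array([1.0, 0.9])
print(knn_predict_one(X_train, y_class, query, k=3))                     # 0
print(knn_predict_one(X_train, y_value, query, k=3, task="regression"))  # 11.0
```

The query sits inside the first cluster, so its three nearest neighbors are the first three points. They vote for class 0, and their targets average to (10 + 12 + 11) / 3 = 11.0.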

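2.2 Advantages

- Simple and effective: the principle is easy to understand and implement.

- No training needed: KNN is a lazy learner. Put simply, lazy learning means not studying in advance and only learning while taking the exam. It builds no model during a training phase: there is almost no training step, and the work is done by brute-force computation at prediction time. This is why it is not a typical example of AI that learns a model.

- Handles nonlinear problems: it makes no assumptions about the data distribution and works for problems that are not linearly separable.

- Broad applicability: it works with many kinds of data, including numerical and categorical features, as long as a suitable distance measure is defined.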

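2.3 Disadvantages

- High computational cost: predicting a point requires computing its distance to every training point, which is expensive.

- High storage cost: the entire training set must be stored.

- Sensitive to outliers: outliers or noisy data can strongly affect the predictions.

- K must be chosen carefully: the choice of K strongly affects the results and is usually tuned with methods such as cross-validation.

- Sensitive to the distance metric: different metrics can lead to different results.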
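The last point is easy to see on a tiny example in which two common metrics disagree about which point is nearest. The points are made up for illustration. Under Euclidean distance, B is closer to the query. Under Manhattan (city-block) distance, A is closer.

```python
import numpy as np

# Hypothetical toy points: one query and two candidate neighbors
query = np.array([0.0, 0.0])
candidates = {"A": np.array([3.0, 0.0]), "B": np.array([2.0, 2.0])}


def euclidean(a, b):
    """Straight-line (L2) distance."""
    return float(np.sqrt(((a - b) ** 2).sum()))


def manhattan(a, b):
    """City-block (L1) distance: sum of absolute coordinate differences."""
    return float(np.abs(a - b).sum())


def nearest_label(metric):
    """Return the name of the candidate closest to the query under `metric`."""
    dists = {label: metric(query, p) for label, p in candidates.items()}
    return min(dists, key=dists.get)


print("Euclidean nearest:", nearest_label(euclidean))  # B (2.83 < 3.0)
print("Manhattan nearest:", nearest_label(manhattan))  # A (3.0 < 4.0)
```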

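2.4 Improvements Addressing These Drawbacks

Although KNN is simple, in practice it usually needs to be tuned and optimized for the problem at hand to improve its performance and applicability. For example:

- Better choice of K: use cross-validation or similar methods to select the best K.

- Better distance metrics: choose a metric that suits the data, such as a weighted distance.

- Neighbor weighting: give closer neighbors larger weights in the vote or the average.

- Locally sensitive scaling: scale features in a locally sensitive way to reduce the influence of noise.

- Efficient data structures: use KD-trees, ball trees, etc. to reduce the time complexity of neighbor search.

- Incremental learning: update the model step by step as new data arrives instead of retraining from scratch.

- Hybrid KNN: combine KNN with other algorithms, such as decision trees, to improve accuracy and robustness.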
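Several of these improvements map onto options that scikit-learn already provides. The sketch below assumes the iris data and a recent scikit-learn. It does four things:

- searches over K with cross-validation (`GridSearchCV`);
- compares uniform and distance-weighted voting (`weights`);
- requests a KD-tree index (`algorithm="kd_tree"`);
- standardizes the features first.

`StandardScaler` is an ordinary global scaling. It is used here only as a simple stand-in for the locally sensitive scaling mentioned above. The candidate K values are arbitrary.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Scale features first so that no single feature dominates the distance,
# then run KNN with a KD-tree index for the neighbor search
pipe = Pipeline([
    ("scaler", StandardScaler()),
    ("knn", KNeighborsClassifier(algorithm="kd_tree")),
])

# Candidate K values and voting schemes (values chosen arbitrarily for illustration)
param_grid = {
    "knn__n_neighbors": [1, 3, 5, 7, 9],
    "knn__weights": ["uniform", "distance"],  # "distance": closer neighbors weigh more
}

# 5-fold cross-validation on the training set picks the best combination
search = GridSearchCV(pipe, param_grid, cv=5)
search.fit(X_train, y_train)

print("best parameters:", search.best_params_)
print("test accuracy:", search.score(X_test, y_test))
```

The parameter names use scikit-learn's `step__parameter` convention, so `knn__n_neighbors` refers to `n_neighbors` of the pipeline step named `"knn"`.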

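3. Cosine Similarity and Euclidean Distance

In artificial intelligence and machine learning, cosine similarity and Euclidean distance (the straight-line distance in Euclidean space) are two commonly used measures of the similarity or distance between data points.

KNN in particular must find the K nearest neighbors. It therefore first needs a distance measure to decide which samples are closest to the current one, most commonly Euclidean distance (also scikit-learn's default).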

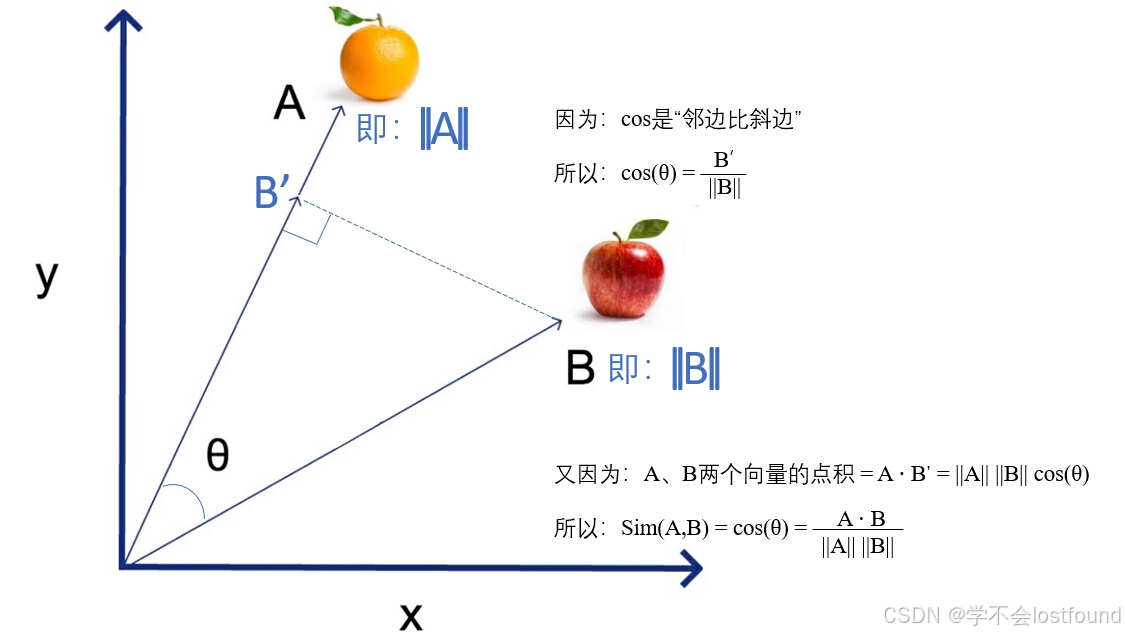

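3.1 Cosine Similarity

(1) Definition

Cosine similarity measures how similar two vectors are in direction, regardless of their magnitudes. It is the cosine of the angle between the two vectors: the larger the cosine similarity, the more similar the vectors.

- Angle 0°: similarity 1, the highest (same direction)

- Angle 90°: similarity 0, no directional similarity (orthogonal)

- Angle 180°: similarity -1, the lowest (opposite directions)

(2) Formula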

$$\cos(\theta) = \frac{A \cdot B}{\|A\| \, \|B\|}$$

where $A \cdot B$ is the dot product of vectors A and B, and $\|A\|$ and $\|B\|$ are their norms (lengths).
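The formula translates directly into NumPy. This small sketch checks the three angle cases from the definition. The helper name `cos_sim` is our own. scikit-learn also has `sklearn.metrics.pairwise.cosine_similarity`, which works on whole matrices of vectors.

```python
import numpy as np


def cos_sim(a, b):
    """cos(theta) = (A . B) / (||A|| * ||B||)"""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


print(cos_sim([1, 0], [3, 0]))   # 1.0   same direction (0 degrees), length ignored
print(cos_sim([1, 0], [0, 2]))   # 0.0   orthogonal (90 degrees)
print(cos_sim([1, 0], [-4, 0]))  # -1.0  opposite directions (180 degrees)
```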

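(3) Applications

- Text analysis: cosine similarity is often used to compare documents, because the direction of a text vector (its topic) matters more than its magnitude (its length).

- Recommender systems: it measures similarity between users or items, so that similar items or content can be recommended.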

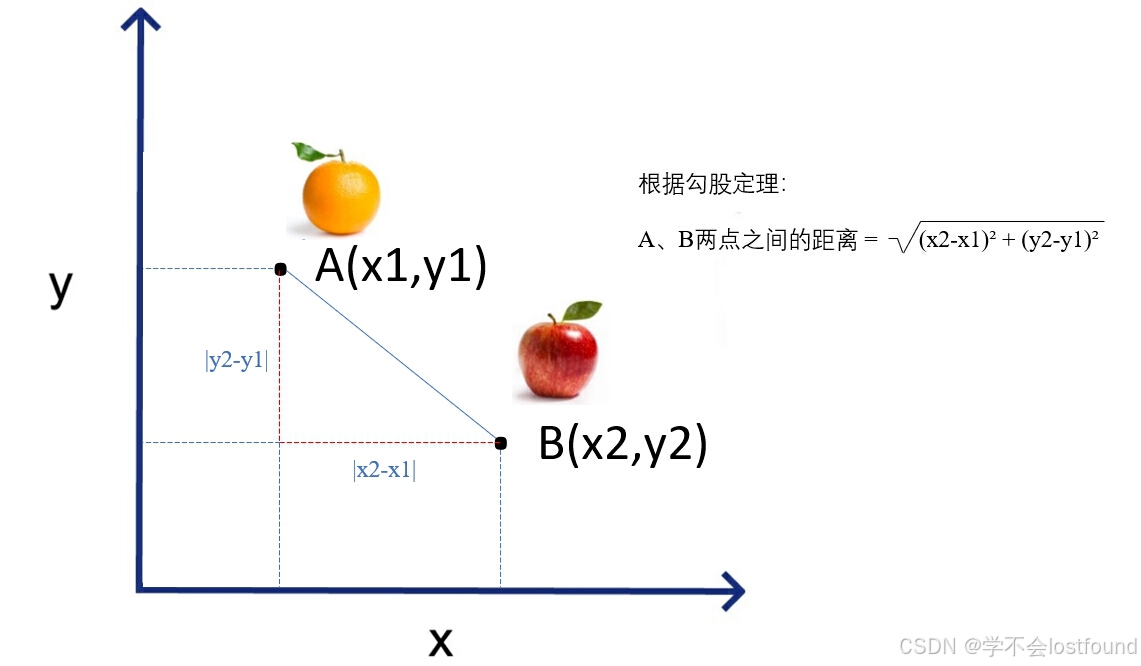

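3.2 Euclidean Distance

(1) Definition

Euclidean distance is the most common distance measure: the straight-line distance between two points in Euclidean space. It is the square root of the sum of the squared differences between the two points' coordinates.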

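(2) Formula:

$$d(A, B) = \sqrt{\sum_{i=1}^{n} (A_i - B_i)^2}$$

where $A_i$ and $B_i$ are the coordinates of vectors A and B in the $i$-th dimension, and $n$ is the number of dimensions.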
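Here is a direct NumPy transcription of the formula, with `np.linalg.norm` of the difference vector as an equivalent one-liner. The helper name `euclidean_distance` is hypothetical. The 3-4-5 examples are chosen so the answers are easy to check by hand.

```python
import numpy as np


def euclidean_distance(a, b):
    """d(A, B) = sqrt(sum_i (A_i - B_i)^2), computed coordinate by coordinate."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(np.sqrt(np.sum((a - b) ** 2)))


print(euclidean_distance([0, 0], [3, 4]))        # 5.0  (sqrt(9 + 16))
print(euclidean_distance([1, 2, 3], [4, 6, 3]))  # 5.0  (sqrt(9 + 16 + 0))

# The same value via NumPy's vector norm of the difference
print(float(np.linalg.norm(np.array([3.0, 4.0]) - np.array([0.0, 0.0]))))  # 5.0
```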

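(3) Applications:

- Machine learning: many algorithms, such as K-means clustering and K-nearest neighbors, use Euclidean distance to measure the distance between samples.

- Geometry: Euclidean distance is the most basic distance measure and is used to compute the sizes and positions of points, line segments, polygons and other objects.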

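3.3 Differences Between the Two Measures

(1) Cosine similarity

It emphasizes agreement in direction and suits scenarios where direction matters more than magnitude. It lets us identify documents or user preferences with the same topic or trend even when their sizes or frequencies differ.

(2) Euclidean distance

It gives an intuitive measure of distance and suits scenarios where both magnitude and direction matter. It helps identify actual distance or similarity in physical space.

(3) How to choose

In practice, the choice depends on the characteristics of the data and the requirements of the problem.

For example:

- Text data often favors cosine similarity.

- Physical spatial data is usually better served by Euclidean distance.

Understanding how these measures work and where they apply is essential for designing effective algorithms and models.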
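The difference between the two measures shows up clearly on a few made-up vectors:

- b points in exactly the same direction as a but is ten times longer.
- c is close to a but orthogonal to it.

Cosine similarity calls a and b identical and a and c unrelated. Euclidean distance reaches the opposite verdict about which vector is closer to a.

```python
import numpy as np

a = np.array([1.0, 1.0])
b = np.array([10.0, 10.0])  # same direction as a, ten times longer
c = np.array([1.0, -1.0])   # near a in space, but orthogonal to it


def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def euclidean(u, v):
    return float(np.linalg.norm(u - v))


print(f"a vs b: cosine={cosine(a, b):.3f}, euclidean={euclidean(a, b):.3f}")  # cosine=1.000, euclidean=12.728
print(f"a vs c: cosine={cosine(a, c):.3f}, euclidean={euclidean(a, c):.3f}")  # cosine=0.000, euclidean=2.000
```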

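4. KNN in Practice

4.1 Classification Task: Iris Recognition

The iris is a common flowering plant with many species. The three best known are:

- Iris setosa: short, upright leaves and brightly colored flowers; grows in cold climates.

- Iris versicolor: broad, flat leaves and richly colored flowers; common in North American wetlands.

- Iris virginica: curved leaves and large, full flowers; grows in moist soil.

4.1.1 Requirements

For the three iris species above, we need a machine learning algorithm that predicts the species (a classification task).

4.1.2 Analysis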

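- Task: given a flower, the model must identify which species it is.

- Input: a flower
  - A flower cannot be fed to a computer directly, so it must be digitized, i.e. converted into feature data.
  - After consulting domain experts, we find that the species depends on four features: sepal length, sepal width, petal length and petal width.

- Output: the species
  - Different species are different categories, so this is a classification problem.
  - The categories must be encoded as integers 0, 1, ..., N-1. Here there are three classes, encoded as 0, 1 and 2.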
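For iris, `load_iris` already returns the integer labels 0, 1 and 2, in the order of `target_names`: setosa, versicolor, virginica. If the labels arrived as strings instead, one way to encode them is `np.unique(..., return_inverse=True)`, sketched below on a few hypothetical labels. scikit-learn's `sklearn.preprocessing.LabelEncoder` does the same job.

```python
import numpy as np

# Hypothetical string labels for a handful of flowers
names = np.array(["setosa", "virginica", "setosa", "versicolor", "virginica"])

# np.unique returns the sorted distinct classes and, with return_inverse=True,
# each sample's integer code (its index into that sorted array)
classes, codes = np.unique(names, return_inverse=True)
print(classes)  # ['setosa' 'versicolor' 'virginica']
print(codes)    # [0 2 0 1 2]

# Decoding: index the classes array with the codes to get the names back
print(classes[codes])
```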

4.1.3 Implementation

Step1: Load the data

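# To classify irises with KNN, we first need iris data, i.e. a way to load the iris dataset
# In Python, the sklearn.datasets module ships a classic iris dataset, which can be loaded with its built-in load_iris function

# (1) sklearn (scikit-learn) is an open-source Python machine learning library
#     built on scientific computing libraries such as NumPy, SciPy and matplotlib
#     that provides simple, efficient tools for data mining and data analysis
# (2) sklearn.datasets is a module of sklearn with functions for fetching standard datasets
#     These datasets are typically used to test and demonstrate machine learning algorithms; common ones include:
#     ① Iris: classification, 150 samples, 4 features each
#     ② Wine: classification, 178 samples, 13 features each
#     ③ Digits (handwritten digits): classification, 1797 samples, 64 features each
#     ④ Boston house prices: regression, 506 samples, 13 features each (removed in scikit-learn 1.2, see section 4.2)
# (3) load_iris is a built-in function of the sklearn.datasets submodule that loads the iris dataset
#     The object returned by load_iris() typically has these attributes:
#     ① data: an array with the feature values of every sample
#     ② target: an array with the class label of every sample
#     ③ target_names: the class names; for iris these are setosa, versicolor and virginica
#     ④ DESCR: a string describing the dataset
#     ⑤ feature_names: the names of the features
#     ⑥ filename: the location of the data file inside the scikit-learn package
from sklearn.datasets import load_iris

# After importing load_iris, call it to get the dataset
result = load_iris()

# With the dataset in hand, use Python's "three basic moves" (type/dir/print) to get acquainted with it
# (1) What type is it?
print(type(result))  # Output: <class 'sklearn.utils._bunch.Bunch'>
# (2) What attributes and methods does it have?
print(dir(result))  # Output: ['DESCR', 'data', 'data_module', 'feature_names', 'filename', 'frame', 'target', 'target_names']
# (3) What does it contain?
print(result)
# (4) Among the attributes listed by dir, DESCR (description) describes the dataset
print(result.DESCR)

# This first look shows that the dataset already contains the iris features (data) and labels (target)
# A model learns a mapping from X to y, so we take the features and labels out of the dataset separately:
# (1) assign the features (data) to X
# (2) assign the labels (target) to y
# How: load_iris has a return_X_y parameter, which defaults to False
# (1) return_X_y=False: no split; returns the object with data, target, target_names, DESCR, feature_names, filename, etc.
# (2) return_X_y=True: returns only data and target, as two arrays (numpy.ndarray)
# Note: in machine learning and statistical modeling, X is conventionally uppercase and y lowercase; following this convention is recommended
X, y = load_iris(return_X_y=True)

# Once we have X and y, inspect their contents and shapes as a first step
# (1) contents of X
print(X)
# (2) contents of y
print(y)
# (3) shape of X: (sample_size, num_features), i.e. the number of samples and of features per sample
print(X.shape)  # (150, 4)
# (4) shape of y: (labels_size,), i.e. the number of labels, one per sample
print(y.shape)  # (150,)

Step2: Split the data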

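# During modeling we usually split the dataset into at least two parts: one to train the model and one to validate and evaluate it
# load_iris, however, returns a single dataset without separate training and test sets, so we must split it ourselves

# In Python, sklearn.model_selection is the sklearn module with tools and functions for model selection and evaluation, for example:
# (1) Cross-validation:
#     ① cross_val_score: computes the model's score on different data subsets
#     ② cross_validate: more general than cross_val_score; can compute several metrics at once
#     ③ KFold, StratifiedKFold, GroupKFold: generate different train/test splits of a dataset
# (2) Train/test splitting:
#     ① train_test_split: splits a dataset into a training set and a test set
#     ② GroupShuffleSplit, GroupKFold: split data when samples belong to groups or blocks that must be kept together
# (3) Grid search:
#     ① GridSearchCV: finds the best model parameters by trying every combination of the given values
#     ② ParameterGrid: generates the Cartesian product of parameters used in a grid search
# (4) Random search:
#     RandomizedSearchCV: samples parameter combinations from given distributions to find the best model parameters
# (5) Learning curves:
#     learning_curve: shows how model performance changes as the number of training samples grows
# (6) Model persistence:
#     not part of this module; a model trained with its fit method is saved with tools such as joblib (see Step5)
# (7) Scoring:
#     the scoring parameter of the tools above selects the metric; make_scorer (in sklearn.metrics) turns a metric function into a scorer
# (8) Other tools:
#     ① permutation_test_score: evaluates the significance of a cross-validated score by comparing it with scores on label-permuted data
#     ② HalvingGridSearchCV, HalvingRandomSearchCV: successive-halving parameter searches that spend resources more efficiently
#        (experimental; must be enabled via sklearn.experimental.enable_halving_search_cv)

# So we import train_test_split to split the full dataset into a training set and a test set
from sklearn.model_selection import train_test_split

# train_test_split splits both X and y into training and test parts and returns four arrays (numpy.ndarray)
# By convention, "train" marks the training set (X_train, y_train) and "test" marks the test set (X_test, y_test); following this convention is recommended
# Besides the data X and y, train_test_split has two key parameters:
# (1) test_size: the fraction of the original dataset used as the test set
#     e.g. test_size=0.2 puts 20% of the data in the test set and the remaining 80% in the training set
# (2) random_state: 0 or a positive integer used as the seed of the random number generator, which makes the split reproducible
#     The dataset is an ordered array (the iris samples are sorted by class),
#     so it should be shuffled before it is split into training and test sets; only then is the trained model representative
#     train_test_split shuffles by default; if random_state is not given, the shuffle order differs on every call
#     But reproducible results matter a lot in scientific research, data analysis, machine learning and software development, for example:
#     ① Experiment validation: running a new model or algorithm many times to confirm that the results are consistent
#     ② Reproducibility: letting other researchers reproduce the results to verify that the method works
#     ③ Debugging: making sure that changes in the results after modifying the model or data are predictable
#     ④ Cross-environment consistency: getting the same results when the code runs on different machines or servers
#     ⑤ Education and training: keeping learning materials accurate and consistent
#     ⑥ Long-term research: maintaining continuity and data integrity across a long project
#     ⑦ Comparative studies: comparing models or algorithms fairly and validly
#     Hence a parameter is needed to make results reproducible: passing the same random_state value yields the same shuffle order
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Once we have X_train, X_test, y_train and y_test, inspect their shapes and contents
# (1) shapes
print(f"X_train.shape:{X_train.shape}")  # (120, 4)
print(f"y_train.shape:{y_train.shape}")  # (120,)
print(f"X_test.shape:{X_test.shape}")    # (30, 4)
print(f"y_test.shape:{y_test.shape}")    # (30,)
# (2) contents
print(f"X_train:{X_train}")
print(f"y_train:{y_train}")
print(f"X_test:{X_test}")
print(f"y_test:{y_test}")

Step3: Apply the model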

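# Once the data is split, we can apply a model and train it on the training set
# In Python, sklearn.neighbors is the sklearn module that implements neighbor-based algorithms, used for classification, regression and unsupervised nearest-neighbor search
# KNeighborsClassifier in sklearn.neighbors is the classifier that implements KNN, so we import it first
from sklearn.neighbors import KNeighborsClassifier

# KNeighborsClassifier is a class, so we use it in object-oriented style (i.e. create an instance)
# In KNN the value of K matters; it can be set with the n_neighbors parameter when creating the instance
# If it is not set, the default is used; the KNeighborsClassifier source shows the default is 5:
#     n_neighbors : int, default=5
#         Number of neighbors to use by default for :meth:`kneighbors` queries.
knn = KNeighborsClassifier()

# The knn instance has the methods of the KNeighborsClassifier class
# (1) In sklearn, fit is a core method of most machine learning estimators and is used to train the model
#     For an algorithm like KNeighborsClassifier, fit learns from the training data so that the model can make predictions
#     Roughly, KNeighborsClassifier.fit does the following:
#     ① Validates the data: checks that the input shapes and types meet the algorithm's requirements
#     ② "Learns": for KNN, learning essentially means storing the training data; KNN is a lazy learner that uses the training data
#        directly at prediction time instead of building an explicit model (depending on the algorithm parameter, it may also
#        build a KD-tree or ball tree index to speed up neighbor search)
#     ③ Configures itself: uses the parameters set when the classifier was created (such as n_neighbors)
#     To train, pass the training set (X_train and y_train) to fit
knn.fit(X=X_train, y=y_train)

# (2) In sklearn, predict is a core method of most machine learning estimators and is used for prediction
#     To predict, pass the test features (X_test) to predict
y_pred = knn.predict(X=X_test)

# Once we have the predictions (y_pred), inspect them
print(f"y_pred:{y_pred}")

Step4: Evaluate the model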

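# Evaluating the model is simple. The steps above have already given us:
# (1) X_train: features of the training set
# (2) X_test: features of the test set
# (3) y_train: labels of the training set
# (4) y_test: labels of the test set (the true answers)
# (5) y_pred: the labels that the trained model predicted for X_test
# So we only need to compare y_test with y_pred element by element; the fraction that match is the accuracy
# acc stands for accuracy; (y_pred == y_test) is a boolean array, and mean() gives the fraction of True values
acc = (y_pred == y_test).mean()
print(f"Accuracy: {acc}")

Step5: Save and load the model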

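# In a machine learning project, once a model has been trained and evaluated as meeting the performance requirements,
# the next step is usually to save it to a file so that it can easily be reloaded and deployed later
# So we need a way to write the trained model to a file from which it can easily be loaded again for further use
# In Python, the joblib library can serialize Python objects
# (serialization converts a data structure or object state into a format that can be stored or transmitted)
# joblib is especially well suited to large numpy arrays, which makes it very useful in scientific computing and machine learning,
# where data usually contains large amounts of numeric values
# (1) Main features
#     ① Efficient handling of large arrays: joblib optimizes serializing and deserializing numpy arrays, making it more efficient than Python's standard pickle module for such data
#     ② Compression: joblib can compress data while serializing (compress parameter of joblib.dump, off by default), which saves disk space
#     ③ Large data: when loading, the mmap_mode parameter of joblib.load can memory-map large numpy arrays instead of reading them fully into memory
# (2) Common functions
#     ① Save an object: joblib.dump() saves a Python object to a file on disk
#     ② Load an object: joblib.load() loads a previously saved object from a file on disk
# (3) Use cases
#     ① Model persistence: after training, a model usually needs to be saved to disk for later use or deployment, and joblib is an effective tool for this
#     ② Data processing and analysis: joblib can save intermediate or final results of a pipeline so they can be reused in other environments
# So we import the joblib library
import joblib

# Saving a model with joblib takes two key parameters:
# (1) value: the trained model object
# (2) filename: the name of the file to save the model to
joblib.dump(value=knn, filename="knn_iris.model")

# To load the model, pass the name of the saved model file
model = joblib.load(filename="knn_iris.model")

4.2 Regression Task: Boston House Price Prediction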

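Boston is the capital and largest city of the US state of Massachusetts and the largest city in New England, in the northeastern United States. It is known as the "Athens of America".

As a city with a long history, rich educational resources and a pleasant environment, Boston is a very desirable place to live, so its house prices attract a lot of attention.

4.2.1 Requirements

Using historical Boston house price data, we need a machine learning algorithm that predicts house prices (a regression task).

4.2.2 Analysis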

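- Task: given a district in the Boston area, the model predicts the median house value.

- Input: a Boston-area district
  - A district cannot be fed to a computer directly, so it must be digitized, i.e. converted into feature data.
  - After consulting domain experts, we find that a district's house prices depend on the following features:
    - per-capita crime rate by town
    - proportion of residential land zoned for lots over 25,000 sq. ft.
    - proportion of non-retail business acres per town
    - whether the tract bounds the river
    - nitric oxides concentration
    - average number of rooms per dwelling
    - proportion of owner-occupied units built before 1940
    - weighted distances to five Boston employment centers
    - index of accessibility to radial highways
    - full-value property-tax rate per $10,000
    - pupil-teacher ratio by town
    - proportion of Black residents
    - proportion of lower-status population

- Output: the median house value
  - House prices differ from case to case and are continuous rather than discrete values, so this is a regression problem.
  - KNN regression predicts the average of the median house values of the K nearest neighbors.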
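The averaging step can be illustrated with a few numbers. The sketch below assumes K = 3 and uses hypothetical distances. The three MEDV values are borrowed from the first rows of the appendix CSV purely as sample numbers. It compares the plain mean with an inverse-distance weighted mean. The weighted mean corresponds to the neighbor-weighting improvement in Section 2.4 and, in scikit-learn, to `KNeighborsRegressor(weights="distance")`.

```python
import numpy as np

# Hypothetical K=3 neighbors of one district: their distances and MEDV values
neighbor_dist = np.array([1.0, 2.0, 4.0])
neighbor_medv = np.array([24.0, 21.6, 34.7])

# Plain mean: every neighbor counts equally (KNeighborsRegressor's default, weights="uniform")
uniform_pred = neighbor_medv.mean()

# Inverse-distance weighted mean: weight = 1 / distance, so closer neighbors count more
weights = 1.0 / neighbor_dist
weighted_pred = (weights * neighbor_medv).sum() / weights.sum()

print(f"uniform mean:  {uniform_pred:.3f}")   # 26.767
print(f"weighted mean: {weighted_pred:.3f}")  # 24.843
```

The distant neighbor with the high value (34.7) pulls the plain mean up. With distance weighting it counts only a quarter as much as the closest neighbor.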

4.2.3 Implementation

Step1: Load the data

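# To predict Boston house prices with KNN regression, we first need the Boston house price data, i.e. a way to load the dataset
# The sklearn.datasets module used to ship a classic Boston house price dataset, loadable with its built-in load_boston function
# However, one feature of this dataset (B) encodes the proportion of Black residents and raised concerns about racial bias,
# so scikit-learn removed the dataset in version 1.2
# So we have to obtain the dataset ourselves
# Fortunately, when I was learning this topic, my teacher gave me the dataset as a CSV file, so I just read that file
# The file's contents are included in the appendix at the end of this article for anyone who needs them
# Disclaimer: I use this dataset purely for learning and discussion, with no intent of racial discrimination; I believe all people are equal

# We need a dataset, and datasets usually come in specific formats such as numpy.ndarray or pandas DataFrame
# numpy is mainly for numerical computing and pandas mainly for data analysis
# The CSV file contains both feature values and label values
# So we first import pandas, which makes it easy to read the CSV file and split it into features and labels
import pandas as pd

# pd.read_csv is a pandas function that reads a CSV (comma-separated values) file and converts it into a DataFrame
# (the main pandas data structure for storing and manipulating structured data)
# The filepath_or_buffer parameter gives the path and name of the CSV file to read
# The skiprows parameter sets which rows to skip and accepts two kinds of values:
# (1) an integer: skip that many rows at the start of the file (1 skips the first row, 2 the first two rows, and so on)
# (2) a list of 0-based row indices: skip exactly those rows ([0] skips the first row, [1] the second row, and so on)
# The first row of the CSV file (506,13,...) is unrelated metadata, so we pass skiprows=1 to skip it
data = pd.read_csv(filepath_or_buffer="boston_house_prices.csv", skiprows=1)

# The useful columns read from the CSV file are:
# (1) CRIM: per-capita crime rate by town
# (2) ZN: proportion of residential land zoned for lots over 25,000 sq. ft.
# (3) INDUS: proportion of non-retail business acres per town
# (4) CHAS: Charles River dummy variable (1 if the tract bounds the river, otherwise 0)
# (5) NOX: nitric oxides concentration (parts per 10 million)
# (6) RM: average number of rooms per dwelling
# (7) AGE: proportion of owner-occupied units built before 1940
# (8) DIS: weighted distances to five Boston employment centers
# (9) RAD: index of accessibility to radial highways
# (10) TAX: full-value property-tax rate per $10,000
# (11) PTRATIO: pupil-teacher ratio by town
# (12) B: 1000(Bk - 0.63)^2, where Bk is the proportion of Black residents by town
# (13) LSTAT: proportion of lower-status (working-class) population
# (14) MEDV: the target, the median value of owner-occupied homes (in thousands of dollars)

# MEDV is the label and the other 13 columns are features
# So everything except MEDV becomes X, and MEDV becomes y
# drop removes the MEDV column and keeps all feature columns; to_numpy() converts the result to numpy.ndarray for the regression that follows
X = data.drop(columns=["MEDV"]).to_numpy()
# Indexing with the column name ["MEDV"] selects the label column; to_numpy() again converts it to numpy.ndarray
y = data["MEDV"].to_numpy()

Step2: Split the data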

# As in the iris classification task, we import train_test_split to split the full dataset into a training set and a test set
from sklearn.model_selection import train_test_split

# Likewise, we pass test_size and random_state:
# random_state fixes the random seed, and test_size sets the fraction of the data that goes into the test set
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Once we have X_train, X_test, y_train and y_test, we can again inspect their shapes and contents
# (1) shapes
print(f"X_train.shape:{X_train.shape}")
print(f"y_train.shape:{y_train.shape}")
print(f"X_test.shape:{X_test.shape}")
print(f"y_test.shape:{y_test.shape}")
# (2) contents
print(f"X_train:{X_train}")
print(f"y_train:{y_train}")
print(f"X_test:{X_test}")
print(f"y_test:{y_test}")

Step3: Apply the model

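# Likewise, once the data is split we can apply a model and train it on the training set
# For regression we again use the sklearn.neighbors module
# KNeighborsRegressor in sklearn.neighbors is the regressor that implements KNN, so we import it first
from sklearn.neighbors import KNeighborsRegressor

# KNeighborsRegressor is a class, so we use it in object-oriented style (i.e. create an instance)
# In KNN the value of K matters; it can be set with the n_neighbors parameter when creating the instance
# If it is not set, the default is used; the KNeighborsRegressor source shows the default is 5:
#     n_neighbors : int, default=5
#         Number of neighbors to use by default for :meth:`kneighbors` queries.
knn = KNeighborsRegressor()

# Likewise, fit trains the model and predict makes the predictions
knn.fit(X=X_train, y=y_train)
y_pred = knn.predict(X=X_test)

# Once we have the predictions (y_pred), we can again inspect them
print(f"y_pred:{y_pred}")

Step4: Evaluate the model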

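For regression problems, two statistics are commonly used to measure the difference between predicted and actual values, i.e. the model's accuracy: MAE and MSE. The larger the error, the less accurate the model.

(1) MAE (Mean Absolute Error)

MAE is the average of the absolute prediction errors. It takes the absolute difference between each predicted and actual value and averages these to measure prediction accuracy.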

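MAE formula:

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| y_i - \hat{y}_i \right|$$

where:

- $n$ is the number of samples

- $y_i$ is the actual value of the $i$-th sample

- $\hat{y}_i$ is the predicted value of the $i$-th sample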

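MAE treats prediction errors of all sizes equally: larger errors receive no extra penalty.

(2) MSE (Mean Squared Error)

MSE is the average of the squared prediction errors. It squares the difference between each predicted and actual value and averages these to measure prediction accuracy.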

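MSE formula:

$$\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2$$

where:

- $n$ is the number of samples

- $y_i$ is the actual value of the $i$-th sample

- $\hat{y}_i$ is the predicted value of the $i$-th sample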

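Because errors are squared, MSE penalizes large prediction errors more heavily. This makes it a very strict accuracy measure, which is especially useful when large errors must be penalized.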
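The two formulas can be checked on small hand-made arrays. The helper names `my_mae` and `my_mse` are our own. Both predictions below have the same MAE of 1.0. The one that puts all its error into a single sample gets an MSE four times larger, which is the extra penalty on large errors described above. The last line cross-checks the results against `sklearn.metrics`.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error


def my_mae(y_true, y_pred):
    """MAE = mean of |y_i - y_hat_i|."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))


def my_mse(y_true, y_pred):
    """MSE = mean of (y_i - y_hat_i)^2."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))


y_true = np.array([10.0, 10.0, 10.0, 10.0])
spread_out = np.array([11.0, 9.0, 11.0, 9.0])  # four errors of size 1
one_big = np.array([10.0, 10.0, 10.0, 14.0])   # three perfect, one error of size 4

print(my_mae(y_true, spread_out), my_mse(y_true, spread_out))  # 1.0 1.0
print(my_mae(y_true, one_big), my_mse(y_true, one_big))        # 1.0 4.0

# Cross-check against scikit-learn's implementations
print(mean_absolute_error(y_true, one_big), mean_squared_error(y_true, one_big))
```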

# (1) Compute and evaluate with MAE
mae = abs(y_pred - y_test).mean()
print(f"Error measured by MAE: {mae}")

# (2) Compute and evaluate with MSE
mse = ((y_pred - y_test) ** 2).mean()
print(f"Error measured by MSE: {mse}")

Step5: Save and load the model

# Likewise, we import the joblib library
import joblib

# Likewise, dump saves the model and load loads it
joblib.dump(value=knn, filename="knn_boston.model")
model = joblib.load(filename="knn_boston.model")

5. Custom KNN Implementation

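The KNN examples in Section 4 all use standard sklearn modules and functions. This section implements KNN by hand for both the classification and the regression task. The goal is to understand how those functions work and to learn how to write a machine learning algorithm of your own; the code essentially imitates sklearn throughout.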
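Before the loop-based classes, it may help to see that the whole prediction can also be written without a Python loop. NumPy broadcasting builds the full test-by-train distance matrix at once. This is an alternative sketch, not how the classes in this section (or sklearn) are written:

- The function name is hypothetical.
- The vote uses `np.bincount` instead of `Counter`, so it assumes non-negative integer labels (as iris provides), and ties go to the smallest label.
- The intermediate array needs n_test × n_train × n_features memory, so the approach suits small datasets like iris.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split


def knn_predict_vectorized(X_train, y_train, X_test, k=5):
    """Classify every test point at once (sketch; ties go to the smallest label)."""
    # (n_test, 1, n_features) - (1, n_train, n_features) -> (n_test, n_train, n_features)
    diff = X_test[:, np.newaxis, :] - X_train[np.newaxis, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))    # (n_test, n_train) Euclidean distances
    nearest = np.argsort(dist, axis=1)[:, :k]  # (n_test, k) indices of the k nearest
    neighbor_labels = y_train[nearest]         # (n_test, k) their labels
    # Majority vote per test point; np.bincount needs non-negative integer labels
    return np.array([np.bincount(row).argmax() for row in neighbor_labels])


X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
y_pred = knn_predict_vectorized(X_train, y_train, X_test, k=5)
print("accuracy:", (y_pred == y_test).mean())
```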

5.1 Custom Classifier

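# The core of KNN: look at the K nearest samples in feature space and assign the current sample to the class most of them belong to
# So the key question is how to find that "majority"
# In Python, the collections module provides a Counter class
# Counter counts how many times each element of an iterable (such as a list) occurs
# In addition, Counter's most_common method returns the n most frequent elements together with their counts
# So we import the Counter class from the collections module
from collections import Counter

# The labels returned by predict should be a numpy.ndarray so they are easy to compare with the test labels
# So we import the numpy library
import numpy as np


# Define a MyKNeighborsClassifier class that implements KNN's fit and predict methods
class MyKNeighborsClassifier:
    """
    Custom KNN classifier
    """

    def __init__(self, n_neighbors=5):
        """
        Initializer: takes n_neighbors, i.e. the K value (number of neighbors); the default of 5 follows sklearn
        """
        self.n_neighbors = n_neighbors

    def fit(self, X_train, y_train):
        """
        Training (KNN is a lazy learner, so this only stores X and y without further processing)
        Note: X and y here are the training data!
        """
        self.X_train = X_train
        self.y_train = y_train

    def predict(self, X_test):
        """
        Inference (the key part)
        """
        # Outline:
        # Step1: find the K nearest neighbors of the sample
        # Step2: let the K nearest neighbors vote with their labels
        # Step3: return the label with the most votes

        # Implementation:
        # 1. A list that collects the predicted label of each test sample
        results = []

        # 2. Loop over every test sample, predict its label, and store it in results
        for x_test in X_test:
            # Step1: compute the Euclidean distance between the current test sample and every training sample
            # self.X_train - x_test subtracts the test sample's features from every training sample's features (broadcasting)
            # ** 2 squares each difference
            # sum(axis=1) adds up the squared differences of each training sample
            # ** 0.5 takes the square root of each sum
            distance = ((self.X_train - x_test) ** 2).sum(axis=1) ** 0.5

            # Step2: sort and slice to find the K nearest neighbors
            # argsort() is a NumPy method that returns the indices that would sort the array in ascending order
            # [:self.n_neighbors] keeps the first K of those indices, e.g. [:5] (i.e. [0:5]) when K=5
            idxes = distance.argsort()[:self.n_neighbors]

            # Step3: get the labels of these K nearest neighbors
            labels = self.y_train[idxes]

            # Step4: find the most frequent value among the K neighbors' labels
            # Counter(labels): takes an iterable and returns a Counter object (a dict subclass)
            #     whose keys are the elements and whose values are their counts
            # most_common(1): returns a list of (element, count) tuples sorted by count in descending order
            #     1 returns only the most frequent element, 2 the two most frequent, and so on
            # [0]: takes the first item of that list, a tuple
            #     the tuple holds two values: the label and the number of times it occurs
            # [0][0]: indexes the tuple again to get its first value, the most frequent label
            # Example:
            #     given labels = ["setosa", "setosa", "setosa", "versicolor", "versicolor", "virginica"]
            #     Counter(labels) is Counter({'setosa': 3, 'versicolor': 2, 'virginica': 1})
            #     Counter(labels).most_common(1) is [('setosa', 3)]
            #     Counter(labels).most_common(1)[0] is ('setosa', 3)
            #     Counter(labels).most_common(1)[0][0] is 'setosa'
            final_label = Counter(labels).most_common(1)[0][0]

            # Step5: the most frequent value is the predicted label; add it to the results
            results.append(final_label)

        # 3. Return the predicted labels as a numpy.ndarray for easy comparison with the true values
        return np.array(results)

Using the custom KNN classifier on the iris recognition task: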

# Import load_iris and load the iris dataset
from sklearn.datasets import load_iris
X, y = load_iris(return_X_y=True)

# Import train_test_split and split the dataset into training and test sets
from sklearn.model_selection import train_test_split
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Create the custom KNN classifier and pass in the training and test data
my_knn_classifier = MyKNeighborsClassifier()
my_knn_classifier.fit(X_train=X_train, y_train=y_train)
y_pred = my_knn_classifier.predict(X_test=X_test)

# Compare the test labels with the predicted labels to compute the accuracy
acc = (y_pred == y_test).mean()
print(f"Accuracy: {acc}")

5.2 Custom Regressor

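# Unlike the classifier, the regressor averages the neighbors' values instead of voting, so Counter is not needed here
# As before, we import the numpy library
import numpy as np


# Define a MyKNeighborsRegressor class that implements KNN's fit and predict methods
class MyKNeighborsRegressor:
    """
    Custom KNN regressor
    """

    def __init__(self, n_neighbors=5):
        """
        Initializer: takes n_neighbors, i.e. the K value (number of neighbors); the default of 5 follows sklearn
        """
        self.n_neighbors = n_neighbors

    def fit(self, X_train, y_train):
        """
        Training (KNN is a lazy learner, so this only stores X and y without further processing)
        Note: X and y here are the training data!
        """
        self.X_train = X_train
        self.y_train = y_train

    def predict(self, X_test):
        """
        Inference (the key part)
        """
        # Outline:
        # Step1: find the K nearest neighbors of the sample
        # Step2: get the target values of the K nearest neighbors
        # Step3: return the mean of those values

        # Implementation:
        # 1. A list that collects the predicted value of each test sample
        results = []

        # 2. Loop over every test sample, predict its value, and store it in results
        for x_test in X_test:
            # Step1: Euclidean distance between the current test sample and every training sample
            distance = ((self.X_train - x_test) ** 2).sum(axis=1) ** 0.5

            # Step2: sort and slice to find the K nearest neighbors
            idxes = distance.argsort()[:self.n_neighbors]

            # Step3: get the target values of these K nearest neighbors
            labels = self.y_train[idxes]

            # Step4: average those values
            final_label = labels.mean()

            # Step5: add the mean to the results
            results.append(final_label)

        # 3. Return the predictions as a numpy.ndarray for easy comparison with the true values
        return np.array(results)

Using the custom KNN regressor on the Boston house price prediction task: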

# Import pandas and load the dataset from the CSV file
import pandas as pd
data = pd.read_csv(filepath_or_buffer="boston_house_prices.csv", skiprows=[0])

# Split the dataset into features and labels by column
X = data.drop(columns=["MEDV"]).to_numpy()
y = data["MEDV"].to_numpy()

# Import train_test_split and further split the data into training and test sets
from sklearn.model_selection import train_test_split
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Create the custom KNN regressor and pass in the training and test data
# (this must be MyKNeighborsRegressor, not sklearn's KNeighborsRegressor; its fit/predict take X_train/y_train and X_test)
my_knn_regressor = MyKNeighborsRegressor()
my_knn_regressor.fit(X_train=X_train, y_train=y_train)
y_pred = my_knn_regressor.predict(X_test=X_test)

# Compare the test labels with the predictions to compute the error
mae = abs(y_pred - y_test).mean()
print(f"Error measured by MAE: {mae}")
mse = ((y_pred - y_test) ** 2).mean()
print(f"Error measured by MSE: {mse}")

6. Appendix

Contents of the boston_house_prices.csv file

506,13,,,,,,,,,,,,

"CRIM","ZN","INDUS","CHAS","NOX","RM","AGE","DIS","RAD","TAX","PTRATIO","B","LSTAT","MEDV"

0.00632,18,2.31,0,0.538,6.575,65.2,4.09,1,296,15.3,396.9,4.98,24

0.02731,0,7.07,0,0.469,6.421,78.9,4.9671,2,242,17.8,396.9,9.14,21.6

0.02729,0,7.07,0,0.469,7.185,61.1,4.9671,2,242,17.8,392.83,4.03,34.7

0.03237,0,2.18,0,0.458,6.998,45.8,6.0622,3,222,18.7,394.63,2.94,33.4

0.06905,0,2.18,0,0.458,7.147,54.2,6.0622,3,222,18.7,396.9,5.33,36.2

0.02985,0,2.18,0,0.458,6.43,58.7,6.0622,3,222,18.7,394.12,5.21,28.7

0.08829,12.5,7.87,0,0.524,6.012,66.6,5.5605,5,311,15.2,395.6,12.43,22.9

0.14455,12.5,7.87,0,0.524,6.172,96.1,5.9505,5,311,15.2,396.9,19.15,27.1

0.21124,12.5,7.87,0,0.524,5.631,100,6.0821,5,311,15.2,386.63,29.93,16.5

0.17004,12.5,7.87,0,0.524,6.004,85.9,6.5921,5,311,15.2,386.71,17.1,18.9

0.22489,12.5,7.87,0,0.524,6.377,94.3,6.3467,5,311,15.2,392.52,20.45,15

0.11747,12.5,7.87,0,0.524,6.009,82.9,6.2267,5,311,15.2,396.9,13.27,18.9

0.09378,12.5,7.87,0,0.524,5.889,39,5.4509,5,311,15.2,390.5,15.71,21.7

0.62976,0,8.14,0,0.538,5.949,61.8,4.7075,4,307,21,396.9,8.26,20.4

0.63796,0,8.14,0,0.538,6.096,84.5,4.4619,4,307,21,380.02,10.26,18.2

0.62739,0,8.14,0,0.538,5.834,56.5,4.4986,4,307,21,395.62,8.47,19.9

1.05393,0,8.14,0,0.538,5.935,29.3,4.4986,4,307,21,386.85,6.58,23.1

0.7842,0,8.14,0,0.538,5.99,81.7,4.2579,4,307,21,386.75,14.67,17.5

0.80271,0,8.14,0,0.538,5.456,36.6,3.7965,4,307,21,288.99,11.69,20.2

0.7258,0,8.14,0,0.538,5.727,69.5,3.7965,4,307,21,390.95,11.28,18.2

1.25179,0,8.14,0,0.538,5.57,98.1,3.7979,4,307,21,376.57,21.02,13.6

0.85204,0,8.14,0,0.538,5.965,89.2,4.0123,4,307,21,392.53,13.83,19.6

1.23247,0,8.14,0,0.538,6.142,91.7,3.9769,4,307,21,396.9,18.72,15.2

0.98843,0,8.14,0,0.538,5.813,100,4.0952,4,307,21,394.54,19.88,14.5

0.75026,0,8.14,0,0.538,5.924,94.1,4.3996,4,307,21,394.33,16.3,15.6

0.84054,0,8.14,0,0.538,5.599,85.7,4.4546,4,307,21,303.42,16.51,13.9

0.67191,0,8.14,0,0.538,5.813,90.3,4.682,4,307,21,376.88,14.81,16.6

0.95577,0,8.14,0,0.538,6.047,88.8,4.4534,4,307,21,306.38,17.28,14.8

0.77299,0,8.14,0,0.538,6.495,94.4,4.4547,4,307,21,387.94,12.8,18.4

1.00245,0,8.14,0,0.538,6.674,87.3,4.239,4,307,21,380.23,11.98,21

1.13081,0,8.14,0,0.538,5.713,94.1,4.233,4,307,21,360.17,22.6,12.7

1.35472,0,8.14,0,0.538,6.072,100,4.175,4,307,21,376.73,13.04,14.5

1.38799,0,8.14,0,0.538,5.95,82,3.99,4,307,21,232.6,27.71,13.2

1.15172,0,8.14,0,0.538,5.701,95,3.7872,4,307,21,358.77,18.35,13.1

1.61282,0,8.14,0,0.538,6.096,96.9,3.7598,4,307,21,248.31,20.34,13.5

0.06417,0,5.96,0,0.499,5.933,68.2,3.3603,5,279,19.2,396.9,9.68,18.9

0.09744,0,5.96,0,0.499,5.841,61.4,3.3779,5,279,19.2,377.56,11.41,20

0.08014,0,5.96,0,0.499,5.85,41.5,3.9342,5,279,19.2,396.9,8.77,21

0.17505,0,5.96,0,0.499,5.966,30.2,3.8473,5,279,19.2,393.43,10.13,24.7

0.02763,75,2.95,0,0.428,6.595,21.8,5.4011,3,252,18.3,395.63,4.32,30.8

0.03359,75,2.95,0,0.428,7.024,15.8,5.4011,3,252,18.3,395.62,1.98,34.9

0.12744,0,6.91,0,0.448,6.77,2.9,5.7209,3,233,17.9,385.41,4.84,26.6

0.1415,0,6.91,0,0.448,6.169,6.6,5.7209,3,233,17.9,383.37,5.81,25.3

0.15936,0,6.91,0,0.448,6.211,6.5,5.7209,3,233,17.9,394.46,7.44,24.7

0.12269,0,6.91,0,0.448,6.069,40,5.7209,3,233,17.9,389.39,9.55,21.2

0.17142,0,6.91,0,0.448,5.682,33.8,5.1004,3,233,17.9,396.9,10.21,19.3

0.18836,0,6.91,0,0.448,5.786,33.3,5.1004,3,233,17.9,396.9,14.15,20

0.22927,0,6.91,0,0.448,6.03,85.5,5.6894,3,233,17.9,392.74,18.8,16.6

0.25387,0,6.91,0,0.448,5.399,95.3,5.87,3,233,17.9,396.9,30.81,14.4

0.21977,0,6.91,0,0.448,5.602,62,6.0877,3,233,17.9,396.9,16.2,19.4

0.08873,21,5.64,0,0.439,5.963,45.7,6.8147,4,243,16.8,395.56,13.45,19.7

0.04337,21,5.64,0,0.439,6.115,63,6.8147,4,243,16.8,393.97,9.43,20.5

0.0536,21,5.64,0,0.439,6.511,21.1,6.8147,4,243,16.8,396.9,5.28,25

0.04981,21,5.64,0,0.439,5.998,21.4,6.8147,4,243,16.8,396.9,8.43,23.4

0.0136,75,4,0,0.41,5.888,47.6,7.3197,3,469,21.1,396.9,14.8,18.9

0.01311,90,1.22,0,0.403,7.249,21.9,8.6966,5,226,17.9,395.93,4.81,35.4

0.02055,85,0.74,0,0.41,6.383,35.7,9.1876,2,313,17.3,396.9,5.77,24.7

0.01432,100,1.32,0,0.411,6.816,40.5,8.3248,5,256,15.1,392.9,3.95,31.6

0.15445,25,5.13,0,0.453,6.145,29.2,7.8148,8,284,19.7,390.68,6.86,23.3

0.10328,25,5.13,0,0.453,5.927,47.2,6.932,8,284,19.7,396.9,9.22,19.6

0.14932,25,5.13,0,0.453,5.741,66.2,7.2254,8,284,19.7,395.11,13.15,18.7

0.17171,25,5.13,0,0.453,5.966,93.4,6.8185,8,284,19.7,378.08,14.44,16

0.11027,25,5.13,0,0.453,6.456,67.8,7.2255,8,284,19.7,396.9,6.73,22.2

0.1265,25,5.13,0,0.453,6.762,43.4,7.9809,8,284,19.7,395.58,9.5,25

0.01951,17.5,1.38,0,0.4161,7.104,59.5,9.2229,3,216,18.6,393.24,8.05,33

0.03584,80,3.37,0,0.398,6.29,17.8,6.6115,4,337,16.1,396.9,4.67,23.5

0.04379,80,3.37,0,0.398,5.787,31.1,6.6115,4,337,16.1,396.9,10.24,19.4

0.05789,12.5,6.07,0,0.409,5.878,21.4,6.498,4,345,18.9,396.21,8.1,22

0.13554,12.5,6.07,0,0.409,5.594,36.8,6.498,4,345,18.9,396.9,13.09,17.4

0.12816,12.5,6.07,0,0.409,5.885,33,6.498,4,345,18.9,396.9,8.79,20.9

0.08826,0,10.81,0,0.413,6.417,6.6,5.2873,4,305,19.2,383.73,6.72,24.2

0.15876,0,10.81,0,0.413,5.961,17.5,5.2873,4,305,19.2,376.94,9.88,21.7

0.09164,0,10.81,0,0.413,6.065,7.8,5.2873,4,305,19.2,390.91,5.52,22.8

0.19539,0,10.81,0,0.413,6.245,6.2,5.2873,4,305,19.2,377.17,7.54,23.4

0.07896,0,12.83,0,0.437,6.273,6,4.2515,5,398,18.7,394.92,6.78,24.1

0.09512,0,12.83,0,0.437,6.286,45,4.5026,5,398,18.7,383.23,8.94,21.4

0.10153,0,12.83,0,0.437,6.279,74.5,4.0522,5,398,18.7,373.66,11.97,20

0.08707,0,12.83,0,0.437,6.14,45.8,4.0905,5,398,18.7,386.96,10.27,20.8

0.05646,0,12.83,0,0.437,6.232,53.7,5.0141,5,398,18.7,386.4,12.34,21.2

0.08387,0,12.83,0,0.437,5.874,36.6,4.5026,5,398,18.7,396.06,9.1,20.3

0.04113,25,4.86,0,0.426,6.727,33.5,5.4007,4,281,19,396.9,5.29,28

0.04462,25,4.86,0,0.426,6.619,70.4,5.4007,4,281,19,395.63,7.22,23.9

0.03659,25,4.86,0,0.426,6.302,32.2,5.4007,4,281,19,396.9,6.72,24.8

0.03551,25,4.86,0,0.426,6.167,46.7,5.4007,4,281,19,390.64,7.51,22.9

0.05059,0,4.49,0,0.449,6.389,48,4.7794,3,247,18.5,396.9,9.62,23.9

0.05735,0,4.49,0,0.449,6.63,56.1,4.4377,3,247,18.5,392.3,6.53,26.6

0.05188,0,4.49,0,0.449,6.015,45.1,4.4272,3,247,18.5,395.99,12.86,22.5

0.07151,0,4.49,0,0.449,6.121,56.8,3.7476,3,247,18.5,395.15,8.44,22.2

0.0566,0,3.41,0,0.489,7.007,86.3,3.4217,2,270,17.8,396.9,5.5,23.6

0.05302,0,3.41,0,0.489,7.079,63.1,3.4145,2,270,17.8,396.06,5.7,28.7

0.04684,0,3.41,0,0.489,6.417,66.1,3.0923,2,270,17.8,392.18,8.81,22.6

0.03932,0,3.41,0,0.489,6.405,73.9,3.0921,2,270,17.8,393.55,8.2,22

0.04203,28,15.04,0,0.464,6.442,53.6,3.6659,4,270,18.2,395.01,8.16,22.9

0.02875,28,15.04,0,0.464,6.211,28.9,3.6659,4,270,18.2,396.33,6.21,25

0.04294,28,15.04,0,0.464,6.249,77.3,3.615,4,270,18.2,396.9,10.59,20.6

0.12204,0,2.89,0,0.445,6.625,57.8,3.4952,2,276,18,357.98,6.65,28.4

0.11504,0,2.89,0,0.445,6.163,69.6,3.4952,2,276,18,391.83,11.34,21.4

0.12083,0,2.89,0,0.445,8.069,76,3.4952,2,276,18,396.9,4.21,38.7

0.08187,0,2.89,0,0.445,7.82,36.9,3.4952,2,276,18,393.53,3.57,43.8

0.0686,0,2.89,0,0.445,7.416,62.5,3.4952,2,276,18,396.9,6.19,33.2

0.14866,0,8.56,0,0.52,6.727,79.9,2.7778,5,384,20.9,394.76,9.42,27.5

0.11432,0,8.56,0,0.52,6.781,71.3,2.8561,5,384,20.9,395.58,7.67,26.5

0.22876,0,8.56,0,0.52,6.405,85.4,2.7147,5,384,20.9,70.8,10.63,18.6

0.21161,0,8.56,0,0.52,6.137,87.4,2.7147,5,384,20.9,394.47,13.44,19.3

0.1396,0,8.56,0,0.52,6.167,90,2.421,5,384,20.9,392.69,12.33,20.1

0.13262,0,8.56,0,0.52,5.851,96.7,2.1069,5,384,20.9,394.05,16.47,19.5

0.1712,0,8.56,0,0.52,5.836,91.9,2.211,5,384,20.9,395.67,18.66,19.5

0.13117,0,8.56,0,0.52,6.127,85.2,2.1224,5,384,20.9,387.69,14.09,20.4

0.12802,0,8.56,0,0.52,6.474,97.1,2.4329,5,384,20.9,395.24,12.27,19.8

0.26363,0,8.56,0,0.52,6.229,91.2,2.5451,5,384,20.9,391.23,15.55,19.4

0.10793,0,8.56,0,0.52,6.195,54.4,2.7778,5,384,20.9,393.49,13,21.7

0.10084,0,10.01,0,0.547,6.715,81.6,2.6775,6,432,17.8,395.59,10.16,22.8

0.12329,0,10.01,0,0.547,5.913,92.9,2.3534,6,432,17.8,394.95,16.21,18.8

0.22212,0,10.01,0,0.547,6.092,95.4,2.548,6,432,17.8,396.9,17.09,18.7

0.14231,0,10.01,0,0.547,6.254,84.2,2.2565,6,432,17.8,388.74,10.45,18.5

0.17134,0,10.01,0,0.547,5.928,88.2,2.4631,6,432,17.8,344.91,15.76,18.3

0.13158,0,10.01,0,0.547,6.176,72.5,2.7301,6,432,17.8,393.3,12.04,21.2

0.15098,0,10.01,0,0.547,6.021,82.6,2.7474,6,432,17.8,394.51,10.3,19.2

0.13058,0,10.01,0,0.547,5.872,73.1,2.4775,6,432,17.8,338.63,15.37,20.4

0.14476,0,10.01,0,0.547,5.731,65.2,2.7592,6,432,17.8,391.5,13.61,19.3

0.06899,0,25.65,0,0.581,5.87,69.7,2.2577,2,188,19.1,389.15,14.37,22

0.07165,0,25.65,0,0.581,6.004,84.1,2.1974,2,188,19.1,377.67,14.27,20.3

0.09299,0,25.65,0,0.581,5.961,92.9,2.0869,2,188,19.1,378.09,17.93,20.5

0.15038,0,25.65,0,0.581,5.856,97,1.9444,2,188,19.1,370.31,25.41,17.3

0.09849,0,25.65,0,0.581,5.879,95.8,2.0063,2,188,19.1,379.38,17.58,18.8

0.16902,0,25.65,0,0.581,5.986,88.4,1.9929,2,188,19.1,385.02,14.81,21.4

0.38735,0,25.65,0,0.581,5.613,95.6,1.7572,2,188,19.1,359.29,27.26,15.7

0.25915,0,21.89,0,0.624,5.693,96,1.7883,4,437,21.2,392.11,17.19,16.2

0.32543,0,21.89,0,0.624,6.431,98.8,1.8125,4,437,21.2,396.9,15.39,18

0.88125,0,21.89,0,0.624,5.637,94.7,1.9799,4,437,21.2,396.9,18.34,14.3

0.34006,0,21.89,0,0.624,6.458,98.9,2.1185,4,437,21.2,395.04,12.6,19.2

1.19294,0,21.89,0,0.624,6.326,97.7,2.271,4,437,21.2,396.9,12.26,19.6

0.59005,0,21.89,0,0.624,6.372,97.9,2.3274,4,437,21.2,385.76,11.12,23

0.32982,0,21.89,0,0.624,5.822,95.4,2.4699,4,437,21.2,388.69,15.03,18.4

0.97617,0,21.89,0,0.624,5.757,98.4,2.346,4,437,21.2,262.76,17.31,15.6

0.55778,0,21.89,0,0.624,6.335,98.2,2.1107,4,437,21.2,394.67,16.96,18.1

0.32264,0,21.89,0,0.624,5.942,93.5,1.9669,4,437,21.2,378.25,16.9,17.4

0.35233,0,21.89,0,0.624,6.454,98.4,1.8498,4,437,21.2,394.08,14.59,17.1

0.2498,0,21.89,0,0.624,5.857,98.2,1.6686,4,437,21.2,392.04,21.32,13.3

0.54452,0,21.89,0,0.624,6.151,97.9,1.6687,4,437,21.2,396.9,18.46,17.8

0.2909,0,21.89,0,0.624,6.174,93.6,1.6119,4,437,21.2,388.08,24.16,14

1.62864,0,21.89,0,0.624,5.019,100,1.4394,4,437,21.2,396.9,34.41,14.4

3.32105,0,19.58,1,0.871,5.403,100,1.3216,5,403,14.7,396.9,26.82,13.4

4.0974,0,19.58,0,0.871,5.468,100,1.4118,5,403,14.7,396.9,26.42,15.6

2.77974,0,19.58,0,0.871,4.903,97.8,1.3459,5,403,14.7,396.9,29.29,11.8

2.37934,0,19.58,0,0.871,6.13,100,1.4191,5,403,14.7,172.91,27.8,13.8

2.15505,0,19.58,0,0.871,5.628,100,1.5166,5,403,14.7,169.27,16.65,15.6

2.36862,0,19.58,0,0.871,4.926,95.7,1.4608,5,403,14.7,391.71,29.53,14.6

2.33099,0,19.58,0,0.871,5.186,93.8,1.5296,5,403,14.7,356.99,28.32,17.8

2.73397,0,19.58,0,0.871,5.597,94.9,1.5257,5,403,14.7,351.85,21.45,15.4

1.6566,0,19.58,0,0.871,6.122,97.3,1.618,5,403,14.7,372.8,14.1,21.5

1.49632,0,19.58,0,0.871,5.404,100,1.5916,5,403,14.7,341.6,13.28,19.6

1.12658,0,19.58,1,0.871,5.012,88,1.6102,5,403,14.7,343.28,12.12,15.3

2.14918,0,19.58,0,0.871,5.709,98.5,1.6232,5,403,14.7,261.95,15.79,19.4

1.41385,0,19.58,1,0.871,6.129,96,1.7494,5,403,14.7,321.02,15.12,17

3.53501,0,19.58,1,0.871,6.152,82.6,1.7455,5,403,14.7,88.01,15.02,15.6

2.44668,0,19.58,0,0.871,5.272,94,1.7364,5,403,14.7,88.63,16.14,13.1

1.22358,0,19.58,0,0.605,6.943,97.4,1.8773,5,403,14.7,363.43,4.59,41.3

1.34284,0,19.58,0,0.605,6.066,100,1.7573,5,403,14.7,353.89,6.43,24.3

1.42502,0,19.58,0,0.871,6.51,100,1.7659,5,403,14.7,364.31,7.39,23.3

1.27346,0,19.58,1,0.605,6.25,92.6,1.7984,5,403,14.7,338.92,5.5,27

1.46336,0,19.58,0,0.605,7.489,90.8,1.9709,5,403,14.7,374.43,1.73,50

1.83377,0,19.58,1,0.605,7.802,98.2,2.0407,5,403,14.7,389.61,1.92,50

1.51902,0,19.58,1,0.605,8.375,93.9,2.162,5,403,14.7,388.45,3.32,50

2.24236,0,19.58,0,0.605,5.854,91.8,2.422,5,403,14.7,395.11,11.64,22.7

2.924,0,19.58,0,0.605,6.101,93,2.2834,5,403,14.7,240.16,9.81,25

2.01019,0,19.58,0,0.605,7.929,96.2,2.0459,5,403,14.7,369.3,3.7,50

1.80028,0,19.58,0,0.605,5.877,79.2,2.4259,5,403,14.7,227.61,12.14,23.8

2.3004,0,19.58,0,0.605,6.319,96.1,2.1,5,403,14.7,297.09,11.1,23.8

2.44953,0,19.58,0,0.605,6.402,95.2,2.2625,5,403,14.7,330.04,11.32,22.3

1.20742,0,19.58,0,0.605,5.875,94.6,2.4259,5,403,14.7,292.29,14.43,17.4

2.3139,0,19.58,0,0.605,5.88,97.3,2.3887,5,403,14.7,348.13,12.03,19.1

0.13914,0,4.05,0,0.51,5.572,88.5,2.5961,5,296,16.6,396.9,14.69,23.1

0.09178,0,4.05,0,0.51,6.416,84.1,2.6463,5,296,16.6,395.5,9.04,23.6

0.08447,0,4.05,0,0.51,5.859,68.7,2.7019,5,296,16.6,393.23,9.64,22.6

0.06664,0,4.05,0,0.51,6.546,33.1,3.1323,5,296,16.6,390.96,5.33,29.4

0.07022,0,4.05,0,0.51,6.02,47.2,3.5549,5,296,16.6,393.23,10.11,23.2

0.05425,0,4.05,0,0.51,6.315,73.4,3.3175,5,296,16.6,395.6,6.29,24.6

0.06642,0,4.05,0,0.51,6.86,74.4,2.9153,5,296,16.6,391.27,6.92,29.9

0.0578,0,2.46,0,0.488,6.98,58.4,2.829,3,193,17.8,396.9,5.04,37.2

0.06588,0,2.46,0,0.488,7.765,83.3,2.741,3,193,17.8,395.56,7.56,39.8

0.06888,0,2.46,0,0.488,6.144,62.2,2.5979,3,193,17.8,396.9,9.45,36.2

0.09103,0,2.46,0,0.488,7.155,92.2,2.7006,3,193,17.8,394.12,4.82,37.9

0.10008,0,2.46,0,0.488,6.563,95.6,2.847,3,193,17.8,396.9,5.68,32.5

0.08308,0,2.46,0,0.488,5.604,89.8,2.9879,3,193,17.8,391,13.98,26.4

0.06047,0,2.46,0,0.488,6.153,68.8,3.2797,3,193,17.8,387.11,13.15,29.6

0.05602,0,2.46,0,0.488,7.831,53.6,3.1992,3,193,17.8,392.63,4.45,50

0.07875,45,3.44,0,0.437,6.782,41.1,3.7886,5,398,15.2,393.87,6.68,32

0.12579,45,3.44,0,0.437,6.556,29.1,4.5667,5,398,15.2,382.84,4.56,29.8

0.0837,45,3.44,0,0.437,7.185,38.9,4.5667,5,398,15.2,396.9,5.39,34.9

0.09068,45,3.44,0,0.437,6.951,21.5,6.4798,5,398,15.2,377.68,5.1,37

0.06911,45,3.44,0,0.437,6.739,30.8,6.4798,5,398,15.2,389.71,4.69,30.5

0.08664,45,3.44,0,0.437,7.178,26.3,6.4798,5,398,15.2,390.49,2.87,36.4

0.02187,60,2.93,0,0.401,6.8,9.9,6.2196,1,265,15.6,393.37,5.03,31.1

0.01439,60,2.93,0,0.401,6.604,18.8,6.2196,1,265,15.6,376.7,4.38,29.1

0.01381,80,0.46,0,0.422,7.875,32,5.6484,4,255,14.4,394.23,2.97,50

0.04011,80,1.52,0,0.404,7.287,34.1,7.309,2,329,12.6,396.9,4.08,33.3

0.04666,80,1.52,0,0.404,7.107,36.6,7.309,2,329,12.6,354.31,8.61,30.3

0.03768,80,1.52,0,0.404,7.274,38.3,7.309,2,329,12.6,392.2,6.62,34.6

0.0315,95,1.47,0,0.403,6.975,15.3,7.6534,3,402,17,396.9,4.56,34.9

0.01778,95,1.47,0,0.403,7.135,13.9,7.6534,3,402,17,384.3,4.45,32.9

0.03445,82.5,2.03,0,0.415,6.162,38.4,6.27,2,348,14.7,393.77,7.43,24.1

0.02177,82.5,2.03,0,0.415,7.61,15.7,6.27,2,348,14.7,395.38,3.11,42.3

0.0351,95,2.68,0,0.4161,7.853,33.2,5.118,4,224,14.7,392.78,3.81,48.5

0.02009,95,2.68,0,0.4161,8.034,31.9,5.118,4,224,14.7,390.55,2.88,50

0.13642,0,10.59,0,0.489,5.891,22.3,3.9454,4,277,18.6,396.9,10.87,22.6

0.22969,0,10.59,0,0.489,6.326,52.5,4.3549,4,277,18.6,394.87,10.97,24.4

0.25199,0,10.59,0,0.489,5.783,72.7,4.3549,4,277,18.6,389.43,18.06,22.5

0.13587,0,10.59,1,0.489,6.064,59.1,4.2392,4,277,18.6,381.32,14.66,24.4

0.43571,0,10.59,1,0.489,5.344,100,3.875,4,277,18.6,396.9,23.09,20

0.17446,0,10.59,1,0.489,5.96,92.1,3.8771,4,277,18.6,393.25,17.27,21.7

0.37578,0,10.59,1,0.489,5.404,88.6,3.665,4,277,18.6,395.24,23.98,19.3

0.21719,0,10.59,1,0.489,5.807,53.8,3.6526,4,277,18.6,390.94,16.03,22.4

0.14052,0,10.59,0,0.489,6.375,32.3,3.9454,4,277,18.6,385.81,9.38,28.1

0.28955,0,10.59,0,0.489,5.412,9.8,3.5875,4,277,18.6,348.93,29.55,23.7

0.19802,0,10.59,0,0.489,6.182,42.4,3.9454,4,277,18.6,393.63,9.47,25

0.0456,0,13.89,1,0.55,5.888,56,3.1121,5,276,16.4,392.8,13.51,23.3

0.07013,0,13.89,0,0.55,6.642,85.1,3.4211,5,276,16.4,392.78,9.69,28.7

0.11069,0,13.89,1,0.55,5.951,93.8,2.8893,5,276,16.4,396.9,17.92,21.5

0.11425,0,13.89,1,0.55,6.373,92.4,3.3633,5,276,16.4,393.74,10.5,23

0.35809,0,6.2,1,0.507,6.951,88.5,2.8617,8,307,17.4,391.7,9.71,26.7

0.40771,0,6.2,1,0.507,6.164,91.3,3.048,8,307,17.4,395.24,21.46,21.7

0.62356,0,6.2,1,0.507,6.879,77.7,3.2721,8,307,17.4,390.39,9.93,27.5

0.6147,0,6.2,0,0.507,6.618,80.8,3.2721,8,307,17.4,396.9,7.6,30.1

0.31533,0,6.2,0,0.504,8.266,78.3,2.8944,8,307,17.4,385.05,4.14,44.8

0.52693,0,6.2,0,0.504,8.725,83,2.8944,8,307,17.4,382,4.63,50

0.38214,0,6.2,0,0.504,8.04,86.5,3.2157,8,307,17.4,387.38,3.13,37.6

0.41238,0,6.2,0,0.504,7.163,79.9,3.2157,8,307,17.4,372.08,6.36,31.6

0.29819,0,6.2,0,0.504,7.686,17,3.3751,8,307,17.4,377.51,3.92,46.7

0.44178,0,6.2,0,0.504,6.552,21.4,3.3751,8,307,17.4,380.34,3.76,31.5

0.537,0,6.2,0,0.504,5.981,68.1,3.6715,8,307,17.4,378.35,11.65,24.3

0.46296,0,6.2,0,0.504,7.412,76.9,3.6715,8,307,17.4,376.14,5.25,31.7

0.57529,0,6.2,0,0.507,8.337,73.3,3.8384,8,307,17.4,385.91,2.47,41.7

0.33147,0,6.2,0,0.507,8.247,70.4,3.6519,8,307,17.4,378.95,3.95,48.3

0.44791,0,6.2,1,0.507,6.726,66.5,3.6519,8,307,17.4,360.2,8.05,29

0.33045,0,6.2,0,0.507,6.086,61.5,3.6519,8,307,17.4,376.75,10.88,24

0.52058,0,6.2,1,0.507,6.631,76.5,4.148,8,307,17.4,388.45,9.54,25.1

0.51183,0,6.2,0,0.507,7.358,71.6,4.148,8,307,17.4,390.07,4.73,31.5

0.08244,30,4.93,0,0.428,6.481,18.5,6.1899,6,300,16.6,379.41,6.36,23.7

0.09252,30,4.93,0,0.428,6.606,42.2,6.1899,6,300,16.6,383.78,7.37,23.3

0.11329,30,4.93,0,0.428,6.897,54.3,6.3361,6,300,16.6,391.25,11.38,22

0.10612,30,4.93,0,0.428,6.095,65.1,6.3361,6,300,16.6,394.62,12.4,20.1

0.1029,30,4.93,0,0.428,6.358,52.9,7.0355,6,300,16.6,372.75,11.22,22.2

0.12757,30,4.93,0,0.428,6.393,7.8,7.0355,6,300,16.6,374.71,5.19,23.7

0.20608,22,5.86,0,0.431,5.593,76.5,7.9549,7,330,19.1,372.49,12.5,17.6

0.19133,22,5.86,0,0.431,5.605,70.2,7.9549,7,330,19.1,389.13,18.46,18.5

0.33983,22,5.86,0,0.431,6.108,34.9,8.0555,7,330,19.1,390.18,9.16,24.3

0.19657,22,5.86,0,0.431,6.226,79.2,8.0555,7,330,19.1,376.14,10.15,20.5

0.16439,22,5.86,0,0.431,6.433,49.1,7.8265,7,330,19.1,374.71,9.52,24.5

0.19073,22,5.86,0,0.431,6.718,17.5,7.8265,7,330,19.1,393.74,6.56,26.2

0.1403,22,5.86,0,0.431,6.487,13,7.3967,7,330,19.1,396.28,5.9,24.4

0.21409,22,5.86,0,0.431,6.438,8.9,7.3967,7,330,19.1,377.07,3.59,24.8

0.08221,22,5.86,0,0.431,6.957,6.8,8.9067,7,330,19.1,386.09,3.53,29.6

0.36894,22,5.86,0,0.431,8.259,8.4,8.9067,7,330,19.1,396.9,3.54,42.8

0.04819,80,3.64,0,0.392,6.108,32,9.2203,1,315,16.4,392.89,6.57,21.9

0.03548,80,3.64,0,0.392,5.876,19.1,9.2203,1,315,16.4,395.18,9.25,20.9

0.01538,90,3.75,0,0.394,7.454,34.2,6.3361,3,244,15.9,386.34,3.11,44

0.61154,20,3.97,0,0.647,8.704,86.9,1.801,5,264,13,389.7,5.12,50

0.66351,20,3.97,0,0.647,7.333,100,1.8946,5,264,13,383.29,7.79,36

0.65665,20,3.97,0,0.647,6.842,100,2.0107,5,264,13,391.93,6.9,30.1

0.54011,20,3.97,0,0.647,7.203,81.8,2.1121,5,264,13,392.8,9.59,33.8

0.53412,20,3.97,0,0.647,7.52,89.4,2.1398,5,264,13,388.37,7.26,43.1

0.52014,20,3.97,0,0.647,8.398,91.5,2.2885,5,264,13,386.86,5.91,48.8

0.82526,20,3.97,0,0.647,7.327,94.5,2.0788,5,264,13,393.42,11.25,31

0.55007,20,3.97,0,0.647,7.206,91.6,1.9301,5,264,13,387.89,8.1,36.5

0.76162,20,3.97,0,0.647,5.56,62.8,1.9865,5,264,13,392.4,10.45,22.8

0.7857,20,3.97,0,0.647,7.014,84.6,2.1329,5,264,13,384.07,14.79,30.7

0.57834,20,3.97,0,0.575,8.297,67,2.4216,5,264,13,384.54,7.44,50

0.5405,20,3.97,0,0.575,7.47,52.6,2.872,5,264,13,390.3,3.16,43.5

0.09065,20,6.96,1,0.464,5.92,61.5,3.9175,3,223,18.6,391.34,13.65,20.7

0.29916,20,6.96,0,0.464,5.856,42.1,4.429,3,223,18.6,388.65,13,21.1

0.16211,20,6.96,0,0.464,6.24,16.3,4.429,3,223,18.6,396.9,6.59,25.2

0.1146,20,6.96,0,0.464,6.538,58.7,3.9175,3,223,18.6,394.96,7.73,24.4

0.22188,20,6.96,1,0.464,7.691,51.8,4.3665,3,223,18.6,390.77,6.58,35.2

0.05644,40,6.41,1,0.447,6.758,32.9,4.0776,4,254,17.6,396.9,3.53,32.4

0.09604,40,6.41,0,0.447,6.854,42.8,4.2673,4,254,17.6,396.9,2.98,32

0.10469,40,6.41,1,0.447,7.267,49,4.7872,4,254,17.6,389.25,6.05,33.2

0.06127,40,6.41,1,0.447,6.826,27.6,4.8628,4,254,17.6,393.45,4.16,33.1

0.07978,40,6.41,0,0.447,6.482,32.1,4.1403,4,254,17.6,396.9,7.19,29.1

0.21038,20,3.33,0,0.4429,6.812,32.2,4.1007,5,216,14.9,396.9,4.85,35.1

0.03578,20,3.33,0,0.4429,7.82,64.5,4.6947,5,216,14.9,387.31,3.76,45.4

0.03705,20,3.33,0,0.4429,6.968,37.2,5.2447,5,216,14.9,392.23,4.59,35.4

0.06129,20,3.33,1,0.4429,7.645,49.7,5.2119,5,216,14.9,377.07,3.01,46

0.01501,90,1.21,1,0.401,7.923,24.8,5.885,1,198,13.6,395.52,3.16,50

0.00906,90,2.97,0,0.4,7.088,20.8,7.3073,1,285,15.3,394.72,7.85,32.2

0.01096,55,2.25,0,0.389,6.453,31.9,7.3073,1,300,15.3,394.72,8.23,22

0.01965,80,1.76,0,0.385,6.23,31.5,9.0892,1,241,18.2,341.6,12.93,20.1

0.03871,52.5,5.32,0,0.405,6.209,31.3,7.3172,6,293,16.6,396.9,7.14,23.2

0.0459,52.5,5.32,0,0.405,6.315,45.6,7.3172,6,293,16.6,396.9,7.6,22.3

0.04297,52.5,5.32,0,0.405,6.565,22.9,7.3172,6,293,16.6,371.72,9.51,24.8

0.03502,80,4.95,0,0.411,6.861,27.9,5.1167,4,245,19.2,396.9,3.33,28.5

0.07886,80,4.95,0,0.411,7.148,27.7,5.1167,4,245,19.2,396.9,3.56,37.3

0.03615,80,4.95,0,0.411,6.63,23.4,5.1167,4,245,19.2,396.9,4.7,27.9

0.08265,0,13.92,0,0.437,6.127,18.4,5.5027,4,289,16,396.9,8.58,23.9

0.08199,0,13.92,0,0.437,6.009,42.3,5.5027,4,289,16,396.9,10.4,21.7

0.12932,0,13.92,0,0.437,6.678,31.1,5.9604,4,289,16,396.9,6.27,28.6

0.05372,0,13.92,0,0.437,6.549,51,5.9604,4,289,16,392.85,7.39,27.1

0.14103,0,13.92,0,0.437,5.79,58,6.32,4,289,16,396.9,15.84,20.3

0.06466,70,2.24,0,0.4,6.345,20.1,7.8278,5,358,14.8,368.24,4.97,22.5

0.05561,70,2.24,0,0.4,7.041,10,7.8278,5,358,14.8,371.58,4.74,29

0.04417,70,2.24,0,0.4,6.871,47.4,7.8278,5,358,14.8,390.86,6.07,24.8

0.03537,34,6.09,0,0.433,6.59,40.4,5.4917,7,329,16.1,395.75,9.5,22

0.09266,34,6.09,0,0.433,6.495,18.4,5.4917,7,329,16.1,383.61,8.67,26.4

0.1,34,6.09,0,0.433,6.982,17.7,5.4917,7,329,16.1,390.43,4.86,33.1

0.05515,33,2.18,0,0.472,7.236,41.1,4.022,7,222,18.4,393.68,6.93,36.1

0.05479,33,2.18,0,0.472,6.616,58.1,3.37,7,222,18.4,393.36,8.93,28.4

0.07503,33,2.18,0,0.472,7.42,71.9,3.0992,7,222,18.4,396.9,6.47,33.4

0.04932,33,2.18,0,0.472,6.849,70.3,3.1827,7,222,18.4,396.9,7.53,28.2

0.49298,0,9.9,0,0.544,6.635,82.5,3.3175,4,304,18.4,396.9,4.54,22.8

0.3494,0,9.9,0,0.544,5.972,76.7,3.1025,4,304,18.4,396.24,9.97,20.3

2.63548,0,9.9,0,0.544,4.973,37.8,2.5194,4,304,18.4,350.45,12.64,16.1

0.79041,0,9.9,0,0.544,6.122,52.8,2.6403,4,304,18.4,396.9,5.98,22.1

0.26169,0,9.9,0,0.544,6.023,90.4,2.834,4,304,18.4,396.3,11.72,19.4

0.26938,0,9.9,0,0.544,6.266,82.8,3.2628,4,304,18.4,393.39,7.9,21.6

0.3692,0,9.9,0,0.544,6.567,87.3,3.6023,4,304,18.4,395.69,9.28,23.8

0.25356,0,9.9,0,0.544,5.705,77.7,3.945,4,304,18.4,396.42,11.5,16.2

0.31827,0,9.9,0,0.544,5.914,83.2,3.9986,4,304,18.4,390.7,18.33,17.8

0.24522,0,9.9,0,0.544,5.782,71.7,4.0317,4,304,18.4,396.9,15.94,19.8

0.40202,0,9.9,0,0.544,6.382,67.2,3.5325,4,304,18.4,395.21,10.36,23.1

0.47547,0,9.9,0,0.544,6.113,58.8,4.0019,4,304,18.4,396.23,12.73,21

0.1676,0,7.38,0,0.493,6.426,52.3,4.5404,5,287,19.6,396.9,7.2,23.8

0.18159,0,7.38,0,0.493,6.376,54.3,4.5404,5,287,19.6,396.9,6.87,23.1

0.35114,0,7.38,0,0.493,6.041,49.9,4.7211,5,287,19.6,396.9,7.7,20.4

0.28392,0,7.38,0,0.493,5.708,74.3,4.7211,5,287,19.6,391.13,11.74,18.5

0.34109,0,7.38,0,0.493,6.415,40.1,4.7211,5,287,19.6,396.9,6.12,25

0.19186,0,7.38,0,0.493,6.431,14.7,5.4159,5,287,19.6,393.68,5.08,24.6

0.30347,0,7.38,0,0.493,6.312,28.9,5.4159,5,287,19.6,396.9,6.15,23

0.24103,0,7.38,0,0.493,6.083,43.7,5.4159,5,287,19.6,396.9,12.79,22.2

0.06617,0,3.24,0,0.46,5.868,25.8,5.2146,4,430,16.9,382.44,9.97,19.3

0.06724,0,3.24,0,0.46,6.333,17.2,5.2146,4,430,16.9,375.21,7.34,22.6

0.04544,0,3.24,0,0.46,6.144,32.2,5.8736,4,430,16.9,368.57,9.09,19.8

0.05023,35,6.06,0,0.4379,5.706,28.4,6.6407,1,304,16.9,394.02,12.43,17.1

0.03466,35,6.06,0,0.4379,6.031,23.3,6.6407,1,304,16.9,362.25,7.83,19.4

0.05083,0,5.19,0,0.515,6.316,38.1,6.4584,5,224,20.2,389.71,5.68,22.2

0.03738,0,5.19,0,0.515,6.31,38.5,6.4584,5,224,20.2,389.4,6.75,20.7

0.03961,0,5.19,0,0.515,6.037,34.5,5.9853,5,224,20.2,396.9,8.01,21.1

0.03427,0,5.19,0,0.515,5.869,46.3,5.2311,5,224,20.2,396.9,9.8,19.5

0.03041,0,5.19,0,0.515,5.895,59.6,5.615,5,224,20.2,394.81,10.56,18.5

0.03306,0,5.19,0,0.515,6.059,37.3,4.8122,5,224,20.2,396.14,8.51,20.6

0.05497,0,5.19,0,0.515,5.985,45.4,4.8122,5,224,20.2,396.9,9.74,19

0.06151,0,5.19,0,0.515,5.968,58.5,4.8122,5,224,20.2,396.9,9.29,18.7

0.01301,35,1.52,0,0.442,7.241,49.3,7.0379,1,284,15.5,394.74,5.49,32.7

0.02498,0,1.89,0,0.518,6.54,59.7,6.2669,1,422,15.9,389.96,8.65,16.5

0.02543,55,3.78,0,0.484,6.696,56.4,5.7321,5,370,17.6,396.9,7.18,23.9

0.03049,55,3.78,0,0.484,6.874,28.1,6.4654,5,370,17.6,387.97,4.61,31.2

0.03113,0,4.39,0,0.442,6.014,48.5,8.0136,3,352,18.8,385.64,10.53,17.5

0.06162,0,4.39,0,0.442,5.898,52.3,8.0136,3,352,18.8,364.61,12.67,17.2

0.0187,85,4.15,0,0.429,6.516,27.7,8.5353,4,351,17.9,392.43,6.36,23.1

0.01501,80,2.01,0,0.435,6.635,29.7,8.344,4,280,17,390.94,5.99,24.5

0.02899,40,1.25,0,0.429,6.939,34.5,8.7921,1,335,19.7,389.85,5.89,26.6

0.06211,40,1.25,0,0.429,6.49,44.4,8.7921,1,335,19.7,396.9,5.98,22.9

0.0795,60,1.69,0,0.411,6.579,35.9,10.7103,4,411,18.3,370.78,5.49,24.1

0.07244,60,1.69,0,0.411,5.884,18.5,10.7103,4,411,18.3,392.33,7.79,18.6

0.01709,90,2.02,0,0.41,6.728,36.1,12.1265,5,187,17,384.46,4.5,30.1

0.04301,80,1.91,0,0.413,5.663,21.9,10.5857,4,334,22,382.8,8.05,18.2

0.10659,80,1.91,0,0.413,5.936,19.5,10.5857,4,334,22,376.04,5.57,20.6

8.98296,0,18.1,1,0.77,6.212,97.4,2.1222,24,666,20.2,377.73,17.6,17.8

3.8497,0,18.1,1,0.77,6.395,91,2.5052,24,666,20.2,391.34,13.27,21.7

5.20177,0,18.1,1,0.77,6.127,83.4,2.7227,24,666,20.2,395.43,11.48,22.7

4.26131,0,18.1,0,0.77,6.112,81.3,2.5091,24,666,20.2,390.74,12.67,22.6

4.54192,0,18.1,0,0.77,6.398,88,2.5182,24,666,20.2,374.56,7.79,25

3.83684,0,18.1,0,0.77,6.251,91.1,2.2955,24,666,20.2,350.65,14.19,19.9

3.67822,0,18.1,0,0.77,5.362,96.2,2.1036,24,666,20.2,380.79,10.19,20.8

4.22239,0,18.1,1,0.77,5.803,89,1.9047,24,666,20.2,353.04,14.64,16.8

3.47428,0,18.1,1,0.718,8.78,82.9,1.9047,24,666,20.2,354.55,5.29,21.9

4.55587,0,18.1,0,0.718,3.561,87.9,1.6132,24,666,20.2,354.7,7.12,27.5

3.69695,0,18.1,0,0.718,4.963,91.4,1.7523,24,666,20.2,316.03,14,21.9

13.5222,0,18.1,0,0.631,3.863,100,1.5106,24,666,20.2,131.42,13.33,23.1

4.89822,0,18.1,0,0.631,4.97,100,1.3325,24,666,20.2,375.52,3.26,50

5.66998,0,18.1,1,0.631,6.683,96.8,1.3567,24,666,20.2,375.33,3.73,50

6.53876,0,18.1,1,0.631,7.016,97.5,1.2024,24,666,20.2,392.05,2.96,50

9.2323,0,18.1,0,0.631,6.216,100,1.1691,24,666,20.2,366.15,9.53,50

8.26725,0,18.1,1,0.668,5.875,89.6,1.1296,24,666,20.2,347.88,8.88,50

11.1081,0,18.1,0,0.668,4.906,100,1.1742,24,666,20.2,396.9,34.77,13.8

18.4982,0,18.1,0,0.668,4.138,100,1.137,24,666,20.2,396.9,37.97,13.8

19.6091,0,18.1,0,0.671,7.313,97.9,1.3163,24,666,20.2,396.9,13.44,15

15.288,0,18.1,0,0.671,6.649,93.3,1.3449,24,666,20.2,363.02,23.24,13.9

9.82349,0,18.1,0,0.671,6.794,98.8,1.358,24,666,20.2,396.9,21.24,13.3

23.6482,0,18.1,0,0.671,6.38,96.2,1.3861,24,666,20.2,396.9,23.69,13.1

17.8667,0,18.1,0,0.671,6.223,100,1.3861,24,666,20.2,393.74,21.78,10.2

88.9762,0,18.1,0,0.671,6.968,91.9,1.4165,24,666,20.2,396.9,17.21,10.4

15.8744,0,18.1,0,0.671,6.545,99.1,1.5192,24,666,20.2,396.9,21.08,10.9

9.18702,0,18.1,0,0.7,5.536,100,1.5804,24,666,20.2,396.9,23.6,11.3

7.99248,0,18.1,0,0.7,5.52,100,1.5331,24,666,20.2,396.9,24.56,12.3

20.0849,0,18.1,0,0.7,4.368,91.2,1.4395,24,666,20.2,285.83,30.63,8.8

16.8118,0,18.1,0,0.7,5.277,98.1,1.4261,24,666,20.2,396.9,30.81,7.2

24.3938,0,18.1,0,0.7,4.652,100,1.4672,24,666,20.2,396.9,28.28,10.5

22.5971,0,18.1,0,0.7,5,89.5,1.5184,24,666,20.2,396.9,31.99,7.4

14.3337,0,18.1,0,0.7,4.88,100,1.5895,24,666,20.2,372.92,30.62,10.2

8.15174,0,18.1,0,0.7,5.39,98.9,1.7281,24,666,20.2,396.9,20.85,11.5

6.96215,0,18.1,0,0.7,5.713,97,1.9265,24,666,20.2,394.43,17.11,15.1

5.29305,0,18.1,0,0.7,6.051,82.5,2.1678,24,666,20.2,378.38,18.76,23.2

11.5779,0,18.1,0,0.7,5.036,97,1.77,24,666,20.2,396.9,25.68,9.7

8.64476,0,18.1,0,0.693,6.193,92.6,1.7912,24,666,20.2,396.9,15.17,13.8

13.3598,0,18.1,0,0.693,5.887,94.7,1.7821,24,666,20.2,396.9,16.35,12.7

8.71675,0,18.1,0,0.693,6.471,98.8,1.7257,24,666,20.2,391.98,17.12,13.1

5.87205,0,18.1,0,0.693,6.405,96,1.6768,24,666,20.2,396.9,19.37,12.5

7.67202,0,18.1,0,0.693,5.747,98.9,1.6334,24,666,20.2,393.1,19.92,8.5

38.3518,0,18.1,0,0.693,5.453,100,1.4896,24,666,20.2,396.9,30.59,5

9.91655,0,18.1,0,0.693,5.852,77.8,1.5004,24,666,20.2,338.16,29.97,6.3

25.0461,0,18.1,0,0.693,5.987,100,1.5888,24,666,20.2,396.9,26.77,5.6

14.2362,0,18.1,0,0.693,6.343,100,1.5741,24,666,20.2,396.9,20.32,7.2

9.59571,0,18.1,0,0.693,6.404,100,1.639,24,666,20.2,376.11,20.31,12.1

24.8017,0,18.1,0,0.693,5.349,96,1.7028,24,666,20.2,396.9,19.77,8.3

41.5292,0,18.1,0,0.693,5.531,85.4,1.6074,24,666,20.2,329.46,27.38,8.5

67.9208,0,18.1,0,0.693,5.683,100,1.4254,24,666,20.2,384.97,22.98,5

20.7162,0,18.1,0,0.659,4.138,100,1.1781,24,666,20.2,370.22,23.34,11.9

11.9511,0,18.1,0,0.659,5.608,100,1.2852,24,666,20.2,332.09,12.13,27.9

7.40389,0,18.1,0,0.597,5.617,97.9,1.4547,24,666,20.2,314.64,26.4,17.2

14.4383,0,18.1,0,0.597,6.852,100,1.4655,24,666,20.2,179.36,19.78,27.5

51.1358,0,18.1,0,0.597,5.757,100,1.413,24,666,20.2,2.6,10.11,15

14.0507,0,18.1,0,0.597,6.657,100,1.5275,24,666,20.2,35.05,21.22,17.2

18.811,0,18.1,0,0.597,4.628,100,1.5539,24,666,20.2,28.79,34.37,17.9

28.6558,0,18.1,0,0.597,5.155,100,1.5894,24,666,20.2,210.97,20.08,16.3

45.7461,0,18.1,0,0.693,4.519,100,1.6582,24,666,20.2,88.27,36.98,7

18.0846,0,18.1,0,0.679,6.434,100,1.8347,24,666,20.2,27.25,29.05,7.2

10.8342,0,18.1,0,0.679,6.782,90.8,1.8195,24,666,20.2,21.57,25.79,7.5

25.9406,0,18.1,0,0.679,5.304,89.1,1.6475,24,666,20.2,127.36,26.64,10.4

73.5341,0,18.1,0,0.679,5.957,100,1.8026,24,666,20.2,16.45,20.62,8.8

11.8123,0,18.1,0,0.718,6.824,76.5,1.794,24,666,20.2,48.45,22.74,8.4

11.0874,0,18.1,0,0.718,6.411,100,1.8589,24,666,20.2,318.75,15.02,16.7

7.02259,0,18.1,0,0.718,6.006,95.3,1.8746,24,666,20.2,319.98,15.7,14.2

12.0482,0,18.1,0,0.614,5.648,87.6,1.9512,24,666,20.2,291.55,14.1,20.8

7.05042,0,18.1,0,0.614,6.103,85.1,2.0218,24,666,20.2,2.52,23.29,13.4

8.79212,0,18.1,0,0.584,5.565,70.6,2.0635,24,666,20.2,3.65,17.16,11.7

15.8603,0,18.1,0,0.679,5.896,95.4,1.9096,24,666,20.2,7.68,24.39,8.3

12.2472,0,18.1,0,0.584,5.837,59.7,1.9976,24,666,20.2,24.65,15.69,10.2

37.6619,0,18.1,0,0.679,6.202,78.7,1.8629,24,666,20.2,18.82,14.52,10.9

7.36711,0,18.1,0,0.679,6.193,78.1,1.9356,24,666,20.2,96.73,21.52,11

9.33889,0,18.1,0,0.679,6.38,95.6,1.9682,24,666,20.2,60.72,24.08,9.5

8.49213,0,18.1,0,0.584,6.348,86.1,2.0527,24,666,20.2,83.45,17.64,14.5

10.0623,0,18.1,0,0.584,6.833,94.3,2.0882,24,666,20.2,81.33,19.69,14.1

6.44405,0,18.1,0,0.584,6.425,74.8,2.2004,24,666,20.2,97.95,12.03,16.1

5.58107,0,18.1,0,0.713,6.436,87.9,2.3158,24,666,20.2,100.19,16.22,14.3

13.9134,0,18.1,0,0.713,6.208,95,2.2222,24,666,20.2,100.63,15.17,11.7

11.1604,0,18.1,0,0.74,6.629,94.6,2.1247,24,666,20.2,109.85,23.27,13.4

14.4208,0,18.1,0,0.74,6.461,93.3,2.0026,24,666,20.2,27.49,18.05,9.6

15.1772,0,18.1,0,0.74,6.152,100,1.9142,24,666,20.2,9.32,26.45,8.7

13.6781,0,18.1,0,0.74,5.935,87.9,1.8206,24,666,20.2,68.95,34.02,8.4

9.39063,0,18.1,0,0.74,5.627,93.9,1.8172,24,666,20.2,396.9,22.88,12.8

22.0511,0,18.1,0,0.74,5.818,92.4,1.8662,24,666,20.2,391.45,22.11,10.5

9.72418,0,18.1,0,0.74,6.406,97.2,2.0651,24,666,20.2,385.96,19.52,17.1

5.66637,0,18.1,0,0.74,6.219,100,2.0048,24,666,20.2,395.69,16.59,18.4

9.96654,0,18.1,0,0.74,6.485,100,1.9784,24,666,20.2,386.73,18.85,15.4

12.8023,0,18.1,0,0.74,5.854,96.6,1.8956,24,666,20.2,240.52,23.79,10.8

10.6718,0,18.1,0,0.74,6.459,94.8,1.9879,24,666,20.2,43.06,23.98,11.8

6.28807,0,18.1,0,0.74,6.341,96.4,2.072,24,666,20.2,318.01,17.79,14.9

9.92485,0,18.1,0,0.74,6.251,96.6,2.198,24,666,20.2,388.52,16.44,12.6

9.32909,0,18.1,0,0.713,6.185,98.7,2.2616,24,666,20.2,396.9,18.13,14.1

7.52601,0,18.1,0,0.713,6.417,98.3,2.185,24,666,20.2,304.21,19.31,13

6.71772,0,18.1,0,0.713,6.749,92.6,2.3236,24,666,20.2,0.32,17.44,13.4

5.44114,0,18.1,0,0.713,6.655,98.2,2.3552,24,666,20.2,355.29,17.73,15.2

5.09017,0,18.1,0,0.713,6.297,91.8,2.3682,24,666,20.2,385.09,17.27,16.1

8.24809,0,18.1,0,0.713,7.393,99.3,2.4527,24,666,20.2,375.87,16.74,17.8

9.51363,0,18.1,0,0.713,6.728,94.1,2.4961,24,666,20.2,6.68,18.71,14.9

4.75237,0,18.1,0,0.713,6.525,86.5,2.4358,24,666,20.2,50.92,18.13,14.1

4.66883,0,18.1,0,0.713,5.976,87.9,2.5806,24,666,20.2,10.48,19.01,12.7

8.20058,0,18.1,0,0.713,5.936,80.3,2.7792,24,666,20.2,3.5,16.94,13.5

7.75223,0,18.1,0,0.713,6.301,83.7,2.7831,24,666,20.2,272.21,16.23,14.9

6.80117,0,18.1,0,0.713,6.081,84.4,2.7175,24,666,20.2,396.9,14.7,20

4.81213,0,18.1,0,0.713,6.701,90,2.5975,24,666,20.2,255.23,16.42,16.4

3.69311,0,18.1,0,0.713,6.376,88.4,2.5671,24,666,20.2,391.43,14.65,17.7

6.65492,0,18.1,0,0.713,6.317,83,2.7344,24,666,20.2,396.9,13.99,19.5

5.82115,0,18.1,0,0.713,6.513,89.9,2.8016,24,666,20.2,393.82,10.29,20.2

7.83932,0,18.1,0,0.655,6.209,65.4,2.9634,24,666,20.2,396.9,13.22,21.4

3.1636,0,18.1,0,0.655,5.759,48.2,3.0665,24,666,20.2,334.4,14.13,19.9

3.77498,0,18.1,0,0.655,5.952,84.7,2.8715,24,666,20.2,22.01,17.15,19

4.42228,0,18.1,0,0.584,6.003,94.5,2.5403,24,666,20.2,331.29,21.32,19.1

15.5757,0,18.1,0,0.58,5.926,71,2.9084,24,666,20.2,368.74,18.13,19.1

13.0751,0,18.1,0,0.58,5.713,56.7,2.8237,24,666,20.2,396.9,14.76,20.1

4.34879,0,18.1,0,0.58,6.167,84,3.0334,24,666,20.2,396.9,16.29,19.9

4.03841,0,18.1,0,0.532,6.229,90.7,3.0993,24,666,20.2,395.33,12.87,19.6

3.56868,0,18.1,0,0.58,6.437,75,2.8965,24,666,20.2,393.37,14.36,23.2

4.64689,0,18.1,0,0.614,6.98,67.6,2.5329,24,666,20.2,374.68,11.66,29.8

8.05579,0,18.1,0,0.584,5.427,95.4,2.4298,24,666,20.2,352.58,18.14,13.8

6.39312,0,18.1,0,0.584,6.162,97.4,2.206,24,666,20.2,302.76,24.1,13.3

4.87141,0,18.1,0,0.614,6.484,93.6,2.3053,24,666,20.2,396.21,18.68,16.7

15.0234,0,18.1,0,0.614,5.304,97.3,2.1007,24,666,20.2,349.48,24.91,12

10.233,0,18.1,0,0.614,6.185,96.7,2.1705,24,666,20.2,379.7,18.03,14.6

14.3337,0,18.1,0,0.614,6.229,88,1.9512,24,666,20.2,383.32,13.11,21.4

5.82401,0,18.1,0,0.532,6.242,64.7,3.4242,24,666,20.2,396.9,10.74,23

5.70818,0,18.1,0,0.532,6.75,74.9,3.3317,24,666,20.2,393.07,7.74,23.7

5.73116,0,18.1,0,0.532,7.061,77,3.4106,24,666,20.2,395.28,7.01,25

2.81838,0,18.1,0,0.532,5.762,40.3,4.0983,24,666,20.2,392.92,10.42,21.8

2.37857,0,18.1,0,0.583,5.871,41.9,3.724,24,666,20.2,370.73,13.34,20.6

3.67367,0,18.1,0,0.583,6.312,51.9,3.9917,24,666,20.2,388.62,10.58,21.2

5.69175,0,18.1,0,0.583,6.114,79.8,3.5459,24,666,20.2,392.68,14.98,19.1

4.83567,0,18.1,0,0.583,5.905,53.2,3.1523,24,666,20.2,388.22,11.45,20.6

0.15086,0,27.74,0,0.609,5.454,92.7,1.8209,4,711,20.1,395.09,18.06,15.2

0.18337,0,27.74,0,0.609,5.414,98.3,1.7554,4,711,20.1,344.05,23.97,7

0.20746,0,27.74,0,0.609,5.093,98,1.8226,4,711,20.1,318.43,29.68,8.1

0.10574,0,27.74,0,0.609,5.983,98.8,1.8681,4,711,20.1,390.11,18.07,13.6

0.11132,0,27.74,0,0.609,5.983,83.5,2.1099,4,711,20.1,396.9,13.35,20.1

0.17331,0,9.69,0,0.585,5.707,54,2.3817,6,391,19.2,396.9,12.01,21.8

0.27957,0,9.69,0,0.585,5.926,42.6,2.3817,6,391,19.2,396.9,13.59,24.5

0.17899,0,9.69,0,0.585,5.67,28.8,2.7986,6,391,19.2,393.29,17.6,23.1

0.2896,0,9.69,0,0.585,5.39,72.9,2.7986,6,391,19.2,396.9,21.14,19.7

0.26838,0,9.69,0,0.585,5.794,70.6,2.8927,6,391,19.2,396.9,14.1,18.3

0.23912,0,9.69,0,0.585,6.019,65.3,2.4091,6,391,19.2,396.9,12.92,21.2

0.17783,0,9.69,0,0.585,5.569,73.5,2.3999,6,391,19.2,395.77,15.1,17.5

0.22438,0,9.69,0,0.585,6.027,79.7,2.4982,6,391,19.2,396.9,14.33,16.8

0.06263,0,11.93,0,0.573,6.593,69.1,2.4786,1,273,21,391.99,9.67,22.4

0.04527,0,11.93,0,0.573,6.12,76.7,2.2875,1,273,21,396.9,9.08,20.6

0.06076,0,11.93,0,0.573,6.976,91,2.1675,1,273,21,396.9,5.64,23.9

0.10959,0,11.93,0,0.573,6.794,89.3,2.3889,1,273,21,393.45,6.48,22

0.04741,0,11.93,0,0.573,6.03,80.8,2.505,1,273,21,396.9,7.88,11.9
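
The listing above appears to be the Boston housing data. Each row has 14 comma-separated columns: 13 features (CRIM, ZN, INDUS, CHAS, NOX, RM, AGE, DIS, RAD, TAX, PTRATIO, B, LSTAT) followed by the median home value MEDV as the target, with blank lines between rows. Assuming that layout, here is a minimal plain-Python sketch of KNN regression from section 2.1: the prediction is the average target of the K nearest neighbours, measured with the Euclidean distance from section 3.2.

A few points are my own assumptions rather than the article's method:
- The helper names (`parse_rows`, `fit_scaler`, `knn_regress`) are hypothetical.
- The choice of k=3 is arbitrary.
- The global z-score standardization is my choice.
- Only a handful of rows are copied in, so the example stays self-contained.

```python
import math

# A few rows copied from the listing above. Assumed column layout (Boston housing):
# CRIM,ZN,INDUS,CHAS,NOX,RM,AGE,DIS,RAD,TAX,PTRATIO,B,LSTAT,MEDV (target last).
RAW_ROWS = """
0.26838,0,9.69,0,0.585,5.794,70.6,2.8927,6,391,19.2,396.9,14.1,18.3
0.23912,0,9.69,0,0.585,6.019,65.3,2.4091,6,391,19.2,396.9,12.92,21.2
0.17783,0,9.69,0,0.585,5.569,73.5,2.3999,6,391,19.2,395.77,15.1,17.5
0.22438,0,9.69,0,0.585,6.027,79.7,2.4982,6,391,19.2,396.9,14.33,16.8
0.06263,0,11.93,0,0.573,6.593,69.1,2.4786,1,273,21,391.99,9.67,22.4
0.04527,0,11.93,0,0.573,6.12,76.7,2.2875,1,273,21,396.9,9.08,20.6
0.06076,0,11.93,0,0.573,6.976,91,2.1675,1,273,21,396.9,5.64,23.9
0.10959,0,11.93,0,0.573,6.794,89.3,2.3889,1,273,21,393.45,6.48,22
0.04741,0,11.93,0,0.573,6.03,80.8,2.505,1,273,21,396.9,7.88,11.9
"""


def parse_rows(text):
    """Split each non-blank line into 13 feature values and 1 target value."""
    X, y = [], []
    for line in text.splitlines():
        line = line.strip()
        if not line:  # the original listing separates rows with blank lines
            continue
        values = [float(v) for v in line.split(",")]
        X.append(values[:-1])
        y.append(values[-1])
    return X, y


def fit_scaler(X):
    """Return a function that z-scores a row using the column means/stds of X."""
    n = len(X)
    means = [sum(col) / n for col in zip(*X)]
    stds = []
    for col, m in zip(zip(*X), means):
        s = math.sqrt(sum((v - m) ** 2 for v in col) / n)
        stds.append(s if s > 1e-12 else 1.0)  # constant column: avoid dividing by ~0
    return lambda row: [(v - m) / s for v, m, s in zip(row, means, stds)]


def euclidean(a, b):
    """Straight-line distance: square root of the sum of squared coordinate differences."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def knn_regress(X_train, y_train, query, k=3):
    """Average the targets of the k training rows closest to `query`."""
    ranked = sorted(zip((euclidean(x, query) for x in X_train), y_train))
    nearest = ranked[:k]
    return sum(t for _, t in nearest) / len(nearest)


X, y = parse_rows(RAW_ROWS)
scale = fit_scaler(X[:-1])  # fit the scaling on the training rows only
X_train = [scale(row) for row in X[:-1]]
prediction = knn_regress(X_train, y[:-1], scale(X[-1]), k=3)  # hold out the last row
print(f"predicted MEDV = {prediction:.2f}, actual = {y[-1]}")
```

The features differ in scale by orders of magnitude: TAX is in the hundreds, while NOX is below 1. Without standardization, the largest-scale columns would dominate the Euclidean distance and effectively decide the neighbours on their own.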
