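How ELK works

Logstash collects the logs produced by the application servers and stores them in an Elasticsearch cluster. Kibana queries the Elasticsearch cluster, builds charts from the data, and returns them to the browser.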

Download

elasticsearch:https://www.elastic.co/cn/downloads/elasticsearch

kibana:https://www.elastic.co/cn/downloads/kibana

logstash:https://www.elastic.co/cn/downloads/logstash

Deployment

Install Elasticsearch

Unzip the package: elasticsearch-8.7.0-windows-x86_64.zip

Edit config/elasticsearch.yml to disable authentication and SSL:

```yaml
xpack.security.enabled: false # disable security (authentication)
xpack.security.enrollment.enabled: false

xpack.security.http.ssl:
  enabled: false
  keystore.path: certs/http.p12

xpack.security.transport.ssl:
  enabled: false
  verification_mode: certificate
  keystore.path: certs/transport.p12
  truststore.path: certs/transport.p12

network.host: 127.0.0.1
http.port: 9200
discovery.seed_hosts: [ "127.0.0.1", "[::1]" ]
# HTTP listens on all interfaces (overrides network.host for HTTP only)
http.host: 0.0.0.0

# Allow cross-origin requests from browser-based clients
http.cors.enabled: true
http.cors.allow-origin: "*"
http.cors.allow-headers: Authorization,Content-Type,X-Requested-With,Content-Length
```

Start Elasticsearch

Double-click bin/elasticsearch.bat
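To confirm the node is up, you can open http://127.0.0.1:9200 in a browser or query the cluster health API. The following is a minimal sketch of the second option. It needs Java 11+ and uses only the JDK's `java.net.http` client. The class name `EsHealthCheck` is hypothetical. The status is pulled out with a regular expression instead of a JSON library so the example has no dependencies. Because security and SSL were disabled above, plain HTTP with no credentials is assumed.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper: checks that the local Elasticsearch node answers over plain HTTP. */
final class EsHealthCheck {

    // Matches "status":"green" (or yellow/red) in the _cluster/health JSON response.
    private static final Pattern STATUS =
            Pattern.compile("\"status\"\\s*:\\s*\"(green|yellow|red)\"");

    /** Returns the cluster status, or null if the field is not present. */
    static String parseStatus(String json) {
        Matcher m = STATUS.matcher(json);
        return m.find() ? m.group(1) : null;
    }

    public static void main(String[] args) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(
                URI.create("http://127.0.0.1:9200/_cluster/health")).GET().build();
        HttpResponse<String> response =
                client.send(request, HttpResponse.BodyHandlers.ofString());
        System.out.println("HTTP " + response.statusCode()
                + ", cluster status: " + parseStatus(response.body()));
    }
}
```

On a single node, `yellow` usually means only that some index has replicas that cannot be allocated. `red` means a primary shard is unassigned.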

Install Logstash

Unzip the package: logstash-8.7.0-windows-x86_64.zip

Edit config/logstash.yml:

```yaml
xpack.monitoring.enabled: false
xpack.monitoring.elasticsearch.hosts: [ "http://127.0.0.1:9200" ]
```

Create config/logstash.conf:

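```conf
input {
  # Receive newline-delimited JSON events from the application over TCP
  tcp {
    host => "127.0.0.1"
    port => 5044
    codec => json_lines
  }
}

output {
  # Print each event to the Logstash console for debugging
  stdout {
    codec => rubydebug
  }
  # Index events into Elasticsearch, one index per day
  elasticsearch {
    hosts => ["http://127.0.0.1:9200"]
    index => "applog-%{+YYYY.MM.dd}"
  }
}
```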
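The `index => "applog-%{+YYYY.MM.dd}"` setting produces one index per day. Logstash fills in the date from the event's `@timestamp`, which is stored in UTC, so the day boundary follows UTC rather than local time. Below is a minimal Java sketch of the same naming rule, for illustration only (the `DailyIndex` class is hypothetical). In Java's `DateTimeFormatter`, `YYYY` means week-based year, so the equivalent Java pattern uses `yyyy`.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

/** Hypothetical illustration of how index => "applog-%{+YYYY.MM.dd}" picks a daily index. */
final class DailyIndex {

    // The date comes from the event's @timestamp, interpreted in UTC.
    // Java's "yyyy" (calendar year) corresponds to Joda's "YYYY" used by Logstash.
    private static final DateTimeFormatter DAY =
            DateTimeFormatter.ofPattern("yyyy.MM.dd").withZone(ZoneOffset.UTC);

    static String indexFor(Instant eventTimestamp) {
        return "applog-" + DAY.format(eventTimestamp);
    }

    public static void main(String[] args) {
        System.out.println(indexFor(Instant.now()));
    }
}
```

For example, an entry logged at 07:30 on 21 April in Beijing (UTC+8) has the `@timestamp` 2023-04-20T23:30:00Z, so it is stored in applog-2023.04.20.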

Start Logstash

Open a cmd window in the Logstash root directory and run: bin\logstash -f config\logstash.conf
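With Logstash running, you can check the input side before wiring up the application. The `json_lines` codec expects one JSON object per line on the TCP connection. The hypothetical `JsonLinesClient` below sends one such line to 127.0.0.1:5044. It uses only the JDK, with a deliberately tiny JSON serializer that escapes only quotes, backslashes and line breaks. In the real application, logstash-logback-encoder produces these lines. If the pipeline works, the event should be printed by `rubydebug` in the Logstash console and indexed into that day's `applog-*` index.

```java
import java.io.OutputStream;
import java.net.Socket;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical test client: sends one event to the Logstash tcp input (codec => json_lines). */
final class JsonLinesClient {

    /** Serializes flat string fields as one JSON object followed by '\n'. */
    static String toJsonLine(Map<String, String> fields) {
        StringBuilder sb = new StringBuilder("{");
        boolean first = true;
        for (Map.Entry<String, String> e : fields.entrySet()) {
            if (!first) sb.append(',');
            first = false;
            sb.append('"').append(escape(e.getKey())).append("\":\"")
              .append(escape(e.getValue())).append('"');
        }
        return sb.append("}\n").toString(); // json_lines splits events on newlines
    }

    // Escapes only backslashes, quotes and line breaks (enough for this test, not general JSON).
    private static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"")
                .replace("\n", "\\n").replace("\r", "\\r");
    }

    public static void main(String[] args) throws Exception {
        Map<String, String> event = new LinkedHashMap<>();
        event.put("message", "hello from JsonLinesClient");
        event.put("applicationName", "manual-test");
        try (Socket socket = new Socket("127.0.0.1", 5044);
             OutputStream out = socket.getOutputStream()) {
            out.write(toJsonLine(event).getBytes(StandardCharsets.UTF_8));
            out.flush();
        }
    }
}
```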

Install Kibana

Unzip the package: kibana-8.7.0-windows-x86_64.zip

Edit config/kibana.yml:

```yaml
server.port: 5601
# Listen on all interfaces
server.host: "0.0.0.0"
elasticsearch.hosts: [ "http://127.0.0.1:9200" ]
# Display the Kibana UI in Simplified Chinese
i18n.locale: "zh-CN"
```

Start Kibana

In the bin directory, double-click kibana.bat

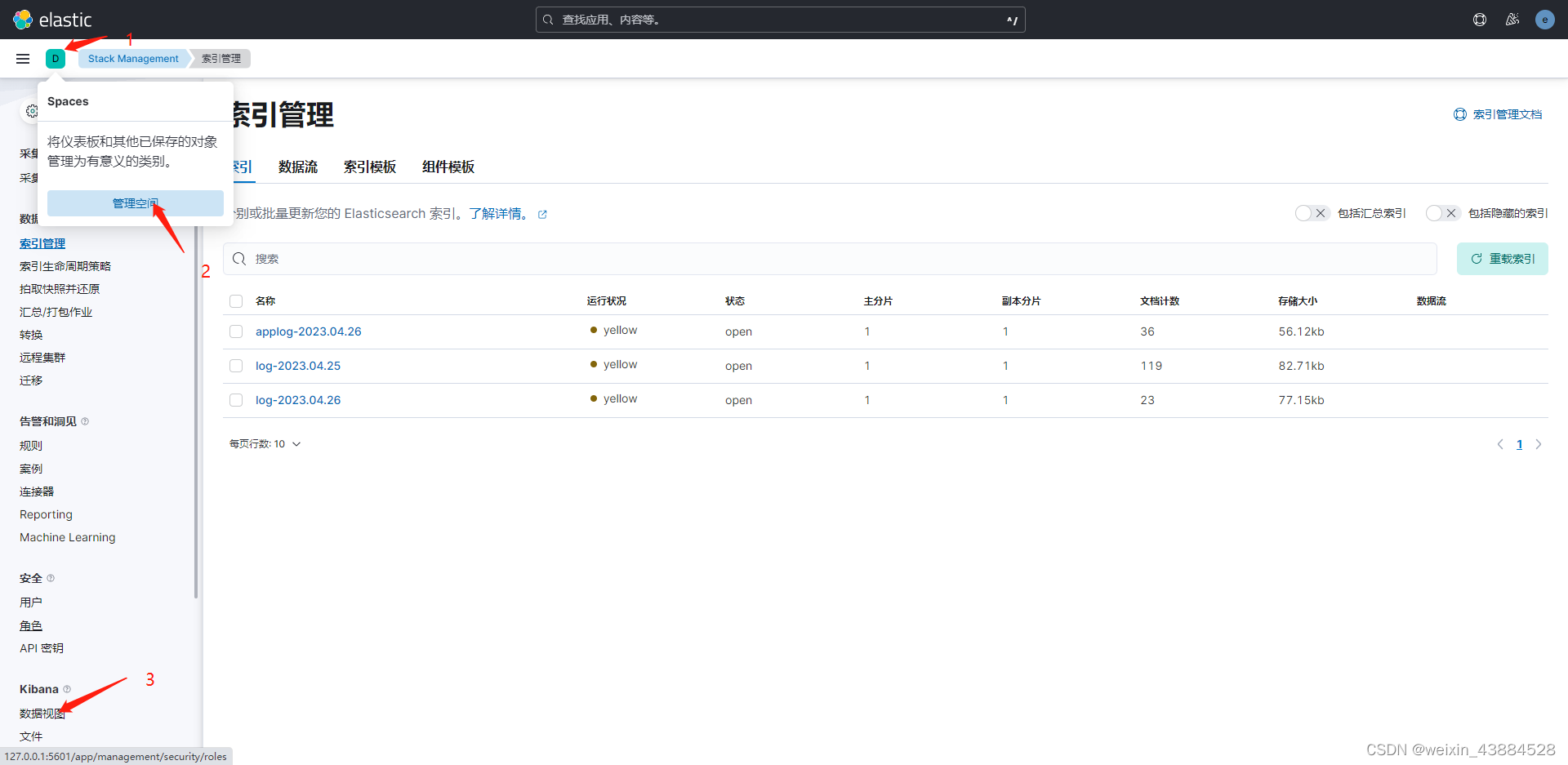

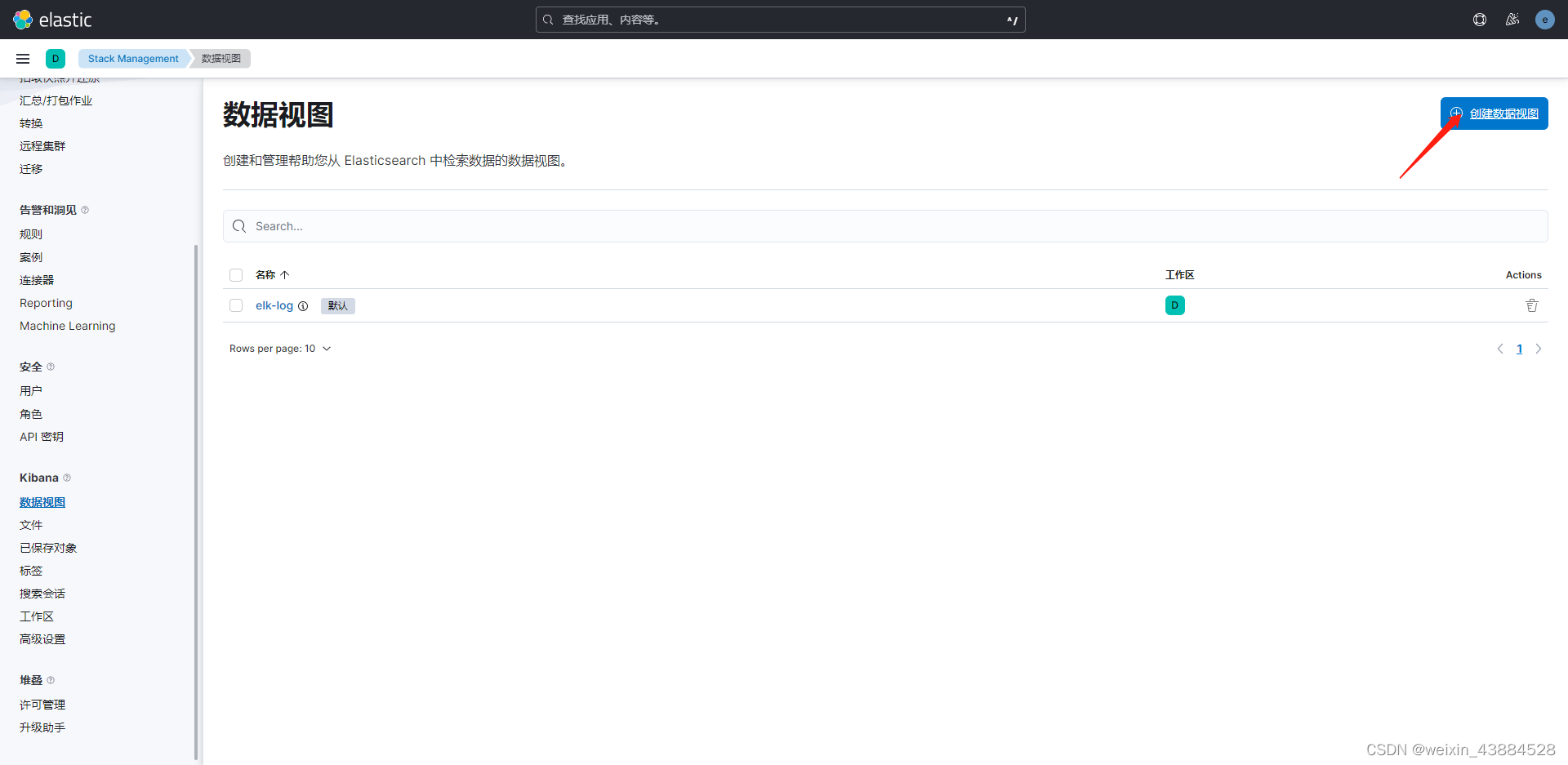

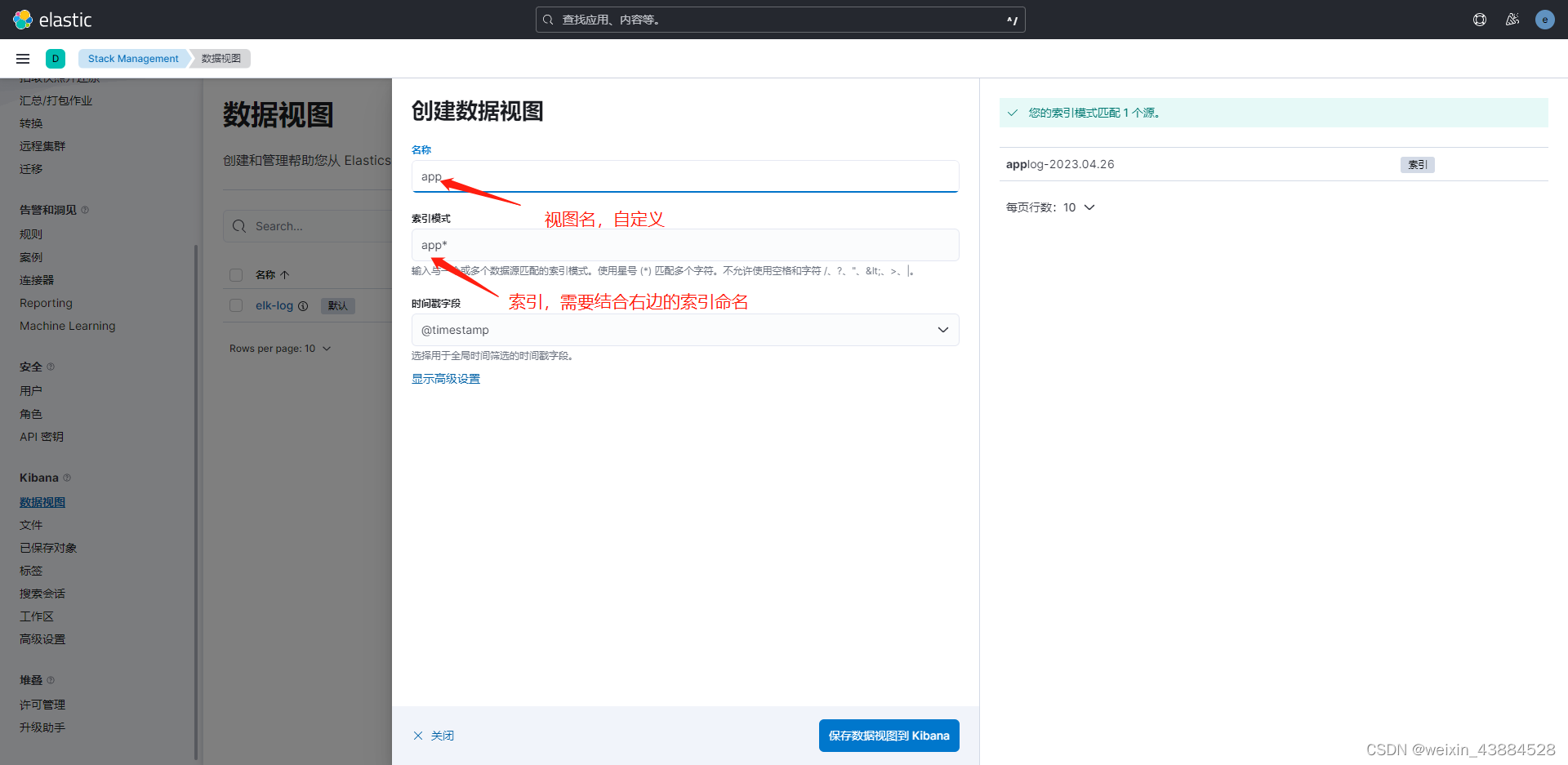

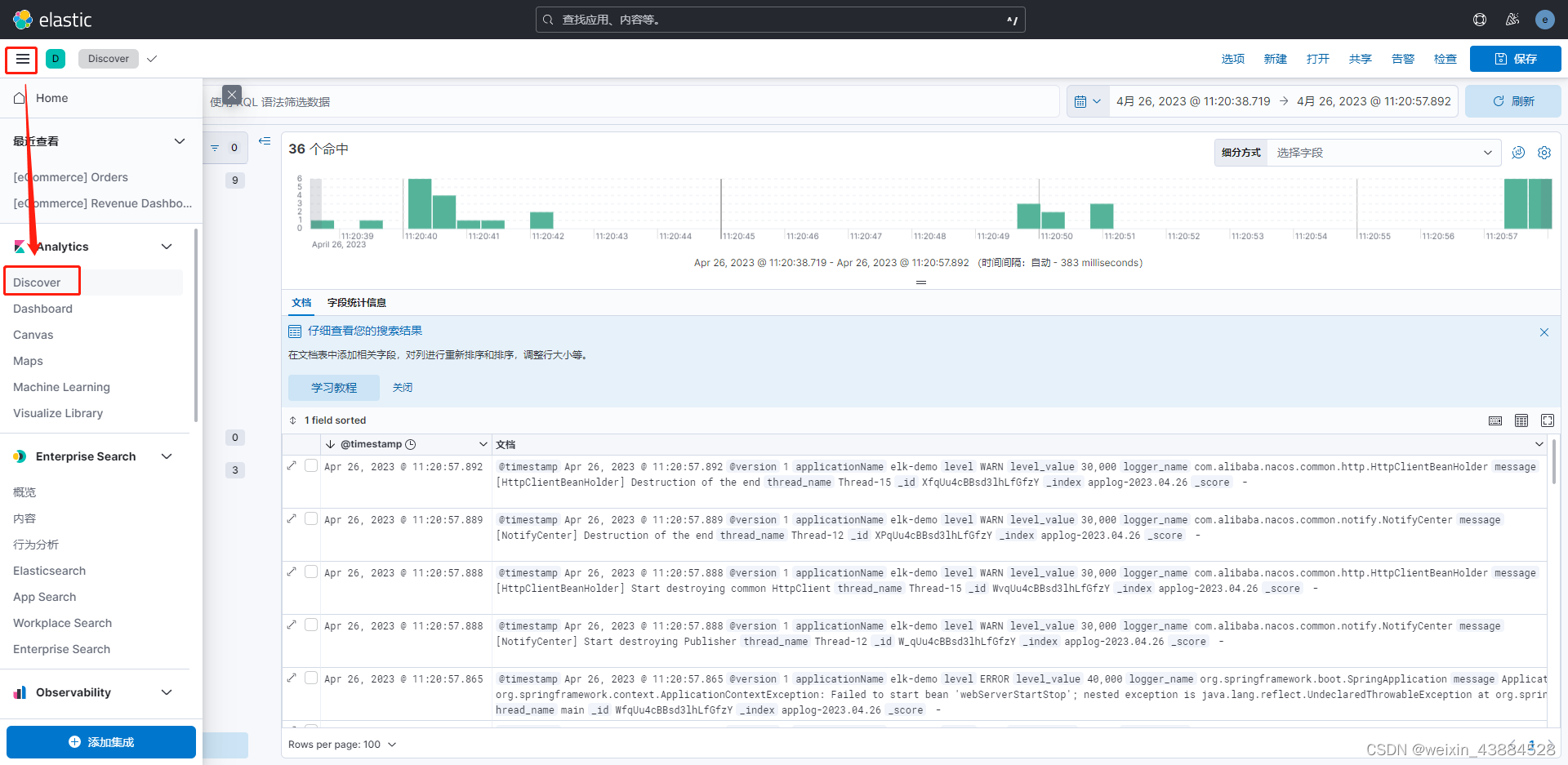

Open the Kibana UI

In a browser, go to: http://127.0.0.1:5601

Integrating ELK with Spring Boot

Add the dependencies

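```xml
<!-- Logback encoder and appender that ship JSON events to Logstash -->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>7.2</version>
</dependency>
<!-- Lombok (e.g. @Slf4j generates a log field); version managed by the Spring Boot parent/BOM -->
<dependency>
    <groupId>org.projectlombok</groupId>
    <artifactId>lombok</artifactId>
</dependency>
```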

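Add src/main/resources/logback-spring.xml. Spring Boot processes the `<springProperty>` extension only in logback-spring.xml, not in a plain logback.xml.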

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration debug="false">
    <!-- Read spring.application.name from the Spring Boot configuration -->
    <springProperty scope="context" name="applicationName" source="spring.application.name" defaultValue="default"/>
    <!-- Directory for log files; do not use a relative path in the Logback configuration -->
    <property name="LOG_HOME" value="/home"/>

    <!-- Console output -->
    <appender name="console" class="ch.qos.logback.core.ConsoleAppender">
        <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
            <level>INFO</level>
        </filter>
        <withJansi>false</withJansi>
        <encoder>
            <!--<pattern>%d %p (%file:%line)- %m%n</pattern>-->
            <!-- %d: date, %thread: thread name, %-5level: level left-aligned to 5 characters, %msg: log message, %n: newline -->
            <pattern>%d{yyyy-MM-dd HH:mm:ss} %highlight(%-5level) -- %boldMagenta([%thread]) %boldCyan(%logger) : %msg%n</pattern>
            <charset>UTF-8</charset>
        </encoder>
    </appender>

    <!-- Ship logs to Logstash -->
    <appender name="logstash" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
        <!-- Logstash host and port (must match the tcp input in logstash.conf) -->
        <destination>127.0.0.1:5044</destination>
        <!-- encoder is required -->
        <encoder class="net.logstash.logback.encoder.LogstashEncoder">
            <!-- Add an applicationName field to every event indexed into Elasticsearch -->
            <customFields>{"applicationName":"${applicationName}"}</customFields>
        </encoder>
    </appender>

    <!-- One log file per day, also rolled when it reaches 10MB; %i numbers the files within a day.
         SizeAndTimeBasedRollingPolicy replaces TimeBasedRollingPolicy plus SizeBasedTriggeringPolicy,
         which do not work correctly together. -->
    <appender name="FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- Name of the log files -->
            <fileNamePattern>${LOG_HOME}/TestWeb.log.%d{yyyy-MM-dd}.%i.log</fileNamePattern>
            <!-- Maximum size of a single log file -->
            <maxFileSize>10MB</maxFileSize>
            <!-- Number of days of log files to keep -->
            <maxHistory>30</maxHistory>
        </rollingPolicy>
        <encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">
            <!-- %d: date, %thread: thread name, %-5level: level left-aligned to 5 characters, %msg: log message, %n: newline -->
            <pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %-5level %logger{50} - %msg%n</pattern>
        </encoder>
    </appender>

    <!-- Root log level and appenders -->
    <root level="INFO">
        <appender-ref ref="logstash"/>
        <appender-ref ref="console"/>
        <appender-ref ref="FILE"/>
    </root>
</configuration>
```
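Application code needs no ELK-specific calls. Anything logged through SLF4J reaches the root logger and therefore all three appenders, for example through the `log` field that Lombok's `@Slf4j` generates. To show roughly which fields each event carries once it reaches Elasticsearch, here is a hypothetical model in plain Java. It is not the encoder. The field names are LogstashEncoder's standard top-level fields as we understand them, plus the `applicationName` custom field from the configuration above. The real encoder adds more (MDC entries, stack traces) and formats `@timestamp` in its own way. The logger and thread names used here are made up.

```java
import java.time.Instant;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical model of one encoded log event; illustrates field names only. */
final class EncodedEventShape {

    static Map<String, Object> fieldsFor(Instant time, String logger, String thread,
                                         String level, String message, String applicationName) {
        Map<String, Object> event = new LinkedHashMap<>();
        event.put("@timestamp", time.toString()); // real encoder's format/time zone may differ
        event.put("@version", "1");
        event.put("message", message);
        event.put("logger_name", logger);
        event.put("thread_name", thread);
        event.put("level", level);
        event.put("level_value", levelValue(level));
        event.put("applicationName", applicationName); // added via <customFields>
        return event;
    }

    // Numeric values of Logback's levels (ch.qos.logback.classic.Level).
    static int levelValue(String level) {
        switch (level) {
            case "TRACE": return 5000;
            case "DEBUG": return 10000;
            case "INFO":  return 20000;
            case "WARN":  return 30000;
            case "ERROR": return 40000;
            default: throw new IllegalArgumentException("unknown level: " + level);
        }
    }

    public static void main(String[] args) {
        fieldsFor(Instant.now(), "com.example.DemoController", "main", "INFO", "hello ELK", "demo")
                .forEach((k, v) -> System.out.println(k + " = " + v));
    }
}
```

These are the fields you would filter on in Kibana's Discover view, e.g. `applicationName : "demo"` or `level : "ERROR"`.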

If you need help setting up a test environment, feel free to message me.
