Technical Analysis

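I. Background

Our group previously worked on a project that required a customized NVR (network video recorder) built on domestically produced hardware. It was eventually dropped for cost reasons, so this post is a technical summary and write-up. I mainly share the overall design and will not go deep into the technical difficulties of the project. Our group's prior experience is mostly with STM32, so corrections on anything immature here are welcome.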

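II. Technical Requirements

The requirements, in brief:

- Receive video streams from up to 16 network cameras

- Display: configurable 1/2/4/9-way split screen, output over HDMI/VGA

- Storage: record the data of all 16 video streams

- Persist the configuration across restarts

- Configure through a web page and host PC software

- Configurable playback of recorded data

- Split-screen display with selectable channels

- Radar imaging simulation software

  - Dynamically simulate radar imaging results through the host PC software.

  - The simulation input file holds the polar coordinates and time information of the target objects; the format is user-defined.

  - At least 3 target objects

  - Simulation period of at least 10 min, configurable as single-run or looped.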
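To make the simulation input a little more concrete, here is a minimal sketch of how one sample could be converted for drawing. Because the file format is user-defined, the `TargetSample` struct, its field names, and its units (seconds, metres, degrees counterclockwise from the +x axis) are assumptions for illustration only, not the project's actual format. `simulation_time` is likewise a hypothetical helper that shows the difference between single-run and looped playback, assuming non-negative elapsed time.

```cpp
#include <cmath>

// Hypothetical record: one target position at one instant.
struct TargetSample {
    double t_s;        // time since simulation start, in seconds
    double range_m;    // distance from the radar, in metres
    double angle_deg;  // bearing, degrees counterclockwise from the +x axis
};

struct Point { double x; double y; };

// Convert a polar sample to Cartesian coordinates centred on the radar.
inline Point polar_to_cartesian(const TargetSample& s) {
    const double kPi = 3.14159265358979323846;
    const double rad = s.angle_deg * kPi / 180.0;
    return Point{ s.range_m * std::cos(rad), s.range_m * std::sin(rad) };
}

// Map elapsed playback time onto simulation time.
// Looped mode wraps around the period; single-run mode stops at the end.
inline double simulation_time(double elapsed_s, double period_s, bool loop) {
    if (period_s <= 0.0) return 0.0;
    if (loop) return std::fmod(elapsed_s, period_s);
    return elapsed_s < period_s ? elapsed_s : period_s;
}
```

With a 10 min period (600 s), looped playback at 650 s maps back to 50 s, while single-run playback stays at 600 s.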

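The techniques and interfaces related to the host PC software and configuration are not suitable for sharing. I was mainly responsible for the video part (that is, the core NVR functionality), and this post shows code from the early stage of the project.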

III. Technical Design

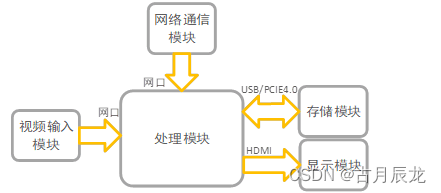

The hardware structure is simple, as shown below (3.1 block diagram):

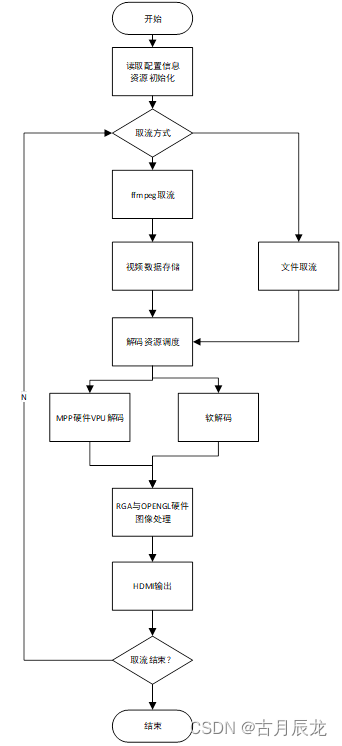

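The main modules run as multiple threads (not drawn; no complex synchronization or communication is involved), and the software flow is also simple (3.2 software flowchart):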

Communication with the host PC uses the Modbus TCP protocol, an area where our group already had experience.
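For readers who have not used Modbus TCP, the sketch below builds a standard "Read Holding Registers" request (function code 0x03). The frame layout follows the public Modbus TCP specification; the function name and the register address, quantity, and unit id used in the example are hypothetical, since the project's register map is not shared here.

```cpp
#include <array>
#include <cstdint>

// Build a Modbus TCP "Read Holding Registers" (0x03) request.
// Frame = 7-byte MBAP header + 5-byte PDU, all multi-byte fields big-endian.
inline std::array<std::uint8_t, 12> build_read_holding_registers(
        std::uint16_t transaction_id, std::uint8_t unit_id,
        std::uint16_t start_addr, std::uint16_t quantity) {
    auto hi = [](std::uint16_t v) { return static_cast<std::uint8_t>(v >> 8); };
    auto lo = [](std::uint16_t v) { return static_cast<std::uint8_t>(v & 0xFF); };
    std::array<std::uint8_t, 12> frame{{
        hi(transaction_id), lo(transaction_id),  // transaction id, echoed by the server
        0x00, 0x00,                              // protocol id, always 0 for Modbus
        0x00, 0x06,                              // length: number of bytes that follow
        unit_id,                                 // unit (slave) id
        0x03,                                    // function code: read holding registers
        hi(start_addr), lo(start_addr),          // first register address
        hi(quantity), lo(quantity)               // number of registers to read
    }};
    return frame;
}
```

For example, `build_read_holding_registers(1, 1, 0x0010, 2)` asks unit 1 for two registers starting at address 0x0010.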

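The device software is developed and run on Debian 10 Linux. On top of the base system, libraries such as OpenGL, FFmpeg, MPP, and OpenCV (C++) need to be installed and configured. The compiler is aarch64-linux-gnu-g++.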

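IV. Module Analysis

The core consists of three stages: fetching the video stream, decoding it, and outputting it. Storage uses a file queue; to save space, the encoded bitstream is stored directly (to be optimized later).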
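The storage code is not shown in this post, so the following is only a minimal sketch of what a file queue for recorded segments might look like. The class name `SegmentQueue`, the byte budget, and the oldest-first eviction policy are all assumptions. The class only does bookkeeping: it returns the names of segments that should be deleted and leaves the actual file removal to the caller.

```cpp
#include <cstddef>
#include <cstdint>
#include <deque>
#include <string>
#include <utility>
#include <vector>

// Bookkeeping for the recorded segments of one channel (oldest at the front).
class SegmentQueue {
public:
    explicit SegmentQueue(std::uint64_t budget_bytes) : budget_(budget_bytes) {}

    // Register a finished segment. Returns the names of segments that should
    // be deleted (oldest first) to get back under the byte budget.
    std::vector<std::string> push(std::string name, std::uint64_t size_bytes) {
        total_ += size_bytes;
        segments_.emplace_back(std::move(name), size_bytes);
        std::vector<std::string> evicted;
        // Always keep the newest segment, even if it alone exceeds the budget.
        while (total_ > budget_ && segments_.size() > 1) {
            total_ -= segments_.front().second;
            evicted.push_back(std::move(segments_.front().first));
            segments_.pop_front();
        }
        return evicted;
    }

    std::uint64_t total_bytes() const { return total_; }
    std::size_t size() const { return segments_.size(); }

private:
    std::uint64_t budget_;
    std::uint64_t total_ = 0;
    std::deque<std::pair<std::string, std::uint64_t>> segments_;
};
```

One queue per channel keeps the 16 streams independent, so a busy channel cannot push out the recordings of a quiet one.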

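1. Fetching the video stream

On the software side, FFmpeg already provides a complete solution for fetching streams and for encoding/decoding, so I only needed to replace its software decoding with hardware decoding.

For this part I studied the write-ups by Lei Xiaohua (雷霄骅).

2. Video decoding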

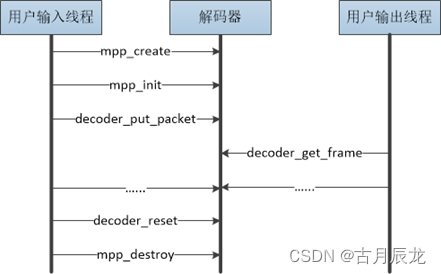

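Rockchip (RK) provides MPP, a dedicated hardware codec interface for H.264/H.265, and the official documentation explains how to use it, so I won't go into detail here. There is also RGA, an interface for cropping and rotating images. (4.1 MPP multi-threaded encode/decode workflow)

A few pitfalls:

- MPP tops out at 6 channels of 1080p at 30 fps for display, so it only meets our requirements if the frame rate is reduced; in practice we handled this by combining software and hardware decoding.

- With multiple MPP instances, each instance needs its own independent context.

- Garbled frames after decoding: https://blog.csdn.net/qq_41117054/article/details/127405013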
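The first pitfall is easy to quantify with some back-of-the-envelope arithmetic. The sketch below assumes that all streams are 1080p and that decoder throughput scales linearly with frame rate, which is a simplification; the helper names are hypothetical. Only the 6 channels at 30 fps figure comes from our own experience described above.

```cpp
// Hardware budget observed in the project: 6 channels of 1080p at 30 fps.
constexpr int kHwChannels = 6;
constexpr int kHwFps = 30;
constexpr int kHwBudgetFps = kHwChannels * kHwFps;  // 180 frames per second in total

// Frame rate each channel gets if all channels share the hardware evenly.
inline double per_channel_fps(int channels) {
    return channels > 0 ? static_cast<double>(kHwBudgetFps) / channels : 0.0;
}

// How many channels the hardware can take if each must run at target_fps.
// The remaining channels would have to be decoded in software.
inline int hardware_channels(int channels, int target_fps) {
    if (channels <= 0 || target_fps <= 0) return 0;
    const int fit = kHwBudgetFps / target_fps;
    return fit < channels ? fit : channels;
}
```

Under these assumptions, 16 channels sharing the hardware evenly get 11.25 fps each, while keeping 30 fps leaves 10 of the 16 channels for software decoding.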

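3. Video output

Video output uses a clumsy method: writing to DRM directly. There are many convenient interfaces I did not use, because the project was on a tight schedule and I had no time to learn them.

This approach can produce garbled frames (in a later version I used a separate display thread fed by a ring queue).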
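The later version is not shown in this post, so the following is only a minimal sketch of the ring-queue idea. The class name `FrameRing` and the drop-oldest policy when the queue is full are assumptions; the element type must be default-constructible. Decoder threads would call `push`, and the single display thread would call `try_pop`.

```cpp
#include <cstddef>
#include <mutex>
#include <utility>
#include <vector>

// Fixed-capacity ring queue. When it is full, the oldest entry is overwritten,
// so the display never falls more than `capacity` frames behind.
template <typename T>
class FrameRing {
public:
    explicit FrameRing(std::size_t capacity)
        : slots_(capacity > 0 ? capacity : 1) {}

    // Producer side. Returns true if an old frame had to be dropped.
    bool push(T frame) {
        std::lock_guard<std::mutex> lock(mutex_);
        const bool dropped = (count_ == slots_.size());
        if (dropped) {
            head_ = (head_ + 1) % slots_.size();  // discard the oldest frame
            --count_;
        }
        slots_[(head_ + count_) % slots_.size()] = std::move(frame);
        ++count_;
        return dropped;
    }

    // Consumer side. Returns false if there is nothing to display.
    bool try_pop(T& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (count_ == 0) return false;
        out = std::move(slots_[head_]);
        head_ = (head_ + 1) % slots_.size();
        --count_;
        return true;
    }

private:
    std::mutex mutex_;
    std::vector<T> slots_;
    std::size_t head_ = 0;
    std::size_t count_ = 0;
};
```

Having only one thread write to the framebuffer removes the situation where several decoder threads draw at the same time, which is one plausible source of the garbled output.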

V. Core Code Walkthrough

First, initialize FFmpeg and MPP:

int GetStream::Init()

{

//av_register_all(); // Deprecated since FFmpeg 4.0; only versions below 4.0 need this registration call

avformat_network_init();

av_dict_set(&options, "buffer_size", "1024000", 0); // Receive buffer size; for 1080p streams raise it as high as possible

av_dict_set(&options, "rtsp_transport", "tcp", 0); // Open the RTSP session over TCP

av_dict_set(&options, "stimeout", "5000000", 0); // Timeout before the connection is dropped, in microseconds

av_dict_set(&options, "max_delay", "500000", 0); // Maximum delay, in microseconds

pFormatCtx = avformat_alloc_context(); // Allocate an AVFormatContext and initialize it with default parameters

if (avformat_open_input(&pFormatCtx, filepath, NULL, &options) != 0)

{

printf("Couldn't open input stream.\n");

return -1;

}

// Read the stream information

if (avformat_find_stream_info(pFormatCtx, NULL)<0)

{

printf("Couldn't find stream information.\n");

return -1;

}

// Check whether the input contains a video stream

videoindex = av_find_best_stream(pFormatCtx, AVMEDIA_TYPE_VIDEO, -1, -1, NULL, 0);

/* for (i = 0; i<pFormatCtx->nb_streams; i++)

if (pFormatCtx->streams[i]->codec->codec_type == AVMEDIA_TYPE_VIDEO)

{

videoindex = i;

break;

} */

if (videoindex < 0)

{

printf("Didn't find a video stream.\n");

return -1;

}

av_packet = (AVPacket *)av_malloc(sizeof(AVPacket)); // Allocate the packet that holds each compressed frame (H.264/H.265)

// Initialize MPP

MPP_RET ret = MPP_OK;

size_t file_size = 0;

MpiCmd mpi_cmd = MPP_CMD_BASE;

MppParam param = NULL;

RK_U32 need_split = 1;

// MppPollType timeout = 5;

// parameter for resource malloc

RK_U32 width = 2560;

RK_U32 height = 1440;

MppCodingType type = MPP_VIDEO_CodingAVC;

mpp_log("mpi_dec_test start\n");

memset(&data, 0, sizeof(data));

data.fp_output = fopen("./tenoutput.yuv", "wb");

if (NULL == data.fp_output) {

mpp_err("failed to open output file %s\n", "tenoutput.yuv");

DeInit();

return -1;

}

mpp_log("mpi_dec_test decoder test start w %d h %d type %d\n", width, height, type);

// decoder demo

ret = mpp_create(&ctx, &mpi);

if (MPP_OK != ret) {

mpp_err("mpp_create failed\n");

DeInit();

return -1;

}

// NOTE: decoder split mode need to be set before init

mpi_cmd = MPP_DEC_SET_PARSER_SPLIT_MODE;

param = &need_split;

ret = mpi->control(ctx, mpi_cmd, param);

if (MPP_OK != ret) {

mpp_err("mpi->control failed\n");

DeInit();

return -1;

}

/*

mpi_cmd = MPP_SET_INPUT_BLOCK;

param = &need_split;

ret = mpi->control(ctx, mpi_cmd, param);

if (MPP_OK != ret) {

mpp_err("mpi->control failed\n");

DeInit();

}

mpi_cmd = MPP_SET_INTPUT_BLOCK_TIMEOUT;

param = &need_split;

ret = mpi->control(ctx, mpi_cmd, param);

if (MPP_OK != ret) {

mpp_err("mpi->control failed\n");

DeInit();

}

*/

ret = mpp_init(ctx, MPP_CTX_DEC, type);

if (MPP_OK != ret) {

mpp_err("mpp_init failed\n");

DeInit();

return -1;

}

data.ctx = ctx;

data.mpi = mpi;

data.eos = 0;

data.packet_size = packet_size;

data.frame = frame;

data.frame_count = 0;

buffer = (char*)malloc(4*displayObject.rga_align.height*displayObject.rga_align.width);

std::cout << "Init finish" << std::endl;

return 0;

}

Once the FFmpeg RTSP connection is established, a thread is started; each packet FFmpeg receives is handed to the decode function.

void* decode_pth(void* data_)

{

auto *obj = (GetStream*) data_;

MpiDecLoopData *data = &obj->data;

AVPacket *av_packet = obj->av_packet;

void* ret = nullptr;

while (obj->decode_flag)

{

if (av_read_frame(obj->pFormatCtx, av_packet) >= 0)

{

if (av_packet->stream_index == obj->videoindex)

{

mpp_log("--------------\ndata size is: %d\n-------------", av_packet->size);

ret = decode_simple(obj);

}

if (av_packet != NULL)

av_packet_unref(av_packet);

mpp_log("%d", obj->videoi);

}

//usleep(5000);

}

return ret;

}

After decoding, the decoded data is passed to displayObject.display():

void* decode_simple(void* data_)

{

auto *obj = (GetStream*) data_;

MpiDecLoopData *data = &obj->data;

AVPacket *av_packet = obj->av_packet;

RK_U32 pkt_done = 0;

RK_U32 pkt_eos = 0;

RK_U32 err_info = 0;

MPP_RET ret = MPP_OK;

MppCtx ctx = data->ctx;

MppApi *mpi = data->mpi;

// char *buf = data->buf;

MppPacket packet = NULL;

MppFrame frame = NULL;

size_t read_size = 0;

size_t packet_size = data->packet_size;

ret = mpp_packet_init(&packet, av_packet->data, av_packet->size);

mpp_packet_set_pts(packet, av_packet->pts);

clock_t startTime;

clock_t endTime;

do {

RK_S32 times = 5;

// send the packet first if packet is not done

if (!pkt_done) {

startTime = clock();

ret = mpi->decode_put_packet(ctx, packet);

if (MPP_OK == ret)

pkt_done = 1;

}

// then get all available frame and release

do {

RK_S32 get_frm = 0;

RK_U32 frm_eos = 0;

try_again:

ret = mpi->decode_get_frame(ctx, &frame);

if (MPP_ERR_TIMEOUT == ret) {

if (times > 0) {

times--;

msleep(2);

goto try_again;

}

mpp_err("decode_get_frame failed too much time\n");

}

if (MPP_OK != ret) {

mpp_err("decode_get_frame failed ret %d\n", ret);

break;

}

if (frame) {

if (mpp_frame_get_info_change(frame)) {

RK_U32 width = mpp_frame_get_width(frame);

RK_U32 height = mpp_frame_get_height(frame);

RK_U32 hor_stride = mpp_frame_get_hor_stride(frame);

RK_U32 ver_stride = mpp_frame_get_ver_stride(frame);

RK_U32 buf_size = mpp_frame_get_buf_size(frame);

mpp_log("decode_get_frame get info changed found\n");

mpp_log("decoder require buffer w:h [%d:%d] stride [%d:%d] buf_size %d",

width, height, hor_stride, ver_stride, buf_size);

ret = mpp_buffer_group_get_internal(&data->frm_grp, MPP_BUFFER_TYPE_ION);

if (ret) {

mpp_err("get mpp buffer group failed ret %d\n", ret);

break;

}

mpi->control(ctx, MPP_DEC_SET_EXT_BUF_GROUP, data->frm_grp);

mpi->control(ctx, MPP_DEC_SET_INFO_CHANGE_READY, NULL);

} else {

err_info = mpp_frame_get_errinfo(frame) | mpp_frame_get_discard(frame);

if (err_info) {

mpp_log("decoder_get_frame get err info:%d discard:%d.\n",

mpp_frame_get_errinfo(frame), mpp_frame_get_discard(frame));

}

data->frame_count++;

mpp_log("decode_get_frame get frame %d\n", data->frame_count);

if (!err_info){

if(obj->display_mode){

MppBuffer buff = mpp_frame_get_buffer(frame);

mpp_buffer_handle((char *)mpp_buffer_get_ptr(buff),obj->buffer);

//convertdata((char *)mpp_buffer_get_ptr(buff),obj->buffer,&format);

displayObject.display(obj->buffer,obj->display_id);

}

}

}

frm_eos = mpp_frame_get_eos(frame);

mpp_frame_deinit(&frame);

frame = NULL;

get_frm = 1;

}

// try get runtime frame memory usage

if (data->frm_grp) {

size_t usage = mpp_buffer_group_usage(data->frm_grp);

if (usage > data->max_usage)

data->max_usage = usage;

}

// if last packet is send but last frame is not found continue

if (pkt_eos && pkt_done && !frm_eos) {

msleep(10);

continue;

}

if (frm_eos) {

mpp_log("found last frame\n");

break;

}

if (data->frame_num > 0 && data->frame_count >= data->frame_num) {

data->eos = 1;

break;

}

if (get_frm)

continue;

break;

} while (obj->decode_flag);

endTime = clock();

std::cout << "id = " << obj->display_id << " decode time = " << endTime - startTime << std::endl;

if (pkt_done)

break;

/*

* why sleep here:

* mpi->decode_put_packet will fail when the internal packet queue is

* full, so wait for a packet to be consumed. Hardware decoding of one

* 1080p frame usually takes about 2 ms, so sleeping 3 ms here

* is enough.

*/

msleep(3);

} while (obj->decode_flag);

mpp_packet_deinit(&packet);

return nullptr;

}
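One caveat about the decode time printed by the function above: `clock()` reports the processor time consumed by the whole process, in clock ticks, not elapsed wall time, so time spent waiting on the hardware or inside `msleep` is largely not counted. A minimal sketch of wall-clock timing with `std::chrono::steady_clock` follows; `elapsed_us` is a hypothetical helper, not part of the project code.

```cpp
#include <chrono>

// Run a callable once and return the elapsed wall-clock time in microseconds.
template <typename Fn>
long long elapsed_us(Fn&& fn) {
    const auto start = std::chrono::steady_clock::now();
    fn();
    const auto end = std::chrono::steady_clock::now();
    return std::chrono::duration_cast<std::chrono::microseconds>(end - start).count();
}
```

Wrapping the put-packet/get-frame section in such a helper would give a per-packet latency that can be compared directly against the frame interval (about 33 ms at 30 fps).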

DRM display:

My display code is written in C style, as follows:

int init(int display_num)

{

uint32_t conn_id;

uint32_t crtc_id;

std::cout << "display init start" << std::endl;

displayObject.fd = open("/dev/dri/card0", O_RDWR | O_CLOEXEC);

res = drmModeGetResources(displayObject.fd);

crtc_id = res->crtcs[0];

conn_id = res->connectors[0];

conn = drmModeGetConnector(displayObject.fd, conn_id);

displayObject.buf.width = conn->modes[0].hdisplay;

displayObject.buf.height = conn->modes[0].vdisplay;

modeset_create_fb(displayObject.fd, &displayObject.buf);

drmModeSetCrtc(displayObject.fd, crtc_id, displayObject.buf.fb_id,0, 0, &conn_id, 1, &conn->modes[0]);

displayObject.displayLocation = new display_location[display_num];

if(split_screen(display_num) < 0 ){

std::cerr << "split_screen error" << std::endl;

return -1;

}

// 16-byte alignment

std::cout << "display init final" << std::endl;

return 0;

}

static int modeset_create_fb(int fd, struct buffer_object *bo)

{

struct drm_mode_create_dumb create = {};

struct drm_mode_map_dumb map = {};

create.width = bo->width;

create.height = bo->height;

create.bpp = 32;

drmIoctl(fd, DRM_IOCTL_MODE_CREATE_DUMB, &create);

bo->pitch = create.pitch;

bo->size = create.size;

bo->handle = create.handle;

drmModeAddFB(fd, bo->width, bo->height, 24, 32, bo->pitch,

bo->handle, &bo->fb_id);

map.handle = create.handle;

drmIoctl(fd, DRM_IOCTL_MODE_MAP_DUMB, &map);

bo->vaddr = (uint8_t *)mmap(0, create.size, PROT_READ | PROT_WRITE,

MAP_SHARED, fd, map.offset);

memset(bo->vaddr, 0xff, bo->size);

return 0;

}
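The framebuffer above is created with depth 24 and 32 bits per pixel, which as far as I understand corresponds to the XRGB8888 layout: one 32-bit word per pixel with the top byte unused. That is also why the `memset` with `0xff` produces a white screen. The sketch below shows how a pixel could be packed and how the row length in pixels follows from the pitch; both helper names are hypothetical.

```cpp
#include <cstdint>

// Pack 8-bit channels into one XRGB8888 pixel (depth 24, 32 bits per pixel).
// The top byte is padding and is left as 0 here.
inline std::uint32_t pack_xrgb8888(std::uint8_t r, std::uint8_t g, std::uint8_t b) {
    return (static_cast<std::uint32_t>(r) << 16) |
           (static_cast<std::uint32_t>(g) << 8) |
            static_cast<std::uint32_t>(b);
}

// Number of 32-bit pixels in one framebuffer row, derived from the pitch in bytes.
// The driver may pad rows, so this can be larger than the visible width.
inline std::uint32_t pixels_per_row(std::uint32_t pitch_bytes) {
    return pitch_bytes / 4;
}
```

Using `pixels_per_row(bo->pitch)` rather than `bo->width` as the row step is the safer choice when indexing the mapped buffer, because the two only match when the driver adds no row padding.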

Split the screen buffer obtained above into tiles:

static int split_screen(int display_num)

{

int width,height,display_mode;

int screen_w = displayObject.buf.width;

int screen_h = displayObject.buf.height;

if(display_num>0){

if(display_num == 1){

width = screen_w;

height = screen_h;

display_mode=1;

} else if(display_num == 2){

display_mode=2;

width = screen_w/2;

height = screen_h;

} else if(display_num <= 4){

display_mode=4;

width = screen_w/2;

height = screen_h/2;

} else if(display_num <= 6){

display_mode=6;

width = screen_w/3;

height = screen_h/2;

} else if(display_num <= 9){

display_mode=9;

width = screen_w/3;

height = screen_h/3;

} else{

std::cerr << "split_screen error" << std::endl;

return -1;

}

}else{

display_mode=0;

return 0;

}

displayObject.width = width;

displayObject.height = height;

displayObject.display_mode = display_mode;

for(int i = 0; i < display_num; ++i)

{

displayObject.displayLocation[i].x0 = (width * i) % screen_w;

displayObject.displayLocation[i].y0 = height * (width * i / screen_w);

displayObject.displayLocation[i].x1 = displayObject.displayLocation[i].x0 + width - 1;

displayObject.displayLocation[i].y1 = displayObject.displayLocation[i].y0 + height - 1;

std::cout << "i,x0,y0,x1,y1" << i <<","<< displayObject.displayLocation[i].x0 <<","<< displayObject.displayLocation[i].y0 <<","<< displayObject.displayLocation[i].x1 <<","<< displayObject.displayLocation[i].y1 <<std::endl;

}

//displayObject.location_y = (screen_h - (height *width)/screen_w )/2;

displayObject.rga_align.height = ((height+15)/16)*16;

displayObject.rga_align.width = ((width+15)/16)*16;

return 0;

}
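One thing to watch in the function above: the tile origin is computed as `(width * i) % screen_w`, which only works when the screen width is an exact multiple of the tile width. On a 1366-pixel-wide screen with 3 columns, for instance, the tile width is 455 and tile 3 would get `x0 = 1365` instead of wrapping to the next row. A minimal alternative sketch that derives the position from the column and row index is shown below; `Rect`, `grid_layout`, and `align16` are hypothetical names, and the caller is assumed to pick the column and row counts for the chosen split mode.

```cpp
#include <vector>

struct Rect { int x0, y0, x1, y1; };  // inclusive corners, in pixels

// Round up to the next multiple of 16 (same formula as rga_align above).
inline int align16(int v) { return (v + 15) / 16 * 16; }

// Lay out `count` tiles in a cols x rows grid, filling row by row.
inline std::vector<Rect> grid_layout(int screen_w, int screen_h,
                                     int cols, int rows, int count) {
    std::vector<Rect> tiles;
    if (cols <= 0 || rows <= 0 || count <= 0 || count > cols * rows) return tiles;
    const int w = screen_w / cols;
    const int h = screen_h / rows;
    for (int i = 0; i < count; ++i) {
        const int col = i % cols;  // column index inside the grid
        const int row = i / cols;  // row index inside the grid
        tiles.push_back(Rect{col * w, row * h, col * w + w - 1, row * h + h - 1});
    }
    return tiles;
}
```

For the 9-way split the call would be `grid_layout(screen_w, screen_h, 3, 3, 9)`; any pixels left over from the integer division stay unused at the right and bottom edges.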

Draw each stream into its tile, based on the stream id and the split layout from above:

static void lcd_fill(char *buff,int obj_id)

{

int id = obj_id-1;

//std::cout << "i,x0,y0,x1,y1" << id <<","<< displayObject.displayLocation[id].x0 <<","<< displayObject.displayLocation[id].y0 <<","<< displayObject.displayLocation[id].x1 <<","<< displayObject.displayLocation[id].y1 <<std::endl;

int start_x = displayObject.displayLocation[id].x0;

int start_y = displayObject.displayLocation[id].y0;

int end_x = displayObject.displayLocation[id].x1;

int end_y = displayObject.displayLocation[id].y1;

int width = displayObject.buf.width;

int height = displayObject.buf.height;

int *screen_base = (int *)displayObject.buf.vaddr;

int *src_buff = (int*)buff;

int i = 0,temp,x;

if (end_x >= width)

{

std::cout << "end_x >= screen width : " << end_x << std::endl;

end_x = width - 1;

}

if (end_y >= height)

{

std::cout << "end_y >= screen height : " << end_y << std::endl;

end_y = height - 1;

}

temp = start_y * width; // Offset of the first pixel in the starting row

for ( ; start_y <= end_y; start_y++, temp+=width) {

for (x = start_x; x <= end_x; x++,i++)

{

screen_base[temp + x] = src_buff[i];

}

}

}
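The copy loop above reads the source buffer as one contiguous run and steps through the screen by its width in pixels. That is fine when the source rows are exactly one tile wide and the framebuffer has no row padding, but the source buffer is allocated with the 16-aligned `rga_align` size, so the two can differ. A minimal stride-aware sketch is shown below; `blit32` is a hypothetical helper, both strides are given in pixels, and the caller is assumed to have clipped the rectangle to the destination.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>

// Copy a w x h block of 32-bit pixels from src into dst at (dst_x, dst_y).
// Strides are in pixels and may be larger than the visible width.
inline void blit32(std::uint32_t* dst, int dst_stride, int dst_x, int dst_y,
                   const std::uint32_t* src, int src_stride, int w, int h) {
    for (int row = 0; row < h; ++row) {
        std::memcpy(dst + static_cast<std::size_t>(dst_y + row) * dst_stride + dst_x,
                    src + static_cast<std::size_t>(row) * src_stride,
                    static_cast<std::size_t>(w) * sizeof(std::uint32_t));
    }
}
```

Copying one row at a time with `memcpy` is also usually faster than the per-pixel loop, which may help with the tiles that struggle to keep up.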

VI. Results

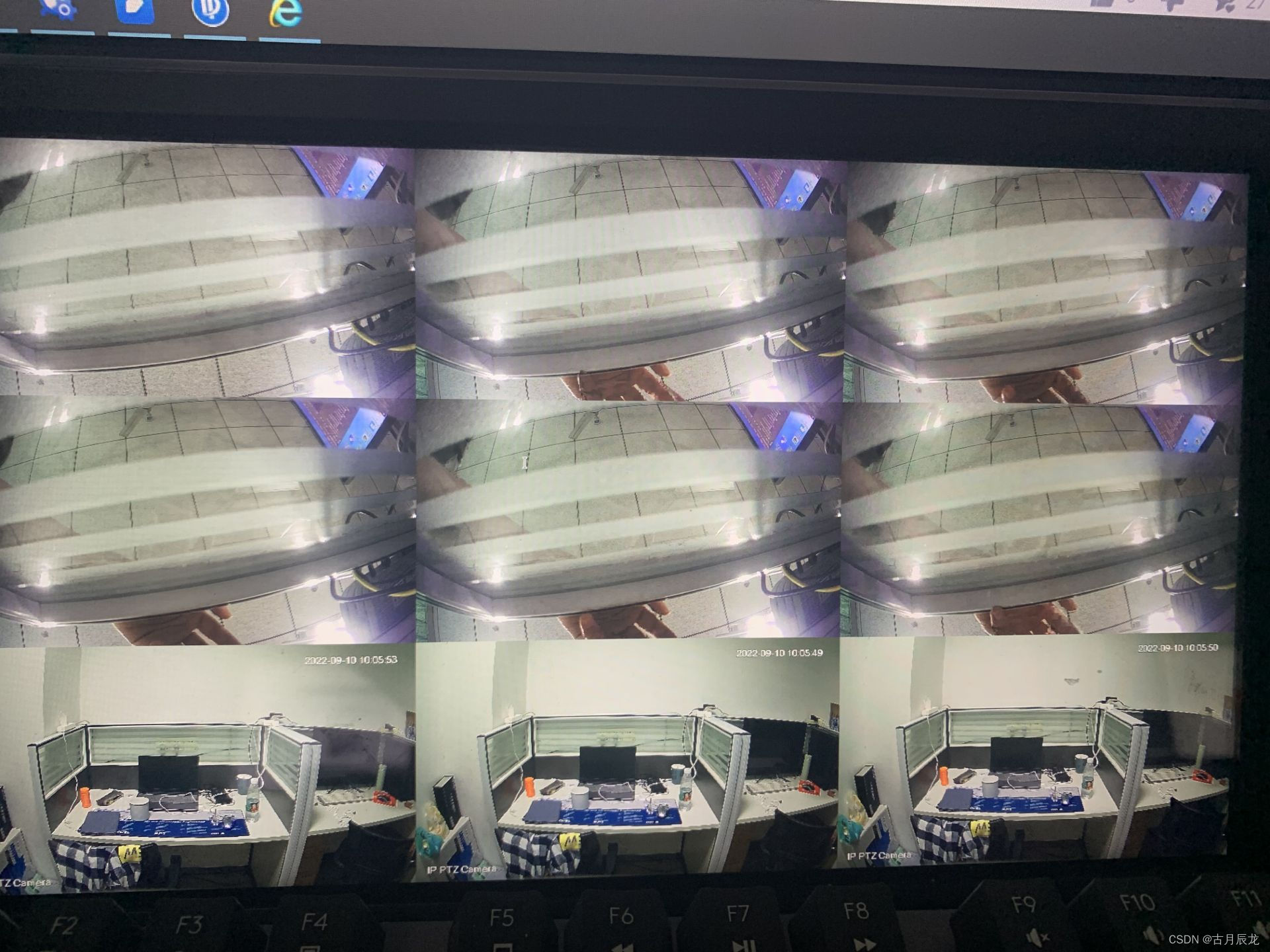

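This demo uses two cameras, and the tiles are not all reading one and the same stream. The 4-way split keeps up in real time well, but in the 9-way split one tile cannot keep up.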
