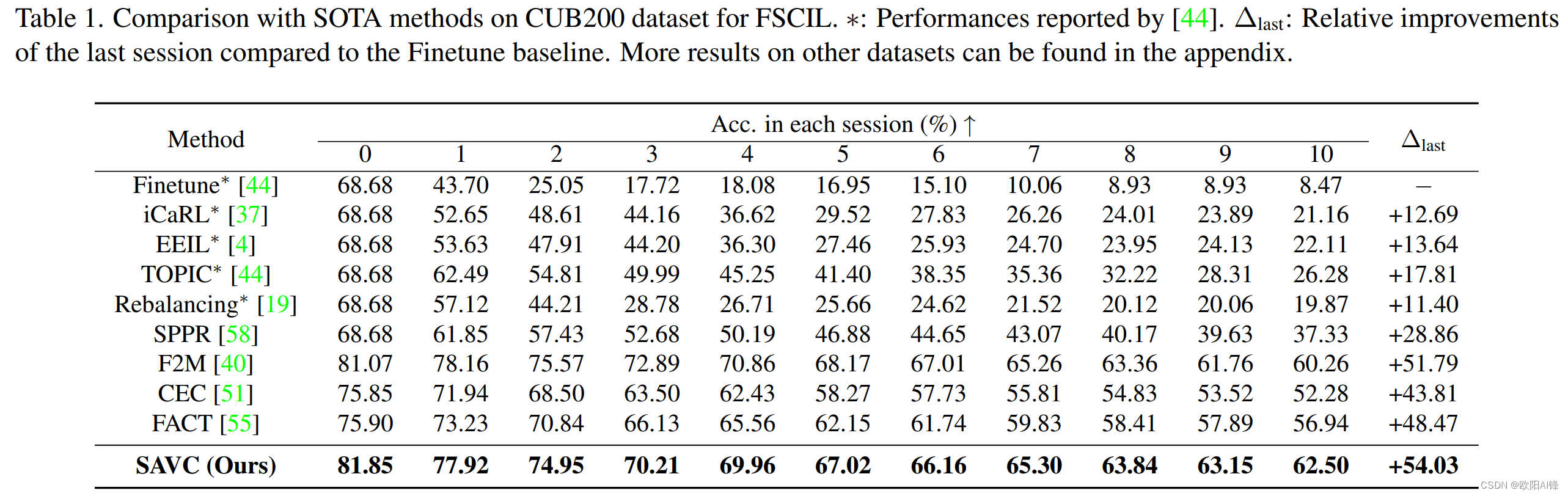

Few-shot class-incremental learning (FSCIL) aims to continually learn to classify new classes from a limited number of samples without forgetting the old classes.
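To make the setting concrete, here is a rough illustration of a common simple FSCIL baseline. It is not the method discussed in this post. In this baseline, the backbone is frozen after the base session and each class is represented by one mean-feature prototype. The sketch assumes features have already been extracted. `PrototypeClassifier`, `add_classes`, and the toy 2-D features are hypothetical names chosen for illustration.

```python
import numpy as np

class PrototypeClassifier:
    """Hypothetical nearest-class-mean classifier over L2-normalised features."""

    def __init__(self):
        self.prototypes = {}  # class id -> unit-norm mean feature

    def add_classes(self, features, labels):
        # Each session only adds prototypes for its own classes;
        # prototypes of earlier classes are never modified.
        features = features / np.linalg.norm(features, axis=1, keepdims=True)
        for c in np.unique(labels):
            mean = features[labels == c].mean(axis=0)
            self.prototypes[int(c)] = mean / np.linalg.norm(mean)

    def predict(self, features):
        features = features / np.linalg.norm(features, axis=1, keepdims=True)
        classes = np.array(sorted(self.prototypes))
        protos = np.stack([self.prototypes[c] for c in classes])
        scores = features @ protos.T  # cosine similarity to every prototype
        return classes[scores.argmax(axis=1)]

clf = PrototypeClassifier()
# Base session: classes 0 and 1 with several samples each (toy 2-D "features").
base_x = np.array([[1.0, 0.1], [0.9, -0.1], [0.1, 1.0], [-0.1, 0.9]])
base_y = np.array([0, 0, 1, 1])
clf.add_classes(base_x, base_y)
# Incremental session: class 2 from a single sample (1-shot).
clf.add_classes(np.array([[-1.0, 0.0]]), np.array([2]))
print(clf.predict(np.array([[1.0, 0.0], [0.0, 1.0], [-0.9, 0.1]])))  # [0 1 2]
```

Because old prototypes are left untouched, the classifier itself does not overwrite base classes. How well novel classes are then separated depends almost entirely on the quality of the base-session representation.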

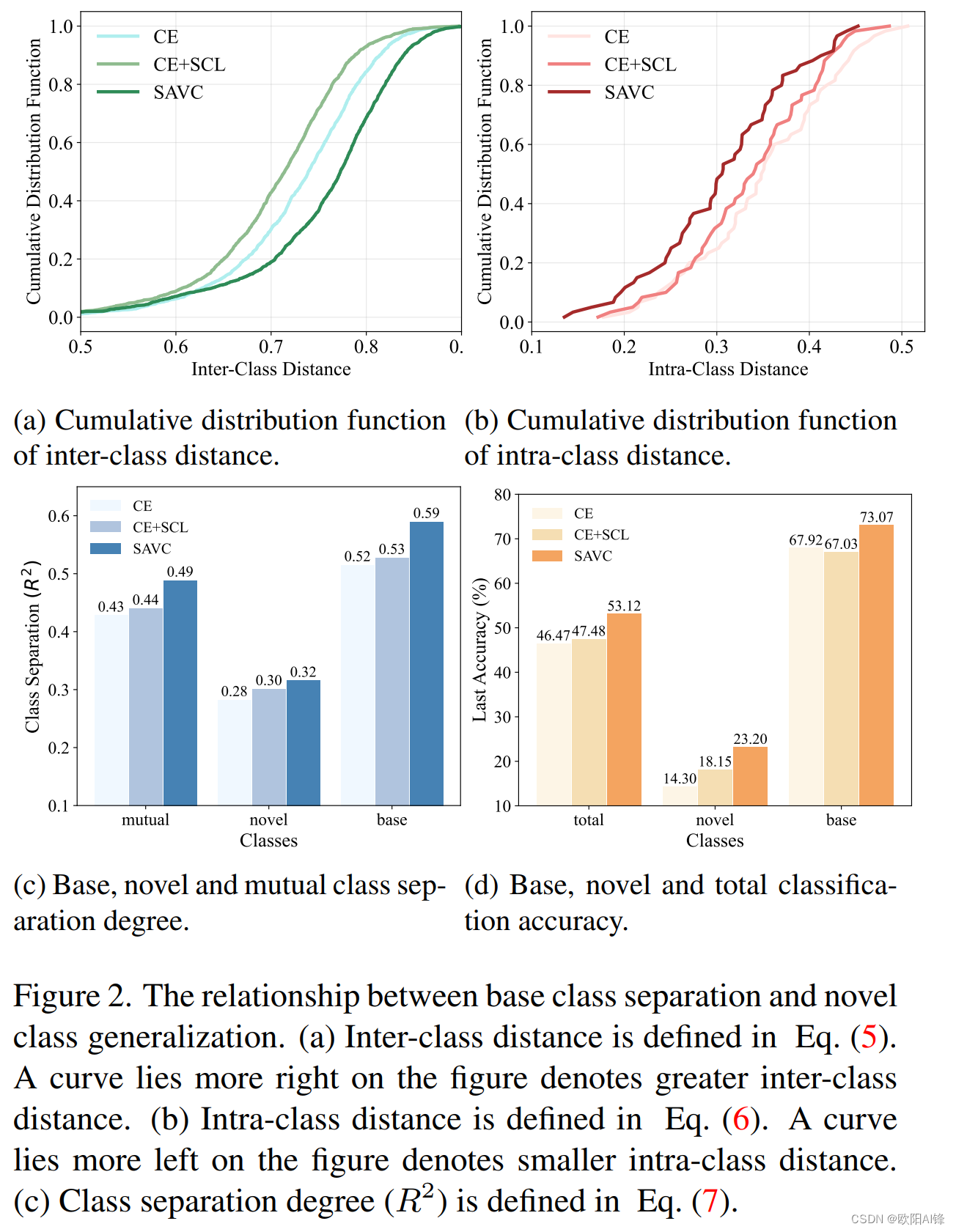

However, in this work, we find that the cross-entropy (CE) loss is not ideal for base-session training: it yields poor class separation in the learned representations, which in turn degrades generalization to novel classes.
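"Class separation in terms of representations" can be quantified in several ways. The sketch below uses one simple, hypothetical diagnostic: the mean distance between class centroids divided by the mean distance of samples to their own centroid. Higher values mean classes are more compact and farther apart. The function name `class_separation` and the synthetic clusters are assumptions for illustration. They are not the metric used in the paper.

```python
import numpy as np

def class_separation(features, labels):
    """Hypothetical diagnostic: mean inter-centroid distance / mean intra-class spread.

    Requires at least two classes and non-degenerate (non-zero) intra-class spread.
    """
    classes = np.unique(labels)
    centroids = np.stack([features[labels == c].mean(axis=0) for c in classes])
    # Average distance of each sample to its own class centroid, averaged over classes.
    intra = np.mean([
        np.linalg.norm(features[labels == c] - centroids[i], axis=1).mean()
        for i, c in enumerate(classes)
    ])
    # Average pairwise distance between distinct class centroids
    # (the zero diagonal contributes nothing to the sum).
    diffs = centroids[:, None, :] - centroids[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    k = len(classes)
    inter = dists.sum() / (k * (k - 1))
    return inter / intra

rng = np.random.default_rng(0)
labels = np.repeat([0, 1, 2], 50)
centers = np.array([[0.0, 0.0], [5.0, 0.0], [0.0, 5.0]])
tight = centers[labels] + 0.3 * rng.standard_normal((150, 2))  # well-separated classes
loose = centers[labels] + 2.0 * rng.standard_normal((150, 2))  # heavily overlapping classes
print(class_separation(tight, labels) > class_separation(loose, labels))  # True
```

Tracking such a ratio on base-session features is one way to check the claim above empirically, for example by comparing a CE-trained backbone against an alternative training objective.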

Existing methods:

