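1. Bucket index background

The bucket index is a critical data structure in RGW: it stores the index data for each bucket. By default, the entire index of a bucket lives in a single shard object (the shard count is 0, meaning no sharding; the entries are stored mainly as OMAP keys in LevelDB). As the number of objects in a bucket grows, that shard object keeps growing too, and once it becomes too large it causes a range of problems.

2. Problems and failures

2.1 Failure symptoms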

Flapping OSDs when RGW buckets have millions of objects

- Possible causes

  - The first issue is that when RGW buckets have millions of objects, their bucket index shard RADOS objects become very large, with a high number of OMAP keys stored in leveldb. Operations like deep-scrub and bucket index listing then take a long time to complete, and this triggers OSDs to flap. If sharding is not used the issue becomes worse, because a single RADOS index object holds all the OMAP keys.

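RGW index data is stored as OMAP entries in the LevelDB of the node hosting the OSD. When a single bucket holds millions of objects, operations such as deep-scrub and bucket listing consume a large share of disk resources and can make the corresponding OSD misbehave. If the bucket index is not sharded (sharding splits a bucket's index into several RADOS objects, so its OMAP keys are spread across the LevelDB instances of multiple OSDs), failures become very likely as the data grows.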

  - The second issue is that a large number of DELETEs leaves loads of stale data in OMAP. This triggers leveldb compaction all the time, which is single threaded and not optimal for this kind of workload, and it causes osd_op_threads to suicide because the OSD is always compacting. Hence the OSDs start flapping.

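When RGW handles a large number of DELETE requests, the underlying LevelDB compacts frequently (compaction is expensive in terms of disk performance). Because compaction in LevelDB is single threaded, the osd_op_threads timeout limit is easily reached and the OSD kills itself.

Common problems include:

- When the index pool is scrubbed or deep-scrubbed, an oversized shard object consumes a large share of the underlying storage device's performance and causes I/O requests to time out.

- When deep-scrub takes too long, requests are blocked, large numbers of HTTP requests time out with 50x errors, and the availability of the whole RGW service suffers.

- When data must be recovered after a disk or OSD failure, recovering a large shard object can exhaust the storage node, and OSD response timeouts may even cascade into a cluster-wide avalanche.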
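To see how large a given bucket's index has grown, the OMAP keys of its index objects can be counted directly. The sketch below assumes that index objects are named `.dir.<bucket_id>` when unsharded and `.dir.<bucket_id>.<shard>` when sharded. The pool name `.rgw.buckets.index` and the bucket ID `default.4122.1` in the usage comments are placeholders, so look up your own values first (for example with `radosgw-admin bucket stats --bucket=<name>`). The helper names and the `RADOS` override variable are hypothetical.

```shell
# Build the RADOS object name of a bucket index object.
# $1 = bucket ID, $2 = shard number (omit for an unsharded bucket)
index_object() {
  if [ -n "${2:-}" ]; then
    printf '.dir.%s.%s\n' "$1" "$2"
  else
    printf '.dir.%s\n' "$1"
  fi
}

# Count the OMAP keys held by one index object.
# $1 = index pool name, $2 = index object name
count_omap_keys() {
  ${RADOS:-rados} -p "$1" listomapkeys "$2" | wc -l
}

# Usage (placeholder pool and bucket ID):
#   count_omap_keys .rgw.buckets.index "$(index_object default.4122.1)"
#   count_omap_keys .rgw.buckets.index "$(index_object default.4122.1 3)"
```

A count in the millions on a single object is the situation described above.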

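2.2 Root cause tracing

When the OMAP on the OSD holding a bucket index grows too large, any fault that crashes the OSD process calls for on-the-spot firefighting to get the OSD back in service as quickly as possible.

First determine the OMAP size of the affected OSD. If it is too large, the OSD spends a great deal of time and resources loading the LevelDB data at startup and may fail to start (it hits the timeout and kills itself).

Starting such an OSD also needs a very large amount of memory, so make sure enough is reserved (around 40 GB of physical memory; fall back to swap if that is not available).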
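A quick way to find which OSDs on a node carry oversized OMAP directories is to rank them by size. This is a minimal sketch that assumes the FileStore layout used elsewhere in this article (`/var/lib/ceph/osd/ceph-$id/current/omap`); the `OSD_ROOT` variable and the function names are illustrative, not part of Ceph.

```shell
# Root of the OSD data directories (assumption: FileStore layout).
OSD_ROOT="${OSD_ROOT:-/var/lib/ceph/osd}"

# Print the OMAP directory of one OSD. $1 = OSD id
omap_dir() {
  printf '%s/ceph-%s/current/omap\n' "$OSD_ROOT" "$1"
}

# List every local OSD's OMAP directory with its size in KB, largest first.
report_omap_sizes() {
  for d in "$OSD_ROOT"/ceph-*/current/omap; do
    [ -d "$d" ] || continue   # skip when the glob matched nothing
    du -sk "$d"
  done | sort -rn
}

# Usage:
#   report_omap_sizes
#   du -sh "$(omap_dir 12)"
```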

3. Temporary workarounds

3.1 Disable scrub and deep-scrub to improve cluster stability

$ ceph osd set noscrub

$ ceph osd set nodeep-scrub
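These flags stay set until they are explicitly cleared, so scrubbing should be re-enabled once the index problem is under control. A small sketch follows; the `scrub_flags` helper and the `CEPH` override variable are hypothetical conveniences, while the underlying `ceph osd set` and `ceph osd unset` commands are the standard ones.

```shell
# Set or clear both scrub flags in one call.
# $1 = "set" (disable scrubbing) or "unset" (re-enable scrubbing)
scrub_flags() {
  for flag in noscrub nodeep-scrub; do
    ${CEPH:-ceph} osd "$1" "$flag"
  done
}

# Usage:
#   scrub_flags set      # during the incident
#   scrub_flags unset    # after the cluster is healthy again
```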

3.2 Raise the timeout parameters to make OSD suicide less likely

osd_op_thread_timeout = 90 #default is 15

osd_op_thread_suicide_timeout = 2000 #default is 150

If the filestore op threads are also hitting timeouts:

filestore_op_thread_timeout = 180 #default is 60

filestore_op_thread_suicide_timeout = 2000 #default is 180

The same can be done for the recovery thread:

osd_recovery_thread_timeout = 120 #default is 30

osd_recovery_thread_suicide_timeout = 2000
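These settings normally go in the [osd] section of ceph.conf and are picked up when the OSD restarts. As a sketch, they can also be pushed to running OSDs with `injectargs`. This assumes the options are honoured at runtime on your release, which is not guaranteed (some options are only read at startup), so treat it as a stopgap. The function name and the `CEPH` override variable are hypothetical.

```shell
# Push the op thread timeouts to all running OSDs.
# $1 = osd_op_thread_timeout, $2 = osd_op_thread_suicide_timeout (seconds)
inject_osd_timeouts() {
  ${CEPH:-ceph} tell 'osd.*' injectargs \
    "--osd_op_thread_timeout $1 --osd_op_thread_suicide_timeout $2"
}

# Usage, with the values from this section:
#   inject_osd_timeouts 90 2000
```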

3.3 Manually compact OMAP

If the OSD can be stopped, you can compact it. Ceph 0.94.6 or later is recommended, because earlier versions have a bug: https://github.com/ceph/ceph/pull/7645/files

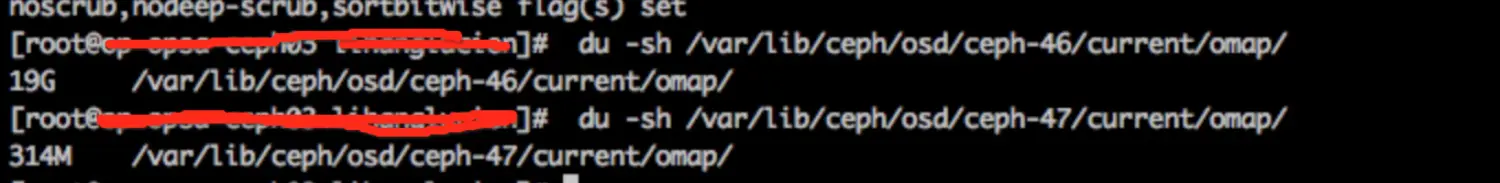

  - The third temporary step can be taken if OSDs have very large OMAP directories. You can verify this with the command du -sh /var/lib/ceph/osd/ceph-$id/current/omap, then do a manual leveldb compaction for those OSDs:

    - ceph tell osd.$id compact, or

    - ceph daemon osd.$id compact, or

    - Add leveldb_compact_on_mount = true in the [osd.$id] or [osd] section and restart the OSD.

    - This makes sure that the OSD compacts the leveldb and then comes back up/in, which really helps.

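# Set noout

$ ceph osd set noout

# Stop the OSD service

$ systemctl stop ceph-osd@<osd-id>

# Add the following setting to the corresponding [osd.id] section of ceph.conf

leveldb_compact_on_mount = true

# Start the OSD service

$ systemctl start ceph-osd@<osd-id>

# Watch the result with ceph -s, ideally while following the OSD's log with tail -f. Wait until all PGs are active+clean before continuing.

$ ceph -s

# Check the OMAP size once compaction has finished:

$ du -sh /var/lib/ceph/osd/ceph-$id/current/omap

# Remove the temporary leveldb_compact_on_mount setting from the OSD's configuration

# Unset noout (depending on the situation; in production it is advisable to keep noout set):

$ ceph osd unset noout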

4. Permanent solution

4.1 Plan bucket shards in advance

index pool…
