1. Original code

import org.apache.spark.SparkConf
import org.apache.spark.sql.{DataFrame, SparkSession}

object hivesql {

  def main(args: Array[String]): Unit = {
    val sparkConf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("hivesql")
    val sparkSession: SparkSession = SparkSession.builder()
      .config(sparkConf)
      .config("hive.metastore.uris", "thrift://****:9083")
      // .config("spark.sql.hive.metastore.version","1.2.1")
      // .config("spark.sql.hive.metastore.jars","C:\\Users\\Administrator\\Downloads\\hivejar\\hive1_2_1jars\\*")
      .enableHiveSupport() // needs the spark-hive classes on the classpath
      .getOrCreate()

    val df: DataFrame = sparkSession.sql("show tables")
    // sparkSession.sql("show tables").show
    df.show()
    df.write.mode("overwrite").save("C:\\data")
    sparkSession.close()
  }
}

The Maven pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>

  <groupId>org.example</groupId>
  <artifactId>scala_test</artifactId>
  <version>1.0-SNAPSHOT</version>

  <properties>
    <java.version>1.8</java.version>
    <maven.compiler.source>${java.version}</maven.compiler.source>
    <maven.compiler.target>${java.version}</maven.compiler.target>
    <!-- <flink.version>1.13.0</flink.version>-->
    <scala.version>2.12</scala.version>
    <!-- <hadoop.version>3.1.3</hadoop.version>-->
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
  </properties>

  <dependencies>
    <dependency>
      <groupId>mysql</groupId>
      <artifactId>mysql-connector-java</artifactId>
      <version>5.1.27</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-sql_2.12</artifactId>
      <version>3.0.0</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-core_2.12</artifactId>
      <version>3.0.0</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-yarn_2.12</artifactId>
      <version>3.0.0</version>
    </dependency>
  </dependencies>
</project>

2. Errors reported when running

Error:(1, 19) object spark is not a member of package org.apache
import org.apache.spark.SparkConf

Error:(2, 19) object spark is not a member of package org.apache
import org.apache.spark.sql.{DataFrame, SparkSession}

Error:(7, 20) not found: type SparkConf
val sparkConf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("hivesql")

Error:(7, 36) not found: type SparkConf
val sparkConf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("hivesql")

Error:(8, 23) not found: type SparkSession
val sparkSession: SparkSession = SparkSession.builder()

Error:(8, 38) not found: value SparkSession
val sparkSession: SparkSession = SparkSession.builder()

Error:(16, 13) not found: type DataFrame
val df: DataFrame = sparkSession.sql("select * from default.student")

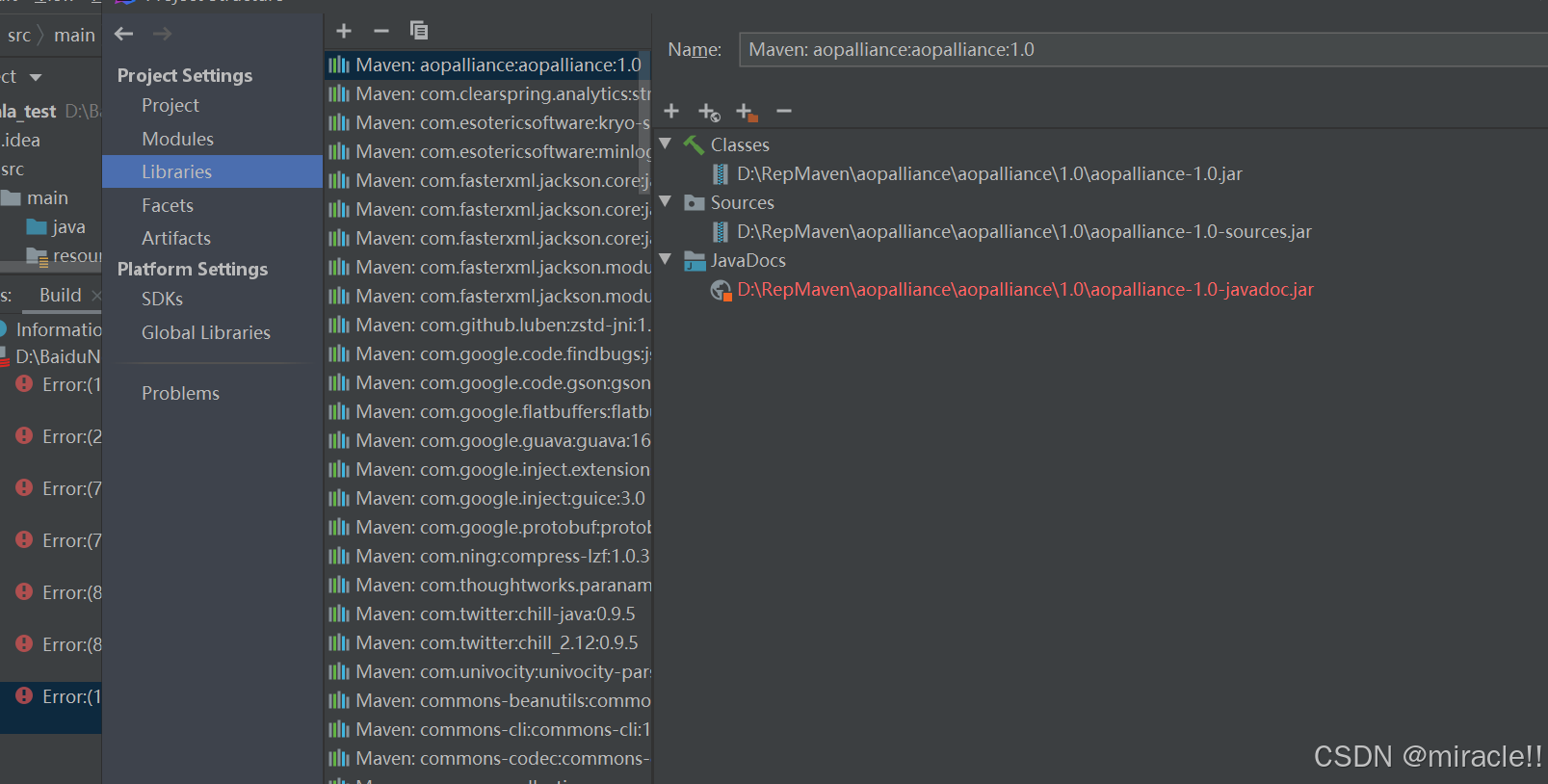

3. Solution

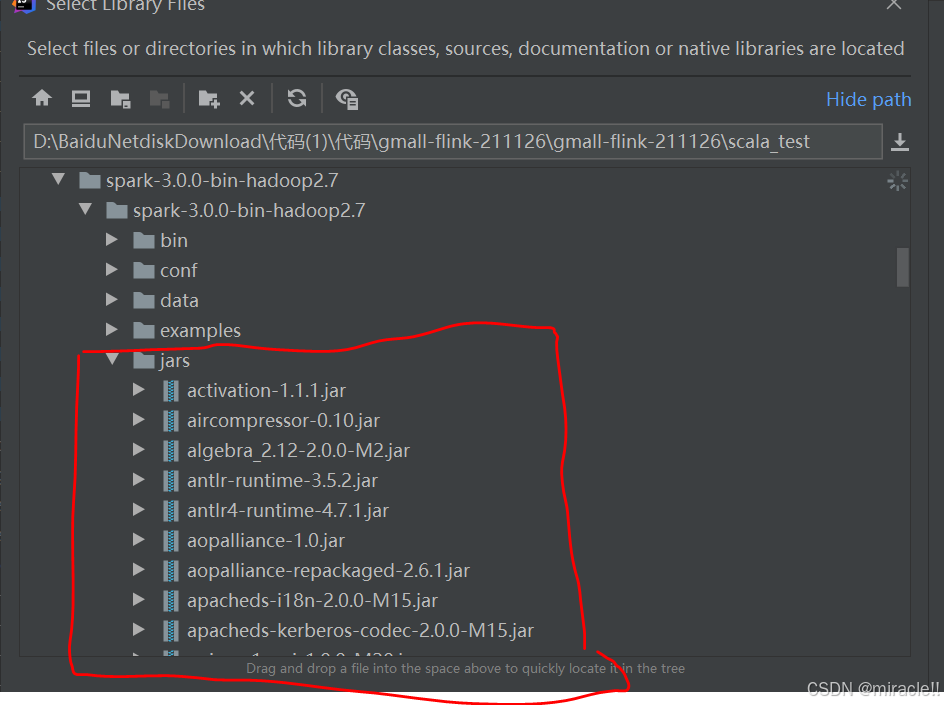

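If the contents of pom.xml have been checked and are correct, the jars that Maven downloaded may be incomplete. Comment out the Spark dependencies in pom.xml and add the jars to the project in IDEA as external libraries instead. They can be downloaded from the official Spark website; select all of the jars under spark3-3.0.0-hadoop2.7/jars.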

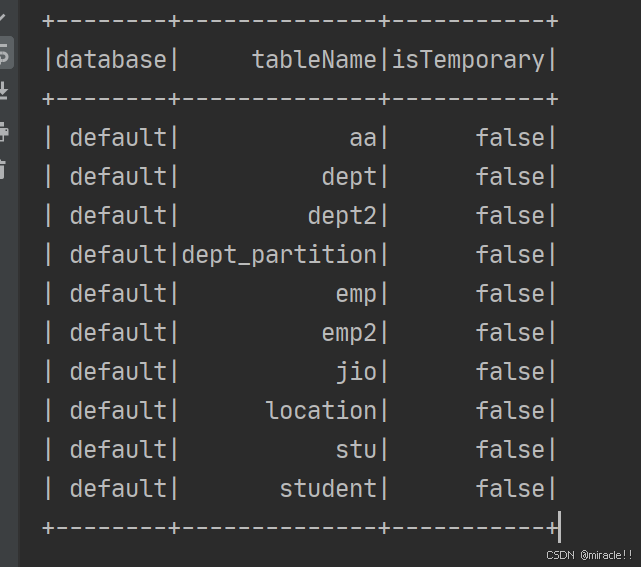
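A quick way to check whether the Spark jars are actually visible to the project is to try loading a few classes by name. The sketch below is only an illustration, not part of the original fix: the object name `ClasspathCheck` and the helper `isOnClasspath` are made up for this example, and the Hive class name is an assumption about what Spark 3.0.0 looks for when `enableHiveSupport()` is called. Because it does not import anything from Spark, it compiles even while the errors above are still present.

```scala
object ClasspathCheck {

  // Hypothetical helper: returns true when the named class can be loaded
  // from the classpath this program is running with. The class is loaded
  // without being initialized, so no Spark code is executed.
  def isOnClasspath(className: String): Boolean =
    try {
      Class.forName(className, false, getClass.getClassLoader)
      true
    } catch {
      // ClassNotFoundException: the class itself is missing.
      // LinkageError: the class was found but something it depends on is missing.
      case _: ClassNotFoundException | _: LinkageError => false
    }

  def main(args: Array[String]): Unit = {
    val required = Seq(
      "org.apache.spark.SparkConf",        // shipped in the spark-core jar
      "org.apache.spark.sql.SparkSession", // shipped in the spark-sql jar
      // Assumed name of a class from the spark-hive jar, needed for Hive support.
      "org.apache.spark.sql.hive.HiveSessionStateBuilder"
    )
    required.foreach { name =>
      val status = if (isOnClasspath(name)) "found  " else "MISSING"
      println(s"$status $name")
    }
  }
}
```

If any line is reported as MISSING, the corresponding jar has not been attached to the module (or Maven did not download it completely), which is consistent with the "object spark is not a member of package org.apache" errors.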

After this change, the program ran without reporting any errors.
