eclipse中安装hadoop插件实现词频统计

我使用的hadoop和插件都是2.8.3.我之前安装过的过2.9.1,但是苦于没有插件,又不想自己编译,只好更换版本了。

一、插件配置

1. 将jar包放入eclipse的plugins目录中,打开eclipse

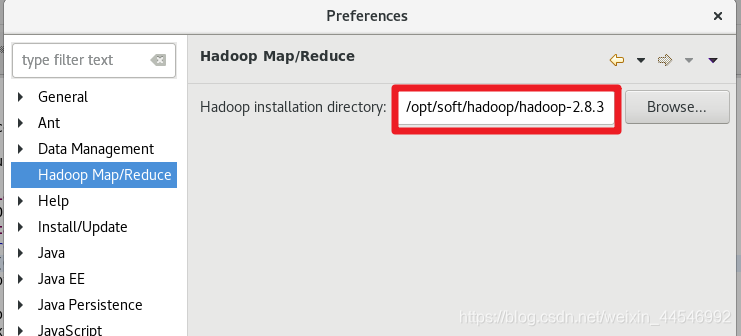

2.在windows>preferences下可看见hadoop Map/Reduce界面,路径选择hadoop解压后的路径。

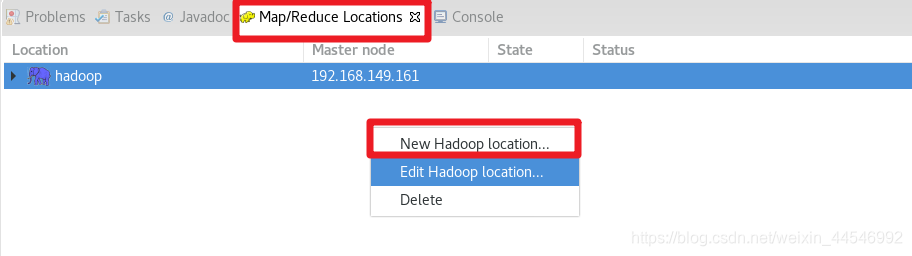

3.选择Windows->show view->others下的MapReduce Locations

4.新建一个配置

在eclipse界面中最下面一栏,选择Map/Reduce Location,在空白位置鼠标右击,选择new hadoop location 。这里我已经建好一个,所以有显示。

在eclipse界面中最下面一栏,选择Map/Reduce Location,在空白位置鼠标右击,选择new hadoop location 。这里我已经建好一个,所以有显示。

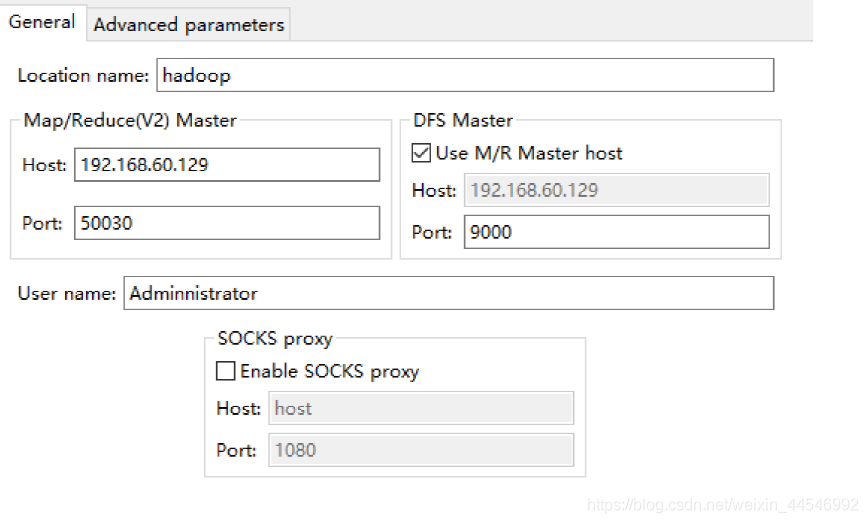

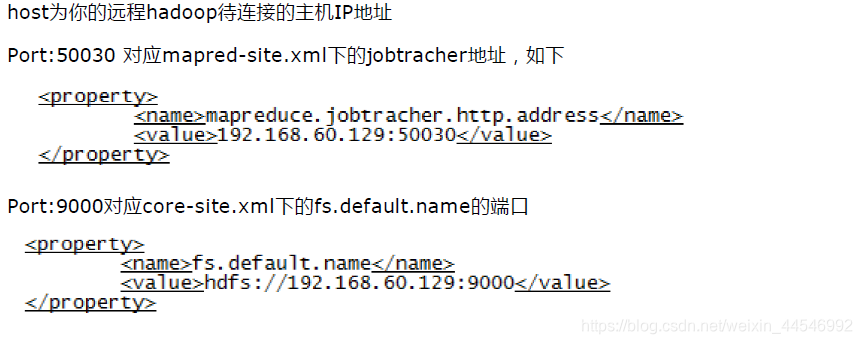

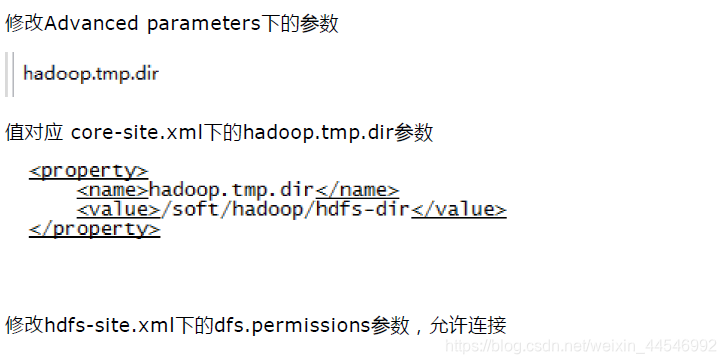

5.修改配置

若是50030那个参数为设置,则可以使用默认的50070端口。

user name是拥有hadoop的用户,我使用的是root

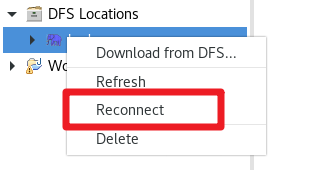

6.选择连接

连接正常的话不会报任何错。

如果发生错误,从两方面来考虑:①hadoop的插件是否是正确能用的,②配置是否发生错误。

二、实现词频统计

1.源码

import java.io.IOException;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

public static class TokenizerMapper

extends Mapper<Object, Text, Text, IntWritable>{

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context

) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one);

}

}

}

public static class IntSumReducer

extends Reducer<Text,IntWritable,Text,IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values,

Context context

) throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

result.set(sum);

context.write(key, result);

}

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

if (otherArgs.length != 2) {

System.err.println(otherArgs.length);

System.err.println("Usage: wordcount <in> <out>");

System.exit(2);

}

Job job = new Job(conf, "word count");

job.setJarByClass(WordCount.class);

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

job.setReducerClass(IntSumReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path(otherArgs[0]));

FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}2.上传文件

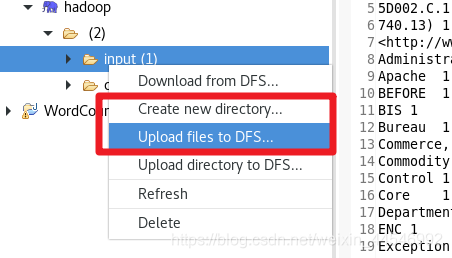

先创建一个input目录用于存放上传文件弟弟目录。然后再input目录上右击选择upload files to DFS。

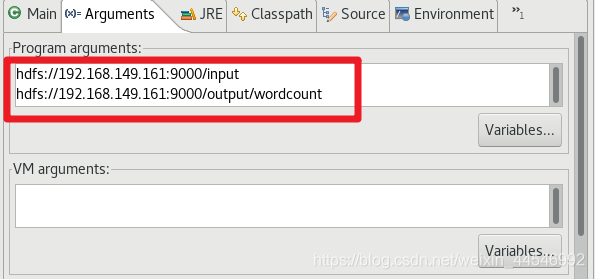

3.运行

右击wordcount,选择run as run->configurations

4.结果

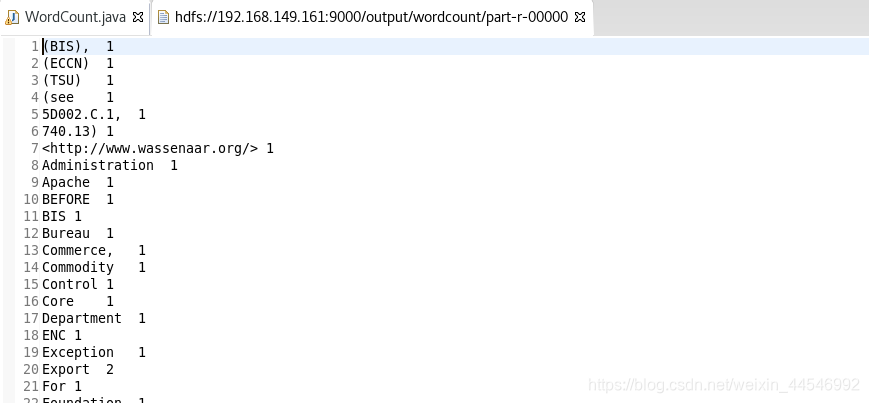

在左侧DFS Location中一级一级寻找,能找到wordCount目录,其中pat-r-xxxxx是输出文件

1379

1379

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?