Python 爬虫目录

1、最新 Python3 爬取前程无忧招聘网 lxml+xpath

2、Python3 Mysql保存爬取的数据 正则

3、Python3 用requests 库 和 bs4 库 最新爬豆瓣电影Top250

4、Python Scrapy 爬取 前程无忧招聘网

5、Python3 爬取房价 采用lxml+xpath

6、持续更新…

本文更新于2021年06月01日

本文选用的是lxml模块,xpath语法提取数据

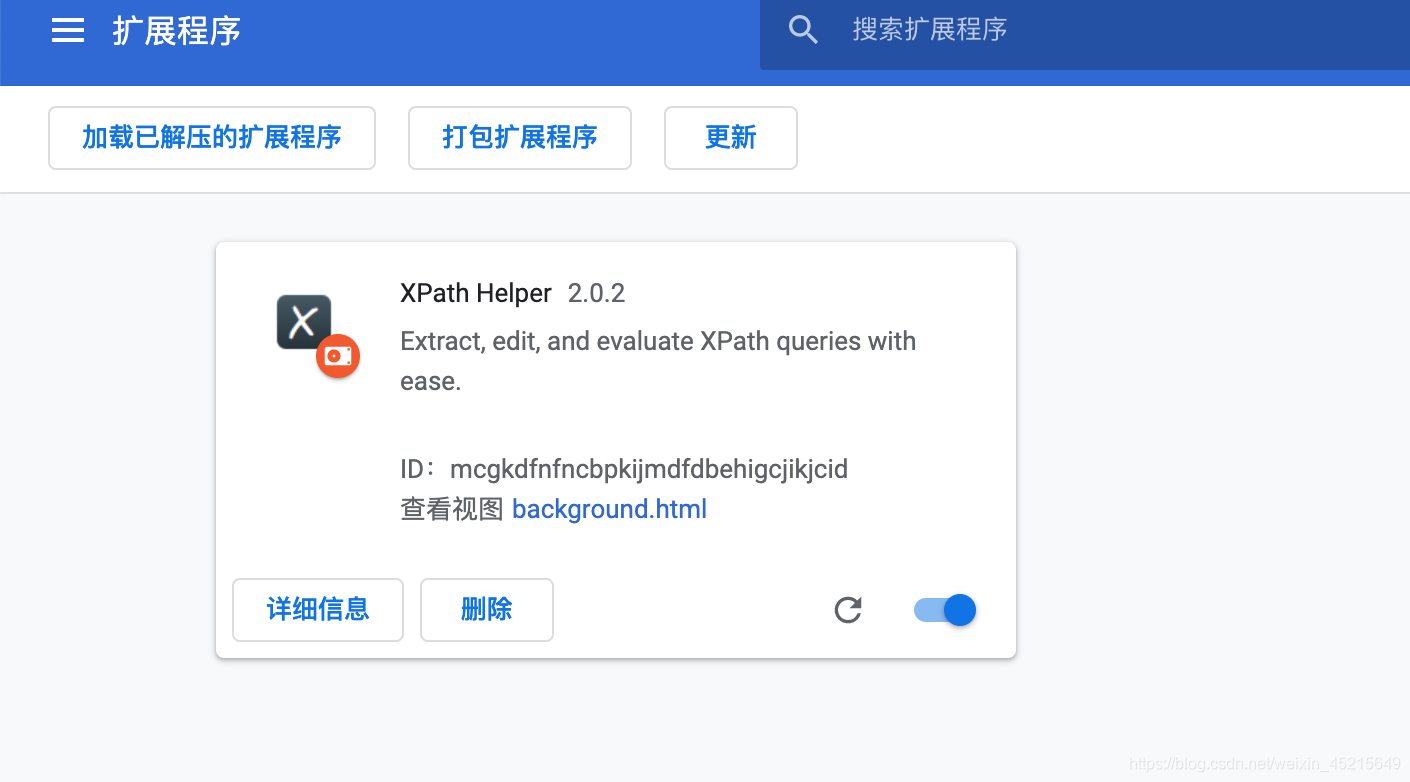

推荐谷歌用户一个可以帮助xpath调试的插件

Xpath Helper

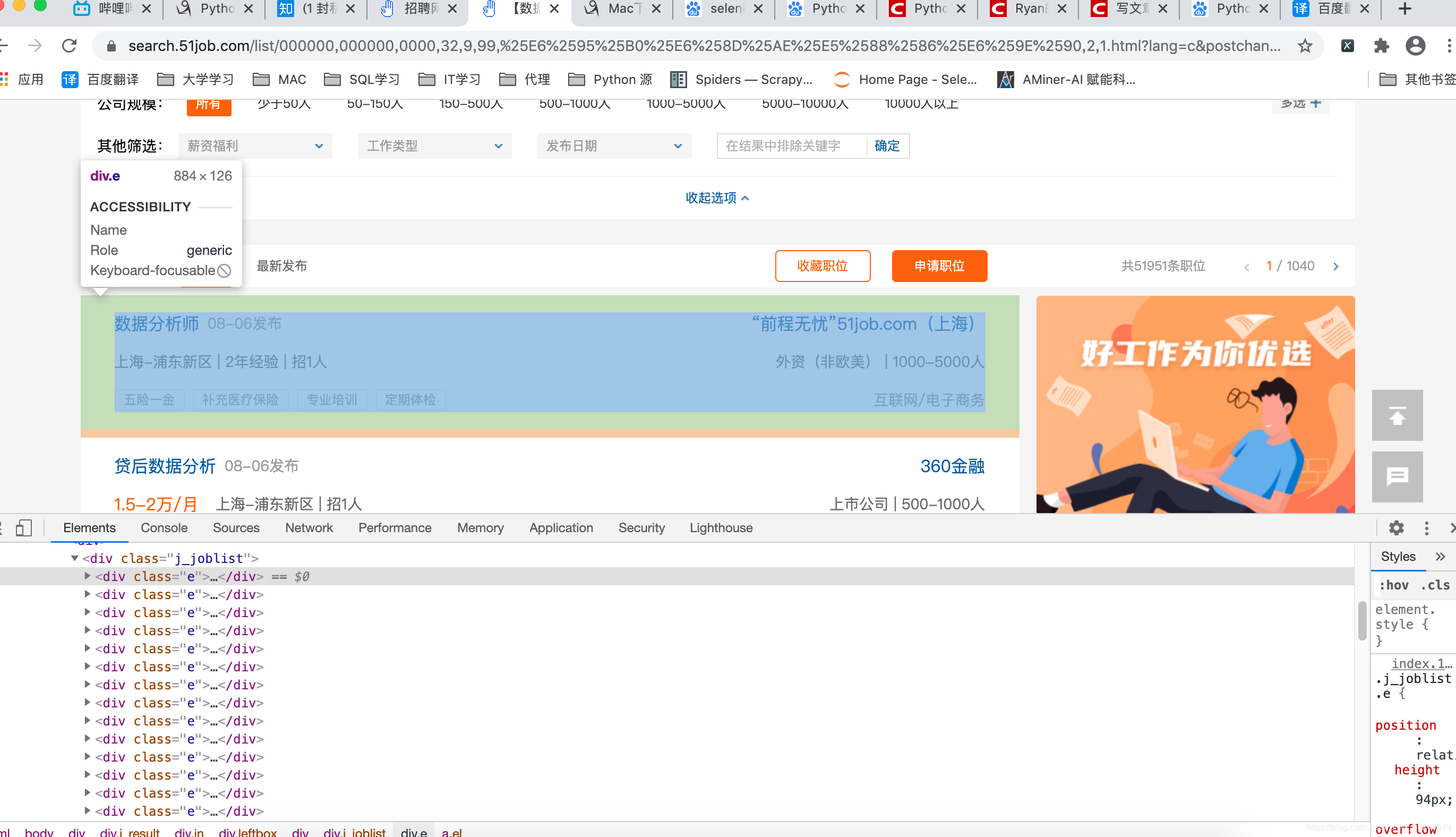

1、进行分析网站

**

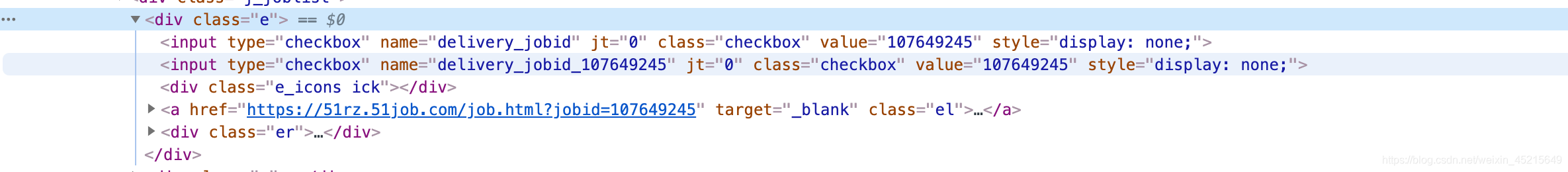

要爬取的职位名、公司名、工作地点、薪资的信息都在class="e"里

**

分析完就可以用xpath 语法进行调试了

完整代码如下:

from selenium import webdriver

from lxml import etree

import time

import pymysql

# 设置不启动浏览器

option = webdriver.ChromeOptions()

option.add_argument('headless')

def get_url(url):

browser = webdriver.Chrome(options=option)

browser.get(url)

html_text = browser.page_source

# browser.quit()

# time.sleep(5)

return html_text

def GetData(url):

"""

:param url: 目标网址

:return:

"""

html_text = get_url(url)

dom = etree.HTML(html_text)

dom_list = dom.xpath(

'//div[@class="j_result"]/div[@class="in"]/div[@class="leftbox"]//div[@class="j_joblist"]//div[@class="e"]')

Job_list = []

for t in dom_list:

# 1.岗位名称

job_name = t.xpath('.//a[@class="el"]//span[@class="jname at"]/text()')[0]

# print(job_name) for test

# 2.发布时间

release_time = t.xpath('.//a[@class="el"]//span[@class="time"]/text()')[0]

# 3.工作地点

address = t.xpath('.//a[@class="el"]//span[@class="d at"]/text()')[0]

# 4.工资

salary_mid = t.xpath('.//a[@class="el"]//span[@class="sal"]')

salary = [i.text for i in salary_mid][0] # 列表解析

# 5.公司名称

company_name = t.xpath('.//div[@class="er"]//a[@class="cname at"]/text()')[0]

# 6.公司类型和规模

company_type_size = t.xpath('.//div[@class="er"]//p[@class="dc at"]/text()')[0]

# 7.行业

indusrty = t.xpath('..//div[@class="er"]//p[@class="int at"]/text()')[0]

JobInfo = {

'job_name': job_name,

'address': address,

'salary': salary,

'company_name': company_name,

'company_type_size': company_type_size,

'industry': indusrty,

'release_time': release_time

}

Job_list.append(JobInfo)

return Job_list

def SaveSql(data):

"""

:param data: 数据

:return:

"""

# 创建连接

db = pymysql.Connect(

host='localhost', # mysql服务器地址

port=3306, # mysql服务器端口号

user='root', # 用户名

passwd='123123', # 密码

db='save_data', # 数据库名

charset='utf8' # 连接编码

)

# 创建游标

cursor = db.cursor()

# 使用预处理语句创建表

cursor.execute("""create table if not exists Job_info(ID INT PRIMARY KEY AUTO_INCREMENT ,

job_name VARCHAR(100) ,

address VARCHAR (100),

salary VARCHAR (30),

company_name VARCHAR (100) ,

company_type_size VARCHAR (100),

industry VARCHAR (50),

release_time VARCHAR (30))""")

for i in data:

insert = "INSERT INTO Job_info(" \

"job_name,address,salary,company_name,company_type_size,industry,release_time" \

")values(%s,%s,'%s',%s,%s,%s,%s)" % (

repr(i['job_name']), repr(i['address']), i['salary'], repr(i['company_name']),

repr(i['company_type_size']), repr(i['industry']), repr(i['release_time']))

try:

# 执行sql语句

cursor.execute(insert)

# 执行sql语句

db.commit()

print("insert ok")

except:

# 发生错误时回滚

db.rollback()

db.commit()

# 关闭数据库连接

db.close

if __name__ == '__main__':

for i in range(301, 451):

print('开始存储第' + str(i) + '条数据中')

url_pre = 'https://search.51job.com/list/000000,000000,0000,00,9,99,%25E6%2595%25B0%25E6%258D%25AE%25E5%2588%2586%25E6%259E%2590,2,'

url_end = '.html?'

url = url_pre + str(i) + url_end

SaveSql(GetData(url))

time.sleep(3)

print('存储完成')

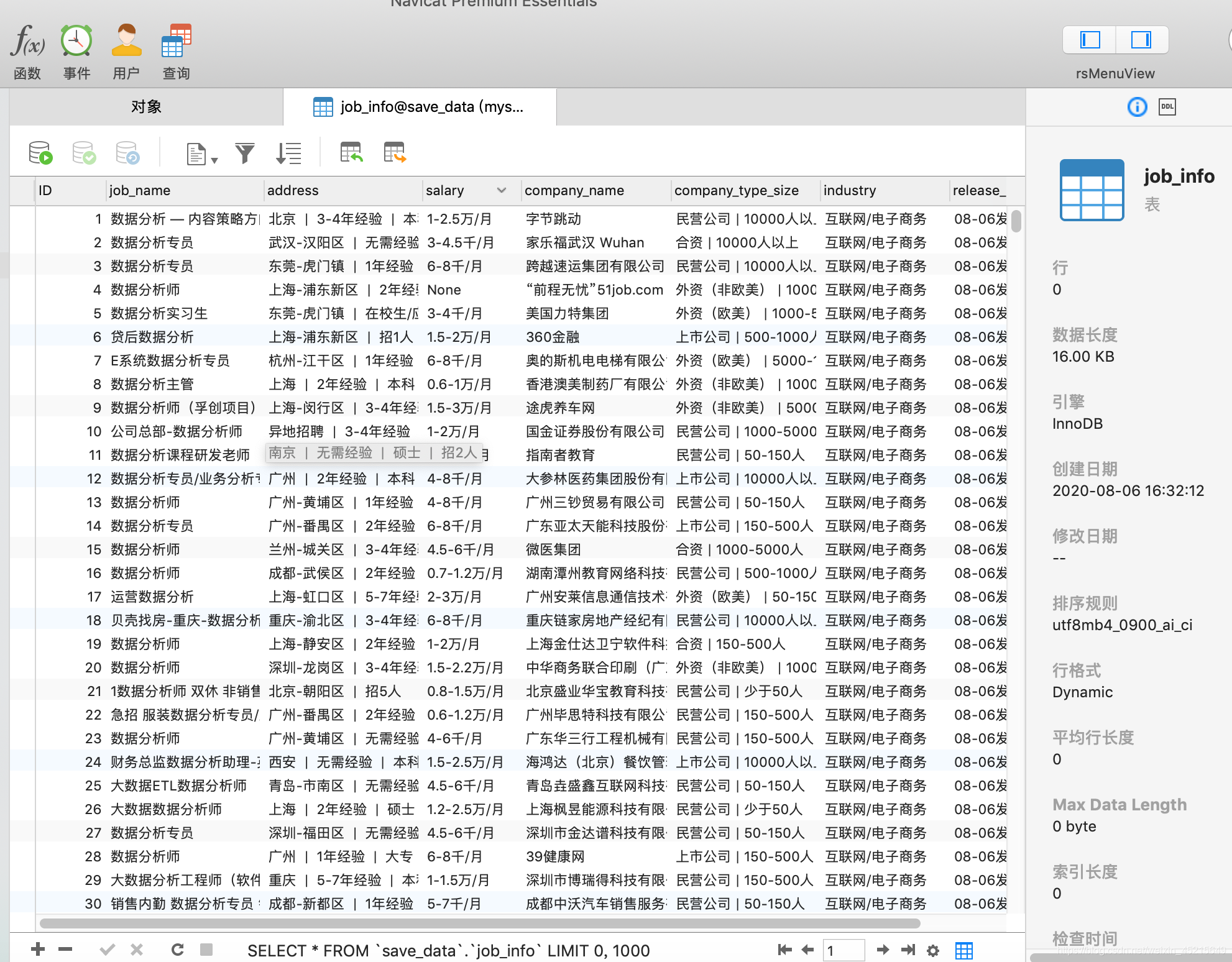

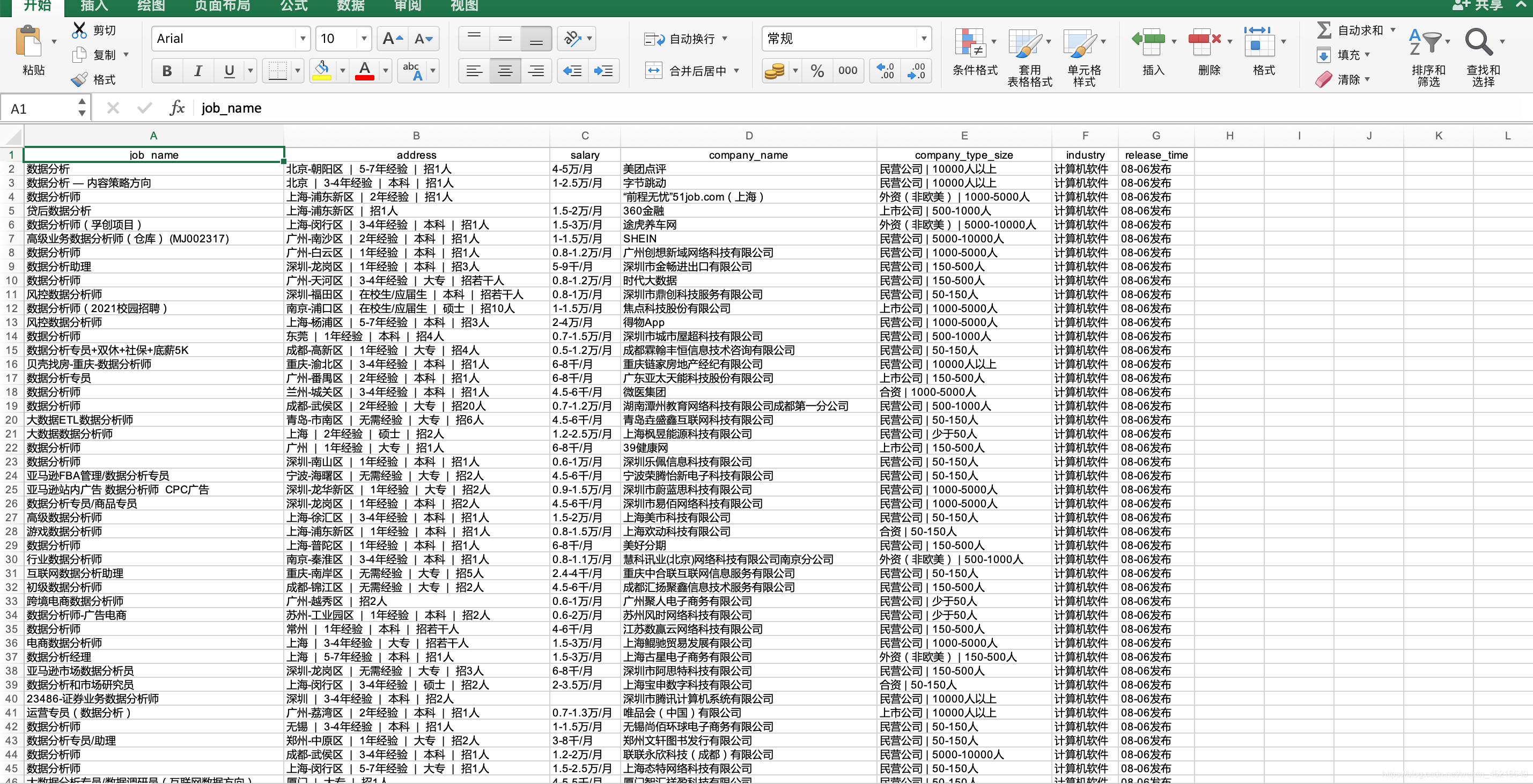

完成后效果

mysql 存储

Excel 存储(后续更新)

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?