1. Upload the Hive tarball

apache-hive-2.3.7-bin.tar.gz

2. Extract the Hive tarball (the later steps assume the extracted files end up in /opt/hive-2.3.7)

tar -xzf apache-hive-2.3.7-bin.tar.gz
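The later steps use /opt/hive-2.3.7 as the install directory, while a plain extract normally creates a directory named after the archive. A minimal sketch of one way to unpack straight into the target directory follows; `extract_hive` is a hypothetical helper name, not something Hive ships.

```shell
#!/usr/bin/env bash
# extract_hive is a hypothetical helper, not part of Hive.
# Usage: extract_hive <tarball> <target-dir>
extract_hive() {
    local tarball="$1" target="$2"
    mkdir -p "$target" || return 1
    # --strip-components=1 drops the archive's top-level directory,
    # so its contents land directly in the target directory.
    tar -xzf "$tarball" -C "$target" --strip-components=1
}

# Example (paths taken from the steps in this guide):
# extract_hive apache-hive-2.3.7-bin.tar.gz /opt/hive-2.3.7
```

Extracting normally and then renaming the directory with mv gives the same result.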

3. Add the Hive environment variables

Open the /etc/profile file

vim /etc/profile

Add the following lines

#HIVE_HOME

export HIVE_HOME=/opt/hive-2.3.7

export PATH=$PATH:$HIVE_HOME/bin

Apply the new environment settings

source /etc/profile
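To confirm that the new settings took effect in the current shell, one option is to check whether the Hive bin directory is now listed in PATH. The sketch below assumes nothing beyond a POSIX shell; `path_contains` is a hypothetical helper name.

```shell
# path_contains is a hypothetical helper:
# it succeeds if the directory given as $1 is listed in $PATH.
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example, after running `source /etc/profile`:
# path_contains "$HIVE_HOME/bin" && echo "hive is on PATH"
```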

4. Go to Hive's hive-2.3.7/conf directory

cd hive-2.3.7/conf

5. Edit the configuration file hive-env.sh

Copy hive-env.sh.template to hive-env.sh

cp hive-env.sh.template hive-env.sh

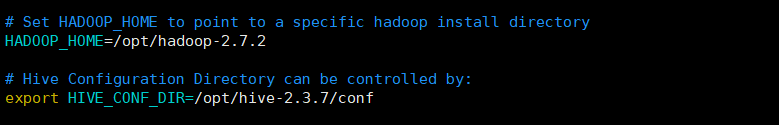

Change the following settings

HADOOP_HOME=/opt/hadoop-2.7.2

export HIVE_CONF_DIR=/opt/hive-2.3.7/conf

6. Edit the configuration file hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://bigdata02:3306/metastore?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description>

</property>

</configuration>
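The metastore can only be initialized if the MySQL server named in javax.jdo.option.ConnectionURL is reachable with the configured user and password. As a small aid for checking that, the sketch below pulls the host and port out of a JDBC URL; `jdbc_host_port` is a hypothetical helper name, and it assumes the URL starts with jdbc:mysql:// as in the configuration above.

```shell
# jdbc_host_port is a hypothetical helper:
# it prints the "host:port" part of a jdbc:mysql:// URL.
jdbc_host_port() {
    # Strip the "jdbc:mysql://" prefix, then everything from the first "/" on.
    local rest="${1#jdbc:mysql://}"
    printf '%s\n' "${rest%%/*}"
}

# Example with the URL from hive-site.xml:
# jdbc_host_port 'jdbc:mysql://bigdata02:3306/metastore?createDatabaseIfNotExist=true'
# A login test with the mysql client could then look like:
# mysql -h bigdata02 -P 3306 -u root -p -e 'SELECT 1'
```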

7. Make sure Hadoop and Hive use the same guava version

Hadoop's library directory

cd /opt/hadoop-2.7.2/share/hadoop/common/lib/

Hive's library directory

cd /opt/hive-2.3.7/lib/

Run the commands

cp /opt/hive-2.3.7/lib/guava-14.0.1.jar /opt/hadoop-2.7.2/share/hadoop/common/lib

rm -rf /opt/hadoop-2.7.2/share/hadoop/common/lib/guava-11.0.2.jar
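Before copying or deleting any jar, it can help to see which guava versions each side actually ships, since the exact file names depend on the Hadoop and Hive releases. A minimal sketch, with `list_guava` as a hypothetical helper name:

```shell
# list_guava is a hypothetical helper:
# it prints the file names of the guava jars found in the directory $1.
list_guava() {
    local jar
    for jar in "$1"/guava-*.jar; do
        # Skip the unexpanded pattern when no jar matches.
        [ -e "$jar" ] && basename "$jar"
    done
    return 0
}

# Example with the two directories from this step:
# list_guava /opt/hadoop-2.7.2/share/hadoop/common/lib
# list_guava /opt/hive-2.3.7/lib
```

After the copy and delete, both calls should print the same single file name.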

8. Copy the driver: copy mysql-connector-java-5.1.27-bin.jar to /opt/hive-2.3.7/lib/

tar -zxvf mysql-connector-java-5.1.27.tar.gz

cd mysql-connector-java-5.1.27

cp /opt/mysql-libs/mysql-connector-java-5.1.27/mysql-connector-java-5.1.27-bin.jar /opt/hive-2.3.7/lib/

9. Only after everything above is configured, initialize the metastore schema on the server (node1)

# Command for Hive 2.x (run it once; the two options can be given in either order)

bin/schematool -dbType mysql -initSchema

10. Start Hive:

Start the metastore on node1

bin/hive --service metastore

Start the Hive CLI

bin/hive

11. Web UI access

Start the server:

nohup bin/hiveserver2 &

Open it in a browser (startup can take quite a while)

node1:10002 (10002 is the default value of hive.server2.webui.port)
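Because HiveServer2 can take a while to come up, a script that connects immediately after starting it may fail. One way to handle this is to poll the port until it accepts connections. The sketch below is bash-only (it relies on bash's /dev/tcp redirection), and `wait_for_port` is a hypothetical helper name; port 10000 is the Thrift port that beeline uses in the next step.

```shell
#!/usr/bin/env bash
# wait_for_port is a hypothetical helper, not part of Hive.
# Usage: wait_for_port <host> <port> <timeout-seconds>
wait_for_port() {
    local host="$1" port="$2" remaining="$3"
    while [ "$remaining" -gt 0 ]; do
        # bash opens a TCP connection when redirecting to /dev/tcp/<host>/<port>;
        # the subshell closes the descriptor again when it exits.
        if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
            return 0
        fi
        sleep 1
        remaining=$((remaining - 1))
    done
    return 1
}

# Example: wait up to 120 seconds for HiveServer2 on node1.
# wait_for_port node1 10000 120 && echo "HiveServer2 is accepting connections"
```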

12. Access via beeline

bin/beeline

!connect jdbc:hive2://node1:10000

Enter username for jdbc:hive2://node1:10000:root

Enter password for jdbc:hive2://node1:10000: (press Enter)

Connected to: Apache Hive (version 2.3.7)

Driver: Hive JDBC (version 2.3.7)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Error when connecting with beeline:

Error: Could not open client transport with JDBC Uri: jdbc:hive2://node1:10000: Failed to open new session: java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: root is not allowed to impersonate root (state=08S01,code=0)

https://blog.csdn.net/weixin_45271668/article/details/113665670
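This error is raised by Hadoop rather than Hive: HiveServer2 runs as root and asks Hadoop to act on behalf of the connecting user, and Hadoop's proxy-user rules do not allow that by default. A commonly used fix, which may or may not be the one the linked post describes, is to add the following properties to Hadoop's core-site.xml and then restart Hadoop and HiveServer2. The file location is an assumption based on the Hadoop path used in this guide, and the wildcard values are very permissive, so this sketch is meant for a test cluster.

```xml
<!-- Assumed location: /opt/hadoop-2.7.2/etc/hadoop/core-site.xml -->
<!-- Allow the user "root" to impersonate users from any host and any group. -->
<property>
<name>hadoop.proxyuser.root.hosts</name>
<value>*</value>
</property>
<property>
<name>hadoop.proxyuser.root.groups</name>
<value>*</value>
</property>
```

If HiveServer2 runs as a different user, the user name in both property names changes accordingly.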
