I recently ran into the following problem while setting up Hadoop. How can I fix it?

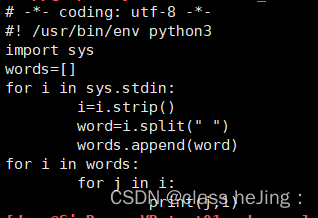

The map.py file is as follows:

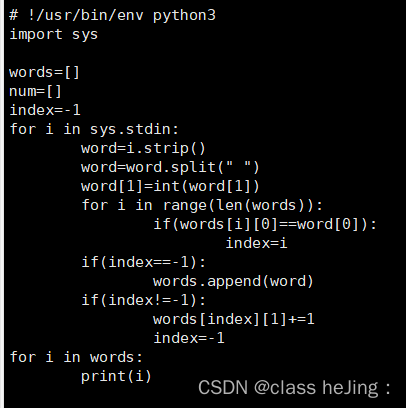

The reducer.py file is as follows:

Command executed: hadoop jar /opt/hadoop/hadoop/share/hadoop/tools/lib/hadoop-streaming-2.7.5.jar -file map.py -mapper "python3 map.py" -file reducer.py -reducer "python3 reducer.py" -input /word.txt -output /test9

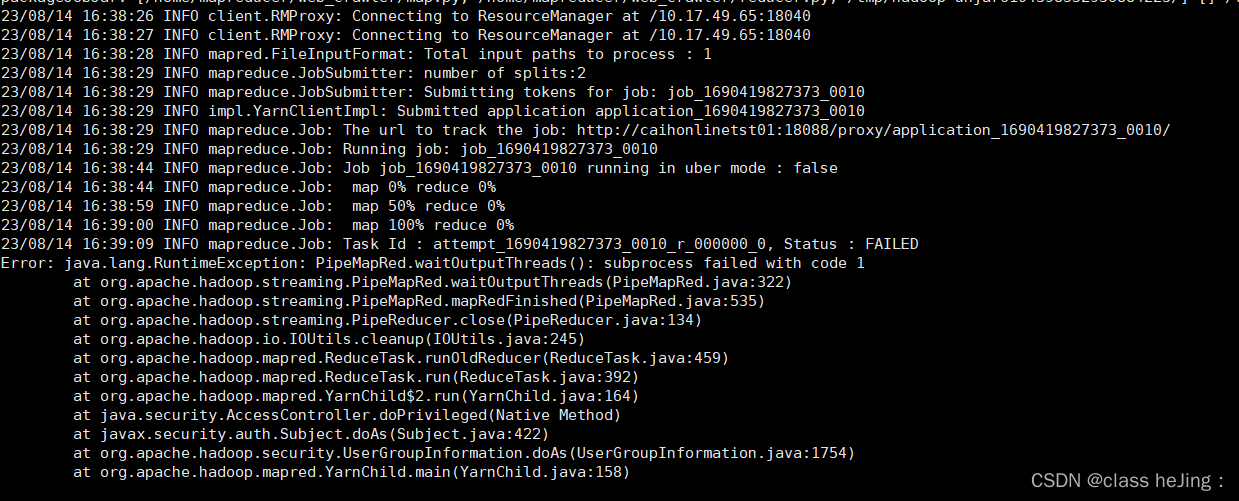
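Since the scripts are only shown as screenshots, here is a minimal sketch of what a word-count mapper and reducer for Hadoop Streaming usually look like, for comparison. It is not the code from the screenshots: the names `map_words` and `reduce_counts` are made up for illustration, and the sketch assumes the mapper emits tab-separated `word<TAB>1` records and that the reducer receives them sorted by key. The reducer skips malformed records instead of raising, because an uncaught Python exception makes the script exit with a non-zero code.

```python
def map_words(lines):
    """Mapper side: emit 'word<TAB>1' for every whitespace-separated token."""
    for line in lines:
        for word in line.split():
            yield f"{word}\t1"


def reduce_counts(sorted_lines):
    """Reducer side: sum the counts of consecutive records with the same key.

    Assumes the input is already sorted by key, which is what the
    streaming framework delivers to a reducer.
    """
    current, total = None, 0
    for line in sorted_lines:
        key, sep, value = line.rstrip("\n").partition("\t")
        if not sep or not value.strip().isdigit():
            continue  # skip malformed records instead of raising
        if key != current:
            if current is not None:
                yield f"{current}\t{total}"
            current, total = key, 0
        total += int(value)
    if current is not None:
        yield f"{current}\t{total}"
```

In an actual script, each function would be driven from standard input, for example `for out in reduce_counts(sys.stdin): print(out)` after `import sys`.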

The job fails with the following error. How can I fix it?

23/08/14 16:38:26 INFO client.RMProxy: Connecting to ResourceManager at /10.17.49.65:18040

23/08/14 16:38:27 INFO client.RMProxy: Connecting to ResourceManager at /10.17.49.65:18040

23/08/14 16:38:28 INFO mapred.FileInputFormat: Total input paths to process : 1

23/08/14 16:38:29 INFO mapreduce.JobSubmitter: number of splits:2

23/08/14 16:38:29 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1690419827373_0010

23/08/14 16:38:29 INFO impl.YarnClientImpl: Submitted application application_1690419827373_0010

23/08/14 16:38:29 INFO mapreduce.Job: The url to track the job: http://caihonlinetst01:18088/proxy/application_1690419827373_0010/

23/08/14 16:38:29 INFO mapreduce.Job: Running job: job_1690419827373_0010

23/08/14 16:38:44 INFO mapreduce.Job: Job job_1690419827373_0010 running in uber mode : false

23/08/14 16:38:44 INFO mapreduce.Job: map 0% reduce 0%

23/08/14 16:38:59 INFO mapreduce.Job: map 50% reduce 0%

23/08/14 16:39:00 INFO mapreduce.Job: map 100% reduce 0%

23/08/14 16:39:09 INFO mapreduce.Job: Task Id : attempt_1690419827373_0010_r_000000_0, Status : FAILED

Error: java.lang.RuntimeException: PipeMapRed.waitOutputThreads(): subprocess failed with code 1

at org.apache.hadoop.streaming.PipeMapRed.waitOutputThreads(PipeMapRed.java:322)

at org.apache.hadoop.streaming.PipeMapRed.mapRedFinished(PipeMapRed.java:535)

at org.apache.hadoop.streaming.PipeReducer.close(PipeReducer.java:134)

at org.apache.hadoop.io.IOUtils.cleanup(IOUtils.java:245)

at org.apache.hadoop.mapred.ReduceTask.runOldReducer(ReduceTask.java:459)

at org.apache.hadoop.mapred.ReduceTask.run(ReduceTask.java:392)

at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:164)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1754)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:158)
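The log shows both map tasks finishing and the reduce attempt failing with `subprocess failed with code 1`, which means the `python3 reducer.py` process itself exited with status 1. The Python traceback is not in this client output, so one way to find it is to replay the pipeline locally. Below is a rough sketch of that idea, assuming local copies of both scripts and of the input file are available. The helper names `run_stage` and `simulate_streaming` are hypothetical, and sorting the mapper output in memory is only an approximation of the shuffle step between map and reduce.

```python
import subprocess
import sys


def run_stage(cmd, input_text):
    """Run one streaming stage, feeding input_text to its stdin."""
    proc = subprocess.run(cmd, input=input_text, capture_output=True, text=True)
    return proc.returncode, proc.stdout, proc.stderr


def simulate_streaming(mapper_cmd, reducer_cmd, input_text):
    """Approximate mapper -> sort -> reducer on the local machine.

    Returns (stage, exit_code, text). On failure, text is the stderr of
    the failing stage, which is where a Python traceback would appear.
    """
    code, mapped, err = run_stage(mapper_cmd, input_text)
    if code != 0:
        return "mapper", code, err
    # Sort by whole line as a simple stand-in for the shuffle/sort phase.
    shuffled = "".join(line + "\n" for line in sorted(mapped.splitlines()))
    code, reduced, err = run_stage(reducer_cmd, shuffled)
    if code != 0:
        return "reducer", code, err
    return "ok", 0, reduced
```

With a local copy of word.txt read into `text`, a call such as `simulate_streaming(["python3", "map.py"], ["python3", "reducer.py"], text)` would return the failing stage together with its stderr. If the local run succeeds, the difference is more likely in the cluster environment, for example the `python3` available on the worker nodes.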
