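Disclaimer:

This code borrows from other bloggers' write-ups, but a large part of it was rewritten to fit this case, and some modules (the data storage module) were improved. Thanks to everyone who shared their work and let me stand on the shoulders of giants. If you run into problems, leave a comment below.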

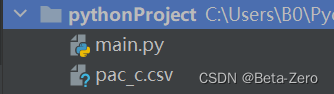

The project structure is as follows:

The first file is the crawler program; the second, a CSV file, stores the scraped data.
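To check what ended up in the CSV file, it can be read back with the standard `csv` module. The snippet below is only a sketch under a few assumptions: the rows have no header and are ordered title, price, shop (the order in which the crawler builds each row), the helper name `load_products` is made up for illustration, and the sample rows are invented so the example runs on its own. The `utf-8-sig` default reads UTF-8 files with or without a BOM; pass a different `encoding` if your file was written with another one.

```python
import csv
import os
import tempfile

# Assumed column order; the data file is written without a header row.
FIELDS = ['title', 'price', 'shop']


def load_products(path, encoding='utf-8-sig'):
    """Read a header-less CSV file into a list of dicts keyed by FIELDS."""
    with open(path, newline='', encoding=encoding) as f:
        return list(csv.DictReader(f, fieldnames=FIELDS))


# Write a small made-up sample so the demo does not need a real crawl.
sample_path = os.path.join(tempfile.mkdtemp(), 'sample.csv')
with open(sample_path, 'w', newline='', encoding='utf-8') as f:
    csv.writer(f).writerows([
        ['Charger A', '￥59.00', 'Shop One'],
        ['Charger B', '￥72.50', 'Shop Two'],
    ])

products = load_products(sample_path)
for product in products:
    print(product['title'], product['price'], product['shop'])
```

For the real data, call `load_products('pac_c.csv')` from the project directory.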

The contents of main.py are as follows:

from selenium import webdriver
from selenium.common.exceptions import NoSuchElementException, TimeoutException
from selenium.webdriver.common.by import By
from selenium.webdriver.edge.service import Service
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
import re
import time
import csv

key_word = '电动车充电器'  # search keyword ("electric bike charger")
max_pages = 10  # stop and save after this many pages (the original saved on reaching page 10)
data = []  # scraped rows, each one [title, price, shop]

# Path to the browser driver. Selenium 4 takes it through a Service object;
# Selenium 3 accepted the path as the first positional argument.
browser = webdriver.Edge(service=Service(r'C:\edgedriver_win64\msedgedriver.exe'))


def search(key_word, retries=3):
    """Open JD, search for key_word, and return the text holding the page count."""
    try:
        browser.get('https://www.jd.com/')
        # Wait for the search box to load
        search_box = WebDriverWait(browser, 10).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, '#key'))
        )
        # Wait for the search button to become clickable
        submit = WebDriverWait(browser, 10).until(
            EC.element_to_be_clickable((By.CSS_SELECTOR, '#search > div > div.form > button'))
        )
        search_box.send_keys(key_word)
        submit.click()
        # Element that shows how many result pages there are
        total_page = WebDriverWait(browser, 10).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, '#J_bottomPage > span.p-skip > em:nth-child(1) > b'))
        )
        return total_page.text
    except TimeoutException:
        # Selenium waits raise TimeoutException, not the built-in TimeoutError.
        # Retry, but only a limited number of times.
        if retries <= 1:
            raise
        return search(key_word, retries - 1)


def next_page(page_num, retries=3):
    """Click the "next page" link, wait for the results, then scrape them."""
    try:
        print('Going to page', page_num)
        # Wait for the "next page" link to become clickable
        submit = WebDriverWait(browser, 10).until(
            EC.element_to_be_clickable((By.CSS_SELECTOR, '#J_bottomPage > span.p-num > a.pn-next'))
        )
        submit.click()
        time.sleep(1)
        # Wait for the product items to appear
        WebDriverWait(browser, 10).until(
            EC.presence_of_element_located((By.CLASS_NAME, 'gl-item'))
        )
        print('Page', page_num, 'loaded')
    except TimeoutException:
        if retries <= 1:
            raise
        return next_page(page_num, retries - 1)
    get_products(page_num)


def get_text(item, selector):
    """Return the text of the first match inside item, or 0 if it is missing."""
    try:
        return item.find_element(By.CSS_SELECTOR, selector).text.replace('\n', '')
    except NoSuchElementException:
        print('Element missing:', selector)
        return 0  # same placeholder value the original used


def get_products(page_num):
    """Collect title, price, and shop for every product on the current page."""
    print('Scraping page', page_num)
    WebDriverWait(browser, 10).until(
        EC.presence_of_element_located((By.CLASS_NAME, 'gl-item'))
    )
    # find_elements(By...) replaces find_elements_by_class_name, removed in Selenium 4.3
    items = browser.find_elements(By.CLASS_NAME, 'gl-item')
    print('Page', page_num, 'has', len(items), 'items')
    for item in items:
        product = {
            'title': get_text(item, '.p-name em'),
            'price': get_text(item, '.p-price strong'),
            'shop': get_text(item, '.p-shop span'),
        }
        data.append(list(product.values()))


def save_to_csv():
    """Write all collected rows to pac_c.csv (renamed from save_to_mongo)."""
    # utf-8-sig keeps Chinese text readable when the file is opened in Excel
    with open('pac_c.csv', 'w', newline='', encoding='utf-8-sig') as csv_file:
        csv.writer(csv_file).writerows(data)
    print('Saved', len(data), 'rows')


def main(key_word):
    try:
        print('Start scraping', key_word)
        total_page = search(key_word)
        # Pull the first run of digits out of the page-count text
        total_page = int(re.search(r'\d+', total_page).group())
        print('Found', total_page, 'pages of results')
        get_products(1)
        for i in range(2, min(total_page, max_pages) + 1):
            next_page(i)
    except TimeoutException:
        print('error')
    finally:
        # Save whatever was collected, even after an error, then shut the browser down
        save_to_csv()
        browser.quit()


if __name__ == '__main__':
    main(key_word)
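Both `search` and `next_page` try again when a wait times out. One way to keep that kind of retry bounded is a small wrapper. The following is a minimal sketch and not part of the original script: the helper name `with_retries` and its parameters are hypothetical, and the demo uses Python's built-in `TimeoutError` with a stand-in function so it runs without a browser. With Selenium you would pass `selenium.common.exceptions.TimeoutException` in `exceptions` instead.

```python
import time


def with_retries(func, *args, retries=3, delay=0.0, exceptions=(TimeoutError,)):
    """Call func(*args); if it raises a listed exception, try again.

    At most `retries` attempts are made in total. If all of them fail,
    the last exception is re-raised instead of retrying forever.
    """
    if retries < 1:
        raise ValueError('retries must be at least 1')
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            return func(*args)
        except exceptions as error:
            last_error = error
            print(f'attempt {attempt}/{retries} failed: {error!r}')
            if attempt < retries:
                time.sleep(delay)  # optional pause before the next attempt
    raise last_error


# Stand-in for a flaky page load: fails twice, then succeeds.
calls = {'count': 0}


def flaky_load(page_num):
    calls['count'] += 1
    if calls['count'] < 3:
        raise TimeoutError(f'page {page_num} timed out')
    return f'page {page_num} loaded'


result = with_retries(flaky_load, 2, retries=3)
print(result)
```

Compared with calling the function again from inside its own `except` block, a loop like this cannot recurse without limit when the page never loads.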
