Crawler Proxies

What is a cookie
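A cookie is a small name=value pair that the server sends in a `Set-Cookie` response header and that the client returns in the `Cookie` header of later requests, which is how a site recognises a logged-in user. The sketch below illustrates this with the standard-library `http.cookies.SimpleCookie` class; the header value `sessionid=abc123` is made up for illustration and is not taken from any real site.

```python
from http.cookies import SimpleCookie

# Hypothetical Set-Cookie header value, as a server might send it after login.
raw_header = "sessionid=abc123; Path=/; HttpOnly"

cookie = SimpleCookie()
cookie.load(raw_header)

# Each cookie is stored as a Morsel: a name, a value, and attributes such as Path.
morsel = cookie["sessionid"]
print(morsel.key, morsel.value, morsel["path"])

# What the client sends back on later requests: only the name=value pairs.
cookie_header = "; ".join(f"{m.key}={m.value}" for m in cookie.values())
print(cookie_header)
```

The attributes (`Path`, `HttpOnly`, and so on) tell the client when to send the cookie; they are not sent back to the server.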

Logging in directly with a cookie

from urllib import request

headers={

"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400",

"Cookie":"anonymid=k0wfsnijqs2lmb; depovince=GW; _r01_=1; JSESSIONID=abcjKGMpPZ8bo8OsLrE1w; ick_login=aacfd19d-560a-4bca-a1c1-23ba6cfcac0f; t=88897ae92ee7fdd08268afe7bc008a006; societyguester=88897ae92ee7fdd08268afe7bc008a006; id=972318796; xnsid=a1b48203; jebecookies=6910d4bb-91d7-49e2-b366-adf8ea698c6d|||||; ver=7.0; loginfrom=null; springskin=set; jebe_key=b719fb10-d33f-4f44-84bc-befc9dc7a7db%7C41a89a6b668be22f1c2d3ef71265d076%7C1569245071604%7C1%7C1569245071607; jebe_key=b719fb10-d33f-4f44-84bc-befc9dc7a7db%7C41a89a6b668be22f1c2d3ef71265d076%7C1569245071604%7C1%7C1569245071609; vip=1; wp_fold=0"

}

url="http://www.renren.com/972318796"

req=request.Request(url,headers=headers)

resp=request.urlopen(req)

with open("renren.html",'w',encoding='utf-8') as fp:

    fp.write(resp.read().decode('utf-8'))

Automatic login with cookies

from urllib import request

from http.cookiejar import CookieJar

from urllib import parse

cookiejar=CookieJar()

handler=request.HTTPCookieProcessor(cookiejar)

opener=request.build_opener(handler)

headers={

"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400",

}

data={

"email":"15286419300",

"password":"ssss15286419300"

}

url1="http://www.renren.com/"

req=request.Request(url1,data=parse.urlencode(data).encode('utf-8'),headers=headers,method='POST')

opener.open(req)

url2="http://www.renren.com/972319020"

resp=opener.open(url2)

with open('renren.html','w',encoding='utf-8') as tp:

    tp.write(resp.read().decode('utf-8'))

Saving cookie information

from urllib import request
from http.cookiejar import MozillaCookieJar

# MozillaCookieJar can write cookies to, and read them from, a text file
cookiejar=MozillaCookieJar("cookie.txt")
# On later runs, reload the saved cookies first (cookie.txt must already exist):
# cookiejar.load(ignore_discard=True)
handler=request.HTTPCookieProcessor(cookiejar)
opener=request.build_opener(handler)
resp=opener.open("https://www.baidu.com/?tn=95861220_hao_pg&H123Tmp=nunew11")
for cookie in cookiejar:
    print(cookie)
# ignore_discard=True also saves session cookies that would otherwise be discarded
cookiejar.save(ignore_discard=True)

The requests library

import requests

url="https://www.baidu.com/s?"

headers={

"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400"

}

kw={

"wd":"中国"

}

res=requests.get(url,params=kw,headers=headers)

with open("baidu.html","w",encoding="utf-8") as fp:

    fp.write(res.content.decode("utf-8"))

POST requests with requests
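A minimal sketch of a form POST, assuming a hypothetical endpoint `http://example.com/login` and made-up form fields. To stay runnable offline it builds and prepares the request without sending it, which shows what `requests.post(url, data=data, headers=headers)` would put on the wire.

```python
import requests

# Hypothetical endpoint and form fields, for illustration only.
url = "http://example.com/login"
data = {"email": "user@example.com", "password": "secret"}
headers = {"User-Agent": "Mozilla/5.0"}

# Build and prepare the request without sending it.
prepared = requests.Request("POST", url, data=data, headers=headers).prepare()

print(prepared.method)                   # HTTP method
print(prepared.headers["Content-Type"])  # set automatically for form data
print(prepared.body)                     # URL-encoded form body
```

Passing a dict as `data` sends it as a URL-encoded form; to send a JSON body instead, pass the dict as `json=`.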

Using a proxy IP

import requests

url="https://www.baidu.com/?tn=06074089_36_pg"

proxy={

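"http":"http://117.90.2.221:9000",

# The target URL is https, so an "https" entry is needed for the proxy to be used

"https":"http://117.90.2.221:9000"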

}

resp=requests.get(url,proxies=proxy)

print(resp.text)

Handling cookies with requests

import requests

url="http://www.renren.com/"

data={

"email":"15286419300",

"password":"ssss15286419300"

}

headers={

"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400"

}

session=requests.session()

session.post(url,data=data,headers=headers)

url1="http://www.renren.com/972319020"

resp=session.get(url1)

with open("renren.html","w",encoding='utf-8') as fp:

    fp.write(resp.text)

Handling untrusted certificates with requests

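Parsing HTML with lxml

from lxml import etree

# Sample HTML fragment (placeholder); replace it with the page source you fetched
text="<div><ul><li>item 1</li><li>item 2</ul></div>"

def lxml1():
    # Parse an HTML string; lxml fills in missing tags automatically
    html=etree.HTML(text)
    print(etree.tostring(html,encoding='utf-8').decode('utf-8'))

def lxml2():
    # Parse an HTML file; the explicit HTMLParser tolerates malformed markup
    parser=etree.HTMLParser(encoding='utf-8')
    html=etree.parse("renren.html",parser=parser)
    print(etree.tostring(html,encoding="utf-8").decode('utf-8'))

if __name__ == '__main__':
    lxml2()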

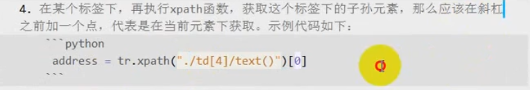

Parsing web pages with lxml and XPath

Scraping Douban Movies

import requests
from lxml import etree

headers={
"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400",
"Referer":"https://movie.douban.com/"
}
url="https://movie.douban.com/cinema/nowplaying/guiyang/"
# Named resp rather than re so the standard re module is not shadowed
resp=requests.get(url,headers=headers)
text=resp.text
html=etree.HTML(text)
# Take the first <ul class="lists"> and iterate over its <li> children
ul=html.xpath("//ul[@class='lists']")[0]
li=ul.xpath("./li")
for i in li:
    # The film details are stored in data-* attributes of each <li>
    title=i.xpath("@data-title")[0]
    score=i.xpath("@data-score")[0]
    director=i.xpath("@data-director")[0]
    actors=i.xpath("@data-actors")[0]
    img=i.xpath("./ul/li/a/img/@src")
    print("Title:",title,"Rating:",score,"Director:",director,"Actors:",actors)

Scraping dytt8.net (电影天堂)

import requests
from lxml import etree

url2=[]  # detail-page URLs collected from the list pages
jurl="https://www.dytt8.net"
headers={
"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400"
}

def xiangqing(url):
    # Collect the detail-page links from one list page
    resp=requests.get(url,headers=headers)
    text=resp.content.decode('gbk','ignore')
    html=etree.HTML(text)
    urls=html.xpath("//table[@class='tbspan']//a/@href")
    for i in urls:
        url2.append(jurl+i)

def jiben():
    # Build the list-page URLs: the index page plus pages 2 to 6
    url = "https://www.dytt8.net/html/gndy/dyzz/list_23_{}.html"
    url4=["https://www.dytt8.net/html/gndy/dyzz/index.html"]
    for x in range(2,7):
        url4.append(url.format(x))
    for url5 in url4:
        xiangqing(url5)

def pacong():
    # Visit every detail page and print the year and the region
    for i in url2:
        resp=requests.get(i,headers=headers)
        text=resp.content.decode('gbk','ignore')
        html=etree.HTML(text)
        test1=html.xpath("//p/text()")
        test2 = html.xpath("//div[@id='Zoom']")[0]
        img = test2.xpath(".//img/@src")[0]
        title=test1[0]
        # "◎年 代" (year) and "◎产 地" (region) are the field labels used on the page
        for info in test1:
            if info.startswith("◎年 代"):
                info=info.replace("◎年 代","").strip()
                print("Year:",info)
            elif info.startswith("◎产 地"):
                info=info.replace("◎产 地","").strip()
                print("Region:",info)

if __name__ == '__main__':
    jiben()
    pacong()

CSS selectors
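A minimal sketch of CSS selectors, assuming BeautifulSoup's `select()` and `select_one()` methods (the same `bs4` package used for the weather scraper in this post) and a made-up HTML fragment. It uses the built-in `html.parser` so no extra parser is needed.

```python
from bs4 import BeautifulSoup

# Made-up HTML fragment, for illustration only.
html = """
<div id="movies">
  <ul class="lists">
    <li class="item" data-title="Movie A"><a href="/a">A</a></li>
    <li class="item" data-title="Movie B"><a href="/b">B</a></li>
  </ul>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

# tag.class: every <li> that has the class "item"
titles = [li["data-title"] for li in soup.select("li.item")]

# "#id tag" (descendant): every <a> anywhere under the element with id "movies"
links = [a["href"] for a in soup.select("#movies a")]

# "parent > child" combined with an attribute selector; select_one returns the first hit
first = soup.select_one('ul.lists > li[data-title="Movie A"]')

print(titles, links, first.get_text())
```

The same selectors are roughly equivalent to the XPath expressions `//li[@class='item']` and `//*[@id='movies']//a` used with lxml.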

Miscellany

Scraping weather.com.cn (中国天气网) and saving the results to a CSV file

import requests
from bs4 import BeautifulSoup
import csv

def sign(url):
    headers={
    "User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400"
    }
    # Pass the headers so the request actually carries the browser User-Agent
    resp=requests.get(url,headers=headers)
    text=resp.content.decode("utf-8")
    # html5lib is lenient with malformed markup
    soup=BeautifulSoup(text,"html5lib")
    conMidtab=soup.find("div",class_="conMidtab")
    tables=conMidtab.find_all("table")
    for table in tables:
        # Skip the first two rows of each table
        trs=table.find_all("tr")[2:]
        for index,tr in enumerate(trs):
            tds=tr.find_all("td")
            city_td=tds[0]
            # In the first remaining row the city is in the second cell
            if index==0:
                city_td=tds[1]
            city=list(city_td.stripped_strings)[0]
            temp_td=tds[-2]
            temp=list(temp_td.stripped_strings)[0]
            writer.writerow((city,temp))

def main():
    urls=["http://www.weather.com.cn/textFC/hb.shtml",
    "http://www.weather.com.cn/textFC/db.shtml",
    "http://www.weather.com.cn/textFC/hd.shtml",
    "http://www.weather.com.cn/textFC/hz.shtml",
    "http://www.weather.com.cn/textFC/hn.shtml",
    "http://www.weather.com.cn/textFC/xb.shtml",
    "http://www.weather.com.cn/textFC/xn.shtml",
    "http://www.weather.com.cn/textFC/gat.shtml"]
    #url="http://www.weather.com.cn/textFC/gat.shtml"
    for url in urls:
        sign(url)

if __name__ == '__main__':
    # The with block closes the file once main() has finished
    with open('data2.csv', 'w+', encoding="utf-8", newline='') as csv_obj:
        writer = csv.writer(csv_obj)
        writer.writerow(('City', 'Temperature'))
        main()

Matching a single character with regular expressions

import re

# 1. Match a literal string
text='hello'
ret=re.match('he',text)
print(ret.group())

# 2. A dot matches any single character (except a newline)
text='hello'
ret=re.match('.',text)
print(ret.group())

# 3. \d matches any digit (0-9)
text='3344'
ret=re.match(r'\d',text)
print(ret.group())

# 4. \D matches any non-digit
text='hello'
ret=re.match(r'\D',text)
print(ret.group())

# 5. \s matches a whitespace character (\n, \t, \r, space)
text='\n'
ret=re.match(r'\s',text)
print(ret.group())

# 6. \w matches a-z, A-Z, 0-9 and the underscore _
text='99'
ret=re.match(r'\w',text)
print(ret.group())

# 7. \W is the opposite of \w
text='+'
ret=re.match(r'\W',text)
print(ret.group())

# 8. [] is a character set: any one character listed inside the brackets matches
text='a1'
ret=re.match('[a1]',text)
print(ret.group())

# 9. + matches one or more of the preceding item
text='0731-888888'
ret=re.match(r'[\d\-]+',text)
print(ret.group())

# 10. A bracket expression equivalent to \d
text='99'
ret=re.match('[0-9]',text)
print(ret.group())

# 11. ^ inside brackets negates the set
text='hello'
ret=re.match('[^0-9]',text)
print(ret.group())

# 12. A bracket expression equivalent to \w (note the underscore)
text='hello'
ret=re.match('[0-9a-zA-Z_]',text)
print(ret.group())

# 13. A bracket expression equivalent to \W
text='++'
ret=re.match('[^0-9a-zA-Z_]',text)
print(ret.group())

Matching multiple characters with regular expressions

import re

# * matches zero or more of the preceding item
text="34234"
ret=re.match(r"\d*",text)
print(ret.group())

# + matches one or more of the preceding item
text="abc"
ret=re.match(r"\w+",text)
print(ret.group())

# ? matches zero or one of the preceding item
text="abc"
ret=re.match(r"\w?",text)
print(ret.group())

# {m} matches exactly m of the preceding item
text="abc"
ret=re.match(r"\w{2}",text)
print(ret.group())

# {m,n} matches between m and n of the preceding item (as many as possible)
text="abc4343434"
ret=re.match(r"\w{3,4}",text)
print(ret.group())

Regular expression examples

import re

# Match a mobile phone number
text='15286419300'
ret=re.match(r"1[583]\d{9}",text)
print(ret.group())

# Match an email address
text='1127828659@qq.com'
ret=re.match(r"\w+@[a-z0-9]+\.[a-z]+",text)
print(ret.group())

# Match a URL
text='https://www.bilibili.com/video/av44518113/?p=42&t=473'
ret=re.match(r"(http|https|ftp)://[^\s]+",text)
print(ret.group())

# Match an 18-character ID card number
text='522229199912302414'
ret=re.match(r"\d{17}[\dxX]",text)
print(ret.group())

# ^ (caret): starts with ...
text='hello'
ret=re.match("^h",text)
print(ret.group())

# $: ends with ... (the dot is escaped so that it matches a literal ".")
text='xxx@163.com'
ret=re.match(r"\w+@163\.com$",text)
print(ret.group())

# |: match any one of several strings or expressions
text='https'
ret=re.match("(http|https)$",text)
print(ret.group())

# Greedy vs non-greedy: <.+> would match the whole string, <.+?> stops at the first >
text='<h1>Title</h1>'
ret=re.match("<.+?>",text)
print(ret.group())

# Match an integer from 0 to 100
text='89'
ret=re.match(r"[1-9]\d?$|100$|0$",text)
print(ret.group())

Escape characters and raw strings in regular expressions
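A short sketch of escaping, using only the standard `re` module and made-up sample strings. Metacharacters such as `$` and `.` need a backslash to match literally, and a raw string (`r"..."`) stops Python from interpreting the backslashes before the regex engine sees them.

```python
import re

# Regex metacharacters such as $ and . must be escaped to match literally.
text = "the price is $99.50"
ret = re.search(r"\$\d+\.\d+", text)
print(ret.group())

# Without the r prefix Python processes backslashes first, so matching one
# literal backslash takes four of them; a raw string needs only two.
path = "a\\b"                        # the three-character string a\b
plain = re.match("a\\\\b", path)
raw = re.match(r"a\\b", path)
print(plain.group() == raw.group())

# re.escape() escapes the metacharacters in a literal string for you.
escaped = re.escape("1+1=2")
print(re.match(escaped, "1+1=2").group())
```

Writing every pattern as a raw string is the simplest habit: it also avoids the invalid-escape warnings that newer Python versions emit for patterns like `'\d'`.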

Groups in regular expressions
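A minimal sketch of grouping with the standard `re` module. Parentheses capture part of a match so that it can be read back with `group(n)`; the price sentence is a made-up sample, and the phone-style string is the one used earlier in this post.

```python
import re

text = "apple price is $99, orange price is $10"
ret = re.search(r".*(\$\d+).*(\$\d+)", text)

print(ret.group())     # group() or group(0): the whole match
print(ret.group(1))    # text captured by the first pair of parentheses
print(ret.group(2))    # text captured by the second pair
print(ret.groups())    # all captured groups as a tuple

# Named groups (?P<name>...) make the intent clearer than numeric indexes.
m = re.match(r"(?P<area>\d{4})-(?P<number>\d+)", "0731-888888")
print(m.group("area"), m.group("number"))
```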

Basic functions of the re module
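A brief sketch of the most commonly used functions in the standard `re` module, run against made-up sample strings: `match`, `search`, `findall`, `sub`, `split` and `compile`.

```python
import re

text = "apple price is $99, orange price is $10"

# match() only matches at the start of the string; search() scans the whole string.
at_start = re.match(r"\$\d+", text)
anywhere = re.search(r"\$\d+", text)

# findall() returns every non-overlapping match as a list.
prices = re.findall(r"\$\d+", text)

# sub() replaces every match with the given string.
masked = re.sub(r"\$\d+", "N/A", text)

# split() cuts the string wherever the pattern matches.
parts = re.split(r"[,\s]+", "hello,world  ni hao")

# compile() builds a pattern object that can be reused many times.
price_re = re.compile(r"\$(\d+)")
numbers = [int(n) for n in price_re.findall(text)]

print(at_start, anywhere.group(), prices, masked, parts, numbers)
```

When the pattern contains a single group, as in `price_re`, `findall()` returns the captured group rather than the whole match.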

Hands-on: scraping classical Chinese poems with regular expressions

import re
import requests

def parse_age(url):
    headers={
    "user-agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3676.400 QQBrowser/10.4.3505.400"
    }
    res=requests.get(url,headers=headers)
    text=res.text
    # re.DOTALL lets . match newlines; .*? is non-greedy so each title is captured separately
    title=re.findall(r'<div class="cont">.*?<b>(.*?)</b>',text,re.DOTALL)
    print(title)

def main():
    url='https://www.gushiwen.org/default_1.aspx'
    parse_age(url)

if __name__ == '__main__':
    main()

Converting Python objects to a JSON string

import json

demo=[

{

'name':"张三",

'age':16

},

{

'name':'李四',

'age':17

}

]

# demo1=json.dumps(demo,ensure_ascii=False)

# print(demo1)

with open('demo1.json','w',encoding='utf-8') as fp:

    json.dump(demo,fp,ensure_ascii=False)

Converting a JSON string to Python objects

import json

demo='[{"name": "张三", "age": 16}, {"name": "李四", "age": 17}]'

# demo1=json.loads(demo)

# print(demo1)

with open('demo1.json','r',encoding='utf-8') as fp:

    demo1=json.load(fp)

print(demo1)

Reading CSV files

import csv

def read_csv_demo1():  # read each row as a list
    with open('data2.csv','r',encoding='utf-8') as fp:
        demo=csv.reader(fp)
        next(demo)  # skip the header row
        for i in demo:
            print(i)

def read_csv_demo2():  # read each row as a dict keyed by the header row
    with open('data2.csv','r',encoding='utf-8') as fp:
        reade=csv.DictReader(fp)
        for i in reade:
            print(i)

if __name__ == '__main__':
    read_csv_demo1()
    read_csv_demo2()

Writing CSV files

import csv

def write_csv_demo1():  # write rows given as lists or tuples
    headers=["name",'age']
    value=[('张三',18),('李四',20)]
    # newline='' prevents blank lines between rows on Windows
    with open('data3.csv','w',encoding='utf-8',newline='') as fp:
        writer=csv.writer(fp)
        writer.writerow(headers)
        writer.writerows(value)

def write_csv_demo2():  # write rows given as dicts
    headers=['name','age']
    value=[
        {'name':'张三','age':18},
        {'name':'李四','age':20}
    ]
    with open('data3.csv','w',encoding='utf-8',newline='') as fp:
        writer=csv.DictWriter(fp,headers)
        writer.writeheader()
        writer.writerows(value)

if __name__ == '__main__':
    # write_csv_demo1()
    write_csv_demo2()

Creating threads in Python from functions

import threading
import time

def demo1():
    for i in range(3):
        print('writing code',i)
        time.sleep(1)

def demo2():
    for i in range(3):
        print('listening to music',i)
        time.sleep(1)

def main():
    # Each Thread runs its target function concurrently with the other
    t1=threading.Thread(target=demo1)
    t2=threading.Thread(target=demo2)
    t1.start()
    t2.start()

if __name__ == '__main__':
    main()

Creating threads in Python from classes

import threading
import time

class demo1(threading.Thread):
    # start() calls run() in the new thread
    def run(self):
        for i in range(3):
            print('writing code',i)
            time.sleep(1)

class demo2(threading.Thread):
    def run(self):
        for i in range(3):
            print('listening to music',i)
            time.sleep(1)

def main():
    t1=demo1()
    t2=demo2()
    t1.start()
    t2.start()

if __name__ == '__main__':
    main()

Thread locks in Python

import threading

values=0
glock=threading.Lock()

def add_values():
    global values
    # Hold the lock while modifying the shared variable
    glock.acquire()
    for i in range(1000000):
        values+=1
    glock.release()
    print('Thread 1: %d'%values)

def add_values2():
    global values
    glock.acquire()
    for i in range(1000000):
        values+=1
    glock.release()
    print('Thread 2: %d'%values)

def main():
    t1=threading.Thread(target=add_values)
    t2=threading.Thread(target=add_values2)
    t1.start()
    t2.start()

if __name__ == '__main__':
    main()
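As a variation on the lock example, the sketch below uses the lock as a context manager (`with lock:`), which releases it even if the body raises an exception, and calls `join()` so the total is read only after both threads finish. The names `counter`, `lock` and `add` are chosen here for illustration.

```python
import threading

counter = 0
lock = threading.Lock()

def add(n):
    global counter
    for _ in range(n):
        # "with lock" acquires the lock and always releases it,
        # even if the body raises an exception.
        with lock:
            counter += 1

threads = [threading.Thread(target=add, args=(100000,)) for _ in range(2)]
for t in threads:
    t.start()
for t in threads:
    t.join()    # wait for both threads before reading the result

print(counter)
```

Locking around each increment is slower than locking around the whole loop, but it lets the two threads interleave instead of running one after the other.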

Producer and consumer, Lock version

import threading
import time
import random

glock=threading.Lock()
gmoney=1000  # shared balance
gtime=0      # number of times the producers have earned money

class producer(threading.Thread):
    def run(self):
        global gtime
        global gmoney
        while True:
            money=random.randint(100,500)
            glock.acquire()
            if gtime > 10:
                # Production limit reached: release the lock before leaving the loop
                glock.release()
                break
            gmoney += money
            # Update the counter while still holding the lock to avoid a race
            gtime +=1
            print('%s earned %d yuan, balance %d yuan'%(threading.current_thread(),money,gmoney))
            glock.release()
            time.sleep(1)

class consumer(threading.Thread):
    def run(self):
        global gmoney
        while True:
            money=random.randint(100,500)
            glock.acquire()
            if gmoney >= money:
                gmoney -= money
                print('%s spent %d yuan, balance %d yuan'%(threading.current_thread(),money,gmoney))
            else:
                print('%s cannot afford to spend, balance %d yuan'%(threading.current_thread(),gmoney))
                glock.release()
                break
            glock.release()
            time.sleep(1)

def main():
    for i in range(5):
        t1=producer(name='producer%d'%i)
        t1.start()
    for i in range(3):
        t2=consumer(name='consumer%d'%i)
        t2.start()

if __name__ == '__main__':
    main()

Using a proxy IP with selenium

CAPTCHA recognition

import pytesseract

from PIL import Image

pytesseract.pytesseract.tesseract_cmd = r'D:\tesseract\Tesseract-OCR\tesseract.exe'

image = Image.open(r'C:\Users\best\Desktop\2.jpg')

text = pytesseract.image_to_string(image)

print(text)
