Common operators:

- BashOperator

- SSHOperator

- DummyOperator

- PythonOperator

- BranchPythonOperator

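BranchPythonOperator lets a Python function return the task_id of the task to run next, so the DAG can choose a branch based on a condition. Each task directly downstream of a BranchPythonOperator ends up either selected or skipped.

When a DAG runs, a task can be followed by one or more downstream tasks; these dependencies are declared on the operators.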

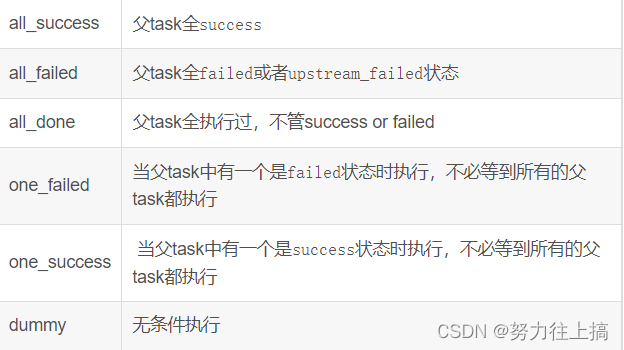

Trigger Rules

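Trigger rules define the conditions under which a task runs. By default, a task runs only when all of its parent tasks (its direct upstream tasks) have succeeded. Airflow also supports more complex dependencies, controlled through the operator's trigger_rule parameter:

- all_success (default): all upstream tasks have succeeded

- all_failed: all upstream tasks are in the failed or upstream_failed state

- all_done: all upstream tasks have finished running

- all_skipped: all upstream tasks are in the skipped state

- one_failed: at least one upstream task has failed (does not wait for all upstream tasks to finish)

- one_success: at least one upstream task has succeeded (does not wait for all upstream tasks to finish)

- one_done: at least one upstream task has succeeded or failed

- none_failed: no upstream task is in the failed or upstream_failed state, i.e. all upstream tasks have succeeded or been skipped

- none_failed_min_one_success: no upstream task is in the failed or upstream_failed state, and at least one upstream task has succeeded

- none_skipped: no upstream task is in the skipped state, i.e. all upstream tasks are in the success, failed, or upstream_failed state

- always: no dependencies at all; the task can run at any time

trigger_rule can be combined with depends_on_past: when depends_on_past is set to True, a task does not run at all if its previous run did not succeed.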
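To make the rules above concrete, here is a minimal pure-Python sketch that evaluates a rule against a list of upstream states. It is a simplified model, not Airflow's implementation: the function name `rule_is_met` is hypothetical, states are plain strings, and every upstream task is assumed to have already finished.

```python
from collections import Counter


def rule_is_met(trigger_rule, upstream_states):
    """Simplified model of the rules listed above (all upstream tasks finished)."""
    counts = Counter(upstream_states)
    total = len(upstream_states)
    success = counts["success"]
    skipped = counts["skipped"]
    # "failed" here covers both failed and upstream_failed, as in the list above
    failed = counts["failed"] + counts["upstream_failed"]

    checks = {
        "all_success": success == total,
        "all_failed": failed == total,
        "all_done": True,  # holds by assumption: everything has finished
        "all_skipped": skipped == total,
        "one_failed": failed > 0,
        "one_success": success > 0,
        "one_done": success + counts["failed"] > 0,
        "none_failed": failed == 0,
        "none_failed_min_one_success": failed == 0 and success > 0,
        "none_skipped": skipped == 0,
        "always": True,
    }
    return checks[trigger_rule]


# A join task after a branch: one upstream ran, the other was skipped.
after_branch = ["success", "skipped"]
print(rule_is_met("all_success", after_branch))
print(rule_is_met("none_failed_min_one_success", after_branch))
```

The last two lines show why a task that joins two branches usually needs a rule other than the default: with one upstream skipped, all_success is not met, while none_failed_min_one_success is.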

pool

A pool limits how many tasks assigned to the same pool can run in parallel.

    aggregate_db_message_job = BashOperator(
        task_id='aggregate_db_message_job',
        execution_timeout=timedelta(hours=3),
        pool='ep_data_pipeline_db_msg_agg',
        bash_command=aggregate_db_message_job_cmd,
        dag=dag)

    aggregate_db_message_job.set_upstream(wait_for_empty_queue)

In the example above, aggregate_db_message_job is assigned to a pool. If that pool allows a maximum parallelism of 1 and other tasks use the same pool, those tasks must wait while aggregate_db_message_job is running.
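The waiting behaviour of a pool can be pictured as a counting semaphore. The following is only an analogy sketched with the standard library, not how Airflow schedules tasks; `POOL_SLOTS`, `run_task`, and the task names are hypothetical.

```python
import threading
import time

POOL_SLOTS = 1  # hypothetical pool size, matching "maximum parallelism of 1"
pool = threading.BoundedSemaphore(POOL_SLOTS)

lock = threading.Lock()
running = 0
max_running = 0


def run_task(name):
    """Run one task; it blocks until the pool has a free slot."""
    global running, max_running
    with pool:
        with lock:
            running += 1
            max_running = max(max_running, running)
        time.sleep(0.01)  # stand-in for the real work
        with lock:
            running -= 1


threads = [threading.Thread(target=run_task, args=(f"task_{i}",)) for i in range(3)]
for t in threads:
    t.start()
for t in threads:
    t.join()

# Highest number of tasks that held a slot at the same time
print(max_running)
```

With a single slot, the three tasks run one after another, so the recorded maximum never exceeds the pool size.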

XComs

By default, no information is shared between DAGs or between tasks. To share information between DAGs or tasks, use XComs: one DAG (task) sets an XCom value, and another reads it.
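As a mental model, XCom storage behaves like a key-value table addressed by the pushing task's id and a key. The sketch below is a hypothetical in-memory stand-in (the class `FakeXComStore` and the task names are made up for illustration); real XComs are stored in Airflow's metadata database.

```python
class FakeXComStore:
    """Hypothetical in-memory stand-in for XCom storage."""

    def __init__(self):
        self._values = {}

    def push(self, task_id, key, value):
        # A value is addressed by the task that pushed it and by its key
        self._values[(task_id, key)] = value

    def pull(self, task_ids, key):
        # Returns None when nothing was pushed under that task id and key
        return self._values.get((task_ids, key))


store = FakeXComStore()


def producer():
    store.push("producer", "out", "/tmp")


def consumer():
    return store.pull(task_ids="producer", key="out")


producer()
result = consumer()
missing = store.pull(task_ids="producer", key="unknown")
print(result, missing)
```

The consumer has to name both the producing task and the key, which is the same pair of arguments that xcom_pull takes.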

    # coding=utf-8
    import subprocess
    from datetime import datetime, timedelta

    from airflow import DAG
    from airflow.operators.python import PythonOperator
    from airflow.utils.dates import days_ago

    # Default arguments
    default_args = {
        'owner': 'airflow',  # owner name
        # First execution time, in UTC. For easy testing it is usually set to
        # the current time minus one schedule period.
        'start_date': days_ago(1),
        'email': ['1111111@163.com'],  # addresses that receive notifications
        'email_on_failure': True,  # send mail when a task fails
        'email_on_retry': True,  # send mail when a task is retried
        'retries': 1,  # number of retries after a failure
        'retry_delay': timedelta(seconds=5),  # delay between retries
        # provide_context is not needed in Airflow 2: the context is passed
        # to the callable automatically.
    }

    # Define the DAG
    dag = DAG(
        dag_id='xcom_hello_world_args',  # dag_id
        default_args=default_args,  # default arguments
        schedule="@once",
        # Cron fields are minute, hour, day of month, month, day of week;
        # this expression runs at the top of every hour.
        # schedule="0 * * * *",
        # Run once per minute.
        # schedule=timedelta(minutes=1),
    )

    # Python function for task 1
    def hello_world_args_1(**context):
        current_time = str(datetime.today())
        with open('/tmp/hello_world_args_1.txt', 'a') as f:
            f.write('%s\n' % current_time)
        # A failed assert (or any raised exception) marks the task as failed
        assert 1 == 1
        context['task_instance'].xcom_push(key='sea1', value=f.name)
        # Push the output of a shell command as a second XCom
        out = subprocess.getoutput('pwd')
        context['task_instance'].xcom_push(key='out', value=out)
        return "t2"

    # Python function for task 2
    def hello_world_args_2(**context):
        # xcom_pull takes the key and the task_id of the task that pushed it
        sea = context['task_instance'].xcom_pull(key='sea1', task_ids='hello_world_args_1')
        out = context['task_instance'].xcom_pull(key='out', task_ids='hello_world_args_1')
        current_time = str(datetime.today())
        with open('/tmp/hello_world_args_2.txt', 'a') as f:
            f.write('%s\n' % sea)
            f.write('%s\n' % current_time)
            f.write('%s\n' % out)

    # Task 1
    t1 = PythonOperator(
        task_id='hello_world_args_1',  # task_id
        python_callable=hello_world_args_1,  # function to run
        dag=dag,  # DAG the task belongs to
        retries=2,  # overrides the value from default_args
    )

    # Task 2
    t2 = PythonOperator(
        task_id='hello_world_args_2',  # task_id
        python_callable=hello_world_args_2,  # function to run
        dag=dag,  # DAG the task belongs to
    )

    t1 >> t2

In day-to-day work, the task to run next often has to be chosen after an earlier task succeeds. In Airflow this is done with BranchPythonOperator together with PythonOperator: define a function that returns the task_id of the task to run next.

Example:

    import subprocess

    from airflow import DAG
    from airflow.operators.python import BranchPythonOperator, PythonOperator
    from airflow.utils.dates import days_ago

    args = {
        'owner': 'xlx',
    }

    with DAG(
        dag_id='httpd_check_and_deploy',  # spaces are not allowed in a dag_id
        default_args=args,
        schedule_interval=None,
        start_date=days_ago(2),
    ) as dag:

        httpd_deploy = False

        def start():
            print('start check and deploy httpd')

        def httpd_check():
            # The value of httpd_deploy decides which task runs next
            if httpd_deploy:
                return "uninstall_httpd"
            else:
                return "deploy_httpd"

        def uninstall_httpd():
            check_cmd = 'rpm -qa | grep httpd'
            uninstall_cmd = 'yum -y remove httpd'
            # grep exits with 0 when an httpd package is installed
            result = subprocess.run(check_cmd, shell=True).returncode
            if result == 0:
                subprocess.run(uninstall_cmd, shell=True, encoding='utf-8')
                print('httpd has been uninstalled')
            else:
                print('httpd is not installed')

        def deploy_httpd():
            deploy_cmd = 'yum -y install httpd'
            try:
                # check=True raises CalledProcessError on a non-zero exit code
                subprocess.run(deploy_cmd, shell=True, check=True)
            except subprocess.CalledProcessError:
                print('command failed:', deploy_cmd)
                raise

        # DummyOperator does not accept python_callable, so the start task
        # is a PythonOperator.
        start = PythonOperator(
            task_id='start',
            python_callable=start,
            dag=dag)

        httpd_check = BranchPythonOperator(
            task_id='httpd_check',
            python_callable=httpd_check,
            dag=dag)

        uninstall_httpd = PythonOperator(
            task_id='uninstall_httpd',
            python_callable=uninstall_httpd,
            dag=dag)

        deploy_httpd = PythonOperator(
            task_id='deploy_httpd',
            python_callable=deploy_httpd,
            trigger_rule='one_success',  # run as soon as one upstream task has succeeded
            dag=dag)

        start >> httpd_check >> [uninstall_httpd, deploy_httpd]
        uninstall_httpd >> deploy_httpd

Example 1:

Using PythonOperator, BranchPythonOperator, BashOperator, trigger_rule, and Label

1. Write the DAG

    from airflow import DAG
    from airflow.operators.bash import BashOperator
    from airflow.operators.python import BranchPythonOperator, PythonOperator
    from airflow.utils.dates import days_ago
    from airflow.utils.edgemodifier import Label

    args = {
        'owner': 'xlx',
    }

    with DAG(
        dag_id='airflow-20210711',
        default_args=args,
        schedule_interval=None,
        start_date=days_ago(2),
        max_active_runs=100,
        tags=["xlx", "airflow", "7/11"],
    ) as dag:

        def lalala(**context):
            # hostname = 'airflow'
            hostname = context["dag_run"].conf.get("hostname")
            print(hostname)
            if hostname == 'airflow':
                return "end"
            else:
                return "hostname"

        def is_mem_stress(**context):
            mem = context["dag_run"].conf.get("mem")
            if mem == 'true':
                return "mem_stress"
            else:
                return "end"

        def mem_stress():
            print('start mem_stress')
            print('=======================')
            print('success stress')

        lalala = BranchPythonOperator(
            task_id='lalala',
            python_callable=lalala,
            trigger_rule="none_failed_min_one_success",
            dag=dag)

        is_mem_stress = BranchPythonOperator(
            task_id='is_mem_stress',
            python_callable=is_mem_stress,
            # trigger_rule="none_failed_min_one_success",
            dag=dag)

        mem_stress = PythonOperator(
            task_id='mem_stress',
            python_callable=mem_stress,
            trigger_rule="none_failed_min_one_success",
            dag=dag)

        # ---- bash tasks ----
        hostname = BashOperator(
            task_id="hostname",
            bash_command=" hostname ",
            dag=dag,
        )

        end = BashOperator(
            task_id="end",
            bash_command=" echo ======================================== ",
            trigger_rule="none_failed_min_one_success",
            dag=dag,
        )

        lalala >> hostname >> is_mem_stress >> Label("YES") >> mem_stress >> end
        lalala >> hostname >> is_mem_stress >> Label("NO") >> end
        # lalala can return "end"; this edge lets that branch reach end directly
        lalala >> end

A more complete version:

    import pendulum

    from airflow import DAG
    from airflow.operators.bash import BashOperator
    from airflow.operators.python import BranchPythonOperator, PythonOperator

    with DAG(
        dag_id="test-skip",
        start_date=pendulum.datetime(2021, 1, 1, tz="UTC"),
        catchup=False,
        schedule=None,
        tags=["test-skip"],
    ) as dag:

        def input(**context):
            param = context['dag_run'].conf['param']
            print('input parameter:', param)

        def isneed_mem(**context):
            print('dag_run.conf:', context['dag_run'].conf)
            print('context:', context)
            h = context['dag_run'].conf.get('mem_stress')
            # "True" arrives as a string, and any non-empty string is truthy,
            # so compare its text instead of testing `if h`.
            if str(h).lower() == 'true':
                return "mem_stress"
            else:
                return "end"

        def mem_stress(**context):
            # h = context['dag_run'].conf.get('mem_stress', True)
            print(' vvvvvvvvvvvvvvvvvvvvvvv ')

        input = PythonOperator(
            task_id="input",
            python_callable=input,
            # pool="limit_10",
            dag=dag,
        )

        isneed_mem = BranchPythonOperator(
            task_id="isneed_mem",
            python_callable=isneed_mem,
            # pool="limit_10",
            dag=dag,
        )

        mem_stress = PythonOperator(
            task_id="mem_stress",
            python_callable=mem_stress,
            # pool="limit_10",
            dag=dag,
        )

        end = BashOperator(
            task_id="end",
            bash_command="echo end ",
            dag=dag,
            trigger_rule="none_failed_min_one_success",
        )

        # mem_stress = TriggerDagRunOperator(
        #     task_id="mem_stress",
        #     trigger_dag_id="trigger_children",
        #     dag=dag,
        #     conf={"host": "{{ dag_run.conf['host'] }}"},
        #     execution_date='{{ ts }}',
        #     wait_for_completion=True,
        # )

        input >> isneed_mem >> mem_stress >> end
        input >> isneed_mem >> end

Input parameters:

    {"param": "param", "mem_stress": "True"}

Input parameters without mem_stress; the mem_stress task is skipped automatically:

    {"param": "param"}
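One detail worth spelling out: in the first input, "True" is a JSON string, and in Python any non-empty string (including "False") is truthy. A minimal sketch of turning such a conf value into a real boolean before branching follows; the helpers `conf_flag` and `choose_branch` are hypothetical names, and the set of accepted spellings is an assumption.

```python
import json


def conf_flag(conf, key, default=False):
    """Interpret a conf value such as "True", "true" or true as a bool."""
    value = conf.get(key, default)
    if isinstance(value, bool):
        return value
    return str(value).strip().lower() in ("true", "1", "yes")


def choose_branch(conf):
    """Return the task_id to follow, as a branch callable would."""
    return "mem_stress" if conf_flag(conf, "mem_stress") else "end"


print(choose_branch(json.loads('{"param": "param", "mem_stress": "True"}')))
print(choose_branch(json.loads('{"param": "param"}')))
print(choose_branch(json.loads('{"param": "param", "mem_stress": "False"}')))
```

The third call is the case a plain truthiness test gets wrong: the string "False" would otherwise select the mem_stress branch.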

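2. Trigger the DAG run (with parameters)

Write a shell script for this. When there are many DAGs to trigger, the runs can be started through the REST API once it is enabled.

Prerequisites:

- Edit the configuration file airflow.cfg and set the auth_backend option (named auth_backends from Airflow 2.3 on) to the following value:

      auth_backend = airflow.api.auth.backend.basic_auth

- Create a user for API access. The following command adds a user user1 who can access Airflow through the REST API:

      airflow users create -u user1 -p user1 -r Admin -f firstname -l lastname -e user1@example.org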

    [root@airflow script]# cat 0001.sh
    function trigger_target(){
        local dag_id=''
        local option=''
        local param=''
        local mem='false'
        local OPT
        local OPTIND
        local OPTARG

        usage() {
            echo "Usage:"
            echo "trigger_target -d [dag_id] -o [option] -p [param] [-m]"
            echo "Description:"
            echo "dag_id, the id of the DAG to trigger"
            echo "option, the param's key"
            echo "param, the param's value"
            echo "-m sets mem to true, default false"
        }

        while getopts ':d:p:o:mh' OPT; do
            case $OPT in
                d) dag_id="$OPTARG";;
                p) param="$OPTARG";;
                o) option="$OPTARG";;
                m) mem="true";;
                h) usage; return 2;;
                *) usage; return 2;;
            esac
        done
        shift $((OPTIND-1))

        if [ -z "$dag_id" ]; then
            echo "wrong args: -d is required"
            usage
            return 1
        fi

        echo "$mem"

        # Current time in UTC; the trailing Z marks the timestamp as UTC
        EXE_DATE=$(date -u '+%Y-%m-%dT%H:%M:%SZ')

        curl -X POST "http://localhost:8080/api/v1/dags/$dag_id/dagRuns" \
            -d "{\"execution_date\": \"${EXE_DATE}\", \"conf\": {\"${option}\":\"${param}\",\"mem\":\"${mem}\"}}" \
            -H 'content-type: application/json' \
            --user "user1:user1"
    }

3. Run the command defined by the script

    [root@airflow script]# trigger_target -d airflow-20210711 -o hostname -p airflow123
    false
    {
      "conf": {
        "hostname": "airflow123",
        "mem": "false"
      },
      "dag_id": "airflow-20210711",
      "dag_run_id": "manual__2023-07-11T23:51:25+00:00",
      "data_interval_end": "2023-07-11T23:51:25+00:00",
      "data_interval_start": "2023-07-11T23:51:25+00:00",
      "end_date": null,
      "execution_date": "2023-07-11T23:51:25+00:00",
      "external_trigger": true,
      "last_scheduling_decision": null,
      "logical_date": "2023-07-11T23:51:25+00:00",
      "note": null,
      "run_type": "manual",
      "start_date": null,
      "state": "queued"
    }
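The same request can be assembled in Python, which avoids hand-escaping the JSON body. This is a sketch using only the standard library; the endpoint, body fields, and credentials are taken from the script above, while the helper name `build_trigger_request` and its `base_url` parameter are hypothetical. The request is only built, not sent, so the sketch runs without an Airflow server.

```python
import base64
import json
from datetime import datetime, timezone
from urllib import request


def build_trigger_request(dag_id, conf, user, password,
                          base_url="http://localhost:8080"):
    """Build (but do not send) the POST request that creates a DAG run."""
    body = {
        # Current time in UTC, in the same format as the shell script
        "execution_date": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "conf": conf,
    }
    # HTTP basic auth header, the equivalent of curl's --user option
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return request.Request(
        url=f"{base_url}/api/v1/dags/{dag_id}/dagRuns",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
        method="POST",
    )


req = build_trigger_request(
    "airflow-20210711",
    {"hostname": "airflow123", "mem": "false"},
    "user1",
    "user1",
)
print(req.get_method(), req.full_url)
print(req.data.decode())
```

Passing `req` to `request.urlopen` would send it. Because json.dumps escapes the values, quotes or backslashes in a parameter cannot break the request body, which is a risk with the string interpolation in the shell version.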

Example 2:

Using TriggerDagRunOperator, PythonOperator, BranchPythonOperator, BashOperator, trigger_rule, and Label

TriggerDagRunOperator: triggers other Airflow DAGs. A parent DAG calls a child DAG and passes its own parameters to it, which handles cross-DAG dependencies in Airflow.

Parent DAG:

    import pendulum

    from airflow import DAG
    from airflow.operators.python import PythonOperator
    from airflow.operators.trigger_dagrun import TriggerDagRunOperator

    with DAG(
        dag_id="trigger_controller",
        start_date=pendulum.datetime(2021, 1, 1, tz="UTC"),
        catchup=False,
        schedule=None,
        tags=["trigger_controller"],
    ) as dag:

        def trigger(**context):
            h = context['dag_run'].conf['host']
            print('input parameter:', h)
            print('dag_run.conf:', context['dag_run'].conf)
            print('context:', context)
            # xcom_push returns None, so there is no result to print
            context['task_instance'].xcom_push(key='out', value=h)

        trigger = PythonOperator(
            task_id="trigger",
            python_callable=trigger,
            # pool="limit_10",
            dag=dag,
        )

        mem_stress = TriggerDagRunOperator(
            task_id="mem_stress",
            trigger_dag_id="trigger_children",
            dag=dag,
            conf={"host": "{{ dag_run.conf['host'] }}"},
            execution_date='{{ ts }}',  # logical execution time of the parent run ({{ ds }} is the date-only form)
            wait_for_completion=True,  # this task finishes only after the child DAG run has completed
            reset_dag_run=True,  # clear an existing child DAG run for the same date and run it again
            # conf='{{ dag_run.conf }}',
            # conf="{{ context['dag_run'].conf}}",
            # conf="{{ dag_run.conf }}",
            # conf={'h': '{{ dag_run.conf }}'},
            # conf={"{{h}}": "{{ dag_run.conf['host'] }}"},
            # conf="{{ dag_run.conf['host'] }}",
            # params={"host": "{{ dag_run.conf['host'] }}"},
            # on_failure_callback=failure_callback,  # on failure, e.g. send a DingTalk alert
        )

        trigger >> mem_stress

Child DAG:

    from airflow import DAG
    from airflow.operators.bash import BashOperator
    from airflow.operators.python import PythonOperator
    from airflow.utils.dates import days_ago

    args = {
        'owner': 'test',
    }

    with DAG(
        dag_id='trigger_children',
        default_args=args,
        schedule_interval=None,
        start_date=days_ago(2),
        max_active_runs=100,
        tags=["trigger_children"],
    ) as dag:

        def mem_stress(**context):
            # The value forwarded by the parent DAG through conf
            host = context['dag_run'].conf['host']
            # t2 = context['task_instance'].xcom_pull(key="out", task_ids='trigger')  # key, task id
            # print(t2)
            print(context)
            print(host)
            print('=======================')

        mem_stress = PythonOperator(
            task_id='mem_stress',
            python_callable=mem_stress,
            trigger_rule="none_failed_min_one_success",
            dag=dag)

        # ---- bash tasks ----
        hostname = BashOperator(
            task_id="hostname",
            bash_command=" hostname ",
            dag=dag,
        )

        end = BashOperator(
            task_id="end",
            bash_command=" echo ======================================== ",
            trigger_rule="none_failed_min_one_success",
            dag=dag,
        )

        mem_stress >> hostname >> end

Passing {"host": "XXXX"} to the parent DAG (several parameters can be passed, for example {"host": "XXXX", "mem": "yes", "cpu": "no"}) makes it call the child DAG, which then runs the corresponding Airflow tasks. Only the keys listed in the conf argument of TriggerDagRunOperator are forwarded to the child DAG.
