2021SC@SDUSC

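The previous posts covered the util, converter and documents directories of the core module. This post moves on to the data directory, which performs a series of processing steps on meeting data.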

Structure of the data directory

Opening the project in IDEA shows the structure of the data directory:

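The data directory has two subdirectories, file and record. The file directory contains a single file, FileProcessor.java. The record directory has two further subdirectories, converter and listener: converter holds InterviewConverterTask.java and RecordingConverterTask.java, while listener holds StreamListener.java and a subdirectory named async, which contains four Java files: BaseStreamWriter.java, CachedEvent.java, StreamAudioWriter.java and StreamVideoWriter.java. The structure is fairly involved and there is quite a lot of code, so let's go through it piece by piece.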

The data/file directory

The file directory contains only FileProcessor.java, so we can start with it directly. As the name suggests, FileProcessor is the class that processes files. First, its imports:

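As usual, the first group consists of static imports: copyFile and copyInputStreamToFile from Commons IO's FileUtils, which copy files and streams, plus the getFileExt and getWebAppRootKey methods from the util module. Next come the JDK classes File, InputStream and UUID. The last group brings in the converters analysed earlier in the converter directory (DocumentConverter, ImageConverter and VideoConverter), the FileItemDao data access object and the FileItem entity from the db module, StoredFile and the ProcessResult classes from the util module, Tika's UnsupportedFormatException, and the logging and Spring annotation classes.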

Now the class definition:

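It first initialises log. It then declares four fields injected with @Autowired: VideoConverter videoConverter, FileItemDao fileDao, ImageConverter imageConverter and DocumentConverter docConverter. Apart from fileDao, which is the data access object (DAO) for file items, all of them are converter classes.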

Further down:

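The class has two overloaded processFile methods with different parameter lists and return types: a public one that returns a ProcessResultList and a private one that returns void. Since the first one calls the second, let's look at the second one first: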

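Its return type is void, and its parameters are FileItem f (the file item), StoredFile sf (the stored file), File temp (the temporary upload file) and ProcessResultList logs (the list of processing results). It is declared as throws Exception.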

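The body is a try-finally block. In the try block it first calls f.getFile(sf.getExt()) to obtain the target File for this file item, using sf's extension, and assigns it to file. It then checks the parent directory: if it does not exist and cannot be created with mkdirs(), a ProcessResult stating that the parent for the file could not be created is added to logs, and the method returns.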

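Next comes a switch on f's type. For Presentation, temp is copied to file and docConverter.convertPDF is called to convert the document to PDF (the comment also mentions thumbnails, SWF and an XML description). For PollChart, only a log message is written, because chart upload is not implemented yet. For Image, the file is copied and passed to imageConverter.convertImage, which converts it to PNG; for Video, the file is copied and passed to videoConverter.convertVideo. The document and video converters receive logs so they can record their results in it. Whatever happens, the finally block then saves f through fileDao.update(f).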

So the second processFile method converts the file according to its type and collects the processing results in logs.
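To make the control flow concrete, here is a minimal, self-contained sketch of the "dispatch by type, always persist" pattern used by the private processFile. It is not the OpenMeetings code: the ItemType enum stands in for FileItem's Type, the Converter interface stands in for the three converter beans, and the persisted list plays the role of fileDao.update(f); all of these names are hypothetical. The sketch treats Presentation, Image and Video alike (copy, then convert) for brevity.

```java
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.StandardCopyOption;
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for FileItem.Type
enum ItemType { PRESENTATION, POLL_CHART, IMAGE, VIDEO }

// Hypothetical stand-in for DocumentConverter / ImageConverter / VideoConverter
interface Converter {
    void convert(File source, List<String> logs);
}

class DispatchSketch {
    final List<String> persisted = new ArrayList<>(); // records what the "DAO" was asked to save

    void process(ItemType type, File temp, File target, Converter converter, List<String> logs) throws IOException {
        try {
            File parent = target.getParentFile();
            // Same guard as FileProcessor: give up (and log) if the parent dir is missing and cannot be created
            if (!parent.exists() && !parent.mkdirs()) {
                logs.add("Unable to create parent for file: " + target);
                return;
            }
            switch (type) {
                case POLL_CHART:
                    logs.add("chart upload not implemented"); // mirrors the "NOT implemented yet" branch
                    break;
                case PRESENTATION:
                case IMAGE:
                case VIDEO:
                    // keep a permanent copy of the upload, then hand it to the type-specific converter
                    Files.copy(temp.toPath(), target.toPath(), StandardCopyOption.REPLACE_EXISTING);
                    converter.convert(target, logs);
                    break;
                default:
                    break;
            }
        } finally {
            // Plays the role of fileDao.update(f): runs even on the early return or an exception
            persisted.add(type.name());
        }
    }
}
```

Putting the save in finally means the file item is stored even when conversion fails, so the record is not lost; whether that is desirable depends on how failed items are shown to users.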

Now the first processFile:

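Its return type is ProcessResultList, a list of processing results, and its parameters are FileItem f and InputStream is; it is also declared as throws Exception. The body first creates the result list logs, then generates a random string hash, whose comment explains that it prevents any problems with foreign characters and duplicates. It then declares File temp, initialised to null. Continuing: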

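Next comes a try-catch-finally block. In the try block, temp is set to a new temporary file named "upload_" followed by the random hash, with the extension .tmp, and the input stream is is copied into temp. String ext holds the extension of f's name, and a StoredFile sf is created from hash, ext and temp (the comment notes that this constructor moves the stream, so the temporary file must be created first). Debug messages are logged along the way. The method then checks what kind of file sf is: an image sets f's type to Type.Image, a video to Type.Video, a chart to Type.PollChart, and a PDF or Office file to Type.Presentation. Anything else raises an UnsupportedFormatException, i.e. an unsupported-format error. After that, f's hash is set to hash. Finally, the private overload processFile(f, sf, temp, logs) is called to convert the file, after which logs contains the relevant processing results.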
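The if/else-if chain above can be sketched as a small classifier. This is a hypothetical simplification: StoredFile makes the real decision with its own rules, while the sketch below looks only at the file extension, and the extension sets (including the made-up "xchart" chart extension) are assumptions for illustration. It also throws IllegalArgumentException instead of Tika's UnsupportedFormatException to stay dependency-free.

```java
import java.util.Locale;
import java.util.Set;

// Hypothetical, extension-only stand-in for StoredFile's isImage/isVideo/isChart/isPdf/isOffice checks
class TypeDetector {
    enum Type { IMAGE, VIDEO, POLL_CHART, PRESENTATION }

    private static final Set<String> IMAGES = Set.of("png", "jpg", "jpeg", "gif", "bmp");
    private static final Set<String> VIDEOS = Set.of("mp4", "avi", "flv", "mov");
    private static final Set<String> CHARTS = Set.of("xchart"); // made-up placeholder extension
    private static final Set<String> DOCS = Set.of("pdf", "doc", "docx", "ppt", "pptx", "odt", "odp");

    static String ext(String name) {
        int dot = name.lastIndexOf('.');
        return dot < 0 ? "" : name.substring(dot + 1).toLowerCase(Locale.ROOT);
    }

    // Same order as FileProcessor: image, video, chart, pdf/office, otherwise reject
    static Type detect(String fileName) {
        String e = ext(fileName);
        if (IMAGES.contains(e)) return Type.IMAGE;
        if (VIDEOS.contains(e)) return Type.VIDEO;
        if (CHARTS.contains(e)) return Type.POLL_CHART;
        if (DOCS.contains(e)) return Type.PRESENTATION;
        throw new IllegalArgumentException("The file type cannot be converted :: " + fileName);
    }
}
```

For example, detect("Slides.PPTX") yields PRESENTATION because the extension is lower-cased first, while detect("archive.zip") is rejected.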

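Further down, any exception is caught, logged and rethrown. In the finally block, if temp exists and is a regular file it is deleted, and whether the cleanup succeeded is logged. The method then returns logs.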
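The temporary-file handling of the public processFile follows a common create, copy, use, always-delete pattern. A minimal sketch using only the JDK, assuming Files.copy as a stand-in for Commons IO's copyInputStreamToFile and a hypothetical Consumer<File> in place of the classification and conversion steps:

```java
import java.io.ByteArrayInputStream;
import java.io.File;
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.StandardCopyOption;
import java.util.UUID;
import java.util.function.Consumer;

class TempUpload {
    // Copies the stream into a uniquely named temp file, hands it to `work`, and always deletes it.
    // Returns true if the temp file is gone afterwards.
    static boolean handle(InputStream is, Consumer<File> work) throws IOException {
        String hash = UUID.randomUUID().toString(); // random name avoids odd characters and collisions
        File temp = null;
        try {
            temp = File.createTempFile(String.format("upload_%s", hash), ".tmp");
            // createTempFile already created the file, so REPLACE_EXISTING is required here
            Files.copy(is, temp.toPath(), StandardCopyOption.REPLACE_EXISTING);
            work.accept(temp);
        } finally {
            if (temp != null && temp.exists() && temp.isFile()) {
                boolean deleted = temp.delete(); // FileProcessor only logs this result
                if (!deleted) {
                    System.err.println("temp file not removed: " + temp);
                }
            }
        }
        return temp != null && !temp.exists();
    }
}
```

Because the cleanup sits in finally, the temporary file is removed both after a successful conversion and after an exception such as an unsupported format.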

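So the first processFile method stores the uploaded stream in a temporary file, determines the file type, and then calls the second processFile method to perform the conversion and record the results.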

That concludes FileProcessor.java: it provides the methods for converting uploaded files. Its source code:

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied. See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.openmeetings.core.data.file;

import static org.apache.commons.io.FileUtils.copyFile;
import static org.apache.commons.io.FileUtils.copyInputStreamToFile;
import static org.apache.openmeetings.util.OmFileHelper.getFileExt;
import static org.apache.openmeetings.util.OpenmeetingsVariables.getWebAppRootKey;

import java.io.File;
import java.io.InputStream;
import java.util.UUID;

import org.apache.openmeetings.core.converter.DocumentConverter;
import org.apache.openmeetings.core.converter.ImageConverter;
import org.apache.openmeetings.core.converter.VideoConverter;
import org.apache.openmeetings.db.dao.file.FileItemDao;
import org.apache.openmeetings.db.entity.file.BaseFileItem.Type;
import org.apache.openmeetings.db.entity.file.FileItem;
import org.apache.openmeetings.util.StoredFile;
import org.apache.openmeetings.util.process.ProcessResult;
import org.apache.openmeetings.util.process.ProcessResultList;
import org.apache.tika.exception.UnsupportedFormatException;
import org.red5.logging.Red5LoggerFactory;
import org.slf4j.Logger;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.stereotype.Component;

@Component
public class FileProcessor {
    private static final Logger log = Red5LoggerFactory.getLogger(FileProcessor.class, getWebAppRootKey());

    //Spring loaded Beans
    @Autowired
    private VideoConverter videoConverter;
    @Autowired
    private FileItemDao fileDao;
    @Autowired
    private ImageConverter imageConverter;
    @Autowired
    private DocumentConverter docConverter;

    public ProcessResultList processFile(FileItem f, InputStream is) throws Exception {
        ProcessResultList logs = new ProcessResultList();
        // Generate a random string to prevent any problems with
        // foreign characters and duplicates
        String hash = UUID.randomUUID().toString();
        File temp = null;
        try {
            temp = File.createTempFile(String.format("upload_%s", hash), ".tmp");
            copyInputStreamToFile(is, temp);

            String ext = getFileExt(f.getName());
            log.debug("file extension: {}", ext);
            //this method moves stream, so temp file MUST be created first
            StoredFile sf = new StoredFile(hash, ext, temp);
            log.debug("isAsIs: {}", sf.isAsIs());

            if (sf.isImage()) {
                f.setType(Type.Image);
            } else if (sf.isVideo()) {
                f.setType(Type.Video);
            } else if (sf.isChart()) {
                f.setType(Type.PollChart);
            } else if (sf.isPdf() || sf.isOffice()) {
                f.setType(Type.Presentation);
            } else {
                throw new UnsupportedFormatException("The file type cannot be converted :: " + f.getName());
            }
            f.setHash(hash);

            processFile(f, sf, temp, logs);
        } catch (Exception e) {
            log.debug("Error while processing the file", e);
            throw e;
        } finally {
            if (temp != null && temp.exists() && temp.isFile()) {
                log.debug("Clean up was successful ? {}", temp.delete());
            }
        }
        return logs;
    }

    private void processFile(FileItem f, StoredFile sf, File temp, ProcessResultList logs) throws Exception {
        try {
            File file = f.getFile(sf.getExt());
            log.debug("writing file to: {}", file);
            if (!file.getParentFile().exists() && !file.getParentFile().mkdirs()) {
                logs.add(new ProcessResult("Unable to create parent for file: " + file.getCanonicalPath()));
                return;
            }
            switch(f.getType()) {
                case Presentation:
                    log.debug("Office document: {}", file);
                    copyFile(temp, file);
                    // convert to pdf, thumbs, swf and xml-description
                    docConverter.convertPDF(f, sf, logs);
                    break;
                case PollChart:
                    log.debug("uploaded chart file"); // NOT implemented yet
                    break;
                case Image:
                    // convert it to PNG
                    log.debug("##### convert it to PNG: ");
                    copyFile(temp, file);
                    imageConverter.convertImage(f, sf);
                    break;
                case Video:
                    copyFile(temp, file);
                    videoConverter.convertVideo(f, sf.getExt(), logs);
                    break;
                default:
                    break;
            }
        } finally {
            f = fileDao.update(f);
            log.debug("fileId: {}", f.getId());
        }
    }
}
```

This also concludes the data/file directory: the FileProcessor class in it takes care of converting file formats.

The data/record directory

The data directory also contains the record directory, structured as follows:

It has two subdirectories, converter and listener. Let's start with converter.

The converter directory

InterviewConverterTask.java

InterviewConverterTask is, as the name says, a task for InterviewConverter, so it should describe the work to be done with the InterviewConverter class. Its imports:

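The first import is the getWebAppRootKey method from the util module. Then comes the InterviewConverter class; the rest are the Red5 logger factory, the logging class, Spring's TaskExecutor and the Spring annotations.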

The class definition:

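Apart from log, the class has two fields: TaskExecutor taskExecutor and InterviewConverter interviewConverter. TaskExecutor here is not a JDK class but Spring's interface org.springframework.core.task.TaskExecutor, which is used to run tasks asynchronously.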

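(The following introduction is adapted from the CSDN blog post "Spring's task executor (TaskExecutor) and task scheduler (TaskScheduler)" by 陈自选.) The Spring Framework provides abstractions for asynchronous execution and task scheduling through the TaskExecutor and TaskScheduler interfaces, respectively. Spring also ships implementations of these interfaces that support thread pools or delegate to CommonJ in an application server environment. Using these implementations behind common interfaces ultimately abstracts away the differences between Java SE 5, Java SE 6 and Java EE environments. Spring's TaskExecutor interface is equivalent to the java.util.concurrent.Executor interface; in fact, it originally existed mainly to abstract away the need for Java 5 when using thread pools. The interface has a single method, execute(Runnable task), which accepts a task for execution according to the semantics and configuration of the thread pool. TaskExecutor was originally created to give other Spring components a thread-pool abstraction where needed. Components such as ApplicationEventMulticaster, JMS's AbstractMessageListenerContainer and the Quartz integration all use the TaskExecutor abstraction to pool threads. However, if your beans need thread-pooling behaviour, you can also use this abstraction for your own needs.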

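Back to the source: the class provides startConversionThread, which starts the conversion on a separate thread and takes a Long recordingId. The method again uses a try-catch block. In the try block it logs a debug message and then calls taskExecutor.execute with a lambda that runs interviewConverter.startConversion(recordingId), so the conversion starts asynchronously. The catch block only logs the error. Note that it covers failures while submitting the task, not exceptions thrown later by the conversion itself, which runs on another thread.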
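Since Spring's TaskExecutor has the same execute(Runnable) method as java.util.concurrent.Executor, the startConversionThread pattern can be sketched without Spring. In this sketch a plain Executor replaces the injected TaskExecutor, and a LongConsumer is a hypothetical stand-in for the converter's startConversion(recordingId). It also shows which failures the surrounding try-catch can actually observe.

```java
import java.util.concurrent.Executor;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.RejectedExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicLong;
import java.util.function.LongConsumer;

class ConversionTaskSketch {
    private final Executor executor;        // stands in for Spring's TaskExecutor
    private final LongConsumer converter;   // hypothetical stand-in for startConversion(recordingId)

    ConversionTaskSketch(Executor executor, LongConsumer converter) {
        this.executor = executor;
        this.converter = converter;
    }

    boolean startConversionThread(long recordingId) {
        try {
            // returns immediately; the conversion runs later on a pool thread
            executor.execute(() -> converter.accept(recordingId));
            return true;
        } catch (RejectedExecutionException err) {
            // only submission failures land here; exceptions thrown inside the task do not
            System.err.println("[startConversionThread] " + err);
            return false;
        }
    }
}
```

Usage: with pool = Executors.newSingleThreadExecutor(), startConversionThread(42L) returns at once and the converter later sees 42 on the pool thread; after pool.shutdown(), a further call is rejected and the catch block reports it.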

That concludes InterviewConverterTask.java: through startConversionThread it starts the conversion task asynchronously. Its source code:

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied. See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.openmeetings.core.data.record.converter;

import static org.apache.openmeetings.util.OpenmeetingsVariables.getWebAppRootKey;

import org.apache.openmeetings.core.converter.InterviewConverter;
import org.red5.logging.Red5LoggerFactory;
import org.slf4j.Logger;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.core.task.TaskExecutor;
import org.springframework.stereotype.Component;

@Component
public class InterviewConverterTask {
    private static final Logger log = Red5LoggerFactory.getLogger(InterviewConverterTask.class, getWebAppRootKey());

    @Autowired
    private TaskExecutor taskExecutor;
    @Autowired
    private InterviewConverter interviewConverter;

    public void startConversionThread(final Long recordingId) {
        try {
            log.debug("[-1-]" + taskExecutor);

            taskExecutor.execute(() -> interviewConverter.startConversion(recordingId));
        } catch (Exception err) {
            log.error("[startConversionThread]", err);
        }
    }
}
```

RecordingConverterTask.java

RecordingConverterTask is almost identical to InterviewConverterTask: it also starts a conversion task, except that it works with the RecordingConverter class. So here is just its source code:

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied. See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.openmeetings.core.data.record.converter;

import static org.apache.openmeetings.util.OpenmeetingsVariables.getWebAppRootKey;

import org.apache.openmeetings.core.converter.RecordingConverter;
import org.red5.logging.Red5LoggerFactory;
import org.slf4j.Logger;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.core.task.TaskExecutor;
import org.springframework.stereotype.Component;

@Component
public class RecordingConverterTask {
    private static final Logger log = Red5LoggerFactory.getLogger(RecordingConverterTask.class, getWebAppRootKey());

    @Autowired
    private TaskExecutor taskExecutor;
    @Autowired
    private RecordingConverter recordingConverter;

    public void startConversionThread(final Long recordingId) {
        try {
            log.debug("[-1-]" + taskExecutor);

            taskExecutor.execute(() -> recordingConverter.startConversion(recordingId));
        } catch (Exception err) {
            log.error("[startConversionThread]", err);
        }
    }
}
```

The listener directory

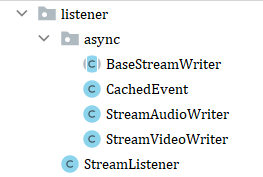

As the name suggests, the listener directory contains listeners. Its structure is shown in the figure:

It contains a subdirectory named async and the file StreamListener.java. The async directory holds BaseStreamWriter.java, CachedEvent.java, StreamAudioWriter.java and StreamVideoWriter.java.

The async directory

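async means asynchronous. I ran into this concept often while developing projects with separate front ends and back ends, mostly because fetching data from the back end takes time and therefore does not complete synchronously; there, async is used together with await to obtain the data. Its appearance under listener suggests that this code, too, deals with work that does not complete synchronously, in this case incoming stream data.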

1. BaseStreamWriter.java

BaseStreamWriter is the base class for writing stream data. Its imports:

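The first group imports the getApp method of ScopeApplicationAdapter from the core module's remote directory, which has not been covered yet (I will come back to it later), plus EXTENSION_FLV and getWebAppRootKey from the util module. The second group includes JDK classes such as File, IOException, Date, BlockingQueue, LinkedBlockingQueue and TimeUnit. The last group includes MINA's IoBuffer, the RecordingMetaDataDao data access object and the RecordingMetaData entity from the db module, OmFileHelper from the util module, the Red5 classes, and the logging class.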

The class definition:

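First, BaseStreamWriter is an abstract class that implements the Runnable interface. An instance of a class that implements Runnable can be executed by a thread, which calls its run() method.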

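The class has quite a few fields. The first is again log, followed by two constants, MINUTE_MULTIPLIER and TIME_TO_WAIT_FOR_FRAME. MINUTE_MULTIPLIER is 60 * 1000, the number of milliseconds in a minute, and TIME_TO_WAIT_FOR_FRAME is 15 * MINUTE_MULTIPLIER, i.e. how long (15 minutes, expressed in milliseconds) the writer waits for a new frame before giving up. Next come two variables, startTimeStamp (initialised to -1) and initialDelta (initialised to 0), which presumably hold the timestamp at which recording started and an initial time offset.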
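The arithmetic behind the two constants, checked in a small sketch. The class name WaitConstants and the deadline helper are hypothetical; the expressions are copied from BaseStreamWriter and its run() method. One consequence worth noting: run() initialises lastPackedRecieved to the current time plus TIME_TO_WAIT_FOR_FRAME and then exits once lastPackedRecieved + TIME_TO_WAIT_FOR_FRAME has passed, so a writer that never receives a packet waits about 30 minutes, while one that has received packets stops after 15 idle minutes.

```java
class WaitConstants {
    static final int MINUTE_MULTIPLIER = 60 * 1000;                    // milliseconds in one minute
    static final int TIME_TO_WAIT_FOR_FRAME = 15 * MINUTE_MULTIPLIER;  // 15 minutes in milliseconds

    // Same expression as the "none packets received for: {} minutes" log line in run()
    static long idleMinutes(long lastPacketMillis, long nowMillis) {
        return (nowMillis - lastPacketMillis) / MINUTE_MULTIPLIER;
    }

    // Point in time after which run() gives up, given the last-packet marker
    static long deadline(long lastPacketMillis) {
        return lastPacketMillis + TIME_TO_WAIT_FOR_FRAME;
    }
}
```

For example, with start time t0, the initial marker is t0 + TIME_TO_WAIT_FOR_FRAME, so deadline(marker) - t0 is 1,800,000 ms, i.e. 30 minutes.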

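The following fields concern the running thread. There are three booleans, running, stopping and dostopping, whose meanings are given in the comments: the thread is running, the thread is stopped, and the thread will be stopped as soon as the queue is empty. Then come ITagWriter writer, a Red5 interface that writes tags into a streamable (here FLV) file; metaDataId, the ID of the recording metadata; Date startedSessionTimeDate, the time the session started; File file; IScope scope, where IScope is Red5's scope interface; the boolean isScreenData, which indicates whether the stream carries screen-sharing data; the String streamName, the name of the stream; and RecordingMetaDataDao metaDataDao, a data access object from the db module's dao directory. Last is a blocking queue (a LinkedBlockingQueue) whose elements are CachedEvent, the class we will look at next.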

Continuing with the class body:

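Skipping the constructor for now (it stores its arguments, calls init(), sets the recording metadata status to STARTED and then calls open()), let's look at the methods below it: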

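As mentioned above, BaseStreamWriter is runnable, so it also has a set of methods resembling a thread's lifecycle. The first is init. It starts by creating file as a new File whose directory and name are derived from scope and streamName (an .flv file in the scope's streams subdirectory). It then obtains a streamable file factory, factory, from the scope. Next, it checks file: if it is not a regular file (for example, because a previously existing file has been deleted), it is created; otherwise, if the file is not writable, an IOException saying the file is read-only is thrown. Finally, writer is obtained from the streamable file that the factory's service returns for file. After these steps, the fields are fully initialised.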
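The file checks in init() can be isolated into a JDK-only sketch. The naming rule streamName + ".flv" is a hypothetical simplification of OmFileHelper.getName(streamName, EXTENSION_FLV), and the Red5 factory and writer steps are left out. Unlike the original, which ignores the result of createNewFile(), the sketch fails loudly if the file still does not exist afterwards.

```java
import java.io.File;
import java.io.IOException;

class StreamFileInit {
    // Mirrors the checks in BaseStreamWriter.init(): create the file when it is not a
    // regular file, refuse to continue when an existing file is read-only.
    static File prepare(File dir, String streamName) throws IOException {
        // hypothetical naming rule; OmFileHelper's real logic is not reproduced here
        File file = new File(dir, streamName + ".flv");
        if (!file.isFile()) {
            // the previously existing file may have been deleted
            if (!file.createNewFile() && !file.isFile()) {
                throw new IOException("Could not create " + file);
            }
        } else if (!file.canWrite()) {
            throw new IOException("The file is read-only");
        }
        return file;
    }
}
```

Calling prepare twice with the same arguments returns the same writable file, which matches the original's behaviour of reusing an existing recording file.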

Continuing:

Next is the open method. It sets running to true, marking the thread as running, then creates a new Thread with this as its Runnable, named "Recording " followed by the file name, and starts it.

Then comes the stop method, which sets dostopping to true, meaning the writer will stop as soon as the blocking queue is empty.

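The run method below implements Runnable's run method. It processes the events in the queue one after another in a while loop that keeps going until stopping becomes true. In each iteration it polls the queue with a short timeout; if a CachedEvent arrives, it records the current time and passes the event to packetReceived. If nothing arrives and either a stop has been requested (dostopping) or no packet has arrived for TIME_TO_WAIT_FOR_FRAME, it sets stopping to true and calls closeStream. Log messages are written along the way, including a "still listening" message every 5000 iterations.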
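The open/append/stop/run lifecycle can be reduced to a small producer-consumer sketch. The class name, the String packets and the written list (standing in for the file on disk) are hypothetical; the flag names and the overall loop follow BaseStreamWriter. A few deliberate deviations are noted in the comments and below.

```java
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

class QueueWriterSketch implements Runnable {
    private final BlockingQueue<String> queue = new LinkedBlockingQueue<>();
    final List<String> written = new CopyOnWriteArrayList<>(); // stands in for the file on disk
    private volatile boolean running;     // thread is running
    private volatile boolean stopping;    // loop should exit
    private volatile boolean dostopping;  // stop as soon as the queue is empty
    private final long idleTimeoutMillis;
    private Thread thread;

    QueueWriterSketch(long idleTimeoutMillis) {
        this.idleTimeoutMillis = idleTimeoutMillis;
    }

    void open() {
        running = true;
        thread = new Thread(this, "Recording sketch");
        thread.start();
    }

    void append(String packet) throws InterruptedException {
        if (!running) {
            throw new IllegalStateException("Append called before the Thread was started!");
        }
        queue.put(packet);
    }

    void stop() {
        dostopping = true;
    }

    void join() throws InterruptedException {
        thread.join();
    }

    @Override
    public void run() {
        long lastReceived = System.currentTimeMillis();
        while (!stopping) {
            try {
                String item = queue.poll(100, TimeUnit.MILLISECONDS);
                if (item != null) {
                    lastReceived = System.currentTimeMillis();
                    written.add(item);                       // packetReceived(item) in the original
                } else if ((dostopping && queue.isEmpty())   // extra isEmpty() check, see below
                        || lastReceived + idleTimeoutMillis < System.currentTimeMillis()) {
                    stopping = true;
                    written.add("<closed>");                 // closeStream() in the original
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();          // the original logs and keeps looping
                stopping = true;
            }
        }
    }
}
```

Design notes on the sketch: the flags are volatile so that stop(), called from another thread, is guaranteed to become visible to the loop (the original uses plain booleans); the poll timeout is 100 ms (the original passes TimeUnit.MICROSECONDS); and the queue.isEmpty() re-check prevents a packet appended just before stop() from being skipped if it arrives right after a poll times out.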

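The remaining methods are simpler. packetReceived is abstract: subclasses implement it to write the actual packet data to disk and calculate any additional information needed. closeStream closes writer, calls the abstract internalCloseStream hook, and sets the stream status in the recording metadata to STOPPED, which is the bit the converter task waits for. append puts an event into the queue and throws an IllegalStateException if the thread has not been started yet. write wraps a timestamp, data type and IoBuffer in a Tag and passes it to writer.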
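write() only fills in a Red5 Tag (data type, body size, timestamp, body) and leaves serialisation to the ITagWriter. To illustrate what an FLV tag writer ultimately puts in front of each body, here is a sketch of the 11-byte FLV tag header as laid out in Adobe's FLV file format specification: TagType (1 byte: 8 audio, 9 video, 18 script data), DataSize (3 bytes), Timestamp (lower 3 bytes), TimestampExtended (upper byte) and StreamID (3 bytes, always 0). This is an illustration of the format, not Red5's implementation.

```java
import java.nio.ByteBuffer;

class FlvTagHeader {
    static final byte AUDIO = 8, VIDEO = 9, SCRIPT_DATA = 18;

    // Builds the 11-byte header that precedes each tag body in an FLV file.
    static byte[] header(byte type, int bodySize, int timestamp) {
        ByteBuffer buf = ByteBuffer.allocate(11);          // big-endian by default, as FLV requires
        buf.put(type);
        putUi24(buf, bodySize);
        putUi24(buf, timestamp & 0xFFFFFF);                // lower 24 bits of the millisecond timestamp
        buf.put((byte) ((timestamp >>> 24) & 0xFF));       // TimestampExtended: upper 8 bits
        putUi24(buf, 0);                                   // StreamID is always 0
        return buf.array();
    }

    private static void putUi24(ByteBuffer buf, int v) {
        buf.put((byte) ((v >>> 16) & 0xFF));
        buf.put((byte) ((v >>> 8) & 0xFF));
        buf.put((byte) (v & 0xFF));
    }
}
```

For a 5-byte video body at timestamp 0x01020304, the header is {9, 0, 0, 5, 2, 3, 4, 1, 0, 0, 0}: the timestamp's top byte goes into the extended field after the lower three bytes.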

That concludes BaseStreamWriter: it runs as a thread that works through a queue of events and writes the data to disk. Its source code:

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied. See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.openmeetings.core.data.record.listener.async;

import static org.apache.openmeetings.core.remote.ScopeApplicationAdapter.getApp;
import static org.apache.openmeetings.util.OmFileHelper.EXTENSION_FLV;
import static org.apache.openmeetings.util.OpenmeetingsVariables.getWebAppRootKey;

import java.io.File;
import java.io.IOException;
import java.util.Date;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

import org.apache.mina.core.buffer.IoBuffer;
import org.apache.openmeetings.db.dao.record.RecordingMetaDataDao;
import org.apache.openmeetings.db.entity.record.RecordingMetaData;
import org.apache.openmeetings.db.entity.record.RecordingMetaData.Status;
import org.apache.openmeetings.util.OmFileHelper;
import org.red5.io.IStreamableFile;
import org.red5.io.ITag;
import org.red5.io.ITagWriter;
import org.red5.io.flv.impl.Tag;
import org.red5.logging.Red5LoggerFactory;
import org.red5.server.api.scope.IScope;
import org.red5.server.api.service.IStreamableFileService;
import org.red5.server.api.stream.IStreamableFileFactory;
import org.red5.server.stream.StreamableFileFactory;
import org.red5.server.util.ScopeUtils;
import org.slf4j.Logger;

public abstract class BaseStreamWriter implements Runnable {
    private static final Logger log = Red5LoggerFactory.getLogger(BaseStreamWriter.class, getWebAppRootKey());
    private static final int MINUTE_MULTIPLIER = 60 * 1000;
    public static final int TIME_TO_WAIT_FOR_FRAME = 15 * MINUTE_MULTIPLIER;
    protected int startTimeStamp = -1;
    protected long initialDelta = 0;

    // thread is running
    private boolean running = false;
    // thread is stopped
    private boolean stopping = false;
    // thread will be stopped as soon as the queue is empty
    private boolean dostopping = false;

    protected ITagWriter writer = null;
    protected Long metaDataId = null;
    protected Date startedSessionTimeDate = null;
    protected File file;
    protected IScope scope;
    protected boolean isScreenData = false;
    protected String streamName = "";
    protected final RecordingMetaDataDao metaDataDao;
    private final BlockingQueue<CachedEvent> queue = new LinkedBlockingQueue<>();

    public BaseStreamWriter(String streamName, IScope scope, Long metaDataId, boolean isScreenData) {
        startedSessionTimeDate = new Date();
        this.isScreenData = isScreenData;
        this.streamName = streamName;
        this.metaDataId = metaDataId;
        this.metaDataDao = getApp().getOmBean(RecordingMetaDataDao.class);
        this.scope = scope;
        try {
            init();
        } catch (IOException ex) {
            log.error("##REC:: [BaseStreamWriter] Could not init Thread", ex);
        }
        RecordingMetaData metaData = metaDataDao.get(metaDataId);
        metaData.setStreamStatus(Status.STARTED);
        metaDataDao.update(metaData);
        open();
    }

    /**
     * Initialization
     *
     * @throws IOException
     *             I/O exception
     */
    private void init() throws IOException {
        file = new File(OmFileHelper.getStreamsSubDir(scope.getName()), OmFileHelper.getName(streamName, EXTENSION_FLV));

        IStreamableFileFactory factory = (IStreamableFileFactory) ScopeUtils.getScopeService(scope, IStreamableFileFactory.class,
                StreamableFileFactory.class);

        if (!file.isFile()) {
            // Maybe the (previously existing) file has been deleted
            file.createNewFile();
        } else if (!file.canWrite()) {
            throw new IOException("The file is read-only");
        }

        IStreamableFileService service = factory.getService(file);
        IStreamableFile flv = service.getStreamableFile(file);
        writer = flv.getWriter();
    }

    private void open() {
        running = true;
        new Thread(this, "Recording " + file.getName()).start();
    }

    public void stop() {
        dostopping = true;
    }

    @Override
    public void run() {
        log.debug("##REC:: stream writer started");
        long lastPackedRecieved = System.currentTimeMillis() + TIME_TO_WAIT_FOR_FRAME;
        long counter = 0;
        while (!stopping) {
            try {
                CachedEvent item = queue.poll(100, TimeUnit.MICROSECONDS);
                if (item != null) {
                    log.trace("##REC:: got packet");
                    lastPackedRecieved = System.currentTimeMillis();
                    if (dostopping) {
                        log.trace("metadatId: {} :: Recording stopped but still packets to write to file!", metaDataId);
                    }

                    packetReceived(item);
                } else if (dostopping || lastPackedRecieved + TIME_TO_WAIT_FOR_FRAME < System.currentTimeMillis()) {
                    log.debug("##REC:: none packets received for: {} minutes, exiting", (System.currentTimeMillis() - lastPackedRecieved) / MINUTE_MULTIPLIER);
                    stopping = true;
                    closeStream();
                }
                if (++counter % 5000 == 0) {
                    log.debug("##REC:: Stream writer is still listening:: {}", file.getName());
                }
            } catch (InterruptedException e) {
                log.error("##REC:: [run]", e);
            }
        }
        log.debug("##REC:: stream writer stopped");
    }

    /**
     * Write the actual packet data to the disk and do calculate any needed additional information
     *
     * @param streampacket - received packet
     */
    public abstract void packetReceived(CachedEvent streampacket);

    protected abstract void internalCloseStream();

    /**
     * called when the stream is finished written on the disk
     */
    public void closeStream() {
        try {
            writer.close();
        } catch (Exception err) {
            log.error("[closeStream, close writer]", err);
        }
        internalCloseStream();
        // Write the complete Bit to the meta data, the converter task will wait for this bit!
        try {
            RecordingMetaData metaData = metaDataDao.get(metaDataId);
            log.debug("##REC:: Stream Status was: {} has been written for: {}", metaData.getStreamStatus(), metaDataId);
            metaData.setStreamStatus(Status.STOPPED);
            metaDataDao.update(metaData);
        } catch (Exception err) {
            log.error("##REC:: [closeStream, complete Bit]", err);
        }
    }

    public void append(CachedEvent streampacket) {
        if (!running) {
            throw new IllegalStateException("Append called before the Thread was started!");
        }
        try {
            queue.put(streampacket);
            log.trace("##REC:: Q put, successful: {}", queue.size());
        } catch (InterruptedException ignored) {
            log.error("##REC:: [append]", ignored);
        }
    }

    protected void write(int timeStamp, byte type, IoBuffer data) throws IOException {
        log.trace("timeStamp :: {}", timeStamp);
        ITag tag = new Tag();
        tag.setDataType(type);

        tag.setBodySize(data.limit());
        tag.setTimestamp(timeStamp);
        tag.setBody(data);

        writer.writeTag(tag);
    }
}
```

2. CachedEvent.java

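CachedEvent already appeared as the element type of the blocking queue above. Literally, it means a cached event, i.e. a piece of work waiting to be processed. Its imports: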

There are only a few imports: first Date, then IoBuffer, IStreamPacket and FrameType, all of which we have seen before.

The class definition:

CachedEvent implements the IStreamPacket interface. IStreamPacket, a stream data packet, is another interface from Red5; it exposes the packet's data type, timestamp and data.

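Its fields are the data type dataType; timestamp, which according to the comment is the time elapsed since the microphone was turned on; the IoBuffer data; the Date currentTime, the actual time at which the packet entered the server; and frameType of the enum type FrameType, initialised to FrameType.UNKNOWN. These are followed by getters and setters, three of which, getDataType, getTimestamp and getData, implement the methods of IStreamPacket.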

All in all, CachedEvent is an event class: it represents one cached stream packet and carries all of its information. Its code:

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied. See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.openmeetings.core.data.record.listener.async;

import java.util.Date;

import org.apache.mina.core.buffer.IoBuffer;
import org.red5.server.api.stream.IStreamPacket;
import org.red5.server.net.rtmp.event.VideoData.FrameType;

public class CachedEvent implements IStreamPacket {
    private byte dataType;
    private int timestamp; //this is the timeStamp, showing the time elapsed since the microphone was turned on
    private IoBuffer data;
    private Date currentTime; //this is the actually current timeStamp when the packet with audio data did enter the server
    private FrameType frameType = FrameType.UNKNOWN;

    public Date getCurrentTime() {
        return currentTime;
    }

    public void setCurrentTime(Date currentTime) {
        this.currentTime = currentTime;
    }

    public void setDataType(byte dataType) {
        this.dataType = dataType;
    }

    public void setTimestamp(int timestamp) {
        this.timestamp = timestamp;
    }

    public void setData(IoBuffer data) {
        this.data = data;
    }

    @Override
    public byte getDataType() {
        return dataType;
    }

    @Override
    public int getTimestamp() {
        return timestamp;
    }

    @Override
    public IoBuffer getData() {
        return data;
    }

    public FrameType getFrameType() {
        return frameType;
    }

    public void setFrameType(FrameType frameType) {
        this.frameType = frameType;
    }
}
```
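A dependency-free sketch of the same idea: a small value class implementing a packet interface. StreamPacket is a hypothetical mirror of Red5's IStreamPacket (only the three getters that CachedEvent overrides), java.nio.ByteBuffer replaces MINA's IoBuffer, and the FrameType enum is a hypothetical subset of Red5's VideoData.FrameType. Unlike CachedEvent, which is filled through setters, the sketch takes its core fields in the constructor.

```java
import java.nio.ByteBuffer;
import java.util.Date;

// Hypothetical mirror of Red5's IStreamPacket
interface StreamPacket {
    byte getDataType();
    int getTimestamp();
    ByteBuffer getData();
}

class CachedEventSketch implements StreamPacket {
    enum FrameType { UNKNOWN, KEYFRAME, INTERFRAME } // hypothetical subset of Red5's FrameType

    private final byte dataType;
    private final int timestamp;      // time elapsed since the publisher's stream started
    private final ByteBuffer data;
    private final Date currentTime;   // wall-clock time the packet reached the server
    private FrameType frameType = FrameType.UNKNOWN;

    CachedEventSketch(byte dataType, int timestamp, ByteBuffer data) {
        this.dataType = dataType;
        this.timestamp = timestamp;
        this.data = data;
        this.currentTime = new Date();
    }

    @Override public byte getDataType() { return dataType; }
    @Override public int getTimestamp() { return timestamp; }
    @Override public ByteBuffer getData() { return data; }

    Date getCurrentTime() { return currentTime; }
    FrameType getFrameType() { return frameType; }
    void setFrameType(FrameType frameType) { this.frameType = frameType; }
}
```

Keeping both the stream-relative timestamp and the server arrival time, as CachedEvent does, lets a writer compare when a packet was produced with when it was actually received.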

3. StreamVideoWriter.java

StreamVideoWriter is the writer for video streams. Its imports:

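It first imports the getWebAppRootKey method and the KEYFRAME constant of the FrameType enum we just saw. It then imports Date, IoBuffer and other classes we have already seen many times, so I won't go into them.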

The class definition:

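StreamVideoWriter extends the BaseStreamWriter class analysed above, which means it runs through the same thread-based lifecycle. Besides the fields it inherits from BaseStreamWriter, it defines log and startedSessionScreenTimeDate, the date on which the task started. Below them is its constructor, which simply calls the superclass constructor.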

Next, it overrides packetReceived and internalCloseStream; all other methods are inherited from the superclass. So I won't analyse its methods any further, which completes this class.
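The split between BaseStreamWriter and its subclasses is the template method pattern: the base class owns the lifecycle and calls two abstract hooks that each subclass fills in. A minimal sketch with hypothetical names; what the video subclass does in packetReceived (tracking the first timestamp and logging relative times) is made up for illustration and does not reproduce StreamVideoWriter's actual logic.

```java
import java.util.ArrayList;
import java.util.List;

abstract class BaseWriterSketch {
    final List<String> log = new ArrayList<>();

    // Hook called for each queued packet (BaseStreamWriter.packetReceived)
    abstract void packetReceived(int timestamp, byte[] data);

    // Subclass-specific cleanup (BaseStreamWriter.internalCloseStream)
    protected abstract void internalCloseStream();

    // Shared close logic; the subclass hook runs in the middle, as in closeStream()
    final void closeStream() {
        log.add("writer closed");
        internalCloseStream();
        log.add("status STOPPED");
    }
}

class VideoWriterSketch extends BaseWriterSketch {
    private int firstTimestamp = -1;

    @Override
    void packetReceived(int timestamp, byte[] data) {
        if (firstTimestamp < 0) {
            firstTimestamp = timestamp; // hypothetical: remember where the stream started
        }
        log.add("video " + (timestamp - firstTimestamp) + "ms " + data.length + "B");
    }

    @Override
    protected void internalCloseStream() {
        log.add("video specific cleanup");
    }
}
```

Because closeStream is final in the sketch, every subclass gets the same closing order (close the writer, run its own cleanup, then mark the metadata as stopped) and can only customise the middle step.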

4. StreamAudioWriter.java

StreamAudioWriter is, as the name suggests, the writer for audio streams. Its structure and content are similar to those of StreamVideoWriter, so I'll skip it.

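That completes the async directory. The code in it reads and writes stream data. The asynchronous part is that packets are fed through a queue and written by a separate thread, and the classes also provide the methods that write the data to disk.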

StreamListener.java

StreamListener listens to streams, probably video and audio streams. Its imports:

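It first imports getWebAppRootKey and Date. Next come the four classes from the async directory just analysed, which need no further comment. Finally it imports Red5 classes and the logging class.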

The class definition:

StreamListener implements Red5's IStreamListener interface, which defines a packetReceived method.

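Besides log, the class defines a BaseStreamWriter field, streamWriter. Its constructor takes boolean isAudio, String streamName, IScope scope, Long metaDataId, boolean isScreenData and boolean isInterview. The constructor first assigns streamWriter, deciding between an audio and a video stream based on isAudio: if isAudio is true, the stream is audio and streamWriter is initialised as a StreamAudioWriter; otherwise it is initialised as a StreamVideoWriter.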
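The constructor's choice boils down to picking a concrete subclass once and storing it behind the base type. A minimal sketch with hypothetical stub classes (the real writers also take the scope, metadata ID and screen flag, which are omitted here):

```java
// Hypothetical stand-ins for BaseStreamWriter, StreamAudioWriter and StreamVideoWriter
abstract class WriterStub {
    final String streamName;

    WriterStub(String streamName) {
        this.streamName = streamName;
    }
}

class AudioWriterStub extends WriterStub {
    AudioWriterStub(String streamName) { super(streamName); }
}

class VideoWriterStub extends WriterStub {
    VideoWriterStub(String streamName) { super(streamName); }
}

class ListenerSketch {
    final WriterStub streamWriter; // declared as the base type, like BaseStreamWriter streamWriter

    ListenerSketch(boolean isAudio, String streamName) {
        // pick the concrete writer once; later calls only use the base-class API
        streamWriter = isAudio ? new AudioWriterStub(streamName) : new VideoWriterStub(streamName);
    }
}
```

Since the field is declared with the base type, the rest of the listener can forward packets and close the stream without caring whether it is recording audio or video.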

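Finally, it implements packetReceived and closeStream: the former receives data packets and the latter closes the stream. Both were covered in the code above, so I won't repeat them.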

That concludes StreamListener.java. Its main job is to receive video and audio stream data, which it does by delegating to a stream writer (a BaseStreamWriter subclass).

This also completes the data directory, which handles converting file formats and listening to and writing stream data.

Summary

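This post completed the analysis of the data directory in the core module, which means the bulk of the core module's code has now been covered. What remains is much smaller, so the next tasks will be easier. Through this analysis I learned how to control a thread's lifecycle and work with data streams in Java. By now I have already seen most of the syntax I encounter, so the analysis goes much faster than at the beginning, and I expect to finish the remaining source code in the next two posts.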
