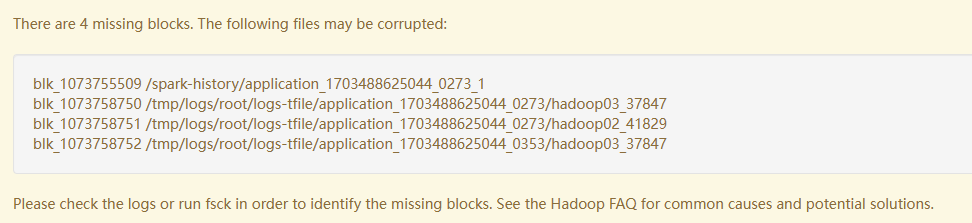

There are 4 missing blocks. The following files may be corrupted

Please check the logs or run fsck in order to identify the missing blocks. See the Hadoop FAQ for common causes and potential solutions.

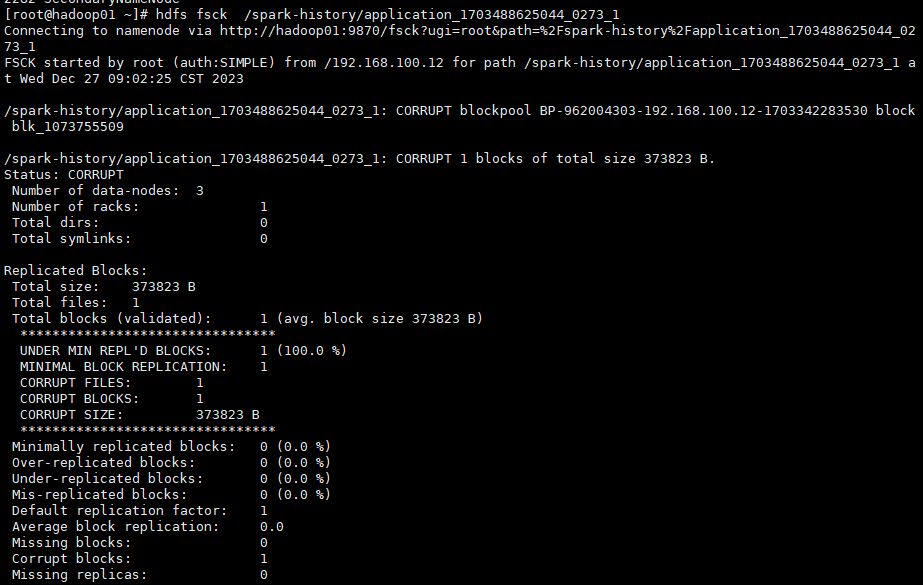

Step 1: Check which files are missing blocks

hdfs fsck / -list-corruptfileblocks

The output shows:

blk_1073755509 /spark-history/application_1703488625044_0273_1

blk_1073758750 /tmp/logs/root/logs-tfile/application_1703488625044_0273/hadoop03_37847

blk_1073758751 /tmp/logs/root/logs-tfile/application_1703488625044_0273/hadoop02_41829

blk_1073758752 /tmp/logs/root/logs-tfile/application_1703488625044_0353/hadoop03_37847

Each of these files is indeed missing one block. The files are corrupted and cannot be recovered.
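The listing above pairs each block ID with the file it belongs to. As a minimal sketch, assuming the listing keeps that two-column `blk_<id> <path>` shape and that paths contain no whitespace, the affected file paths can be extracted with a small helper. The function name `corrupt_paths` is hypothetical and not part of Hadoop.

```shell
# Hypothetical helper (not part of Hadoop): read a block listing on stdin
# and print each affected file path once.
# Assumes lines look like "blk_<id> <path>" and paths contain no whitespace.
corrupt_paths() {
  awk '$1 ~ /^blk_/ { print $2 }' | LC_ALL=C sort -u
}
```

For example, `hdfs fsck / -list-corruptfileblocks | corrupt_paths` would feed it a live listing. Lines that do not start with `blk_`, such as the fsck summary, are skipped.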

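Step 2: Delete the files with missing blocks

Because this is a test environment, the affected files can simply be deleted. In production, weigh that decision carefully first.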

hdfs fsck -delete /spark-history/application_1703488625044_0273_1

hdfs fsck -delete /tmp/logs/root/logs-tfile/application_1703488625044_0273/hadoop03_37847

hdfs fsck -delete /tmp/logs/root/logs-tfile/application_1703488625044_0273/hadoop02_41829

hdfs fsck -delete /tmp/logs/root/logs-tfile/application_1703488625044_0353/hadoop03_37847
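The four commands above differ only in the path, so they could also be driven from a list of paths. The sketch below is one possible way to do that, not the method used in this post. `delete_corrupt` and the `DRY_RUN` variable are hypothetical names. Because `-delete` cannot be undone, the helper defaults to printing the commands instead of running them.

```shell
# Hypothetical helper: read HDFS paths on stdin, one per line, and run
# `hdfs fsck <path> -delete` for each of them.
# With DRY_RUN=1 (the default) the commands are only printed for review.
delete_corrupt() {
  while IFS= read -r hdfs_path; do
    [ -z "$hdfs_path" ] && continue      # skip blank lines
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "hdfs fsck $hdfs_path -delete"
    else
      # Redirect stdin so hdfs cannot consume the remaining path list.
      hdfs fsck "$hdfs_path" -delete </dev/null
    fi
  done
}
```

Review the printed commands first, then set `DRY_RUN=0` to actually delete the files.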

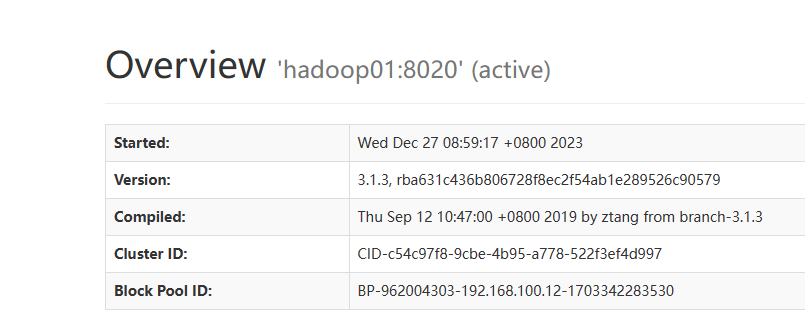

Step 3: Check the cluster health
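One way to confirm the result is to rerun fsck on the root path and look at its closing status line, which reports the filesystem as either HEALTHY or CORRUPT. A minimal sketch, assuming that status wording; the helper name `fs_is_healthy` is hypothetical.

```shell
# Hypothetical helper: succeed (exit 0) only if the fsck output read from
# stdin contains the "is HEALTHY" status line.
fs_is_healthy() {
  grep -q 'is HEALTHY'
}
```

A possible invocation is `hdfs fsck / | fs_is_healthy && echo "HDFS is healthy"`.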

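This post describes discovering lost files in a Hadoop environment, using `fsck` to find the missing blocks, and resolving the problem by deleting the corrupted files and then checking the cluster health.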
