First, the link to the official site.

This post follows the official documentation.

Step 1: add the dependency:

    <dependency>
        <groupId>org.apache.storm</groupId>
        <artifactId>storm-redis</artifactId>
        <version>${storm.version}</version>
        <type>jar</type>
    </dependency>

Now we can start writing the code.

First, write the Spout, which generates the data and emits it.

    public static class DataSourceSpout extends BaseRichSpout {

        // The spout emits data, so it needs a SpoutOutputCollector
        private SpoutOutputCollector collector;

        // One Random instance reused across nextTuple() calls
        private final Random random = new Random();

        // A fixed array of words used as the test data source
        public static final String[] words = new String[]{"apple", "banana", "orange", "strawberry"};

        @Override
        public void open(Map conf, TopologyContext context, SpoutOutputCollector collector) {
            // Keep a reference to the SpoutOutputCollector
            this.collector = collector;
        }

        @Override
        public void nextTuple() {
            // Generate test data by picking a random word from the array
            String word = words[random.nextInt(words.length)];
            // Sleep for one second so the console is not flooded
            Utils.sleep(1000);
            // Emit the word
            this.collector.emit(new Values(word));
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            // Declare the output field; it must match the value emitted above
            declarer.declare(new Fields("word"));
        }
    }

Next, write the first bolt, which performs the word count and emits the result.

    public static class CountWords extends BaseRichBolt {

        // The bolt emits data, so it needs an OutputCollector
        private OutputCollector collector;

        // Running count for each word
        Map<String, Integer> map = new HashMap<>();

        @Override
        public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
            // Keep a reference to the OutputCollector
            this.collector = collector;
        }

        @Override
        public void execute(Tuple input) {
            // Read the word emitted by the spout
            String word = input.getStringByField("word");
            Integer i = map.get(word);
            if (i == null) {
                i = 0;
            }
            i++;
            map.put(word, i);
            // Print a line to make testing easier
            System.out.println("emit : " + word + " " + map.get(word));
            // Emit the word together with its current count
            this.collector.emit(new Values(word, map.get(word)));
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            // Declare the output fields; they must match the values emitted above
            declarer.declare(new Fields("word", "count"));
        }
    }
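The counting step in `execute` can also be written with `Map.merge`, which folds the null check, the increment and the put into a single call. The following is a minimal standalone sketch in plain Java with no Storm dependency; the class name `WordCounter` and its `increment` method are hypothetical names used only for illustration and are not part of the topology in this post.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper (not from the original post): the same counting
// logic as CountWords.execute, isolated from Storm so it can run alone.
class WordCounter {
    private final Map<String, Integer> counts = new HashMap<>();

    // Adds one to the running count for the word and returns the new count.
    public int increment(String word) {
        return counts.merge(word, 1, Integer::sum);
    }

    public static void main(String[] args) {
        WordCounter counter = new WordCounter();
        for (String word : new String[]{"apple", "banana", "apple"}) {
            System.out.println("emit : " + word + " " + counter.increment(word));
        }
    }
}
```

Inside the bolt, the value returned by `merge` could be emitted directly instead of calling `map.get(word)` again.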

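Now write the second bolt, which receives the word and count from the bolt above and saves them to Redis.

The code for this bolt is copied straight from the official documentation.

Since we need to store data, copy the store mapper snippet over and modify it.

Only one place needs to change.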

    public static class WordCountStoreMapper implements RedisStoreMapper {

        private RedisDataTypeDescription description;

        // Changing hashKey changes the key under which the data is finally stored in Redis
        private final String hashKey = "wc";

        public WordCountStoreMapper() {
            description = new RedisDataTypeDescription(
                    RedisDataTypeDescription.RedisDataType.HASH, hashKey);
        }

        public RedisDataTypeDescription getDataTypeDescription() {
            return description;
        }

        public String getKeyFromTuple(ITuple tuple) {
            return tuple.getStringByField("word");
        }

        public String getValueFromTuple(ITuple tuple) {
            // This is the only place that needs to change.
            // The count emitted upstream is an Integer, so read it with tuple.getIntegerByField.
            // The method must return a String, so append an empty string to convert the value.
            return tuple.getIntegerByField("count") + "";
        }
    }
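To make the mapping concrete: with the `HASH` data type, `hashKey` names the Redis hash, `getKeyFromTuple` supplies the field and `getValueFromTuple` supplies the value, so each tuple ends up roughly like `HSET wc <word> <count>`. The sketch below models that layout with nested in-memory maps instead of a real Redis connection. `HashLayoutSketch`, `store` and `hash` are hypothetical names, and the code only illustrates the resulting data structure, not how `RedisStoreBolt` is implemented.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical in-memory model (an assumption for illustration, not storm-redis code).
class HashLayoutSketch {
    // Outer map: Redis key -> hash; inner map: field -> value (strings, as in Redis).
    private final Map<String, Map<String, String>> redis = new LinkedHashMap<>();

    // Mimics "HSET hashKey word count": a later write to the same field overwrites the earlier one.
    public void store(String hashKey, String word, int count) {
        redis.computeIfAbsent(hashKey, k -> new LinkedHashMap<>())
             .put(word, String.valueOf(count));
    }

    // Returns the hash stored under hashKey, or an empty map if there is none.
    public Map<String, String> hash(String hashKey) {
        return redis.getOrDefault(hashKey, new LinkedHashMap<>());
    }

    public static void main(String[] args) {
        HashLayoutSketch sketch = new HashLayoutSketch();
        sketch.store("wc", "apple", 1);
        sketch.store("wc", "banana", 1);
        sketch.store("wc", "apple", 2);
        System.out.println(sketch.hash("wc"));
    }
}
```

Because every write for a word overwrites the same field, the hash always holds the latest count per word rather than a history of counts.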

Finally, write the main method.

This is also based on the official documentation.

    public static void main(String[] args) {
        // Copied from the official documentation; only the host and port need to change
        JedisPoolConfig poolConfig = new JedisPoolConfig.Builder()
                .setHost("192.168.0.133").setPort(6379).build();
        RedisStoreMapper storeMapper = new WordCountStoreMapper();
        RedisStoreBolt storeBolt = new RedisStoreBolt(poolConfig, storeMapper);

        // Create a builder and chain the components one after another
        TopologyBuilder builder = new TopologyBuilder();
        builder.setSpout("DataSourceSpout", new DataSourceSpout());
        builder.setBolt("CountWords", new CountWords()).shuffleGrouping("DataSourceSpout");
        builder.setBolt("storeBolt", storeBolt).shuffleGrouping("CountWords");

        // Test in local mode: submit the topology to a LocalCluster to start it
        LocalCluster cluster = new LocalCluster();
        cluster.submitTopology("LocalWCStormRedisTop", new Config(), builder.createTopology());
    }

Run the topology and check the console:

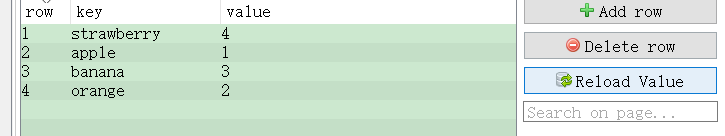

Check the result in RDM (Redis Desktop Manager):

Each click on Reload Value shows the latest counts.

That completes the Storm and Redis integration.
