Here's the code first:

import requests

from bs4 import BeautifulSoup

headers={

"User-Agent":"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.75 Safari/537.36"

}

url="https://www.shicimingju.com/book/sanguoyanyi.html"

page_text=requests.get(url=url,headers=headers).text

#在首页中解析出章节的标题和详情页的url

#实例化BeautifulSoup对象,需要将页面源码数据加载到该对象中

soup=BeautifulSoup(page_text,'lxml')

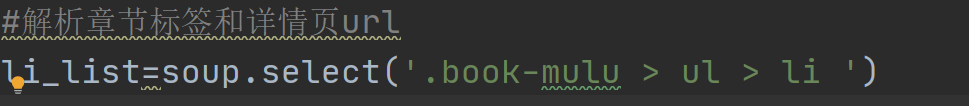

#解析章节标签和详情页url

li_list=soup.select('.book-mulu > ul > li ')

#持久化储存

fp=open('./sanguo.txt','w',encoding='utf-8')

for li in li_list:

title=li.a.string

detail_url='https://www.shicimingju.com'+li.a['href']

#对详情页发请求,解析出章节内容

detail_page_text=requests.get(url=detail_url,headers=headers).text

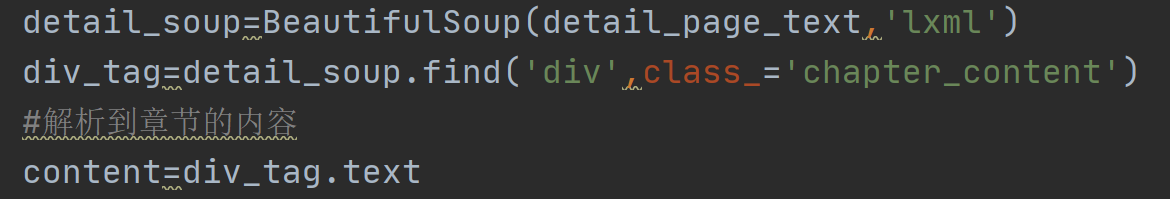

#解析出详情页中相关的章节内容

detail_soup=BeautifulSoup(detail_page_text,'lxml')

div_tag=detail_soup.find('div',class_='chapter_content')

#解析到章节的内容

content=div_tag.text

fp.write(title+':'+content+'\n')

print(title,'爬取成功')
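To check the parsing logic without hitting the site, the two parsing steps can be pulled into small functions and run against a local HTML string. This is a sketch under assumptions: the function names `parse_index` and `parse_chapter` and the sample HTML are hypothetical, simplified stand-ins for the real pages (whose markup may have changed since this post was written). It swaps the string concatenation for `urllib.parse.urljoin` and uses the built-in `html.parser` so that `lxml` is not required.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

BASE_URL = "https://www.shicimingju.com"


def parse_index(html):
    """Return (title, absolute_url) pairs from the chapter list on the index page."""
    soup = BeautifulSoup(html, "html.parser")
    chapters = []
    for li in soup.select(".book-mulu > ul > li"):
        a = li.a
        if a is None:  # skip list items without a link
            continue
        chapters.append((a.get_text(strip=True), urljoin(BASE_URL, a["href"])))
    return chapters


def parse_chapter(html):
    """Return the text of <div class="chapter_content">, or None if it is missing."""
    soup = BeautifulSoup(html, "html.parser")
    div_tag = soup.find("div", class_="chapter_content")
    return div_tag.get_text(strip=True) if div_tag else None


# Hypothetical, simplified markup standing in for the real pages
index_html = """
<div class="book-mulu"><ul>
  <li><a href="/book/sanguoyanyi/1.html">第一回</a></li>
  <li><a href="/book/sanguoyanyi/2.html">第二回</a></li>
</ul></div>
"""
chapter_html = '<div class="chapter_content"><p>滚滚长江东逝水</p></div>'

for title, link in parse_index(index_html):
    print(title, link)
print(parse_chapter(chapter_html))
```

Keeping the parsing separate from the network requests means a selector change on the site only requires editing and re-testing these two functions.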

Website:

https://www.shicimingju.com/book/sanguoyanyi.html

Notes on parts of the code:

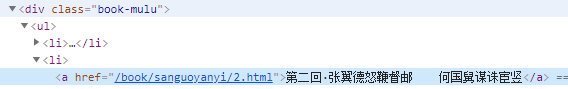

Press F12 to open the browser's developer tools.

Parsing a page with bs4 is a process of locating tags, narrowing down level by level until you reach the data you want.
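As a small illustration of locating tags step by step, the sketch below narrows down from the container `<div>` to the `<ul>`, then to each `<li>` and its `<a>`, using chained `find`/`find_all` calls, and checks that this reaches the same tags as the one-line CSS selector `.book-mulu > ul > li` used in the script. The HTML snippet is a hypothetical stand-in for what the F12 Elements panel might show, not the site's actual markup.

```python
from bs4 import BeautifulSoup

# Hypothetical markup, modelled on the selectors used in the script
html = """
<div class="book-mulu">
  <ul>
    <li><a href="/book/sanguoyanyi/1.html">第一回</a></li>
    <li><a href="/book/sanguoyanyi/2.html">第二回</a></li>
  </ul>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

# Step by step: container div -> ul -> every li -> its <a>
mulu = soup.find("div", class_="book-mulu")
ul = mulu.find("ul")
step_links = [li.a for li in ul.find_all("li")]

# The same path as one CSS selector ('>' means "direct child of")
css_links = [li.a for li in soup.select(".book-mulu > ul > li")]

print([a["href"] for a in step_links])
print(step_links == css_links)
```

The chained form mirrors how you click down through the Elements panel; the CSS form is more compact once you know the path.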

Results:
