1. Install Kafka

1) Confirm the version

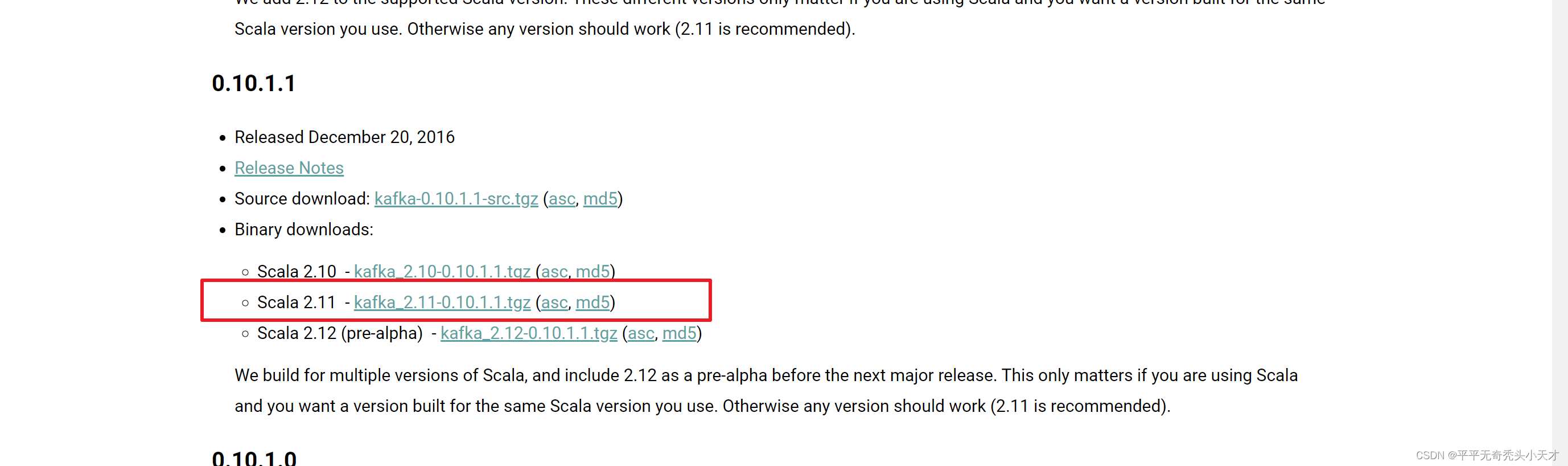

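Before downloading the Kafka package, note that the Scala version in the Kafka package name should match the Scala version you use. Check the version mapping in the screenshot above.

The author's Scala version is 2.11, so the matching package is kafka_2.11-0.10.1.1 (built for Scala 2.11, Kafka release 0.10.1.1).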
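The package name encodes both versions as kafka_<scala>-<kafka>. As a quick check before downloading, the two parts can be split with shell parameter expansion. This is only a sketch; the function names scala_version_of and kafka_version_of are made up for illustration.

```shell
#!/usr/bin/env bash
# Split a Kafka package name of the form kafka_<scala>-<kafka>[.tgz]
# into its Scala and Kafka version parts.
# Both function names are hypothetical helpers, not part of Kafka.

scala_version_of() {
  local name="${1%.tgz}"        # drop the .tgz suffix if present
  name="${name#kafka_}"         # drop the leading "kafka_"
  printf '%s\n' "${name%%-*}"   # keep everything before the first "-"
}

kafka_version_of() {
  local name="${1%.tgz}"        # drop the .tgz suffix if present
  printf '%s\n' "${name#*-}"    # keep everything after the first "-"
}

scala_version_of kafka_2.11-0.10.1.1.tgz   # prints 2.11
kafka_version_of kafka_2.11-0.10.1.1.tgz   # prints 0.10.1.1
```

If the first value differs from the output of `scala -version` on your machine, pick a different build.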

2) Download the matching version from the official website

Download the matching Kafka package from the official Apache Kafka website.

3) Upload the package, extract it, and rename the directory

[root@hadoop001 software]# tar -zxvf kafka_2.11-0.10.1.1.tgz -C /export/servers/

[root@hadoop001 software]# cd /export/servers/

[root@hadoop001 servers]# mv kafka_2.11-0.10.1.1 kafka

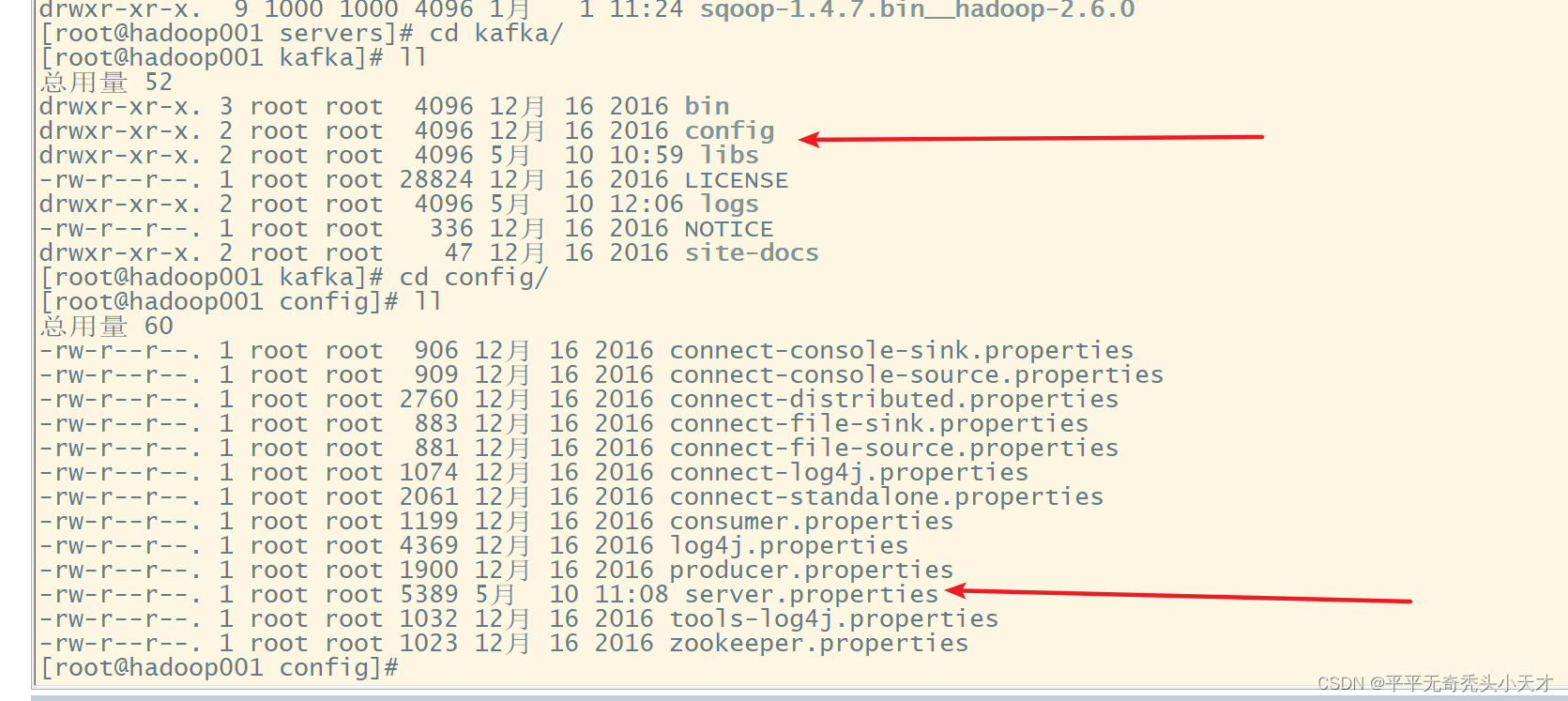

2. Edit the configuration file

1) Edit the configuration file

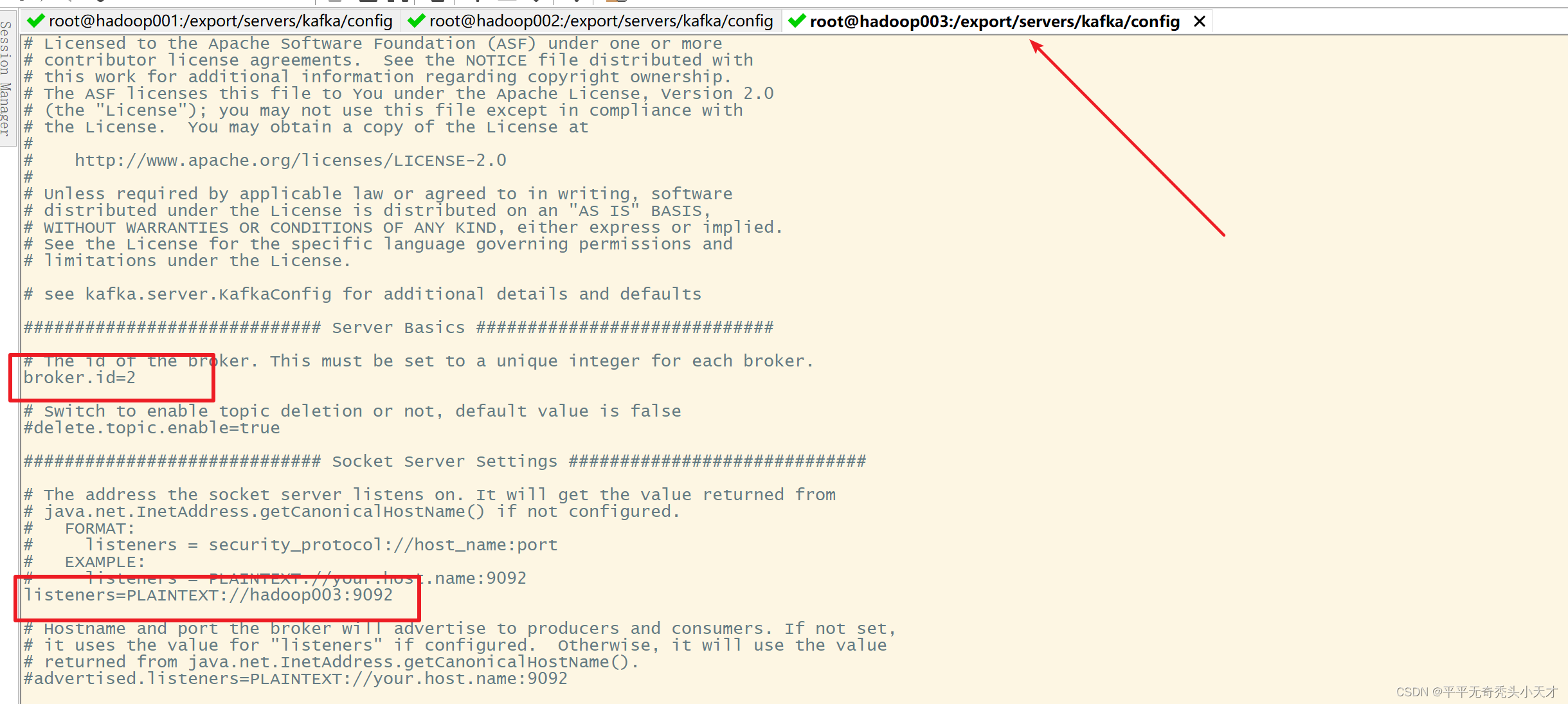

Edit the server.properties file in the config directory

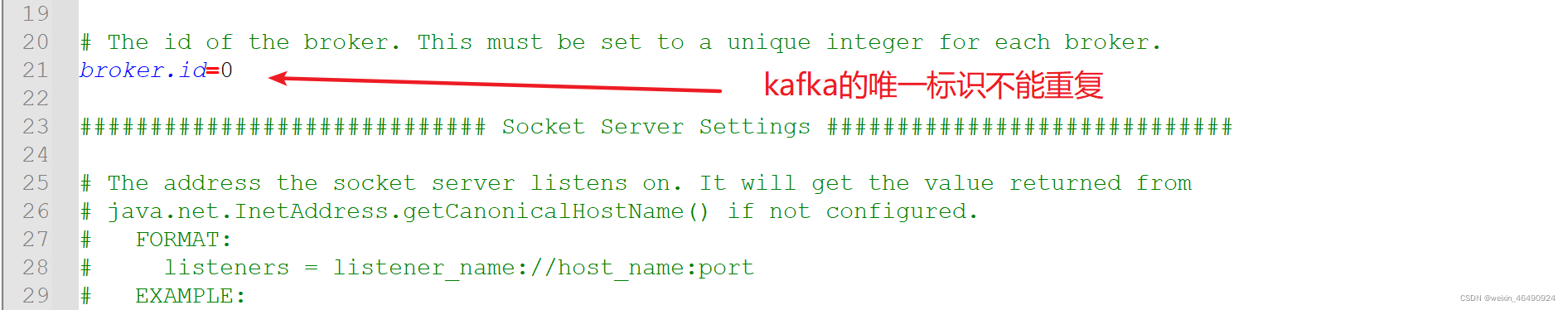

Edit the configuration file with Notepad++

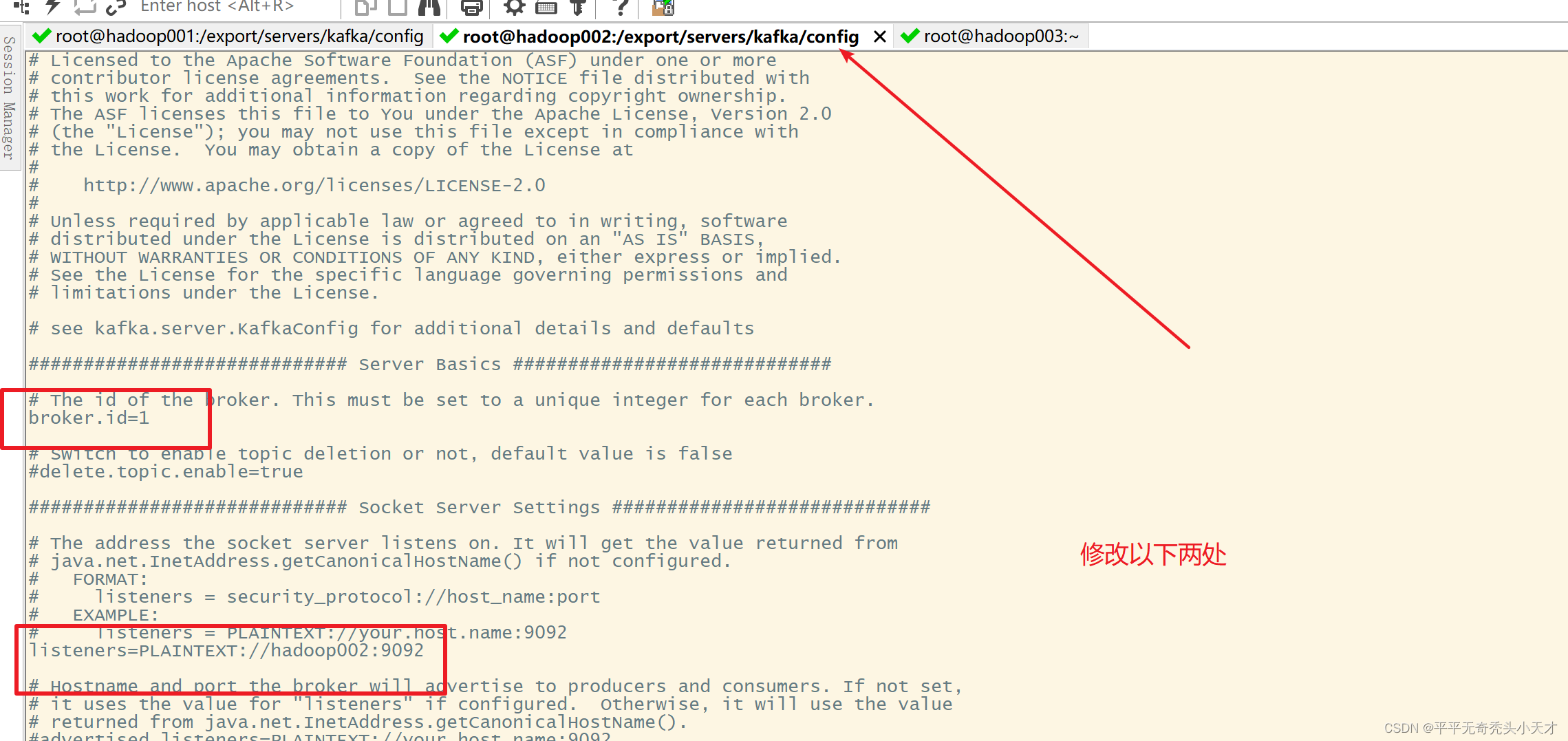

broker.id=0

Every broker in the three-node cluster needs a unique ID: 0 on hadoop001, 1 on hadoop002, and 2 on hadoop003.

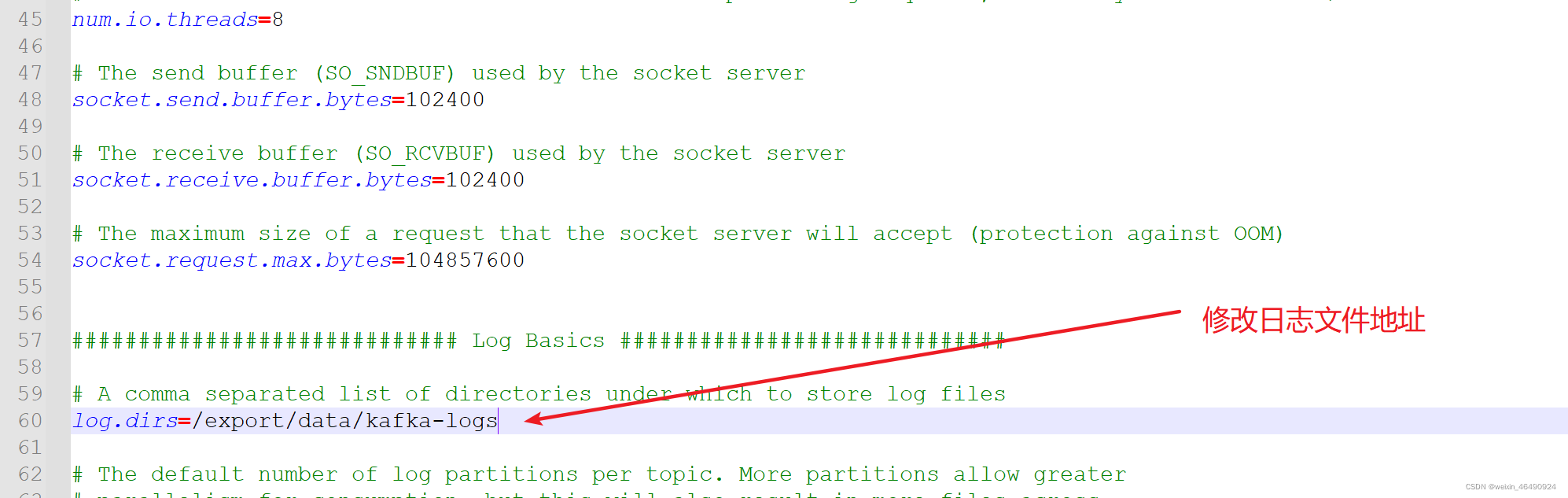

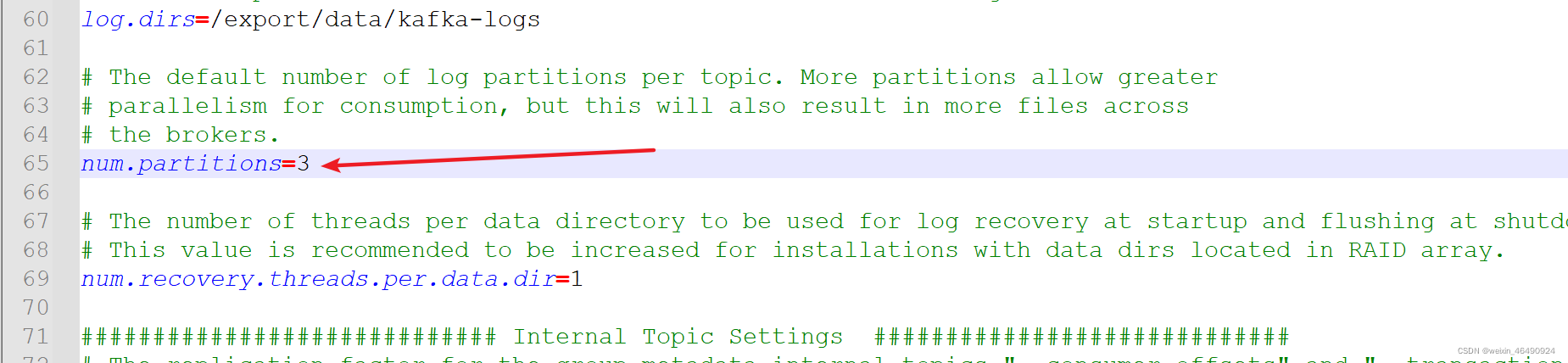
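With hostnames numbered like this, the broker ID can be derived from the hostname instead of being typed by hand on each node. The sketch below assumes hostnames end in a 1-based number (hadoop001, hadoop002, ...); broker_id_for is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Derive a 0-based broker.id from a hostname that ends in a 1-based number.
# Assumption: the naming scheme matches hadoop001 -> 0, hadoop002 -> 1, ...

broker_id_for() {
  local host="$1"
  local digits="${host##*[!0-9]}"   # trailing digits only, e.g. "001"
  [ -n "$digits" ] || return 1      # hostname does not end in a number
  # 10# forces base 10 so that leading zeros are not read as octal
  printf '%d\n' "$((10#$digits - 1))"
}

broker_id_for hadoop001   # prints 0
broker_id_for hadoop003   # prints 2
```

On a node itself you could call it as `broker_id_for "$(hostname)"`.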

Set the log directory (this is where Kafka stores its message data, not its application logs)

log.dirs=/export/data/kafka-logs

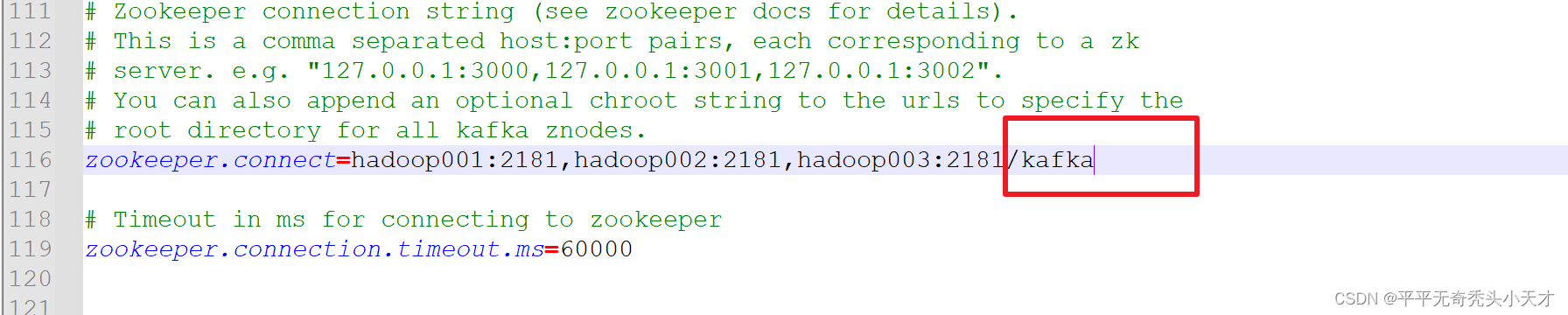

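Set the ZooKeeper connection. The trailing /kafka is a chroot path: Kafka keeps its metadata under the /kafka znode, so every later command that connects to ZooKeeper must include it.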

zookeeper.connect=hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka

zookeeper.connection.timeout.ms=60000

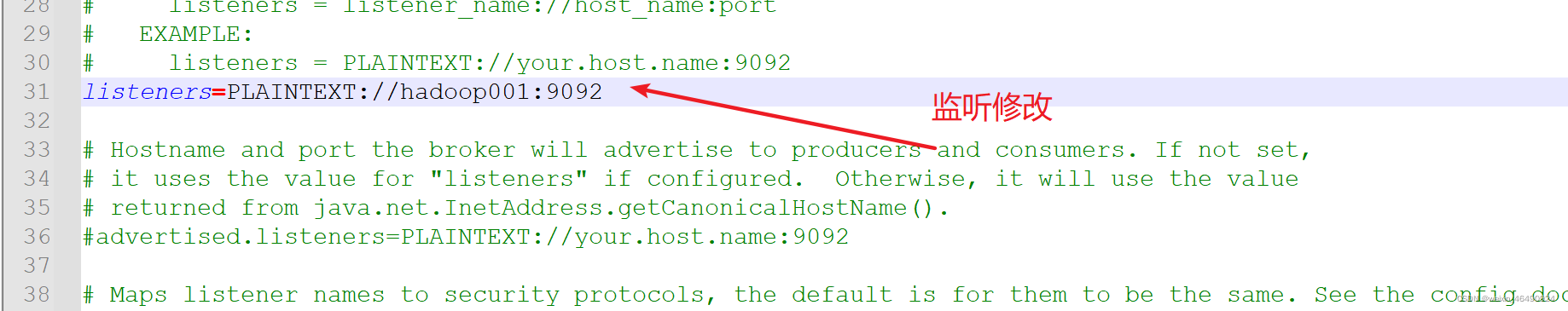

Set the listener

listeners=PLAINTEXT://hadoop001:9092

Remember to change the hostname on each node (hadoop001, hadoop002, hadoop003).

Change the default number of partitions from 1 to 3

num.partitions=3

Save the configuration file
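The same settings can also be applied from the shell instead of an editor. The following is a minimal sketch, not part of Kafka: set_prop is a hypothetical helper that replaces an existing uncommented key=value line or appends one if the key is missing. It assumes values contain no `|` or `&` characters, and the demo runs against a temporary file rather than the real server.properties.

```shell
#!/usr/bin/env bash
# set_prop FILE KEY VALUE
# Replace an existing "KEY=..." line, or append "KEY=VALUE" if none exists.
# Hypothetical helper; values must not contain "|" or "&".

set_prop() {
  local file="$1" key="$2" value="$3"
  if grep -q "^${key}=" "$file"; then
    # Write to a temp file and move it back, to avoid relying on "sed -i"
    sed "s|^${key}=.*|${key}=${value}|" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Demo on a scratch copy so nothing real is modified
conf="$(mktemp)"
printf 'broker.id=0\nnum.partitions=1\n' > "$conf"

set_prop "$conf" num.partitions 3
set_prop "$conf" log.dirs /export/data/kafka-logs
set_prop "$conf" listeners PLAINTEXT://hadoop001:9092

cat "$conf"
rm -f "$conf"
```

To use it for real, point the first argument at /export/servers/kafka/config/server.properties after making a backup.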

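3. Distribute the configured installation

[root@hadoop001 servers]# scp -r kafka/ hadoop002:$PWD

[root@hadoop001 servers]# scp -r kafka/ hadoop003:$PWD

After copying, change broker.id and listeners in config/server.properties on hadoop002 and hadoop003 as described above.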

4. Set the environment variables

1) Edit /etc/profile

[root@hadoop001 servers]# vi /etc/profile

# Kafka settings

export KAFKA_HOME=/export/servers/kafka

export PATH=$PATH:$KAFKA_HOME/bin

2) Apply the environment variables

[root@hadoop001 kafka]# source /etc/profile

[root@hadoop001 kafka]# scp /etc/profile hadoop002:/etc/profile

[root@hadoop001 kafka]# scp /etc/profile hadoop003:/etc/profile

[root@hadoop002 kafka]# source /etc/profile

[root@hadoop003 kafka]# source /etc/profile
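To confirm on each node that the profile change took effect, you can check whether the Kafka bin directory is actually on PATH. A small sketch; path_contains is a hypothetical helper, and the fallback value of KAFKA_HOME is the path used in this article.

```shell
#!/usr/bin/env bash
# path_contains DIR: succeed if DIR is one of the entries in $PATH.
# Hypothetical helper for verifying the /etc/profile change.

path_contains() {
  case ":$PATH:" in
    *":$1:"*) return 0 ;;
    *)        return 1 ;;
  esac
}

# Fall back to the article's install path if KAFKA_HOME is not set
KAFKA_HOME="${KAFKA_HOME:-/export/servers/kafka}"

if path_contains "$KAFKA_HOME/bin"; then
  echo "$KAFKA_HOME/bin is on PATH"
else
  echo "$KAFKA_HOME/bin is missing from PATH; run: source /etc/profile"
fi
```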

5. Test-start Kafka

1) Start ZooKeeper

[root@hadoop001 ~]# zkServer.sh start

[root@hadoop002 ~]# zkServer.sh start

[root@hadoop003 ~]# zkServer.sh start

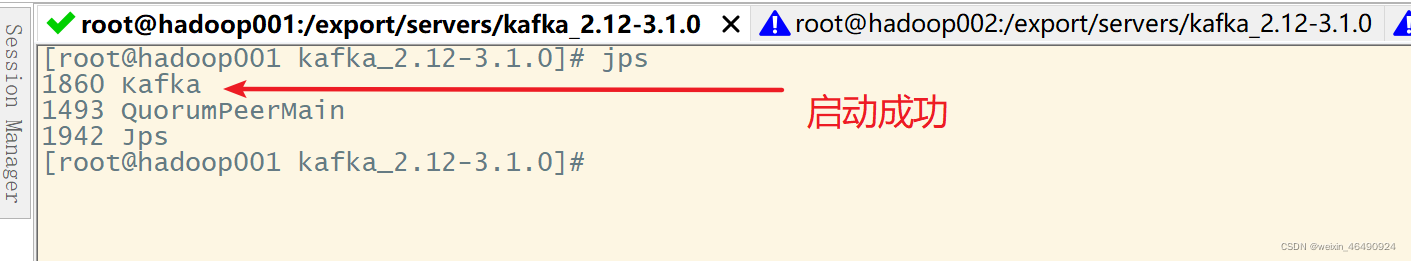

2) Start Kafka

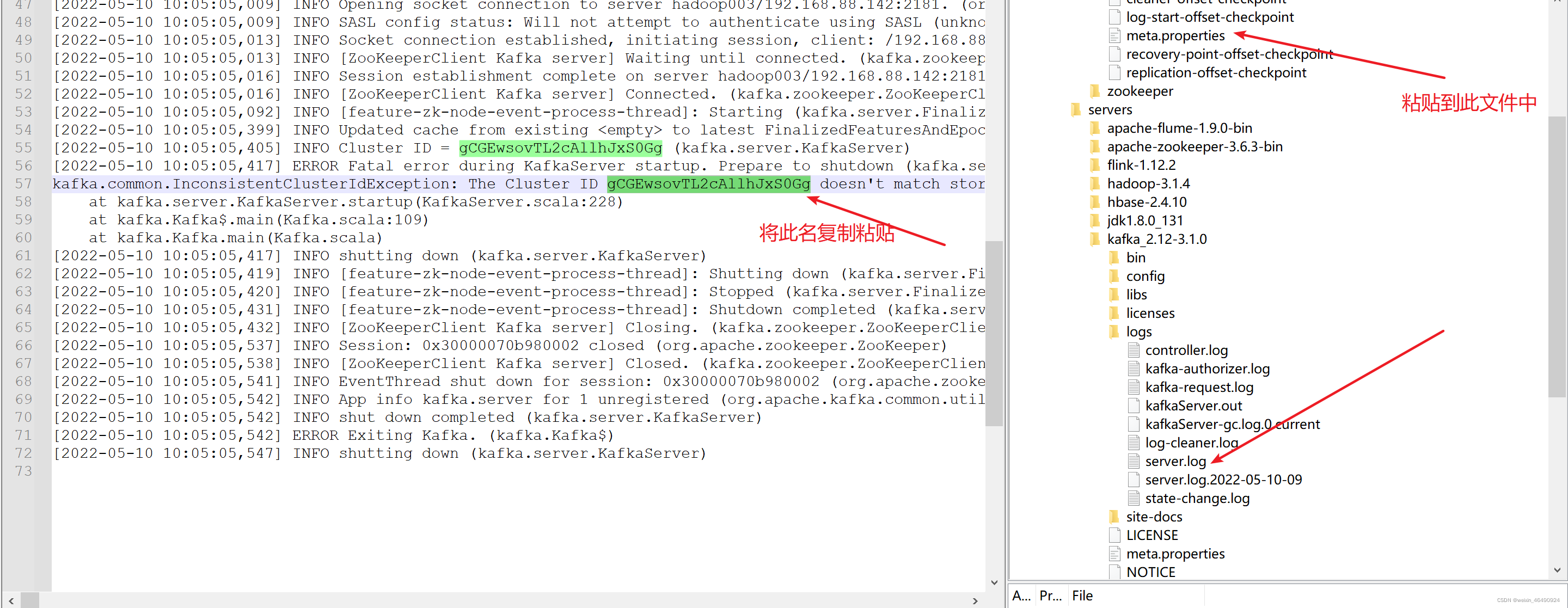

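Error 1: Kafka shut down by itself shortly after starting, because of a conflict with files already present in /export/data/kafka-logs. If this happens, check logs/server.log for the exact cause. One common cause is a meta.properties file left over from an earlier run whose broker.id differs from the one in server.properties.

Start the broker on each node:

[root@hadoop001 kafka]# bin/kafka-server-start.sh -daemon config/server.properties

[root@hadoop002 kafka]# bin/kafka-server-start.sh -daemon config/server.properties

[root@hadoop003 kafka]# bin/kafka-server-start.sh -daemon config/server.properties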
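The article does not say exactly which file caused the conflict in Error 1. One thing worth ruling out, as an assumption on my part, is a broker.id mismatch between server.properties and the meta.properties file that Kafka writes into the log directory. The helpers prop_value and broker_id_matches below are hypothetical names for a quick comparison.

```shell
#!/usr/bin/env bash
# Compare broker.id in server.properties with the one recorded in
# <log.dirs>/meta.properties. Assumption: a mismatch here is the conflict;
# the original article does not confirm the exact cause.

prop_value() {
  # Print the value of the first uncommented "key=value" line.
  # $2 is used as a regular expression, so escape dots in the key.
  sed -n "s/^$2=//p" "$1" | head -n 1
}

broker_id_matches() {
  local conf="$1" meta="$2"
  [ -f "$meta" ] || return 0   # no meta.properties yet, nothing to conflict with
  [ "$(prop_value "$conf" 'broker\.id')" = "$(prop_value "$meta" 'broker\.id')" ]
}

# Paths follow the layout used in this article
broker_id_matches config/server.properties /export/data/kafka-logs/meta.properties \
  || echo "broker.id in server.properties differs from meta.properties"
```

Run it from /export/servers/kafka on each node before starting the broker.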

3) Stop Kafka

The stop script takes no arguments:

[root@hadoop001 kafka]# bin/kafka-server-stop.sh

[root@hadoop002 kafka]# bin/kafka-server-stop.sh

[root@hadoop003 kafka]# bin/kafka-server-stop.sh

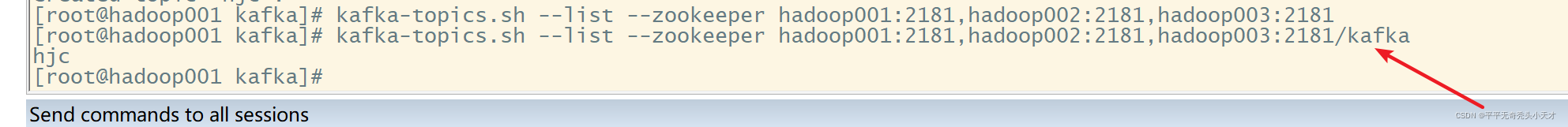

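4) List topics

[root@hadoop001 kafka]# kafka-topics.sh --list --zookeeper hadoop001:2181,hadoop002:2181,hadoop003:2181

Error 2: the command above times out because its ZooKeeper address does not match the one in the configuration file, which ends with the /kafka chroot path:

zookeeper.connect=hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka

Add /kafka to the address and the command works:

[root@hadoop001 kafka]# kafka-topics.sh --list --zookeeper hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka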

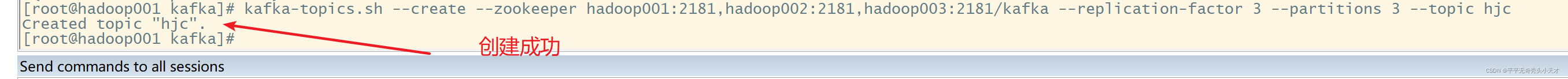
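Error 2 comes down to how the ZooKeeper connection string is structured: a comma-separated host list, optionally followed by a single chroot path that applies to all hosts. The sketch below splits a connection string into those two parts so you can compare what a command uses against what server.properties says. The function names zk_hosts_of and zk_chroot_of are hypothetical.

```shell
#!/usr/bin/env bash
# Split a ZooKeeper connection string into its host list and chroot path.
# The chroot, when present, is written once at the end and applies to
# every host in the list.

zk_hosts_of() {
  printf '%s\n' "${1%%/*}"               # everything before the first "/"
}

zk_chroot_of() {
  case "$1" in
    */*) printf '/%s\n' "${1#*/}" ;;     # everything after the first "/"
    *)   printf '/\n' ;;                 # no chroot: the ZooKeeper root
  esac
}

zk="hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka"
zk_hosts_of "$zk"    # prints hadoop001:2181,hadoop002:2181,hadoop003:2181
zk_chroot_of "$zk"   # prints /kafka
```

A command that passes only the host list looks under the ZooKeeper root, where this cluster has registered nothing.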

5) Create a topic

[root@hadoop001 kafka]# kafka-topics.sh --create --zookeeper hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka --replication-factor 3 --partitions 3 --topic spark_kafka_test

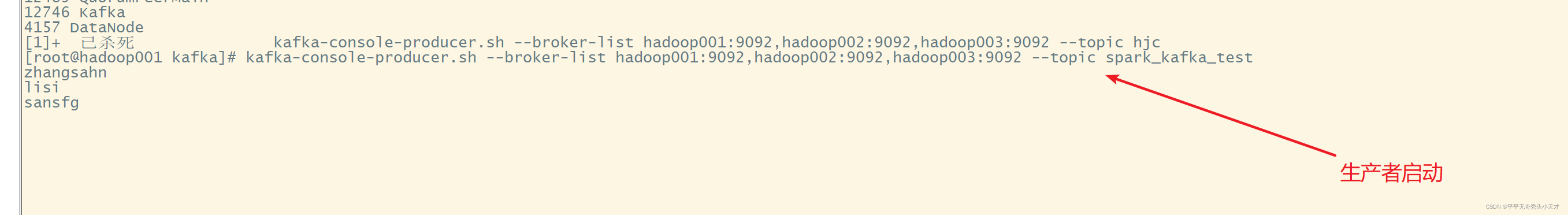

6) Write data

Write data to the Kafka topic with the console producer

[root@hadoop001 kafka]# kafka-console-producer.sh --broker-list hadoop001:9092,hadoop002:9092,hadoop003:9092 --topic spark_kafka_test

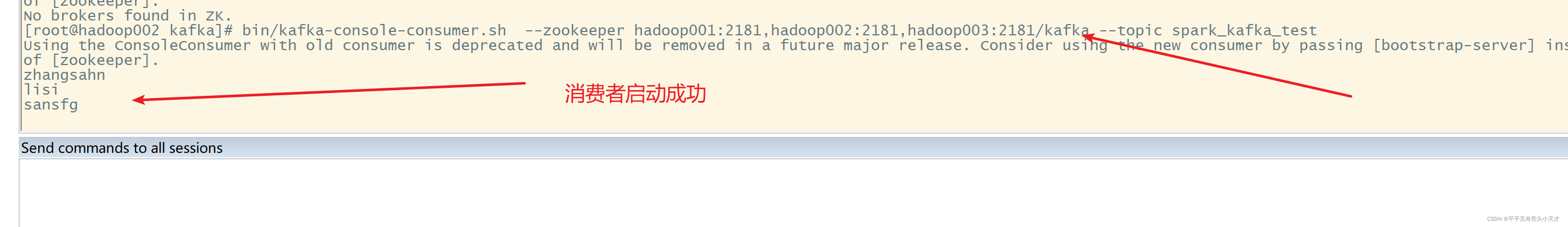

Read the data back with a console consumer on hadoop002 and hadoop003:

[root@hadoop002 kafka]# bin/kafka-console-consumer.sh --zookeeper hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka --topic spark_kafka_test

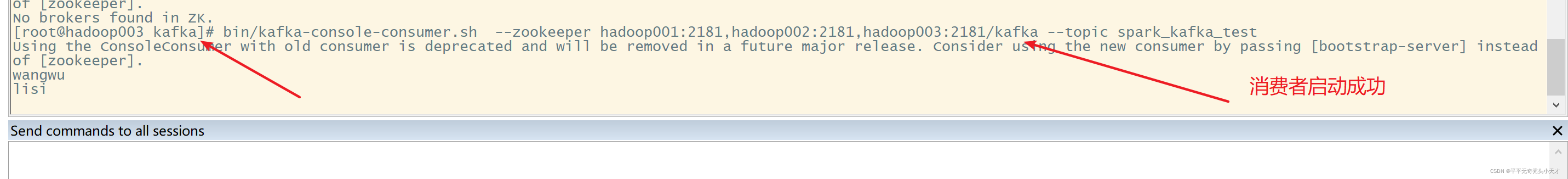

[root@hadoop003 kafka]# bin/kafka-console-consumer.sh --zookeeper hadoop001:2181,hadoop002:2181,hadoop003:2181/kafka --topic spark_kafka_test
