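Scenario

Ambari reports that a disk is not mounted.

Running df -h on the node does not list /mnt/data_1.

cd /mnt/data_1/ works, and the directories are all still there.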

mount -a reports that the directory does not exist.
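The symptoms above (the directory is reachable with cd but missing from df -h) suggest that /mnt/data_1 is currently a plain directory on the root filesystem rather than a mount point. A minimal sketch for checking this from a script, assuming a Linux node where /proc/self/mounts is readable. The helper name is_mounted is hypothetical and not part of Ambari:

```shell
# is_mounted DIR [MOUNTS_FILE]
# Succeeds (exit status 0) if DIR is listed as a mount point in MOUNTS_FILE.
# MOUNTS_FILE defaults to /proc/self/mounts. is_mounted is a hypothetical helper.
is_mounted() {
    dir=${1%/}                 # drop one trailing slash: /mnt/data_1/ -> /mnt/data_1
    [ -n "$dir" ] || dir=/     # "/" itself becomes empty above, so restore it
    mounts=${2:-/proc/self/mounts}
    # Field 2 of every line in the mounts file is the mount point.
    awk -v d="$dir" '$2 == d { found = 1 } END { exit !found }' "$mounts"
}
```

For example, `is_mounted /mnt/data_1 || echo "not mounted"` would print the message on a node in the state described here.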

Stop all services from the Ambari web UI, then repartition the disk.

#Automated operations

rm -rf /mnt/data_1

systemctl enable lvm2-lvmetad.service

systemctl enable lvm2-lvmetad.socket

systemctl start lvm2-lvmetad.service

systemctl start lvm2-lvmetad.socket

fdisk /dev/vdb

n

p

1

<Enter>

<Enter>

t

8e

wq

pvcreate /dev/vdb1

vgcreate vgdata_1 /dev/vdb1

lvcreate -l 100%FREE -n lvdata_1 vgdata_1

mkfs.ext4 /dev/vgdata_1/lvdata_1

mkdir /mnt/data_1

mount /dev/vgdata_1/lvdata_1 /mnt/data_1

echo /dev/vgdata_1/lvdata_1 /mnt/data_1 ext4 defaults 0 0 >> /etc/fstab
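The echo line above appends unconditionally, so running the procedure twice would leave a duplicate entry in /etc/fstab. A minimal sketch of an idempotent variant, assuming the same ext4 and defaults options as above. The function name add_fstab_entry is hypothetical, and it takes the fstab path as an argument so it can be tried on a copy first:

```shell
# add_fstab_entry FSTAB_FILE DEVICE MOUNT_POINT
# Appends "DEVICE MOUNT_POINT ext4 defaults 0 0" unless a non-comment line
# for MOUNT_POINT already exists. add_fstab_entry is a hypothetical helper.
add_fstab_entry() {
    fstab=$1
    dev=$2
    mnt=$3
    # Field 2 of an fstab line is the mount point; skip comment lines.
    if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { found = 1 } END { exit !found }' "$fstab"; then
        echo "entry for $mnt already present in $fstab" >&2
        return 0
    fi
    printf '%s %s ext4 defaults 0 0\n' "$dev" "$mnt" >> "$fstab"
}
```

On the node this would be called as `add_fstab_entry /etc/fstab /dev/vgdata_1/lvdata_1 /mnt/data_1`.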

df -h

mkdir -p /mnt/data_1/data

mkdir -p /mnt/data_1/logs

ln -s /mnt/data_1/logs /cy/logs

ln -s /mnt/data_1/data /cy/data

chown -R admin:admin /mnt/

chown -R admin:admin /cy/

ll /cy

Start the services.

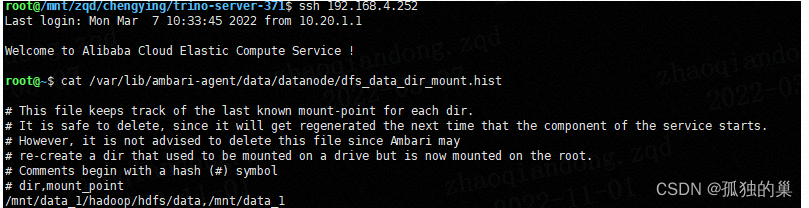

The DataNode reports an error:

Directory /mnt/data_1/hadoop/hdfs/data became unmounted from / . Current mount point: /mnt/data_1 . Please ensure that mounts are healthy. If the mount change was intentional, you can update the contents of /var/lib/ambari-agent/data/datanode/dfs_data_dir_mount.hist.

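Inspect the contents of that file.

Compare it with the file on a healthy node.

After editing the file to match, the DataNode starts normally.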
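The edit itself can be scripted. This is a minimal sketch, assuming the .hist file holds one `dir,mount_point` pair per line plus `#` comment lines. That layout is an assumption, since the file's contents are not shown here, so check it against a healthy node before use. The function name set_hist_mount is hypothetical:

```shell
# set_hist_mount HIST_FILE DIR MOUNT_POINT
# Rewrites the recorded mount point of DIR in HIST_FILE, leaving comment
# lines and other entries untouched. Assumes "dir,mount_point" lines.
# set_hist_mount is a hypothetical helper.
set_hist_mount() {
    hist=$1
    dir=$2
    mnt=$3
    tmp=$(mktemp) || return 1
    if awk -F, -v OFS=, -v d="$dir" -v m="$mnt" '
        /^#/    { print; next }   # keep comment lines as they are
        $1 == d { $2 = m }        # update the matching entry
                { print }
    ' "$hist" > "$tmp"; then
        # Overwrite in place so the file keeps its owner and permissions.
        cat "$tmp" > "$hist"
        rc=$?
    else
        rc=1
    fi
    rm -f "$tmp"
    return $rc
}
```

For the error above, the call would be `set_hist_mount /var/lib/ambari-agent/data/datanode/dfs_data_dir_mount.hist /mnt/data_1/hadoop/hdfs/data /mnt/data_1`, ideally after backing up the original file.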

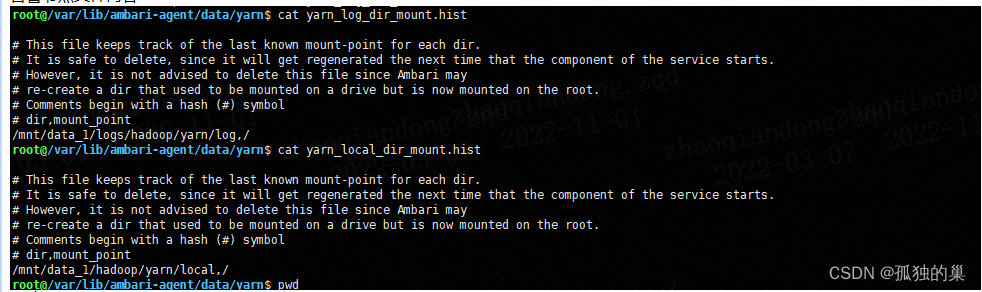

After startup completes, YARN keeps raising this alert:

1/1 local-dirs have errors: [ /mnt/data_1/hadoop/yarn/local : Cannot create directory: /mnt/data_1/hadoop/yarn/local ] 1/1 log-dirs have errors: [ /mnt/data_1/logs/hadoop/yarn/log : Cannot create directory: /mnt/data_1/logs/hadoop/yarn/log ]

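This brought the DataNode problem to mind.

This directory on a healthy node:

File contents on the alerting node:

After editing the file, the alert cleared.

There are more data directories than mounted disks. The files that record where each directory is mounted are under: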

/var/lib/ambari-agent/data/
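To find every stale record in one pass, the directory can be scanned for entries still recorded against the root filesystem. This is a minimal sketch, assuming the history files are named `*_mount.hist` (as dfs_data_dir_mount.hist is) and use `dir,mount_point` lines. Both the naming pattern for other services and the function name list_root_entries are assumptions:

```shell
# list_root_entries [BASE_DIR]
# Prints "FILE: DIR" for every entry whose recorded mount point is "/".
# BASE_DIR defaults to /var/lib/ambari-agent/data.
# list_root_entries is a hypothetical helper.
list_root_entries() {
    base=${1:-/var/lib/ambari-agent/data}
    find "$base" -type f -name '*_mount.hist' -exec awk -F, '
        # Skip comments; report entries recorded as mounted on "/".
        !/^#/ && NF >= 2 && $2 == "/" { print FILENAME ": " $1 }
    ' {} +
}
```

Any directory listed here that should live on /mnt/data_1 is a candidate for the same fix applied to the DataNode file.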
