案例来自: 51doit

需求

给定JSON格式的订单数据和分类数据,求出每个商品分类的成交金额,写入到mysql中

订单数据

{"oid":"o123", "cid": 1, "money": 600.0, "longitude":116.397128,"latitude":39.916527}

"oid":"o112", "cid": 3, "money": 200.0, "longitude":118.396128,"latitude":35.916527}

{"oid":"o124", "cid": 2, "money": 200.0, "longitude":117.397128,"latitude":38.916527}

{"oid":"o125", "cid": 3, "money": 100.0, "longitude":118.397128,"latitude":35.916527}

{"oid":"o127", "cid": 1, "money": 100.0, "longitude":116.395128,"latitude":39.916527}

{"oid":"o128", "cid": 2, "money": 200.0, "longitude":117.396128,"latitude":38.916527}

{"oid":"o129", "cid": 3, "money": 300.0, "longitude":115.398128,"latitude":35.916527}

{"oid":"o130", "cid": 2, "money": 100.0, "longitude":116.397128,"latitude":39.916527}

{"oid":"o131", "cid": 1, "money": 100.0, "longitude":117.394128,"latitude":38.916527}

{"oid":"o132", "cid": 3, "money": 200.0, "longitude":118.396128,"latitude":35.916527}

分类数据

1,家具

2,手机

3,服装

输出到Mysql

maven:

<properties>

<spark.version>2.3.4</spark.version>

<fastjson.version>1.2.61</fastjson.version>

<mysql.version>5.1.39</mysql.version>

<scala.version>2.11.12</scala.version>

</properties>

<dependencies>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>${mysql.version}</version>

</dependency>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>${fastjson.version}</version>

</dependency>

</dependencies>

代码:

import java.sql.{Connection, Date, DriverManager, PreparedStatement, SQLException}

import com.alibaba.fastjson.{JSON, JSONException}

import org.apache.log4j.{Level, Logger}

import org.apache.spark.rdd.RDD

import org.apache.spark.{SparkConf, SparkContext}

/**

* @date :2021/3/31 1:23

* @author :xiaotao

* @description :给定JSON格式的订单数据,求出每个商品分类的成交金额

*/

object OrderCount {

def main(args: Array[String]): Unit = {

Logger.getLogger("org").setLevel(Level.WARN)

val isLocal = true

//创建SparkConf,然后创建SparkContext

val conf = new SparkConf().setAppName(this.getClass.getSimpleName)

if (isLocal) {

conf.setMaster("local[*]")

}

val sc = new SparkContext(conf)

//创建RDD

val lines: RDD[String] = sc.textFile("D:\\data\\order")

//解析JSON

val beanRDD: RDD[CaseOrderBean] = lines.map(line => {

var bean: CaseOrderBean = null

try {

bean = JSON.parseObject(line, classOf[CaseOrderBean])

} catch {

case e: JSONException => {

//println(line.toString())//单独处理有问题的数据,可以记录日志后存储起来

}

}

bean

})

//过滤有问题的数据

val filtered: RDD[CaseOrderBean] = beanRDD.filter(_ != null)

//将数据转成元组,分组聚合

val cidAndMoney = filtered.map(bean => {

val cid = bean.cid

val money = bean.money

(cid, money)

})

//println(cidAndMoney.collect().toBuffer)

//分组聚合的RDD

val reduced: RDD[(Int, Double)] = cidAndMoney.reduceByKey(_ + _)

//在创建一个RDD

val categoryLines: RDD[String] = sc.textFile("D:\\data\\category")

//分类RDD

val cidAndCName: RDD[(Int, String)] = categoryLines.map(line => {

val fields = line.split(",")

val cid = fields(0).toInt

val cname = fields(1)

(cid, cname)

})

//println(cidAndCName.collect().toBuffer)

val joined: RDD[(Int, (Double, String))] = reduced.join(cidAndCName)

//将join后的数据在进行处理

val result: RDD[(Int, String, Double)] = joined.map(t => (t._1, t._2._2, t._2._1))

//println(result.collect().toBuffer)

//正确的姿势

//将数据写入到MySQL

result.foreachPartition(dataToMySQL)

sc.stop()

}

def dataToMySQL(it: Iterator[(Int, String, Double)]): Unit = {

//创建MySQL连接

var conn: Connection = null

var statement: PreparedStatement = null

try {

conn = DriverManager.getConnection(

"jdbc:mysql://localhost:3306/wxt?characterEncoding=UTF-8&useSSL=false",

"root",

"123456")

statement = conn.prepareStatement("INSERT INTO t_result values (?, ?, ?, ?)")

//将迭代器中的数据写入到MySQL

it.foreach(t => {

statement.setInt(1, t._1)

statement.setString(2, t._2)

statement.setDouble(3, t._3)

statement.setDate(4, new Date(System.currentTimeMillis()))

//执行

//statement.executeUpdate()

//批量写入

statement.addBatch()

})

statement.executeBatch()

} catch {

case e: SQLException => {

//单独处理有问题的数据

}

} finally {

//释放MySQL的资源

if (statement != null) {

statement.close()

}

if (conn != null) {

conn.close()

}

}

}

}

/**

* @date :2021/3/31 1:34

* @author :xiaotao

* @description :bean

*/

case class CaseOrderBean(

cid: Int,

money: Double

)

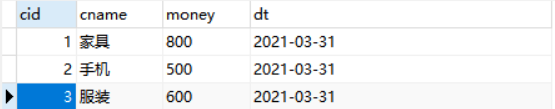

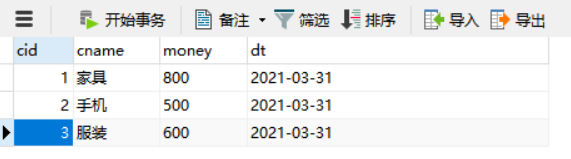

结果:

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?