Project scenario:

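I had previously crawled photos from 彼岸图网 (pic.netbian.com). Some time later the site had added many new images. Getting them meant crawling every page of the site again from the beginning, which was very inefficient.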

Solution:

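Deduplicate by comparison: record what has already been crawled and compare new links against that record, so only new images are fetched. This greatly improves the crawler's efficiency.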
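The comparison idea can be sketched on its own, without any network access. This is a minimal illustration, not the author's code: `filter_new` is a hypothetical helper, the links are made up, and it keeps the already-crawled URLs in a Python `set`, whereas the code in this post uses a list.

```python
def filter_new(candidates, seen):
    """Return the candidates not yet in `seen`, in order, and record them in `seen`."""
    new = []
    for url in candidates:
        if url in seen:
            continue  # crawled on an earlier run: skip the download
        seen.add(url)  # remember it so later runs (and duplicates) are skipped
        new.append(url)
    return new


seen = {"/a.html", "/b.html"}
print(filter_new(["/a.html", "/c.html", "/b.html", "/d.html"], seen))
# ['/c.html', '/d.html']
```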

Source code (original version)

```python
import os

import requests
from bs4 import BeautifulSoup


def GetPicture(lists):
    # lists is the image's src attribute (a path relative to the site root)
    url = 'http://pic.netbian.com/' + lists
    root = "./"
    path = root + url.split('/')[-1]  # save under the image's own file name
    try:
        os.makedirs(root, exist_ok=True)
        if not os.path.exists(path):
            r = requests.get(url, timeout=30)
            r.raise_for_status()  # do not save an error page as an image
            with open(path, 'wb') as f:
                f.write(r.content)  # the with block closes the file itself
            print("File saved")
        else:
            print("File already exists")
    except (requests.RequestException, OSError):
        print("Download failed")


def FirstPage():
    r = requests.get("http://pic.netbian.com/4kmeinv/index.html", timeout=30)
    r.encoding = r.apparent_encoding
    ls = Gethtml(r.text)
    for i in ls:
        # i is a detail-page link relative to the site root
        r = requests.get("http://pic.netbian.com" + i, timeout=30)
        r.encoding = r.apparent_encoding
        soup = BeautifulSoup(r.text, "html.parser")
        for link in soup.find_all('img'):
            s = link.get('src')
            print(s)
            GetPicture(s)
            break  # only the first <img> on the detail page is downloaded


def untilLast():
    for a in range(2, 174):  # page count hard-coded by hand
        r = requests.get("http://pic.netbian.com/4kmeinv/index_" + str(a) + ".html", timeout=30)
        r.encoding = r.apparent_encoding
        ls = Gethtml(r.text)
        for i in ls:
            r = requests.get("http://pic.netbian.com" + i, timeout=30)
            r.encoding = r.apparent_encoding
            soup = BeautifulSoup(r.text, "html.parser")
            for link in soup.find_all('img'):
                GetPicture(link.get('src'))
                break


def Gethtml(html):
    # Collect the detail-page links inside <ul class="clearfix"> on a listing page
    ls = []
    soup = BeautifulSoup(html, "html.parser")
    for link in soup.find("ul", class_="clearfix"):
        try:
            for i in link.find_all('a'):
                ls.append(i.get('href'))
        except AttributeError:
            # Say goodbye to NavigableString: text nodes between tags have no find_all()
            pass
    return ls


def main():
    FirstPage()
    untilLast()


if __name__ == "__main__":
    main()
```

Improved version

```python
import os
import re

import requests
from bs4 import BeautifulSoup


def GetPicture(lists):
    # lists is the image's src attribute (a path relative to the site root)
    url = 'http://pic.netbian.com/' + lists
    root = "./"
    path = root + url.split('/')[-1]  # save under the image's own file name
    try:
        os.makedirs(root, exist_ok=True)
        if not os.path.exists(path):
            r = requests.get(url, timeout=30)
            r.raise_for_status()  # do not save an error page as an image
            with open(path, 'wb') as f:
                f.write(r.content)  # the with block closes the file itself
            print("File saved")
        else:
            print("File already exists")
    except (requests.RequestException, OSError):
        print("Download failed")


def FirstPage():
    print("Page 1")
    r = requests.get("http://pic.netbian.com/4kmeinv/index.html", timeout=30)
    r.encoding = r.apparent_encoding
    ls = Gethtml(r.text)
    with open("suoyin.txt", "r") as f:
        cun = [line.rstrip("\n") for line in f]
    for i in ls:
        if i in cun:
            continue
        with open("suoyin.txt", "a") as idx:
            idx.write(i + "\n")
        r = requests.get("http://pic.netbian.com" + i, timeout=30)
        r.encoding = r.apparent_encoding
        soup = BeautifulSoup(r.text, "html.parser")
        for link in soup.find_all('img'):
            GetPicture(link.get('src'))
            break  # only the first <img> on the detail page is downloaded
    untilLast()


def untilLast():
    for a in range(2, lastpage() + 1):
        print("Page " + str(a))
        r = requests.get("http://pic.netbian.com/4kmeinv/index_" + str(a) + ".html", timeout=30)
        r.encoding = r.apparent_encoding
        ls = Gethtml(r.text)
        for i in ls:
            # Re-read the index each time: earlier iterations may have added URLs
            with open("suoyin.txt", "r") as f:
                cun = [line.rstrip("\n") for line in f]
            if number > len(cun):  # the site still has unrecorded images
                if i in cun:
                    continue
                with open("suoyin.txt", "a") as idx:
                    idx.write(i + "\n")
                r = requests.get("http://pic.netbian.com" + i, timeout=30)
                r.encoding = r.apparent_encoding
                soup = BeautifulSoup(r.text, "html.parser")
                for link in soup.find_all('img'):
                    GetPicture(link.get('src'))
                    break
            else:  # local count has caught up with the site: stop
                return


def Gethtml(html):
    # Collect the detail-page links inside <ul class="clearfix"> on a listing page
    ls = []
    soup = BeautifulSoup(html, "html.parser")
    for link in soup.find("ul", class_="clearfix"):
        try:
            for i in link.find_all('a'):
                ls.append(i.get('href'))
        except AttributeError:
            # Say goodbye to NavigableString: text nodes between tags have no find_all()
            pass
    return ls


def lastpage():
    r = requests.get("https://pic.netbian.com/4kmeinv/index.html", timeout=30)
    r.encoding = r.apparent_encoding
    # Take the largest N among all index_N.html links, so the result does not
    # depend on the last page number having exactly three digits
    pages = re.findall(r'index_(\d+)\.html', r.text)
    return max(int(p) for p in pages)


def zengliang():
    s = lastpage()
    r = requests.get("http://pic.netbian.com/4kmeinv/index_" + str(s) + ".html", timeout=30)
    r.encoding = r.apparent_encoding
    ls = Gethtml(r.text)
    num = (s - 1) * 20 + len(ls)  # assumes 20 images on every page but the last
    return num


def main():
    global number
    number = zengliang()
    open("suoyin.txt", "a").close()  # make sure the index exists (created empty on the first run)
    with open("suoyin.txt", "r") as f:
        cun = [line.rstrip("\n") for line in f]
    if number > len(cun):
        print("New images this run: " + str(number - len(cun)))
        FirstPage()
    else:
        print("The site has not been updated")


if __name__ == "__main__":
    main()
```

Now let's go through the parts that changed.

```python
def lastpage():
    r = requests.get("https://pic.netbian.com/4kmeinv/index.html", timeout=30)
    r.encoding = r.apparent_encoding
    # Take the largest N among all index_N.html links, so the result does not
    # depend on the last page number having exactly three digits
    pages = re.findall(r'index_(\d+)\.html', r.text)
    return max(int(p) for p in pages)
```

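This function finds the number of the last listing page. Its main purpose is to make the code more efficient to run: previously this value (the upper bound in `range(2,174)`) had to be updated by hand.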
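The page-number extraction can be tried offline on a hand-written HTML snippet. This sketch assumes the pagination links contain `index_N.html`, which is what the regex in `lastpage` relies on; the sample HTML and the helper name `last_page_number` are made up for illustration. Taking the maximum over all matches avoids depending on the last page having exactly three digits, which the original pattern `index_\d{3}.html` did.

```python
import re


def last_page_number(html):
    """Return the largest N among the index_N.html links in `html`."""
    pages = re.findall(r'index_(\d+)\.html', html)
    return max(int(p) for p in pages)


sample = '''
<div class="page">
  <a href="/4kmeinv/index_2.html">2</a>
  <a href="/4kmeinv/index_3.html">3</a>
  <a href="/4kmeinv/index_174.html">174</a>
</div>
'''
print(last_page_number(sample))  # 174

# The three-digit pattern finds nothing once the site has fewer than 100 pages
print(re.search(r'index_\d{3}.html', '<a href="index_99.html">'))  # None
```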

```python
def zengliang():
    s = lastpage()
    r = requests.get("http://pic.netbian.com/4kmeinv/index_" + str(s) + ".html", timeout=30)
    r.encoding = r.apparent_encoding
    ls = Gethtml(r.text)
    num = (s - 1) * 20 + len(ls)  # assumes 20 images on every page but the last
    return num
```

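This function computes how many images the target site has. That number is then compared with the number of images recorded locally (the lines in suoyin.txt); if the site has more, it has been updated and the crawl runs.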
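As a worked example of the estimate `num = (s - 1) * 20 + len(ls)`: it assumes every page except the last holds exactly 20 images, which is built into the code rather than guaranteed by the site. With hypothetical numbers, 174 pages whose last page lists 13 images give 173 × 20 + 13 = 3473 images. `estimate_total` is an illustrative name, not part of the post's code.

```python
def estimate_total(last_page, items_on_last_page, per_page=20):
    """Full pages before the last one times per_page, plus the items on the last page."""
    return (last_page - 1) * per_page + items_on_last_page


total = estimate_total(174, 13)
print(total)  # 3473

local_count = 3470  # hypothetical number of lines in suoyin.txt
print(total - local_count)  # 3 new images to fetch
```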

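```python
def main():
    global number
    number = zengliang()
    open("suoyin.txt", "a").close()  # make sure the index exists (created empty on the first run)
    with open("suoyin.txt", "r") as f:
        cun = [line.rstrip("\n") for line in f]
    if number > len(cun):
        print("New images this run: " + str(number - len(cun)))
        FirstPage()
    else:
        print("The site has not been updated")
```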

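`number` holds the target site's image count. Other functions need it, so it is made a global variable. The core of this incremental crawler is the next few lines.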

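```python
open("suoyin.txt", "a").close()  # make sure the index exists (created empty on the first run)
with open("suoyin.txt", "r") as f:
    cun = [line.rstrip("\n") for line in f]
if number > len(cun):
    print("New images this run: " + str(number - len(cun)))
    FirstPage()
else:
    print("The site has not been updated")
```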

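A TXT file (suoyin.txt) is used here to store part of each URL: the relative link to each image's detail page. Each image is reached through its own URL, so each stored URL stands for one image.

The list `cun` holds all stored URLs, one per element. If `number > len(cun)`, the target site has new images and `FirstPage` is called; otherwise the program prints that the site has not been updated and ends.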
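The same bookkeeping can be sketched as two small helpers. This is an illustration under assumptions that differ from the post: the index file may not exist yet on the first run, and the entries are loaded into a `set` so each membership test is a hash lookup instead of a scan of the list. `load_index` and `append_index` are hypothetical names; the demo uses the post's file name `suoyin.txt` but writes it into a temporary directory.

```python
import os
import tempfile


def load_index(path):
    """Return the set of URLs stored one per line in `path` (empty if the file is missing)."""
    if not os.path.exists(path):
        return set()
    with open(path, "r", encoding="utf-8") as f:
        return {line.rstrip("\n") for line in f if line.strip()}


def append_index(path, url):
    """Record one crawled URL at the end of the index file."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(url + "\n")


with tempfile.TemporaryDirectory() as d:
    path = os.path.join(d, "suoyin.txt")
    seen = load_index(path)  # first run: nothing recorded yet
    site_total = 3           # hypothetical value of `number`
    if site_total > len(seen):
        for url in ["/p1.html", "/p2.html"]:
            if url not in seen:
                append_index(path, url)
                seen.add(url)
    print(sorted(load_index(path)))  # ['/p1.html', '/p2.html']
```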

```python
def FirstPage():
    print("Page 1")
    r = requests.get("http://pic.netbian.com/4kmeinv/index.html", timeout=30)
    r.encoding = r.apparent_encoding
    ls = Gethtml(r.text)
    with open("suoyin.txt", "r") as f:
        cun = [line.rstrip("\n") for line in f]
    for i in ls:
        if i in cun:  # the URL is already in the list cun
            continue  # skip to the next URL
        # Otherwise write the URL to the TXT file and download the image
        with open("suoyin.txt", "a") as idx:
            idx.write(i + "\n")
        r = requests.get("http://pic.netbian.com" + i, timeout=30)
        r.encoding = r.apparent_encoding
        soup = BeautifulSoup(r.text, "html.parser")
        for link in soup.find_all('img'):
            GetPicture(link.get('src'))
            break  # only the first <img> on the detail page is downloaded
    untilLast()
```

```python
def untilLast():
    for a in range(2, lastpage() + 1):
        print("Page " + str(a))
        r = requests.get("http://pic.netbian.com/4kmeinv/index_" + str(a) + ".html", timeout=30)
        r.encoding = r.apparent_encoding
        ls = Gethtml(r.text)
        for i in ls:
            # Re-read cun every time, because earlier iterations may have added new URLs
            with open("suoyin.txt", "r") as f:
                cun = [line.rstrip("\n") for line in f]
            if number > len(cun):  # new images remain: go on
                if i in cun:
                    continue
                with open("suoyin.txt", "a") as idx:
                    idx.write(i + "\n")
                r = requests.get("http://pic.netbian.com" + i, timeout=30)
                r.encoding = r.apparent_encoding
                soup = BeautifulSoup(r.text, "html.parser")
                for link in soup.find_all('img'):
                    GetPicture(link.get('src'))
                    break
            else:  # otherwise end the function
                return
```
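The stopping rule in `untilLast` (keep going only while the local count is below `number`) can be simulated without network access. This is a sketch under assumptions: listing pages are plain lists of made-up links, the index is an in-memory list instead of suoyin.txt, and all pages go through one loop (in the post, page 1 is handled by `FirstPage` without the count check). `crawl_until_caught_up` is a hypothetical name.

```python
def crawl_until_caught_up(pages, index, number):
    """Walk the listing pages in order, recording unseen links, and stop
    as soon as the index holds `number` entries (mirrors untilLast)."""
    downloaded = []
    for links in pages:
        for link in links:
            if number > len(index):  # the site still has unrecorded images
                if link in index:
                    continue
                index.append(link)  # record first, then "download"
                downloaded.append(link)
            else:  # caught up: no need to read further pages
                return downloaded
    return downloaded


pages = [["/1", "/2"], ["/3", "/4"], ["/5", "/6"]]
index = ["/1", "/2", "/3", "/5", "/6"]  # "/4" is missing locally
print(crawl_until_caught_up(pages, index, number=6))  # ['/4']
```

Once "/4" is recorded the counts match, so the loop returns at "/5" without scanning the rest of the listing.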

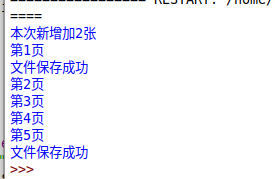

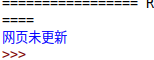

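To test it, I manually deleted one image belonging to page 1 of the listing and one belonging to page 5.

Then I ran the program again.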

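Because the number of local images is still fairly small, checking whether a URL is in the list uses Python's built-in `in` lookup. If the number grows large, a faster lookup is recommended, for example sorting the list (with quicksort or a similar algorithm) and then using binary search, to speed the code up.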
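The sort-then-binary-search idea can be sketched with the standard library: `sorted` (CPython uses Timsort, not quicksort) followed by `bisect`. A `set` is the other common choice, with average constant-time membership tests. `contains_sorted` is a hypothetical helper and the URLs are made up.

```python
import bisect


def contains_sorted(sorted_urls, url):
    """Binary search: True if `url` is in the ascending list `sorted_urls`."""
    i = bisect.bisect_left(sorted_urls, url)
    return i < len(sorted_urls) and sorted_urls[i] == url


cun = ["/p3.html", "/p1.html", "/p2.html"]
sorted_cun = sorted(cun)  # sort once, O(n log n)
print(contains_sorted(sorted_cun, "/p2.html"))  # True
print(contains_sorted(sorted_cun, "/p9.html"))  # False

bisect.insort(sorted_cun, "/p0.html")  # new URLs must be inserted in order to keep the list sorted

cun_set = set(cun)  # alternative: hash lookup, average O(1) per test
print("/p2.html" in cun_set)  # True
```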
