基于数据库技术的HTML网页抽取技术的研究经过了人工、半自动化和全自动化方法的三个阶段。

人工方法,通过程序员人工分析出网页的模板,借助一定的编程语言,针对具体的问题生成具体的包装器。

半自动化方法,应用网页模板抽取数据,从而生成具体包装器的部分被计算机接管,而网页模板的分析仍然需要人工参与。

自动化方法中,网页模板的分析部分也交给了计算机进行,仅仅需要很少的人工参与或完全不需要人工参与,因而更加适合大规模、系统化、持续性的Web数据抽取。

下面通过人工方法实现HTML网页数据的抽取。这里以抽取“豆瓣电影排行榜”网页的超链接数据为例,演示如何抽取网页数据并保存至数据库mysql中的数据表html中。

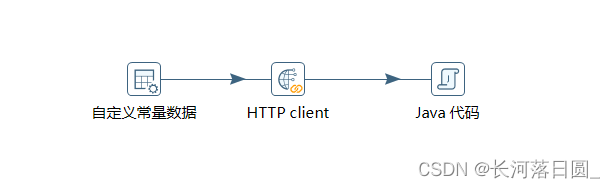

1.打开kettle工具,创建转换html_extract,并添加如下控件及Hop连接线

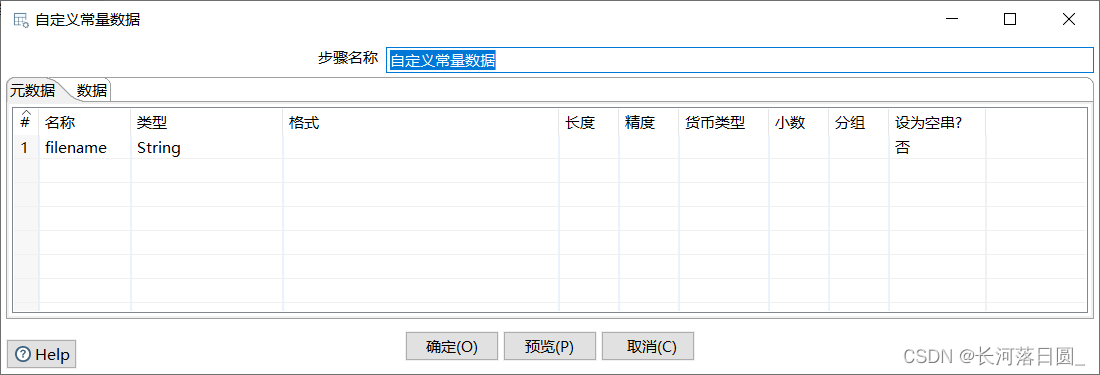

2.配置“自定义常量数据”控件

3.配置HTTP client控件

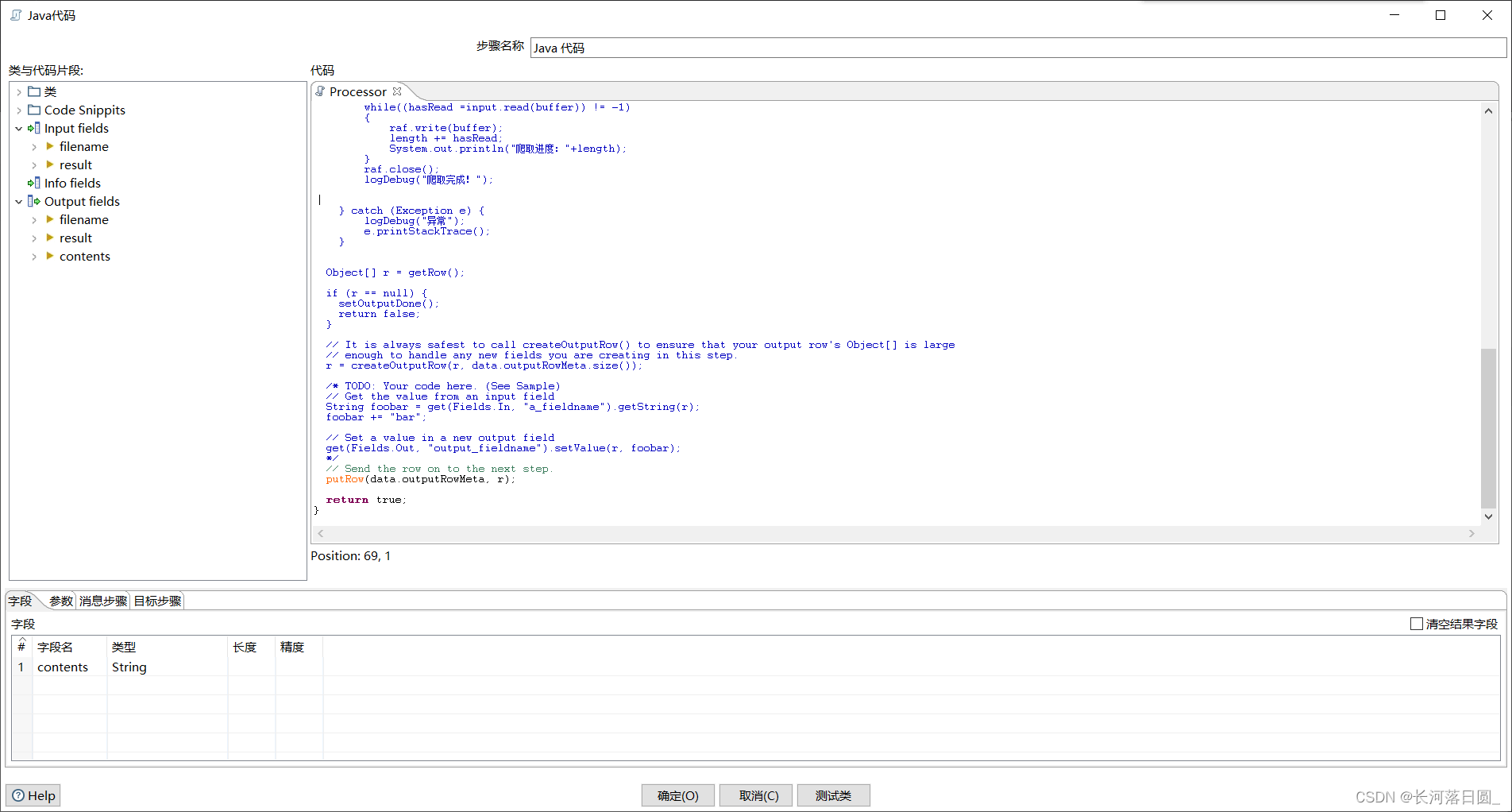

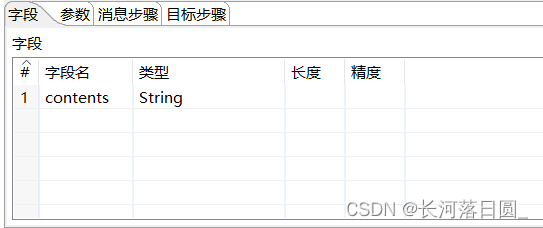

4.配置“Java代码”控件

import java.util.*;

import java.util.regex.Matcher;

import java.util.regex.Pattern;

import java.sql.DriverManager;

import java.sql.SQLException;

import java.util.regex.Matcher;

import java.util.regex.Pattern;

import com.mysql.jdbc.Connection;

import com.mysql.jdbc.PreparedStatement;

import java.io.InputStream;

import java.io.RandomAccessFile;

import java.net.URL;

import java.net.URLConnection;

private String result;

private String contents;

private Connection connection = null;

public boolean processRow(StepMetaInterface smi, StepDataInterface sdi) throws KettleException {

if (first) {

first = false;

/* TODO: Your code here. (Using info fields)

FieldHelper infoField = get(Fields.Info, "info_field_name");

RowSet infoStream = findInfoRowSet("info_stream_tag");

Object[] infoRow = null;

int infoRowCount = 0;

// Read all rows from info step before calling getRow() method, which returns first row from any

// input rowset. As rowMeta for info and input steps varies getRow() can lead to errors.

while((infoRow = getRowFrom(infoStream)) != null){

// do something with info data

infoRowCount++;

}

*/

}

try{

URL url = new URL("https://movie.douban.com/");

URLConnection conn = url.openConnection();

conn.setRequestProperty("accept","*/*");

conn.setRequestProperty("connection","Keep-Alive");

conn.setRequestProperty("user-agent","Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/96.0.4664.45 Safari/537.36");

conn.connect();

InputStream input = conn.getInputStream();

byte[] buffer = new byte[1024];

int hasRead;

int length = 0;

String msg = "";

//输出到一个txt文件中

//FileWriter fw = new FileWriter("D:\\豆瓣电影排行榜.txt");

RandomAccessFile raf = new RandomAccessFile("D:\\output\\豆瓣电影排行榜.txt","rw");

while((hasRead =input.read(buffer)) != -1)

{

raf.write(buffer);

length += hasRead;

System.out.println("爬取进度:"+length);

}

raf.close();

logDebug("爬取完成!");

} catch (Exception e) {

logDebug("异常");

e.printStackTrace();

}

Object[] r = getRow();

if (r == null) {

setOutputDone();

return false;

}

// It is always safest to call createOutputRow() to ensure that your output row's Object[] is large

// enough to handle any new fields you are creating in this step.

r = createOutputRow(r, data.outputRowMeta.size());

/* TODO: Your code here. (See Sample)

// Get the value from an input field

String foobar = get(Fields.In, "a_fieldname").getString(r);

foobar += "bar";

// Set a value in a new output field

get(Fields.Out, "output_fieldname").setValue(r, foobar);

*/

// Send the row on to the next step.

putRow(data.outputRowMeta, r);

return true;

}

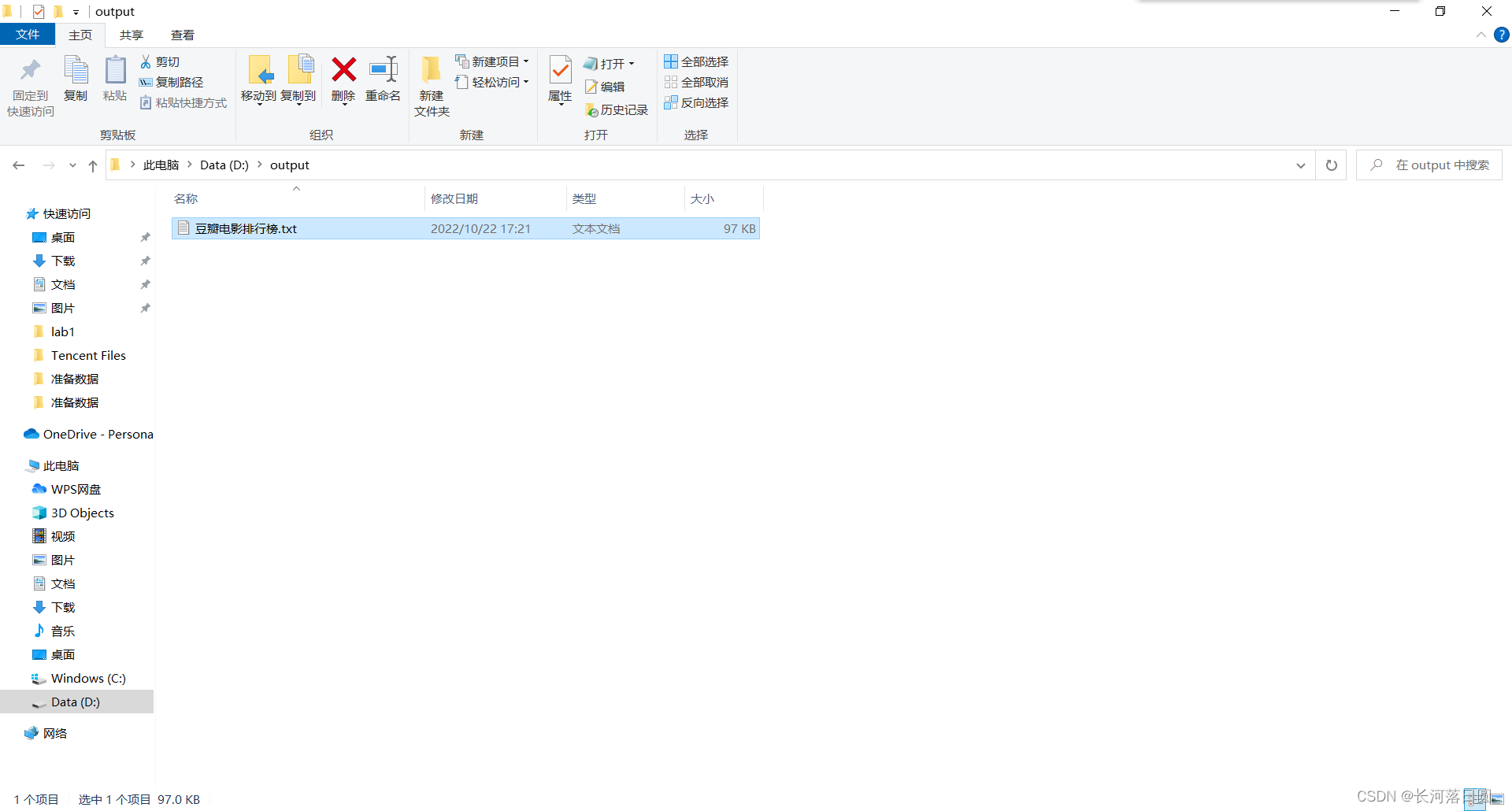

5.运行转换html_extract

成功!

454

454

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?