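Kubernetes

Kubernetes Overview

Kubernetes (k8s) is a container cluster management system that Google open-sourced in 2014. Its main goal is to make deploying containerized applications simple and efficient. It provides container orchestration, resource management, elastic scaling, deployment management, service discovery, and related features.

Kubernetes Features

- Lightweight: written in Go (a compiled language), so it uses fewer resources than a system written in an interpreted language

- Open source

- Self-healing: controllers manage Pods; when a container is in an abnormal state, a replacement is created first and the old one is then deleted, so the service is not interrupted

- Elastic scaling: YAML defines the thresholds (resource limits, enforced through cgroups) and the scaling mode (horizontal)

- Automated deployment, rollback, and updates

- Service discovery and load balancing: Kubernetes gives a group of Pods (containers) a single access point (an internal IP address and a DNS name) and load-balances across all associated containers, so users do not have to track container IPs. It can use the IPVS framework (created by Wensong Zhang) as a "replacement" for iptables.

- Secret and configuration management: manages secrets and application configuration without exposing sensitive data in images, which improves the security of that data. Commonly used configuration can also be stored in Kubernetes for applications to use.

- Storage orchestration: mounts external storage systems; local storage, public cloud storage (such as AWS), and network storage (such as NFS, GlusterFS, Ceph) can all be used as cluster resources, which makes storage much more flexible.

- Batch processing: provides one-off tasks (Job) and scheduled tasks (CronJob) for batch data processing and analysis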
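For the elastic scaling item, the Horizontal Pod Autoscaler documentation describes the target replica count as ceil(currentReplicas × currentMetric / targetMetric). The function below is only an arithmetic sketch of that rule; the function name and the sample numbers are made up for illustration, and nothing here talks to a cluster.

```shell
#!/bin/sh
# desired_replicas CURRENT_REPLICAS CURRENT_METRIC TARGET_METRIC
# Prints the integer ceiling of current_replicas * current_metric / target_metric.
desired_replicas() {
  local replicas="$1"
  local current="$2"
  local target="$3"
  echo $(( (replicas * current + target - 1) / target ))
}
```

For example, `desired_replicas 4 90 60` models 4 replicas running at 90% against a 60% target.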

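Kubernetes Core Concepts

Pod

- A Pod is the smallest resource unit in Kubernetes

- A Pod wraps its containers into one runtime environment on a node; each Pod has at least two containers (the pause infrastructure container and the main application container)

- Containers in a Pod share a network namespace and talk to each other over localhost

- A Pod has its own lifecycle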
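As a rough illustration of the shared network namespace, the function below prints a Pod manifest with two containers, one of which reaches the other over localhost. The Pod name, container names, and images are placeholders chosen for this sketch, not something the setup in this post depends on.

```shell
#!/bin/sh
# Print a Pod manifest with two containers that share one network namespace.
# The Pod name and images are illustrative placeholders.
pod_manifest() {
  cat <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: demo-pod
  labels:
    app: demo
spec:
  containers:
  - name: web
    image: nginx
  - name: probe
    image: busybox
    # Reaches the web container over localhost because both containers
    # share the Pod's network namespace.
    command: ["sh", "-c", "while true; do wget -qO- http://localhost:80 >/dev/null; sleep 5; done"]
EOF
}
```

On a working cluster this could be applied with `pod_manifest | kubectl apply -f -`. The pause container does not appear in the manifest; the kubelet adds it.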

Controllers

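- ReplicaSet: keeps the expected number of Pod replicas running

- Deployment: deploys stateless applications

- StatefulSet: deploys stateful applications

- DaemonSet: makes sure every Node runs a copy of the same Pod

- Job: one-off tasks

- CronJob: scheduled tasks

Service

- Keeps Pods from becoming unreachable

- Defines an access policy for a group of Pods

- Label: a tag attached to a resource, used to manage, query, and filter objects

- Namespaces: logically isolate objects

- Annotations: notes attached to objects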

Kubernetes Single-Master Deployment

etcd Cluster Deployment

master: 192.168.118.11; kube-apiserver kube-controller-manager kube-scheduler etcd docker

node1: 192.168.118.22; kubelet kube-proxy docker flannel etcd

node2: 192.168.118.33; kubelet kube-proxy docker flannel etcd

- Preparation: three machines; turn off the firewall, set up time synchronization, and install Docker on the node machines

systemctl stop firewalld.service

systemctl disable firewalld

setenforce 0

ntpdate ntp1.aliyun.com ## set up time synchronization

## install Docker

yum install -y yum-utils device-mapper-persistent-data lvm2

cd /etc/yum.repos.d/

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

systemctl start docker

systemctl enable docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://r4f0p1ia.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload

systemctl restart docker

echo "net.ipv4.ip_forward=1" >> /etc/sysctl.conf

sysctl -p

systemctl restart network

systemctl restart docker
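The `echo ... >> /etc/sysctl.conf` step appends a duplicate line every time the preparation is rerun. A minimal sketch of an idempotent variant follows; the helper name `ensure_line` is made up for this example.

```shell
#!/bin/sh
# ensure_line FILE LINE: append LINE to FILE only if that exact line is not already there.
ensure_line() {
  local file="$1"
  local line="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || echo "$line" >> "$file"
}

# Example (as root):
#   ensure_line /etc/sysctl.conf "net.ipv4.ip_forward=1" && sysctl -p
```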

- On the master node, define two scripts:

[root@master k8s]# ls

etcd-cert.sh etcd.sh

## etcd-cert.sh generates the certificates; etcd.sh is the etcd startup script

[root@master k8s]# cat etcd-cert.sh

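# CA configuration file. "signing" is the top-level key. "default.expiry" is the

# certificate lifetime (87600h = 10 years); without it a newly issued certificate

# is valid for only one year. "profiles" holds named profiles, here "www";

# "usages" lists what the certificate may be used for: signing, key encipherment,

# server authentication, and client authentication.

# (The notes are kept outside the heredoc because JSON does not allow comments.)

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF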

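# CA certificate signing request. "CN" is the CA's common name (for etcd);

# "key" selects an RSA (asymmetric) key; "names" holds the subject fields written into the certificate.

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [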

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF # server certificate signing request

{

"CN": "etcd",

"hosts": [

"192.168.118.11",

"192.168.118.22",

"192.168.118.33"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
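The `hosts` list in server-csr.json has to contain every etcd member's IP, so it needs editing whenever the addresses change. As a sketch, the request could be generated from the IPs passed as arguments; `hosts_json` and `server_csr` are hypothetical helper names, and the remaining fields are copied from the script above.

```shell
#!/bin/sh
# hosts_json IP...: print the arguments as a JSON array of strings.
hosts_json() {
  local out=""
  local ip
  for ip in "$@"; do
    out="${out}${out:+, }\"${ip}\""
  done
  echo "[${out}]"
}

# server_csr IP...: print a server CSR whose hosts list is the given IPs.
server_csr() {
  cat <<EOF
{
  "CN": "etcd",
  "hosts": $(hosts_json "$@"),
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing" } ]
}
EOF
}
```

Usage would look like `server_csr 192.168.118.11 192.168.118.22 192.168.118.33 > server-csr.json`, followed by the same `cfssl gencert` call.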

[root@master k8s]# cat etcd.sh ## etcd startup script

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.118.11 etcd02=https://192.168.118.22:2380,etcd03=https://192.168.118.33:2380

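ETCD_NAME=$1 # positional parameter 1: etcd member name

ETCD_IP=$2 # positional parameter 2: this member's IP address

ETCD_CLUSTER=$3 # positional parameter 3: the other cluster members

WORK_DIR=/opt/etcd # working directory

cat <<EOF >$WORK_DIR/cfg/etcd # create the etcd configuration file in the working directory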

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

# client URL advertised to the outside, served over https

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

# every member of the cluster

ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

# cluster token name: etcd-cluster

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

# state "new": a newly created cluster

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

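cat <<EOF >/usr/lib/systemd/system/etcd.service # define the etcd systemd unit; the flags from --cert-file to --peer-trusted-ca-file are the certificate settings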

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd
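etcd.sh hardcodes the name `etcd01` in ETCD_INITIAL_CLUSTER, so as written it only suits the first member. One possible way to build the full member list from name=IP pairs is sketched below; `initial_cluster` is a hypothetical helper and the peer port 2380 is taken from the script above.

```shell
#!/bin/sh
# initial_cluster NAME=IP...: print a value suitable for ETCD_INITIAL_CLUSTER.
initial_cluster() {
  local out=""
  local pair
  for pair in "$@"; do
    # ${pair%%=*} is the member name, ${pair#*=} is its IP address.
    out="${out}${out:+,}${pair%%=*}=https://${pair#*=}:2380"
  done
  echo "$out"
}
```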

- Create the CA certificates

[root@master k8s]# mkdir etcd-cert


[root@master k8s]# mv etcd-cert.sh etcd-cert

## create a script that downloads the cfssl tools

[root@master etcd-cert]# cat cfssl.sh

# download the certificate tool from the official source (-o: output file) into /usr/local/bin so that it is on the PATH

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

# download cfssljson, which handles the JSON that cfssl produces

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

# download cfssl-certinfo

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

[root@master k8s]# cd /usr/local/bin

[root@master bin]# chmod +x * # make the tools executable

[root@master bin]# ls

cfssl cfssl-certinfo cfssljson

[root@master bin]# cd -

/root/k8s

[root@master k8s]# cd etcd-cert/

[root@master etcd-cert]# ls

etcd-cert.sh

[root@master etcd-cert]# sh -x etcd-cert.sh

[root@master etcd-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd-cert.sh server.csr server-csr.json server-key.pem server.pem

- Upload the etcd package and configure it

[root@master k8s]# tar zxf etcd-v3.3.10-linux-amd64.tar.gz

[root@master k8s]# cd etcd-v3.3.10-linux-amd64/

[root@master etcd-v3.3.10-linux-amd64]# ls

Documentation etcd etcdctl README-etcdctl.md README.md READMEv2-etcdctl.md

[root@master etcd-v3.3.10-linux-amd64]# mkdir /opt/etcd/{cfg,bin,ssl} -p # create the etcd working directories

[root@master etcd-v3.3.10-linux-amd64]# mv etcd etcdctl /opt/etcd/bin/

[root@master etcd-v3.3.10-linux-amd64]# cd ../etcd-cert/

[root@master etcd-cert]# cp *.pem /opt/etcd/ssl/

## the command hangs here, waiting for the other members to join

[root@master k8s]# bash etcd.sh etcd01 192.168.118.11 etcd02=https://192.168.118.22:2380,etcd03=https://192.168.118.33:2380

## open another terminal on master and copy the binaries, configuration, and certificates to the matching locations on the nodes

[root@master etcd-cert]# scp -r /opt/etcd/ root@192.168.118.22:/opt/

[root@master etcd-cert]# scp -r /opt/etcd/ root@192.168.118.33:/opt/

[root@master etcd-cert]# scp /usr/lib/systemd/system/etcd.service root@192.168.118.22:/usr/lib/systemd/system/

root@192.168.118.22's password:

etcd.service 100% 923 470.6KB/s 00:00

[root@master etcd-cert]# scp /usr/lib/systemd/system/etcd.service root@192.168.118.33:/usr/lib/systemd/system/

root@192.168.118.33's password:

etcd.service 100% 923 610.4KB/s 00:00

- Edit the configuration on the node machines

node1:

[root@node1 ~]# cd /opt/etcd/

[root@node1 etcd]# ls

bin cfg ssl

[root@node1 etcd]# vim cfg/etcd

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.118.22:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.118.22:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.118.22:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.118.22:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.118.11:2380,etcd02=https://192.168.118.22:2380,etcd03=https://192.168.118.33:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

node2:

[root@node2 etcd]# vim cfg/etcd

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.118.33:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.118.33:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.118.33:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.118.33:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.118.11:2380,etcd02=https://192.168.118.22:2380,etcd03=https://192.168.118.33:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"
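Editing cfg/etcd by hand on each node is easy to get wrong, since five values change per member. As an alternative sketch, the file could be rendered from the member name, its IP, and the full member list; `render_etcd_cfg` is a hypothetical helper and the field layout is copied from the files above.

```shell
#!/bin/sh
# render_etcd_cfg NAME IP CLUSTER: print an etcd environment file for one member.
render_etcd_cfg() {
  local name="$1"
  local ip="$2"
  local cluster="$3"
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

On node1 this would be called as `render_etcd_cfg etcd02 192.168.118.22 "<member list>" > /opt/etcd/cfg/etcd`, where the member list is the same three-member string shown in the files above.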

- Start etcd on all three nodes

systemctl start etcd

Check the cluster health

[root@master etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.118.11:2379,https://192.168.118.22:2379,https://192.168.118.33:2379" cluster-health

member 26f81b159382c99f is healthy: got healthy result from https://192.168.118.11:2379

member d68cc2d726219f6b is healthy: got healthy result from https://192.168.118.22:2379

member e094240d4ff7a9fd is healthy: got healthy result from https://192.168.118.33:2379

cluster is healthy
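For scripted checks, the `cluster-health` output above can be tested rather than read by eye. The sketch below assumes the output format shown here (one `member ... is healthy` line per member and a final `cluster is healthy` line); `etcd_healthy` is a made-up helper name.

```shell
#!/bin/sh
# etcd_healthy EXPECTED: read `etcdctl cluster-health` output on stdin.
# Succeeds only if the cluster line is healthy and at least EXPECTED members are healthy.
etcd_healthy() {
  local expected="$1"
  local output
  local healthy
  output=$(cat)
  healthy=$(printf '%s\n' "$output" | grep -c '^member .* is healthy' || true)
  printf '%s\n' "$output" | grep -qx 'cluster is healthy' && [ "$healthy" -ge "$expected" ]
}
```

It would be used by piping the etcdctl command into it, for example `... cluster-health | etcd_healthy 3`.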

flannel Network Configuration

Master node configuration

## write the allocated subnet range into etcd for flannel to use

[root@master etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.118.11:2379,https://192.168.118.22:2379,https://192.168.118.33:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

## read back what was written

[root@master etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.118.11:2379,https://192.168.118.22:2379,https://192.168.118.33:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

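- Upload the flannel package to the node machines and extract it, then configure the nodes. Both nodes are configured the same way; only node1 is shown here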

node1:

[root@node1 ~]# tar xzf flannel-v0.10.0-linux-amd64.tar.gz

[root@node1 ~]# ls

anaconda-ks.cfg flannel-v0.10.0-linux-amd64.tar.gz mk-docker-opts.sh 公共 视频 文档 音乐

flanneld initial-setup-ks.cfg README.md 模板 图片 下载 桌面

## create the Kubernetes working directories

[root@node1 ~]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@node1 ~]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

[root@node1 ~]# vim flannel.sh ## write the flannel startup script

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/etcd/ssl/ca.pem \

-etcd-certfile=/opt/etcd/ssl/server.pem \

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

[root@node1 ~]# bash flannel.sh https://192.168.118.11:2379,https://192.168.118.22:2379,https://192.168.118.33:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

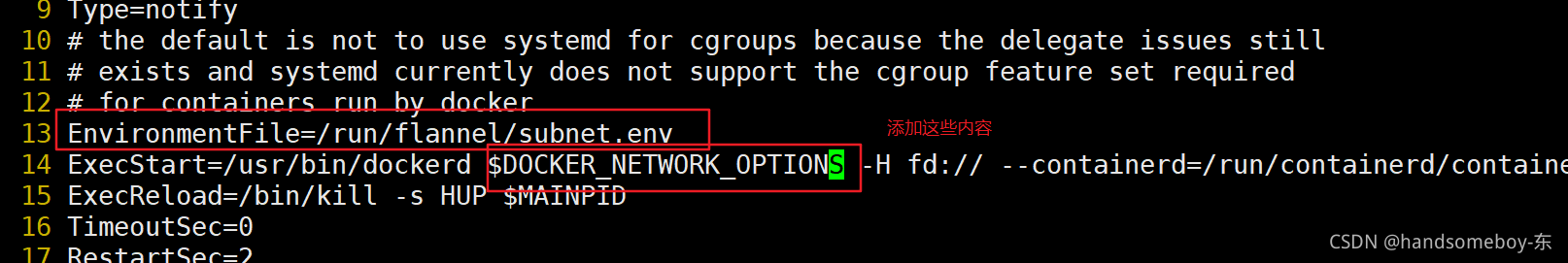

## configure Docker to use the flannel network

[root@node1 ~]# vim /usr/lib/systemd/system/docker.service
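The post does not show what was changed in docker.service. A common way to connect Docker to flannel, and the one suggested by the `mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env` line in flanneld.service, is to load that file and pass `$DOCKER_NETWORK_OPTIONS` to dockerd. The sketch below writes this as a systemd drop-in instead of editing the unit in place. The drop-in file name, the dockerd path `/usr/bin/dockerd`, and the absence of other dockerd flags are all assumptions to check against your own docker.service.

```shell
#!/bin/sh
# write_flannel_dropin DIR: write a drop-in that makes dockerd use flannel's subnet options.
# DIR would normally be /etc/systemd/system/docker.service.d (assumption).
write_flannel_dropin() {
  local dir="$1"
  mkdir -p "$dir"
  cat > "$dir/flannel.conf" <<'EOF'
[Service]
EnvironmentFile=/run/flannel/subnet.env
# An empty ExecStart= clears the command from the original unit before redefining it.
ExecStart=
ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS
EOF
}
```

After `write_flannel_dropin /etc/systemd/system/docker.service.d`, the usual `systemctl daemon-reload` and `systemctl restart docker` apply.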

- Restart the Docker service and check the flannel network

[root@node1 ~]# systemctl daemon-reload

[root@node1 ~]# systemctl restart docker

[root@node1 ~]# ifconfig

………………………………

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.44.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::589d:14ff:fe41:814d prefixlen 64 scopeid 0x20<link>

ether 5a:9d:14:41:81:4d txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 37 overruns 0 carrier 0 collisions 0

………………………………

- Create a CentOS container on each of the two nodes and test that they can ping each other

[root@node1 ~]# docker run -itd centos:7 /bin/bash

[root@node1 ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

30e483267fa7 centos:7 "/bin/bash" About a minute ago Up About a minute pedantic_hellman

[root@node1 ~]# docker exec -it 30e483267fa7 /bin/bash

[root@30e483267fa7 /]# yum install -y net-tools

[root@30e483267fa7 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.44.2 netmask 255.255.255.0 broadcast 172.17.44.255

ether 02:42:ac:11:2c:02 txqueuelen 0 (Ethernet)

RX packets 25655 bytes 19757628 (18.8 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 12640 bytes 685960 (669.8 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

node2:

[root@2e5d9ef13696 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.55.2 netmask 255.255.255.0 broadcast 172.17.53.255

ether 02:42:ac:11:35:02 txqueuelen 0 (Ethernet)

RX packets 25677 bytes 19758055 (18.8 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 12739 bytes 691355 (675.1 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@30e483267fa7 /]# ping 172.17.55.2

PING 172.17.55.2 (172.17.55.2) 56(84) bytes of data.

64 bytes from 172.17.55.2: icmp_seq=1 ttl=62 time=0.251 ms

^C

--- 172.17.55.2 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.251/0.251/0.251/0.000 ms
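A quick sanity check for this step is that each container address falls inside the flannel network written to etcd (172.17.0.0/16), in a per-node /24. The sketch below does that arithmetic in plain shell; `ip_to_int` and `in_cidr` are hypothetical helper names and handle IPv4 only.

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# in_cidr IP NETWORK/PREFIX: succeed if IP lies inside the network.
in_cidr() {
  local ip
  local net
  local prefix
  local mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix="${2#*/}"
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}
```

For instance, `in_cidr 172.17.44.2 172.17.0.0/16` succeeds, while a host address such as 192.168.118.22 does not match.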

Deploying the Master Components

- On the master

[root@master k8s]# unzip master.zip

Archive: master.zip

inflating: apiserver.sh

inflating: controller-manager.sh

inflating: scheduler.sh

[root@master k8s]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@master k8s]# mkdir k8s-cert

[root@master k8s]# cd k8s-cert/

[root@master k8s-cert]# ls

k8s-cert.sh

[root@master k8s-cert]# cat k8s-cert.sh

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.118.11",

"192.168.118.55",

"192.168.18.66",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

- Generate the Kubernetes certificates

[root@master k8s-cert]# bash k8s-cert.sh

………………………………

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

- Install Kubernetes

[root@master k8s-cert]# ls

admin.csr admin.pem ca-csr.json k8s-cert.sh kube-proxy-key.pem server-csr.json

admin-csr.json ca-config.json ca-key.pem kube-proxy.csr kube-proxy.pem server-key.pem

admin-key.pem ca.csr ca.pem kube-proxy-csr.json server.csr server.pem

[root@master k8s-cert]# cp ca*pem server*pem /opt/kubernetes/ssl/

[root@master k8s-cert]# cd ..

[root@master k8s]# tar xzf kubernetes-server-linux-amd64.tar.gz

[root@master k8s]# cd kubernetes/server/bin

[root@master bin]# cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

[root@master bin]# cd /root/k8s/

[root@master k8s]# vim /opt/kubernetes/cfg/token.csv

c9f453bfaa0900ccfe59a8b4da73c438,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

## a random token can be generated with: head -c 16 /dev/urandom | od -An -t x | tr -d ' '
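The token line can also be produced in one step instead of pasting the value into vim. This is a sketch built on the same pipeline; `new_token` and `token_csv_line` are made-up helper names, and the user, UID, and group are the ones from the token.csv line above.

```shell
#!/bin/sh
# new_token: 16 random bytes printed as 32 hexadecimal characters.
new_token() {
  head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# token_csv_line TOKEN: print one line in the token,user,uid,"group" layout of token.csv.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

# Example:
#   token_csv_line "$(new_token)" > /opt/kubernetes/cfg/token.csv
```

Whatever token ends up in token.csv has to be the same one used later for bootstrap.kubeconfig.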

## with the binaries, token, and certificates in place, start the apiserver

[root@master k8s]# bash apiserver.sh 192.168.118.11 https://192.168.118.11:2379,https://192.168.118.22:2379,https://192.168.118.33:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

[root@master k8s]# ps aux | grep kube ## check that the process started

[root@master k8s]# netstat -antp | grep 6443 ## the apiserver listens on port 6443

tcp 0 0 192.168.118.11:6443 0.0.0.0:* LISTEN 83684/kube-apiserve

tcp 0 0 192.168.118.11:59886 192.168.118.11:6443 ESTABLISHED 83684/kube-apiserve

tcp 0 0 192.168.118.11:6443 192.168.118.11:59886 ESTABLISHED 83684/kube-apiserve

[root@master k8s]# netstat -antp | grep 8080

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 83684/kube-apiserve

## start the scheduler

[root@master k8s]# ./scheduler.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

## start the controller-manager

[root@master k8s]# chmod +x controller-manager.sh

[root@master k8s]# ./controller-manager.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

## check the status of the master components

[root@master k8s]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

Deploying the Nodes

- On the master, copy kubelet and kube-proxy to the node machines

[root@master bin]# scp kubelet kube-proxy root@192.168.118.22:/opt/kubernetes/bin/

root@192.168.118.22's password:

kubelet 100% 168MB 122.0MB/s 00:01

kube-proxy 100% 48MB 92.3MB/s 00:00

[root@master bin]# scp kubelet kube-proxy root@192.168.118.33:/opt/kubernetes/bin/

root@192.168.118.33's password:

kubelet 100% 168MB 133.2MB/s 00:01

kube-proxy 100% 48MB 138.2MB/s 00:00

- On the node, unzip the node components

[root@node1 ~]# unzip node.zip

Archive: node.zip

inflating: proxy.sh

inflating: kubelet.sh

- On the master

[root@master bin]# cd /root/k8s/

[root@master k8s]# mkdir kubeconfig

[root@master k8s]# cd kubeconfig/

## copy in the kubeconfig.sh file and rename it

[root@master kubeconfig]# mv kubeconfig.sh kubeconfig

[root@master kubeconfig]# vim kubeconfig # edit the kubeconfig script

############ delete the following section

# Create the TLS Bootstrapping Token

#BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008

cat > token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

## look up the token

[root@master kubeconfig]# cat /opt/kubernetes/cfg/token.csv

c9f453bfaa0900ccfe59a8b4da73c438,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

## change the token in the script to this token ID; this line sets the client authentication parameters

[root@master kubeconfig]# kubectl config set-credentials kubelet-bootstrap --token=c9f453bfaa0900ccfe59a8b4da73c438 --kubeconfig=bootstrap.kubeconfig

User "kubelet-bootstrap" set.

## set the environment variable (single quotes keep $PATH from being expanded when the line is written)

[root@master kubeconfig]# echo 'export PATH=$PATH:/opt/kubernetes/bin/' >> /etc/profile

[root@master kubeconfig]# source /etc/profile

[root@master kubeconfig]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

## generate the kubeconfig files

[root@master kubeconfig]# bash kubeconfig 192.168.118.11 /root/k8s/k8s-cert/

Cluster "kubernetes" set.

User "kubelet-bootstrap" set.

Context "default" created.

Switched to context "default".

Cluster "kubernetes" set.

User "kube-proxy" set.

Context "default" created.

Switched to context "default".
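The body of the kubeconfig script is not shown in this post. Judging from the four messages it prints per file, it likely runs the standard `kubectl config` sequence. The sketch below only prints those commands for bootstrap.kubeconfig so they can be reviewed first; the function name is hypothetical, and the `--embed-certs=true` flag and port 6443 are assumptions about what the script does.

```shell
#!/bin/sh
# bootstrap_kubeconfig_cmds APISERVER_IP CERT_DIR TOKEN
# Prints (does not run) the kubectl commands that would build bootstrap.kubeconfig.
bootstrap_kubeconfig_cmds() {
  local server="https://$1:6443"
  local certs="$2"
  local token="$3"
  local out="--kubeconfig=bootstrap.kubeconfig"
  echo "kubectl config set-cluster kubernetes --certificate-authority=${certs}/ca.pem --embed-certs=true --server=${server} ${out}"
  echo "kubectl config set-credentials kubelet-bootstrap --token=${token} ${out}"
  echo "kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap ${out}"
  echo "kubectl config use-context default ${out}"
}
```

Piping the output to `sh` would execute the commands; kube-proxy.kubeconfig would follow the same pattern with client certificates in place of a token.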

## copy the kubeconfig files to the node machines

[root@master kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.118.22:/opt/kubernetes/cfg/

root@192.168.118.22's password:

bootstrap.kubeconfig 100% 2127 1.5MB/s 00:00

kube-proxy.kubeconfig 100% 6274 7.6MB/s 00:00

[root@master kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.118.33:/opt/kubernetes/cfg/

root@192.168.118.33's password:

bootstrap.kubeconfig 100% 2127 1.9MB/s 00:00

kube-proxy.kubeconfig 100% 6274 6.4MB/s 00:00

## bind the kubelet-bootstrap user to the system:node-bootstrapper cluster role so it can ask the apiserver to sign certificates

[root@master kubeconfig]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

- On node1

[root@node1 ~]# bash kubelet.sh 192.168.118.22

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

[root@node1 ~]# ps aux | grep kube ## check that the kubelet service started

root 112579 0.5 1.1 412480 44320 ? Ssl 10:06 0:00 /opt/kubernetes/bin/kubelet --logtostderr=true --v=4 --hostname-override=192.168.118.22 --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig --config=/opt/kubernetes/cfg/kubelet.config --cert-dir=/opt/kubernetes/ssl --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

root 112594 0.0 0.0 112724 988 pts/1 S+ 10:06 0:00 grep --color=auto kube

- On the master, list the certificate requests from the nodes

[root@master kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-K9GLDOPB6JZOjU3xK9_cIyOaVEn_3zbEJygKn27cjnI 3m19s kubelet-bootstrap Pending

node-csr-kHtR8FHSZ-KHLP5NiSh7oCNoCIPbbwcXt9IsdG9MSPM 2m5s kubelet-bootstrap Pending

## issue a certificate to the node

[root@master kubeconfig]# kubectl certificate approve node-csr-K9GLDOPB6JZOjU3xK9_cIyOaVEn_3zbEJygKn27cjnI

certificatesigningrequest.certificates.k8s.io/node-csr-K9GLDOPB6JZOjU3xK9_cIyOaVEn_3zbEJygKn27cjnI approved

## check again: node1 has joined the cluster

[root@master kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-K9GLDOPB6JZOjU3xK9_cIyOaVEn_3zbEJygKn27cjnI 5m23s kubelet-bootstrap Approved,Issued

node-csr-kHtR8FHSZ-KHLP5NiSh7oCNoCIPbbwcXt9IsdG9MSPM 4m9s kubelet-bootstrap Pending

[root@master kubeconfig]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.118.22 Ready <none> 116s v1.12.3

## add node2 the same way

[root@master kubeconfig]# kubectl certificate approve node-csr-kHtR8FHSZ-KHLP5NiSh7oCNoCIPbbwcXt9IsdG9MSPM

certificatesigningrequest.certificates.k8s.io/node-csr-kHtR8FHSZ-KHLP5NiSh7oCNoCIPbbwcXt9IsdG9MSPM approved

[root@master kubeconfig]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.118.22 Ready <none> 6m5s v1.12.3

192.168.118.33 Ready <none> 2m25s v1.12.3
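With more than a couple of nodes, copying each CSR name by hand gets tedious. The sketch below pulls the Pending names out of `kubectl get csr` output, assuming the four-column layout shown above; `pending_csrs` is a made-up helper name.

```shell
#!/bin/sh
# pending_csrs: read `kubectl get csr` output on stdin and print the names
# of requests whose last column is Pending (the header line is skipped).
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Example (lab use only, since it approves every pending request):
#   kubectl get csr | pending_csrs | xargs -n 1 kubectl certificate approve
```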

- Start the kube-proxy service on node1

[root@node1 ~]# bash proxy.sh 192.168.118.22

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@node1 ~]# systemctl status kube-proxy.service

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since 六 2021-10-02 10:16:19 CST; 16s ago

Main PID: 114085 (kube-proxy)

Tasks: 0

Memory: 8.1M

CGroup: /system.slice/kube-proxy.service

‣ 114085 /opt/kubernetes/bin/kube-proxy --logtostderr=true --v=4 --hostname-override=192.168.118.22 --cl...
