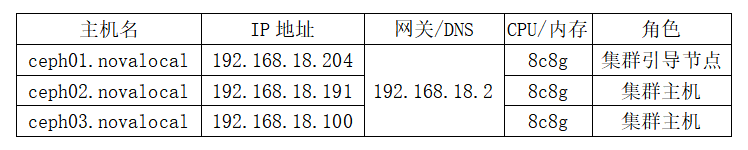

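I. Environment Planning and System Configuration

This deployment is based on openEuler 22.03 (LTS-SP4). The ISO image can be downloaded from: https://mirror.sjtu.edu.cn/openeuler/openEuler-22.03-LTS-SP4/ISO/x86_64/openEuler-22.03-LTS-SP4-x86_64-dvd.iso

1.1-IP and hostname mapping

1.1.1-Set the hostname (run on all nodes)

Set the hostnames of the three hosts to ceph01.novalocal, ceph02.novalocal, and ceph03.novalocal.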

[root@ceph01 ~]# hostnamectl set-hostname ceph01.novalocal && bash

[root@ceph02 ~]# hostnamectl set-hostname ceph02.novalocal && bash

[root@ceph03 ~]# hostnamectl set-hostname ceph03.novalocal && bash

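1.1.2-Append entries to the hosts file

Run this on ceph01. Once SSH trust is configured (1.1.3), the file is copied directly to all other nodes (1.1.4).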
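
Re-running plain append commands adds duplicate lines to the hosts file, so one possible alternative is a small helper that appends an entry only when that exact line is not already present. This is a minimal sketch, not part of the original procedure: the function name add_host_entry and its argument order are illustrative assumptions.

```shell
# add_host_entry FILE IP FQDN SHORTNAME
# Hypothetical helper: append "IP FQDN SHORTNAME" to FILE unless the exact
# line already exists, so the step can be repeated safely.
add_host_entry() {
    hosts_file="$1"
    entry="$2 $3 $4"
    # -q quiet, -x match the whole line, -F treat the entry as a fixed string
    if ! grep -qxF "$entry" "$hosts_file" 2>/dev/null; then
        printf '%s\n' "$entry" >> "$hosts_file"
    fi
}

# Example (as root on ceph01):
#   add_host_entry /etc/hosts 192.168.18.204 ceph01.novalocal ceph01
#   add_host_entry /etc/hosts 192.168.18.191 ceph02.novalocal ceph02
#   add_host_entry /etc/hosts 192.168.18.100 ceph03.novalocal ceph03
```

Passing the file as the first argument makes it easy to try the helper on a scratch file before touching /etc/hosts.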

[root@ceph01 ~]# echo "192.168.18.204 ceph01.novalocal ceph01" >> /etc/hosts

[root@ceph01 ~]# echo "192.168.18.191 ceph02.novalocal ceph02" >> /etc/hosts

[root@ceph01 ~]# echo "192.168.18.100 ceph03.novalocal ceph03" >> /etc/hosts

1.1.3-Configure SSH trust (run on ceph01 only)

After running ssh-keygen, press Enter at every prompt; no further settings are needed.

[root@ceph01 ~]# ssh-keygen

[root@ceph01 ~]# ssh-copy-id 192.168.18.191

[root@ceph01 ~]# ssh-copy-id 192.168.18.100

1.1.4-Copy the file to the other nodes

[root@ceph01 ~]# scp -r /etc/hosts 192.168.18.191:/etc/

[root@ceph01 ~]# scp -r /etc/hosts 192.168.18.100:/etc/

1.2-Disable the firewall and SELinux

1.2.1-Disable the firewall

[root@ceph01 ~]# systemctl stop firewalld

[root@ceph01 ~]# systemctl disable --now firewalld

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

1.2.2-Disable SELinux

[root@ceph01 ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

[root@ceph01 ~]# cat /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of these three values:

# targeted - Targeted processes are protected,

# minimum - Modification of targeted policy. Only selected processes are protected.

# mls - Multi Level Security protection.

SELINUXTYPE=targeted

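# Editing the config file does not take effect until the next reboot, so after the edit run setenforce 0 to switch SELinux to permissive mode for the current session.

[root@ceph01 ~]# setenforce 0

1.3-Configure the ceph.repo file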

[root@ceph01 ~]# cat >>/etc/yum.repos.d/ceph.repo <<EOF

[ceph]

name=ceph x86_64

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/x86_64

enabled=1

gpgcheck=0

[ceph-noarch]

name=ceph noarch

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/noarch

enabled=1

gpgcheck=0

[ceph-source]

name=ceph SRPMS

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/SRPMS

enabled=1

gpgcheck=0

EOF

II. Base Software Installation and Configuration

2.1-Install time synchronization software

[root@ceph01 ~]# yum -y install chrony git && systemctl enable --now chronyd

[root@ceph01 ~]# chronyc sources

2.2-Install cephadm

[root@ceph01 ~]# git clone https://gitee.com/yftyxa/openeuler-cephadm.git

Cloning into 'openeuler-cephadm'...

remote: Enumerating objects: 3, done.

remote: Counting objects: 100% (3/3), done.

remote: Compressing objects: 100% (2/2), done.

remote: Total 3 (delta 0), reused 0 (delta 0), pack-reused 0

Receiving objects: 100% (3/3), 67.88 KiB | 317.00 KiB/s, done.

[root@ceph01 ~]# ls

anaconda-ks.cfg openeuler-cephadm

[root@ceph01 ~]#

[root@ceph01 ~]# cp openeuler-cephadm/cephadm /usr/bin/ && chmod a+x /usr/bin/cephadm

If the clone fails because the git package is not installed, run <yum -y install git> and then run <git clone https://gitee.com/yftyxa/openeuler-cephadm.git> again.
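
The copy-and-chmod step in this section can also be done in one call with install(1), which copies a file and sets its mode together. The wrapper below is only a sketch under that assumption; the function name install_cephadm is hypothetical and not part of the cloned repository.

```shell
# install_cephadm SRC DEST_DIR
# Hypothetical wrapper: copy SRC to DEST_DIR/cephadm with mode 0755,
# failing early if the source file is missing.
install_cephadm() {
    src="$1"
    dest_dir="$2"
    if [ ! -f "$src" ]; then
        echo "missing source file: $src" >&2
        return 1
    fi
    install -m 0755 "$src" "$dest_dir/cephadm"
}

# Example (as root): install_cephadm openeuler-cephadm/cephadm /usr/bin
```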

2.3-Install the container engine

[root@ceph01 ~]# wget -O /etc/yum.repos.d/CentOs-Base.repo https://repo.huaweicloud.com/repository/conf/CentOS-8-reg.repo

[root@ceph01 ~]# cat /etc/yum.repos.d/ceph.repo

[ceph]

name=ceph x86_64

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/x86_64

enabled=1

gpgcheck=0

[ceph-noarch]

name=ceph noarch

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/noarch

enabled=1

gpgcheck=0

[ceph-source]

name=ceph SRPMS

baseurl=https://repo.huaweicloud.com/ceph/rpm-pacific/el8/SRPMS

enabled=1

gpgcheck=0

[root@ceph01 ~]#

[root@ceph01 ~]# yum install podman-3.3.1-9.module_el8.5.0+988+b1f0b741.x86_64 lvm2 -y

2.4-Reboot all nodes

Some of the settings made above only take effect after a reboot.

reboot

III. Ceph Bootstrap and Adding Nodes

3.1-Bootstrap the cluster with cephadm bootstrap

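[root@ceph01 ~]# cephadm bootstrap --mon-ip 192.168.18.204 --allow-fqdn-hostname

If the deployment succeeds, the screen shows output like that in the screenshot. The URL marked with the red box can be used later to reach the web interface, and the username and password are printed directly below the URL.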
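
Before running the bootstrap command, it can help to confirm that the tools installed in the earlier sections are actually on the PATH. The following is a minimal sketch of such a check; the function name missing_commands is an illustrative assumption, and the command list in the example (cephadm, podman, lvm, chronyc) is simply taken from the packages installed in chapter II.

```shell
# missing_commands CMD...
# Hypothetical pre-flight helper: print the name of every command
# that cannot be found on PATH, one per line.
missing_commands() {
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}

# Example:
#   missing=$(missing_commands cephadm podman lvm chronyc)
#   [ -z "$missing" ] || echo "install these first: $missing"
```

An empty result means every listed command was found; anything printed should be installed before bootstrapping.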

3.2-Add nodes to the cluster

[root@ceph01 ~]# cd /etc/ceph/

[root@ceph01 ceph]# ls

ceph.client.admin.keyring ceph.conf ceph.pub rbdmap

[root@ceph01 ceph]# ssh-copy-id -f -i ceph.pub ceph02

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "ceph.pub"

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'ceph02'"

and check to make sure that only the key(s) you wanted were added.

[root@ceph01 ceph]# ssh-copy-id -f -i ceph.pub ceph03

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "ceph.pub"

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'ceph03'"

and check to make sure that only the key(s) you wanted were added.

[root@ceph01 ceph]# cephadm shell

[ceph: root@ceph01 /]# ceph orch host add ceph02.novalocal --labels=mon

Added host 'ceph02' with addr '192.168.18.191'

[ceph: root@ceph01 /]# ceph orch host add ceph03.novalocal --labels=mon

Added host 'ceph03' with addr '192.168.18.100'
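
With more than a couple of nodes, the two per-host steps above (copying ceph.pub, then ceph orch host add) become repetitive. One hedged way to handle this is to generate the command lines first and review them before running anything. The function name print_host_add_cmds is a hypothetical helper, and the sketch only prints text; it does not contact any host.

```shell
# print_host_add_cmds LABEL HOST...
# Hypothetical dry-run helper: print, for each host, the key-copy command
# (run on ceph01) and the orchestrator command (run inside "cephadm shell").
print_host_add_cmds() {
    label="$1"
    shift
    for host in "$@"; do
        printf 'ssh-copy-id -f -i /etc/ceph/ceph.pub %s\n' "$host"
        printf 'ceph orch host add %s --labels=%s\n' "$host" "$label"
    done
}

# Example:
#   print_host_add_cmds mon ceph02.novalocal ceph03.novalocal
```

Printing rather than executing keeps the sketch safe to run anywhere, and the output can be pasted into the matching shell once it looks right.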

3.3-Add OSDs to the cluster

[ceph: root@ceph01 /]# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0 root default

[ceph: root@ceph01 /]# ceph orch daemon add osd ceph01.novalocal:/dev/nvme0n1

Created osd(s) 0 on host 'ceph01'

[ceph: root@ceph01 /]# ceph orch daemon add osd ceph02.novalocal:/dev/nvme0n1

Created osd(s) 0 on host 'ceph02'

[ceph: root@ceph01 /]# ceph orch daemon add osd ceph03.novalocal:/dev/nvme0n1

Created osd(s) 0 on host 'ceph03'

[ceph: root@ceph01 /]# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.14639 root default

-3 0.04880 host ceph01

0 ssd 0.04880 osd.0 up 1.00000 1.00000

-7 0.04880 host ceph02

2 ssd 0.04880 osd.2 up 1.00000 1.00000

-5 0.04880 host ceph03

1 ssd 0.04880 osd.1 up 1.00000 1.00000

3.4-Check cluster status

[ceph: root@ceph01 /]# ceph -s

cluster:

id: 754d02a6-6782-11ef-b717-000c29d8d8e0

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph01.novalocal,ceph02,ceph03 (age 100s)

mgr: ceph01.novalocal.mvtjvb(active, since 7m), standbys: ceph02.vhjmps

osd: 3 osds: 3 up (since 34s), 3 in (since 54s)

data:

pools: 1 pools, 1 pgs

objects: 0 objects, 0 B

usage: 15 MiB used, 150 GiB / 150 GiB avail

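pgs: 1 active+clean

IV. Dashboard Login

Using the URL, username, and password printed when the bootstrap in section 3.1 finished, log in through a browser.

4.1-Enter the default username and password

4.2-Change the default password

4.3-Login succeeded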
