Importing Data into Hive with Flume

Method 1: write into the HDFS path that backs the Hive table:

1. Create a database in Hive

create database flume;

2. Create the flume_into_hive table

create external table flume_into_hive(name string,age int) partitioned by (dt string) row format delimited fields terminated by ' ' location '/user/hive/warehouse/flume.db/flume_into_hive';
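Because the table is partitioned by dt, files that land in a dt=... directory under the table location are not visible to queries until that partition is registered in the metastore. The following is a minimal sketch of one way to do that, assuming the partition value follows the same %Y%m%d form as the sink path configured later in this method. The helper name build_add_partition_sql is hypothetical and is not part of Hive or Flume.

```shell
# Build the HiveQL that registers one dt partition of flume.flume_into_hive.
# build_add_partition_sql is a hypothetical helper name used for illustration.
# With no argument, dt defaults to today's date in %Y%m%d form.
build_add_partition_sql() {
    local dt="${1:-$(date +%Y%m%d)}"
    printf "ALTER TABLE flume.flume_into_hive ADD IF NOT EXISTS PARTITION (dt='%s');\n" "$dt"
}
```

It could then be run with `hive -e "$(build_add_partition_sql)"` once Flume has written files for that day. Running `MSCK REPAIR TABLE flume.flume_into_hive;` in Hive is an alternative that picks up all dt=... directories at once.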

3. Create hive.log under /root/flume_hive

mkdir -p /root/flume_hive

vi /root/flume_hive/hive.log

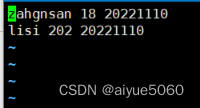

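Enter the data, one record per line, with the name and age separated by a space (the field delimiter declared in the table definition).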
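As an alternative to typing rows in vi, the file can be filled from the shell. This is only a sketch: the function name append_sample_rows and the three rows are made-up illustrations, not the data from the screenshot.

```shell
# Append a few space-delimited "name age" rows to a log file.
# append_sample_rows and the sample values are hypothetical.
append_sample_rows() {
    local log_file="$1"
    # Create the parent directory if it does not exist yet.
    mkdir -p "$(dirname "$log_file")"
    # printf reuses the format for each pair of arguments, so this writes
    # three lines, each with a single space between name and age.
    printf '%s %s\n' \
        "zhangsan" 20 \
        "lisi" 25 \
        "wangwu" 30 >> "$log_file"
}
```

Calling `append_sample_rows /root/flume_hive/hive.log` appends the rows to the file that the exec source tails, so they can also be used to push new test events while the agent is running.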

4. Create the configuration file flume-into-hive-1.conf under Flume's conf/ directory

cd /opt/software/flume

agent.sources=r1

agent.channels=c1

agent.sinks=s1

agent.sources.r1.type=exec

agent.sources.r1.command=tail -F /root/flume_hive/hive.log

agent.channels.c1.type=memory

agent.channels.c1.capacity=1000

agent.channels.c1.transactionCapacity=100

agent.sinks.s1.type=hdfs

agent.sinks.s1.hdfs.path= hdfs://node01:9000/user/hive/warehouse/flume.db/flume_into_hive/dt=%Y%m%d

agent.sinks.s1.hdfs.filePrefix = upload-

agent.sinks.s1.hdfs.fileSuffix=.txt

# Whether to roll directories by time (round the timestamp down)

agent.sinks.s1.hdfs.round = true

# Number of time units after which a new directory is created

agent.sinks.s1.hdfs.roundValue = 1

# Time unit used for rounding

agent.sinks.s1.hdfs.roundUnit = hour

# Whether to use the local timestamp

agent.sinks.s1.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

agent.sinks.s1.hdfs.batchSize = 100

# File type; compressed output is also supported

agent.sinks.s1.hdfs.fileType = DataStream

agent.sinks.s1.hdfs.writeFormat=Text

# How often, in seconds, to roll over to a new file

agent.sinks.s1.hdfs.rollInterval = 60

# Roll each file at a size of roughly 128 MB

agent.sinks.s1.hdfs.rollSize =
