Time windows

1. Tumbling windows

```java
package window;

import flink_Partition.WaterSensorMapFunction;
import flink_transfrom.WaterSensor;
import org.apache.commons.lang.time.DateFormatUtils;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.windowing.ProcessWindowFunction;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;
import org.apache.flink.util.Collector;

public class timeWindow {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        WindowedStream<WaterSensor, String, TimeWindow> sensorsWS = env
                .socketTextStream("hadoop102", 7777)
                .map(new WaterSensorMapFunction())
                .keyBy(r -> r.getId())
                // Tumbling processing-time window, 20 s long
                .window(TumblingProcessingTimeWindows.of(Time.seconds(20)));

        SingleOutputStreamOperator<String> process = sensorsWS.process(
                /*
                 * Type parameters:
                 *   1: input type
                 *   2: output type
                 *   3: key type
                 *   4: window type (time-based or count-based)
                 */
                new ProcessWindowFunction<WaterSensor, String, String, TimeWindow>() {
                    /*
                     * s:        the key of this group
                     * context:  the window context
                     * elements: all records buffered in this window
                     * out:      the collector used to emit results
                     */
                    @Override
                    public void process(String s, Context context, Iterable<WaterSensor> elements, Collector<String> out) throws Exception {
                        // The context exposes the window object, plus other things such as side outputs
                        long startTs = context.window().getStart();
                        long endTs = context.window().getEnd();
                        String windowStart = DateFormatUtils.format(startTs, "yyyy-MM-dd HH:mm:ss.SSS");
                        String windowEnd = DateFormatUtils.format(endTs, "yyyy-MM-dd HH:mm:ss.SSS");

                        // Count by iterating: spliterator().estimateSize() is only exact for Iterables that report their size
                        long count = 0;
                        for (WaterSensor ignored : elements) {
                            count++;
                        }

                        out.collect("key=" + s + " window [" + windowStart + ", " + windowEnd + ") contains " + count + " records ===> " + elements.toString());
                    }
                }
        );

        process.print();
        env.execute();
    }
}
```

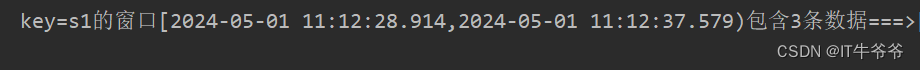

Each window spans 20 s; the windows are contiguous and never overlap in time.

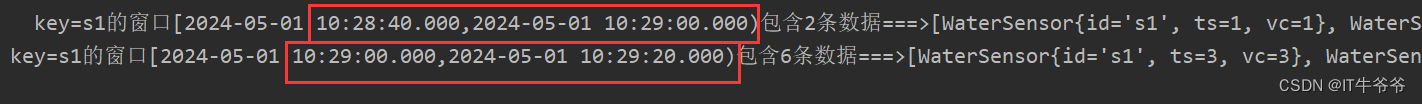

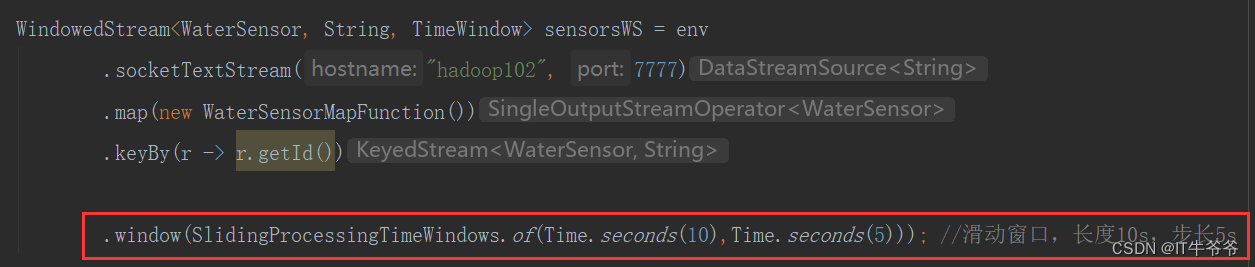

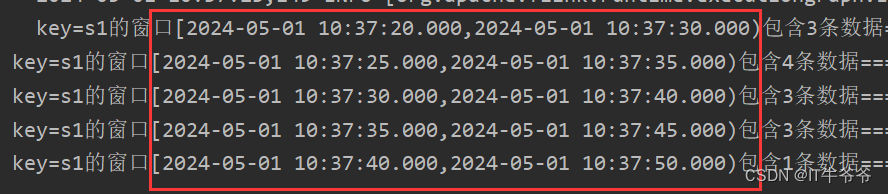

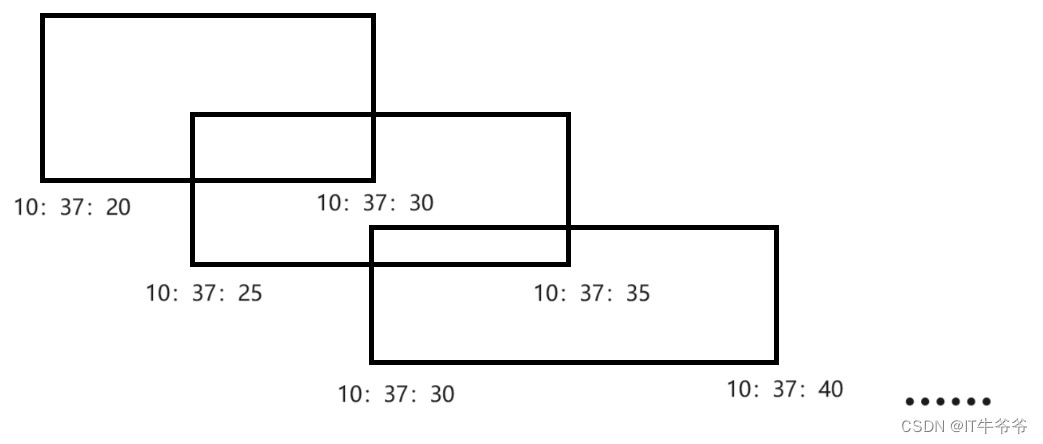
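To see why the windows line up as they do in the output above, here is a plain-Java model (no Flink needed) of how a tumbling window is chosen for a timestamp. It is meant to mirror the alignment Flink uses with an offset of 0, so windows start at multiples of the window size counted from the epoch. The class and method names are hypothetical.

```java
// Plain-Java model of tumbling-window assignment (hypothetical names, not Flink's API).
final class TumblingWindowModel {

    // Start of the tumbling window containing ts, aligned to the epoch (offset 0).
    static long windowStart(long ts, long size) {
        return ts - Math.floorMod(ts, size);
    }

    // Each timestamp maps to exactly one half-open window [start, start + size).
    static long[] assign(long ts, long size) {
        long start = windowStart(ts, size);
        return new long[] {start, start + size};
    }

    public static void main(String[] args) {
        long size = 20_000L; // 20 s, as in the example above
        for (long ts : new long[] {5_000L, 19_999L, 20_000L, 45_500L}) {
            long[] w = assign(ts, size);
            System.out.println(ts + " -> [" + w[0] + ", " + w[1] + ")");
        }
    }
}
```

Because the window is half-open, a record stamped exactly at 20 000 ms belongs to the second window, not the first; this matches the `[start, end)` notation printed by the `ProcessWindowFunction` above.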

2. Sliding windows

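Only the window assigner needs to change: pass `SlidingProcessingTimeWindows.of(size, slide)` to `.window(...)`.

Sliding windows overlap in time, so the data they contain overlaps as well: a record that falls in the overlapping part belongs to more than one window.

Sliding time window illustration: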

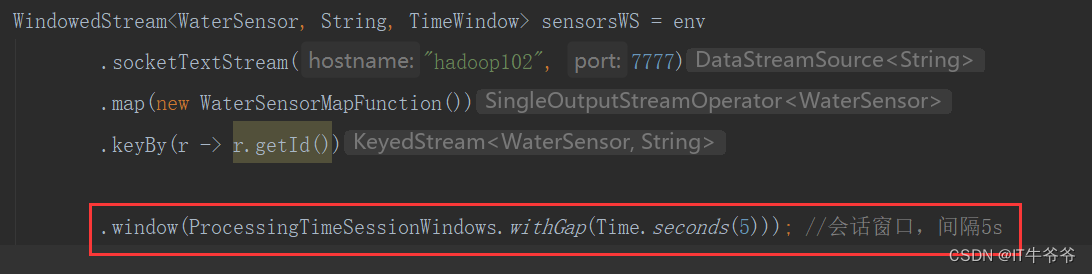
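The overlap can be made concrete with a small plain-Java model of sliding-window assignment: starting from the latest aligned window start at or before the timestamp, it steps back one slide at a time until the window no longer covers the timestamp. This is intended to follow the way Flink's sliding assigners enumerate windows, but it is a sketch with hypothetical names, and the 10 s size / 5 s slide are example values, not taken from the original.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java model of sliding-window assignment (hypothetical names, not Flink's API).
final class SlidingWindowModel {

    // Returns every window [start, start + size) that contains ts.
    static List<long[]> assign(long ts, long size, long slide) {
        List<long[]> windows = new ArrayList<>();
        // Latest window start at or before ts, aligned to the epoch (offset 0)
        long lastStart = ts - Math.floorMod(ts, slide);
        // Walk back one slide at a time while the window still covers ts
        for (long start = lastStart; start > ts - size; start -= slide) {
            windows.add(new long[] {start, start + size});
        }
        return windows;
    }

    public static void main(String[] args) {
        // Example values: 10 s windows that slide every 5 s
        for (long[] w : assign(12_000L, 10_000L, 5_000L)) {
            System.out.println("[" + w[0] + ", " + w[1] + ")");
        }
    }
}
```

With size / slide = 2, every record lands in exactly two windows, which is the overlap shown in the illustration. When the slide equals the size, the loop yields a single window and the model degenerates into a tumbling window.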

3. Session windows

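Only the window assigner needs to change: pass `ProcessingTimeSessionWindows.withGap(...)` to `.window(...)` (the example below uses a 5 s gap).

The most important parameter of a session window is the session timeout (the gap), i.e. the minimum distance between two session windows. If two consecutive records arrive less than the gap apart, the session is still alive and they belong to the same window; if they arrive more than the gap apart, the new record starts a new session window and the previous window closes.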

After 3 records are entered in quick succession and no data arrives for 5 s, the window ends:

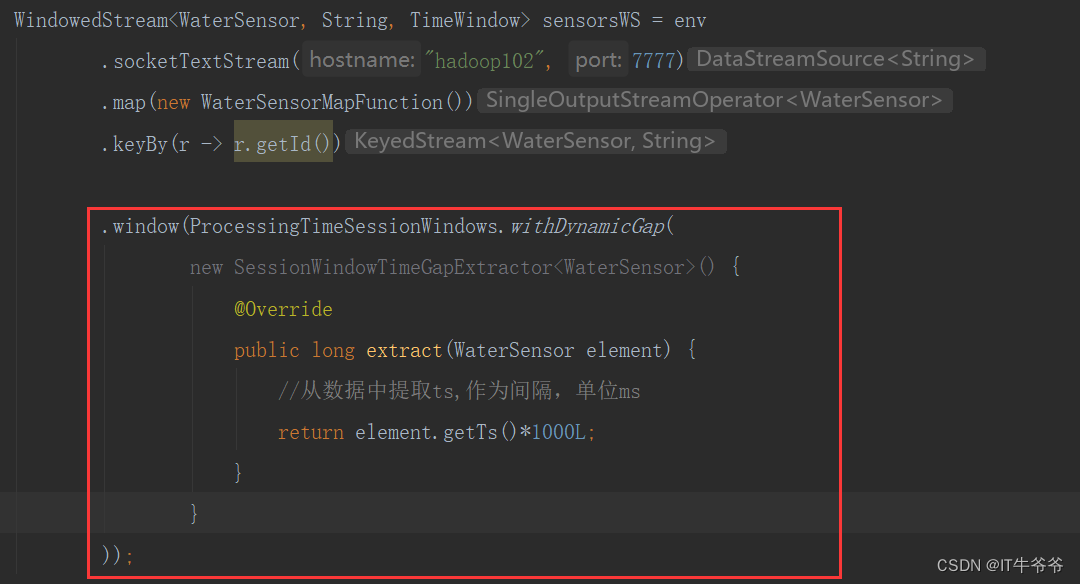
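The gap rule can be checked with a simplified plain-Java model: each record opens a tentative window `[t, t + gap)`, and windows that overlap are merged into one session. This follows the idea behind Flink's merging session windows but is only a sketch with hypothetical names; arrival times are assumed to be sorted, and the values below are made up to reproduce the scenario described above (three records in quick succession, then a pause longer than 5 s).

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of processing-time session windows (hypothetical names, not Flink's API).
final class SessionWindowModel {

    // Groups sorted arrival times into sessions; returns each session as [start, end).
    static List<long[]> sessions(long[] arrivals, long gap) {
        List<long[]> result = new ArrayList<>();
        long[] current = null;
        for (long t : arrivals) {
            if (current != null && t < current[1]) {
                // Arrived before the session timed out: extend the session
                current[1] = Math.max(current[1], t + gap);
            } else {
                // First record, or the gap has elapsed: start a new session
                current = new long[] {t, t + gap};
                result.add(current);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        long gap = 5_000L; // 5 s session gap
        long[] arrivals = {1_000L, 2_000L, 3_500L, 12_000L};
        for (long[] s : sessions(arrivals, gap)) {
            System.out.println("session [" + s[0] + ", " + s[1] + ")");
        }
    }
}
```

The first session ends at 8 500 ms, 5 s after its last record, and the record at 12 000 ms opens a new session.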

The gap can also be determined dynamically, i.e. the timeout may change from record to record:

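```java
// ProcessingTimeSessionWindows and SessionWindowTimeGapExtractor live in
// org.apache.flink.streaming.api.windowing.assigners
.window(ProcessingTimeSessionWindows.withDynamicGap(
        new SessionWindowTimeGapExtractor<WaterSensor>() {
            @Override
            public long extract(WaterSensor element) {
                // Use the record's ts field as the gap; the gap must be in ms, so ts is multiplied by 1000
                return element.getTs() * 1000L;
            }
        }
));
```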
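With a dynamic gap, the only change to the session model is that each record brings its own gap. The sketch below is a hypothetical plain-Java illustration, not Flink's implementation: each record is an arrival time in ms plus a `ts` field in seconds, the gap is `ts * 1000` as in the extractor above, and overlapping windows are merged. Taking the later end when merging means a record with a short gap never shortens a session that an earlier record already extended.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of session windows with a per-record gap (hypothetical names, not Flink's API).
final class DynamicGapSessionModel {

    // A hypothetical record: processing-time arrival (ms) and its ts field (s).
    static final class Reading {
        final long arrivalMs;
        final long tsSeconds;

        Reading(long arrivalMs, long tsSeconds) {
            this.arrivalMs = arrivalMs;
            this.tsSeconds = tsSeconds;
        }
    }

    // Same rule as the extractor above: use ts as the gap, converted from s to ms.
    static long gapOf(Reading r) {
        return r.tsSeconds * 1000L;
    }

    // Groups readings (sorted by arrival) into sessions, each returned as [start, end).
    static List<long[]> sessions(List<Reading> readings) {
        List<long[]> result = new ArrayList<>();
        long[] current = null;
        for (Reading r : readings) {
            long start = r.arrivalMs;
            long end = start + gapOf(r);
            if (current != null && start < current[1]) {
                // Overlaps the open session: merge, keeping the later end
                current[1] = Math.max(current[1], end);
            } else {
                // No open session, or it already timed out: start a new one
                current = new long[] {start, end};
                result.add(current);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Reading> readings = List.of(
                new Reading(0L, 10L),     // opens a session lasting until 10 000 ms
                new Reading(4_000L, 1L),  // short gap, but the session still ends at 10 000 ms
                new Reading(11_000L, 2L)  // arrives after 10 000 ms: new session
        );
        for (long[] s : sessions(readings)) {
            System.out.println("session [" + s[0] + ", " + s[1] + ")");
        }
    }
}
```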

Count windows

1. Tumbling windows

package window;

import flink_Partition.WaterSensorMapFunction;

import flink_transfrom.WaterSensor;

import org.apache.flink.streaming.api.datastream.KeyedStream;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.datastream.WindowedStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.windowing.ProcessWindowFunction;

import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.streaming.api.windowing.windows.GlobalWindow;

import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

import org.apache.flink.util.Collector;

public class countWindow {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setParallelism(1);

KeyedStream<WaterSensor, String> sensorsKS = env

.socketTextStream("hadoop102", 7777)

.map(new WaterSensorMapFunction())

.keyBy(r -> r.getId());

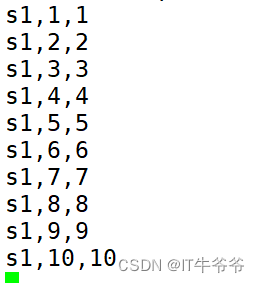

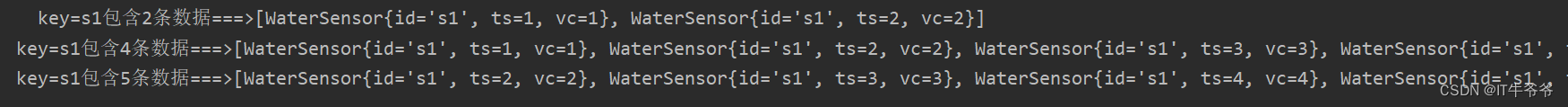

WindowedStream<WaterSensor, String, GlobalWindow> sensorsWS = sensorsKS.countWindow(5); //滚动窗口,窗口长度5条数据

SingleOutputStreamOperator<String> result = sensorsWS.process(

new ProcessWindowFunction<WaterSensor, String, String, GlobalWindow>() {

@Override

public void process(String s, Context context, Iterable<WaterSensor> elements, Collector<String> out) throws Exception {

long count = elements.spliterator().estimateSize();

out.collect("key=" + s + "包含" + count + "条数据===>" + elements.toString());

}

}

);

result.print();

env.execute();

}

}

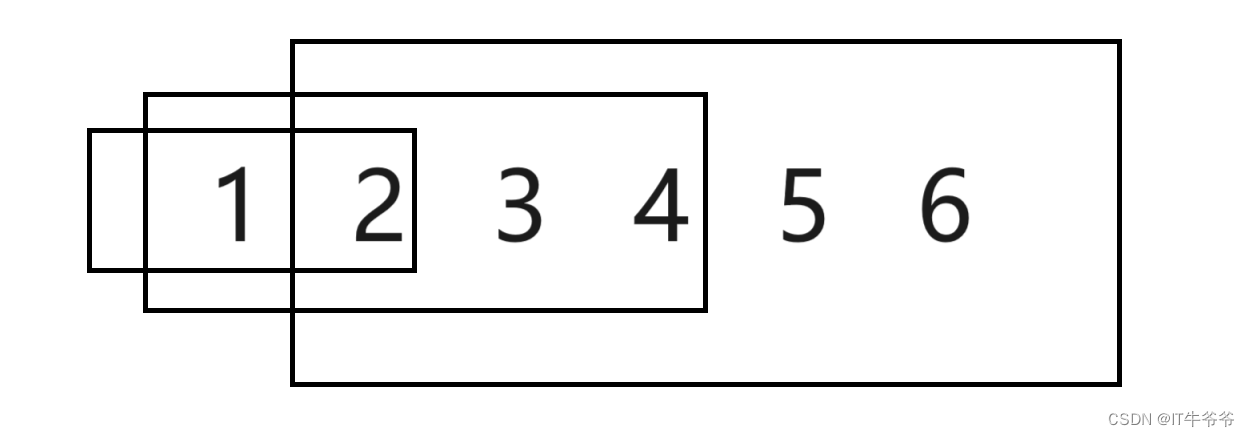
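`countWindow(5)` has no time component: per key, records are buffered, and the window fires and is cleared as soon as the 5th record arrives (in Flink this is a global window with a purging count trigger). The plain-Java model below, with hypothetical names, sketches that behaviour. Note that a key that never reaches 5 records never has its partial buffer emitted.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java model of a keyed tumbling count window (hypothetical names, not Flink's API).
final class TumblingCountWindowModel {

    private final int size;
    private final Map<String, List<Integer>> buffers = new HashMap<>();
    private final List<String> fired = new ArrayList<>();

    TumblingCountWindowModel(int size) {
        this.size = size;
    }

    // Buffers the value under its key; fires and purges once the buffer holds `size` values.
    void add(String key, int value) {
        List<Integer> buffer = buffers.computeIfAbsent(key, k -> new ArrayList<>());
        buffer.add(value);
        if (buffer.size() == size) {
            fired.add("key=" + key + " " + buffer);
            buffer.clear(); // purge: the next window for this key starts empty
        }
    }

    List<String> fired() {
        return fired;
    }

    public static void main(String[] args) {
        TumblingCountWindowModel model = new TumblingCountWindowModel(5);
        for (int i = 1; i <= 12; i++) {
            model.add("s1", i);
        }
        model.add("s2", 100); // s2 has only one record, so it never fires
        model.fired().forEach(System.out::println);
    }
}
```

Records 11 and 12 of key `s1` stay buffered until three more `s1` records arrive.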

2. Sliding windows

```java
package window;

import flink_Partition.WaterSensorMapFunction;
import flink_transfrom.WaterSensor;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.windowing.ProcessWindowFunction;
import org.apache.flink.streaming.api.windowing.windows.GlobalWindow;
import org.apache.flink.util.Collector;

public class countWindow {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        KeyedStream<WaterSensor, String> sensorsKS = env
                .socketTextStream("hadoop102", 7777)
                .map(new WaterSensorMapFunction())
                .keyBy(r -> r.getId());

        // Sliding count window: 5 records per window, sliding by 2 records
        WindowedStream<WaterSensor, String, GlobalWindow> sensorsWS = sensorsKS.countWindow(5, 2);

        SingleOutputStreamOperator<String> result = sensorsWS.process(
                new ProcessWindowFunction<WaterSensor, String, String, GlobalWindow>() {
                    @Override
                    public void process(String s, Context context, Iterable<WaterSensor> elements, Collector<String> out) throws Exception {
                        // Count by iterating: spliterator().estimateSize() is only exact for Iterables that report their size
                        long count = 0;
                        for (WaterSensor ignored : elements) {
                            count++;
                        }
                        out.collect("key=" + s + " contains " + count + " records ===> " + elements.toString());
                    }
                }
        );

        result.print();
        env.execute();
    }
}
```

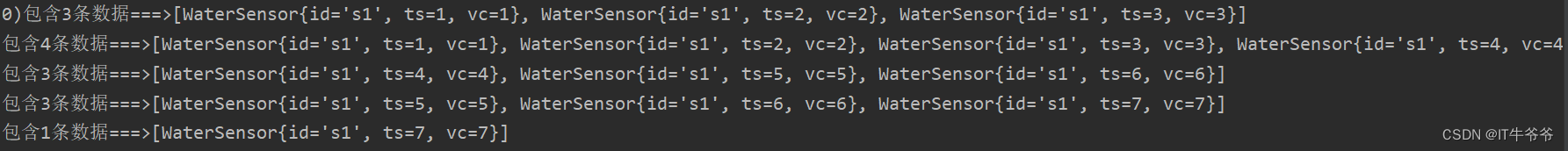

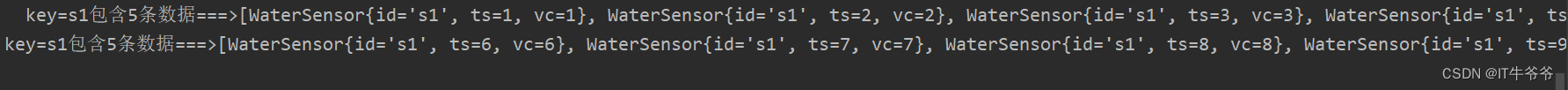

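Count sliding window illustration:

If the results seem hard to follow, remember one rule: every time a key receives 2 new records (one slide), one window fires and emits output. Each output contains that key's most recent records, at most 5, which is why the first outputs hold only 2 and then 4 records.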
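To check the rule above, here is a plain-Java model of `countWindow(5, 2)` for a single key. In Flink this call is built from a global window with a count evictor that keeps the last 5 records and a count trigger that fires every 2 records; the sketch below reproduces that behaviour without Flink, using hypothetical names.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Plain-Java model of a sliding count window for one key (hypothetical names, not Flink's API).
final class SlidingCountWindowModel {

    private final int size;
    private final int slide;
    private final Deque<Integer> buffer = new ArrayDeque<>();
    private final List<List<Integer>> fired = new ArrayList<>();
    private int sinceLastFire = 0;

    SlidingCountWindowModel(int size, int slide) {
        this.size = size;
        this.slide = slide;
    }

    void add(int value) {
        buffer.addLast(value);
        sinceLastFire++;
        if (sinceLastFire == slide) {
            // Evict the oldest records so that at most `size` remain, then emit a copy
            while (buffer.size() > size) {
                buffer.removeFirst();
            }
            fired.add(new ArrayList<>(buffer));
            sinceLastFire = 0;
        }
    }

    List<List<Integer>> fired() {
        return fired;
    }

    public static void main(String[] args) {
        SlidingCountWindowModel model = new SlidingCountWindowModel(5, 2);
        for (int i = 1; i <= 8; i++) {
            model.add(i);
        }
        model.fired().forEach(System.out::println);
    }
}
```

Running it prints `[1, 2]`, `[1, 2, 3, 4]`, `[2, 3, 4, 5, 6]` and `[4, 5, 6, 7, 8]`: one output per 2 records, never more than 5 records each.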
