Memcached

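Memcached is an open-source, high-performance, distributed in-memory object caching system. It is typically used to speed up dynamic web applications by reducing database load. Each cached item can be given an expiration time, after which it is removed. Keeping user session data in memory this way also improves web application performance.

Installation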

[root@rocky /data/webapps1/ROOT]$ dnf install memcached

[root@t1 ~]#cat /etc/sysconfig/memcached

PORT="11211" # listening port

USER="memcached" # user the service runs as

MAXCONN="1024" # maximum number of connections

CACHESIZE="64" # maximum memory to use, in MB

OPTIONS="-l 127.0.0.1,::1" # additional options

[root@rocky /usr/lib/systemd/system]$ systemctl enable --now memcached

# The listening port is 11211

[root@rocky /usr/lib/systemd/system]$ ss -ntlp |grep memcached

LISTEN 0 1024 127.0.0.1:11211 0.0.0.0:* users:(("memcached",pid=38686,fd=26))

LISTEN 0 1024 [::1]:11211 [::]:* users:(("memcached",pid=38686,fd=27))

# Change the listen address from loopback to the host's IP so that other hosts can connect

[root@rocky /usr/lib/systemd/system]$ cat /etc/sysconfig/memcached

PORT="11211"

USER="memcached"

MAXCONN="1024"

CACHESIZE="64"

OPTIONS="-l 10.0.0.151"

#OPTIONS="-l 127.0.0.1,::1"

Notes

Common memcached options

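-u username  user that memcached runs as; must be an unprivileged user

-p  port to bind to, default 11211

-m num  maximum memory in MB, default 64 MB

-c num  maximum number of connections, default 1024

-d  run as a daemon

-f  growth factor, default 1.25

-v  verbose output; -vv prints even more detail

-M  use memory until it is exhausted and return an error instead of evicting items (no LRU eviction)

-U  UDP listening port; 0 disables UDP

[root@rocky /usr/lib/systemd/system]$ memcached -u memcached -p 11211 -f 2 -vv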

slab class 1: chunk size 96 perslab 10922

slab class 2: chunk size 192 perslab 5461

slab class 3: chunk size 384 perslab 2730

slab class 4: chunk size 768 perslab 1365

slab class 5: chunk size 1536 perslab 682

slab class 6: chunk size 3072 perslab 341

slab class 7: chunk size 6144 perslab 170

slab class 8: chunk size 12288 perslab 85

slab class 9: chunk size 24576 perslab 42

slab class 10: chunk size 49152 perslab 21

slab class 11: chunk size 98304 perslab 10

slab class 12: chunk size 196608 perslab 5

slab class 13: chunk size 524288 perslab 2

<26 server listening (auto-negotiate)
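The listing above follows a simple pattern: each chunk size is the previous one multiplied by the growth factor (-f), and perslab is the number of chunks that fit in one slab page. The sketch below is a simplified model of that pattern, not memcached's actual code. The 96-byte starting chunk, the 1 MiB page and the 512 KiB largest chunk are assumptions read off the output above, and the 8-byte alignment is likewise an assumption.

```python
def slab_classes(factor, base_chunk=96, page_size=1024 * 1024, chunk_max=512 * 1024):
    """Return a list of (chunk_size, chunks_per_page) tuples.

    Simplified model: the default constants are assumptions taken from the
    `memcached -vv` listing, not from memcached's source code.
    """
    classes = []
    size = base_chunk
    # Keep growing until the next class would exceed the largest chunk.
    while size <= chunk_max / factor:
        if size % 8:
            size += 8 - size % 8  # assumed 8-byte alignment
        classes.append((size, page_size // size))
        size = int(size * factor)
    # The last class always uses the largest chunk size.
    classes.append((chunk_max, page_size // chunk_max))
    return classes


for number, (size, perslab) in enumerate(slab_classes(2), start=1):
    print(f"slab class {number}: chunk size {size} perslab {perslab}")
```

With a factor of 2 the model yields 13 classes, from 96 bytes up to 524288 bytes. A smaller factor such as the default 1.25 yields many more, finer-grained classes, which wastes less memory per item.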

Usage

# Check memcached status

[root@rocky /usr/lib/systemd/system]$ memstat --servers=10.0.0.151

The five basic commands

set

add

replace

get

delete

# The first three commands are the standard commands for modifying key-value pairs stored in memcached. They all use the following syntax:

command <key> <flags> <expiration time> <bytes>

<value>

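# The parameters are:

command  set/add/replace

key  the key used to look up the cached value

flags  an integer stored with the key-value pair; clients use it to keep extra information about the pair

expiration time  how long the key-value pair is kept in the cache (in seconds; 0 means forever)

bytes  number of bytes in the stored value

value  the value to store (always on the second line)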
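The syntax above can be sketched as a small helper that builds the bytes a client sends for set, add or replace. The function name build_storage_command is hypothetical; the point is that the bytes field is computed from the encoded value rather than typed by hand, because a mismatch between the declared and the actual length makes the server reject the command.

```python
def build_storage_command(command, key, value, flags=0, exptime=0):
    """Build a text-protocol storage command: a header line, then the value.

    Hypothetical helper for illustration; it only formats bytes and does not
    talk to a server.
    """
    if command not in ("set", "add", "replace"):
        raise ValueError(f"unsupported command: {command}")
    data = value.encode("utf-8") if isinstance(value, str) else bytes(value)
    # <bytes> is the length of the encoded value, not the character count.
    header = f"{command} {key} {flags} {exptime} {len(data)}\r\n".encode("ascii")
    return header + data + b"\r\n"


print(build_storage_command("add", "mykey", "test", flags=1, exptime=60))
```

Each line is terminated with \r\n, which telnet sends automatically when Enter is pressed.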

# Add a key: exptime is in seconds and bytes is the length of the stored data in bytes

add key flags exptime bytes

Connect to memcached:

[root@rocky /usr/lib/systemd/system]$ telnet localhost 11211

Trying ::1...

Connected to localhost.

Escape character is '^]'.

stats

STAT pid 41063

STAT uptime 137

STAT time 1711626155

STAT version 1.5.22

STAT libevent 2.1.8-stable

STAT pointer_size 64

STAT rusage_user 0.009017

STAT rusage_system 0.015165

STAT max_connections 1024

STAT curr_connections 2

STAT total_connections 4

STAT rejected_connections 0

STAT connection_structures 3

STAT reserved_fds 20

STAT cmd_get 0

STAT cmd_set 0

STAT cmd_flush 0

STAT cmd_touch 0

STAT cmd_meta 0

STAT get_hits 0

STAT get_misses 0

STAT get_expired 0

STAT get_flushed 0

STAT delete_misses 0

STAT delete_hits 0

STAT incr_misses 0

STAT incr_hits 0

STAT decr_misses 0

STAT decr_hits 0

STAT cas_misses 0

STAT cas_hits 0

STAT cas_badval 0

STAT touch_hits 0

STAT touch_misses 0

STAT auth_cmds 0

STAT auth_errors 0

STAT bytes_read 53

STAT bytes_written 63

STAT limit_maxbytes 67108864

STAT accepting_conns 1

STAT listen_disabled_num 0

STAT time_in_listen_disabled_us 0

STAT threads 4

STAT conn_yields 0

STAT hash_power_level 16

STAT hash_bytes 524288

STAT hash_is_expanding 0

STAT slab_reassign_rescues 0

STAT slab_reassign_chunk_rescues 0

STAT slab_reassign_evictions_nomem 0

STAT slab_reassign_inline_reclaim 0

STAT slab_reassign_busy_items 0

STAT slab_reassign_busy_deletes 0

STAT slab_reassign_running 0

STAT slabs_moved 0

STAT lru_crawler_running 0

STAT lru_crawler_starts 510

STAT lru_maintainer_juggles 187

STAT malloc_fails 0

STAT log_worker_dropped 0

STAT log_worker_written 0

STAT log_watcher_skipped 0

STAT log_watcher_sent 0

STAT bytes 0

STAT curr_items 0

STAT total_items 0

STAT slab_global_page_pool 0

STAT expired_unfetched 0

STAT evicted_unfetched 0

STAT evicted_active 0

STAT evictions 0

STAT reclaimed 0

STAT crawler_reclaimed 0

STAT crawler_items_checked 0

STAT lrutail_reflocked 0

STAT moves_to_cold 0

STAT moves_to_warm 0

STAT moves_within_lru 0

STAT direct_reclaims 0

STAT lru_bumps_dropped 0

END

# Add a key

add mykey 1 60 4

# The value (4 bytes)

test

STORED

# Read it back

get mykey

VALUE mykey 1 4

test

END

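# Add a key that expires after 5 seconds; the byte count must match the length of the value

add aa 1 5 4

tese

get aa

get mykey

# Flush (invalidate) all items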

flush_all

quit
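The STAT lines printed in the session above are easier to work with once parsed into a dictionary. The following is a minimal sketch; parse_stats and hit_ratio are hypothetical helper names, and the sample text is a shortened copy of the output above.

```python
def parse_stats(text):
    """Turn 'STAT <name> <value>' lines into a dict of strings."""
    stats = {}
    for line in text.splitlines():
        parts = line.split(None, 2)
        if len(parts) == 3 and parts[0] == "STAT":
            stats[parts[1]] = parts[2]
    return stats


def hit_ratio(stats):
    """Return get_hits / (get_hits + get_misses), or None if there were no gets."""
    hits = int(stats.get("get_hits", 0))
    misses = int(stats.get("get_misses", 0))
    total = hits + misses
    return hits / total if total else None


sample = """STAT cmd_get 0
STAT get_hits 0
STAT get_misses 0
STAT limit_maxbytes 67108864
END"""

stats = parse_stats(sample)
# limit_maxbytes is CACHESIZE expressed in bytes.
print(int(stats["limit_maxbytes"]) // (1024 * 1024), "MB limit")
print("hit ratio:", hit_ratio(stats))
```

A hit ratio that stays low after warm-up usually means keys expire or are evicted before they are read again.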

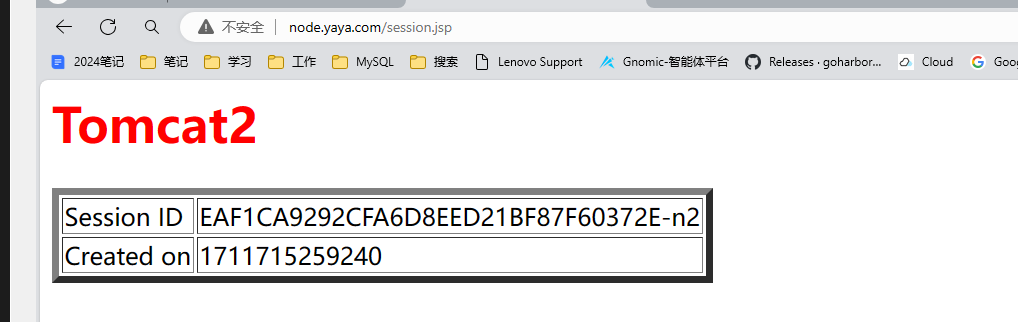

Tomcat Session Replication Cluster

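No matter which backend host a request is dispatched to, the user's session information must remain available. Otherwise the user would have to log in again whenever a request lands on a different machine. To keep logins on the front end unaffected, the backend machines have to share sessions.

Sharing sessions between two hosts

Both machines need the following configuration inside the Host element of server.xml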

<host>

<Cluster className="org.apache.catalina.ha.tcp.SimpleTcpCluster"

channelSendOptions="8">

<Manager className="org.apache.catalina.ha.session.DeltaManager"

expireSessionsOnShutdown="false"

notifyListenersOnReplication="true"/>

<Channel className="org.apache.catalina.tribes.group.GroupChannel">

<Membership className="org.apache.catalina.tribes.membership.McastService"

address="230.100.100.100" #multicast address

port="45564"

frequency="500"

dropTime="3000"/>

<Receiver className="org.apache.catalina.tribes.transport.nio.NioReceiver"

address="10.0.0.150" #this host's IP

port="4000"

autoBind="100"

selectorTimeout="5000"

maxThreads="6"/>

<Sender

className="org.apache.catalina.tribes.transport.ReplicationTransmitter">

<Transport

className="org.apache.catalina.tribes.transport.nio.PooledParallelSender"/>

</Sender>

<Interceptor

className="org.apache.catalina.tribes.group.interceptors.TcpFailureDetector"/>

<Interceptor

className="org.apache.catalina.tribes.group.interceptors.MessageDispatchInterceptor"/>

</Channel>

<Valve className="org.apache.catalina.ha.tcp.ReplicationValve"

filter=""/>

<Valve className="org.apache.catalina.ha.session.JvmRouteBinderValve"/>

<Deployer className="org.apache.catalina.ha.deploy.FarmWarDeployer"

tempDir="/tmp/war-temp/"

deployDir="/tmp/war-deploy/"

watchDir="/tmp/war-listen/"

watchEnabled="false"/>

<ClusterListener

className="org.apache.catalina.ha.session.ClusterSessionListener"/>

</Cluster>

#Add the lines above

</host>

[root@t1 ~]#systemctl restart tomcat

On every Tomcat host, add the <distributable/> child element to the <web-app> element of the application's web.xml to enable distributed mode for that application.

[root@t1 ~]#tail -n3 /data/webapps/ROOT/WEB-INF/web.xml

</description>

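<distributable/> #add this line

</web-app>

Restart all Tomcat instances. Requests that the load balancer dispatches to different nodes now return the same SessionID. Open the page in a browser and refresh several times: the SessionID stays the same while the backend host rotates in round-robin fashion. The drawback is that every node keeps a copy of every session, so memory usage grows with the number of backend Tomcat hosts. This approach is not suitable for deployments with many backend servers.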

Session sharing server

There are several ways to implement a session sharing server.

#Supports Memcached and Redis

https://github.com/magro/memcached-session-manager #2018

#Supports Redis only

https://github.com/redisson/redisson #20240301

https://github.com/ran-jit/tomcat-cluster-redis-session-manager #2020

MSM (memcached session manager) stores Tomcat sessions in memcached or Redis, which makes high availability possible.

Prepare the following packages in advance

kryo-3.0.3.jar

asm-5.2.jar

objenesis-2.6.jar

reflectasm-1.11.9.jar

minlog-1.3.1.jar

kryo-serializers-0.45.jar

msm-kryo-serializer-2.3.2.jar

memcached-session-manager-tc8-2.3.2.jar

spymemcached-2.12.3.jar

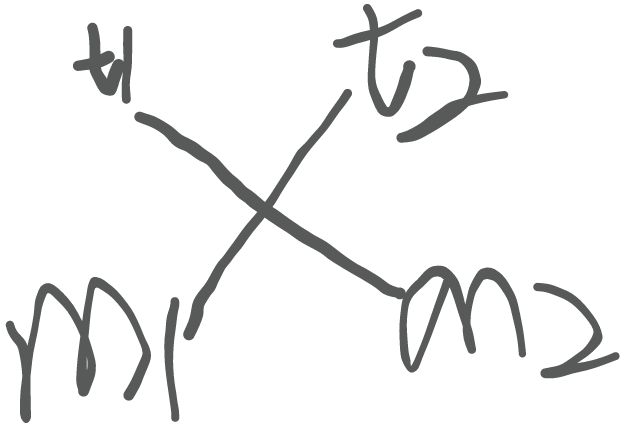

memcached-session-manager-2.3.2.jar

Sticky mode

In sticky mode, each front-end Tomcat is associated with (sticky to) a back-end memcached.

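t1 and m1 can be deployed on the same host, and so can t2 and m2. When a new user's request reaches Tomcat1, Tomcat1 creates the session, returns it to the user, and at the same time sends a copy to memcached2 as a backup. Tomcat1 holds the primary session and memcached2 holds the backup session, so memcached effectively keeps a second copy of each session. If Tomcat1 finds that memcached2 has failed and the session cannot be backed up there, it stores the backup in memcached1 instead.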
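The selection rule described above can be modelled in a few lines. This is a simplified sketch of the described behaviour, not MSM's implementation. The function pick_backup_node is hypothetical, and m1 and m2 are the memcached instances named in the paragraph, seen from Tomcat1, which treats its co-located m1 as the failover node.

```python
def pick_backup_node(nodes, failover_nodes, alive):
    """Pick where a Tomcat stores the backup copy of a session.

    nodes          -- all configured memcached nodes, in order
    failover_nodes -- nodes used only when no regular node is reachable
    alive          -- nodes that are currently reachable
    """
    # Prefer a reachable node that is not marked as failover.
    regular = [n for n in nodes if n not in failover_nodes and n in alive]
    if regular:
        return regular[0]
    # Otherwise fall back to a reachable failover node, if any.
    fallback = [n for n in nodes if n in failover_nodes and n in alive]
    return fallback[0] if fallback else None


nodes = ["m1", "m2"]
print(pick_backup_node(nodes, {"m1"}, alive={"m1", "m2"}))  # m2
print(pick_backup_node(nodes, {"m1"}, alive={"m1"}))        # m1
```

Keeping the backup on the other host means that losing one machine never takes out both the primary session in Tomcat and its backup copy in memcached.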

Edit the memcached configuration file

[root@rocky ~]$ cat /etc/sysconfig/memcached

PORT="11211"

USER="memcached"

MAXCONN="1024"

CACHESIZE="64"

OPTIONS=""

#OPTIONS="-l 127.0.0.1,::1"

Edit the Tomcat configuration file (context.xml)

<Manager pathname="" />

-->

###Add the following before the last line, that is, before the </Context> line

<Manager className="de.javakaffee.web.msm.MemcachedBackupSessionManager"

memcachedNodes="n1:10.0.0.151:11211,n2:10.0.0.150:11211"

failoverNodes="n1"

requestUriIgnorePattern=".*\.(ico|png|gif|jpg|css|js)$"

transcoderFactoryClass="de.javakaffee.web.msm.serializer.kryo.KryoTranscoderFactory"

/>

</Context> #last line

The only difference between tomcat1 and tomcat2 is that failoverNodes="n1" becomes failoverNodes="n2".
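Because the two hosts differ in a single attribute, the Manager element can be generated from one template to avoid copy-and-paste mistakes. The snippet below is only a sketch. render_manager is a hypothetical helper, and the node list and attribute values are copied from the configuration above.

```python
NODES = "n1:10.0.0.151:11211,n2:10.0.0.150:11211"

MANAGER_TEMPLATE = '''<Manager className="de.javakaffee.web.msm.MemcachedBackupSessionManager"
  memcachedNodes="{nodes}"
  failoverNodes="{failover}"
  requestUriIgnorePattern=".*\\.(ico|png|gif|jpg|css|js)$"
  transcoderFactoryClass="de.javakaffee.web.msm.serializer.kryo.KryoTranscoderFactory"
/>'''


def render_manager(failover):
    """Return the Manager element for one Tomcat host.

    failover is the memcached node id that this Tomcat uses only as a
    fallback, for example "n1" on tomcat1 and "n2" on tomcat2.
    """
    return MANAGER_TEMPLATE.format(nodes=NODES, failover=failover)


for host, failover in (("tomcat1", "n1"), ("tomcat2", "n2")):
    print(f"# {host}")
    print(render_manager(failover))
```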

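Then restart the services. The SessionID now stays the same across requests; only the page, that is, the backend host serving it, changes.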
