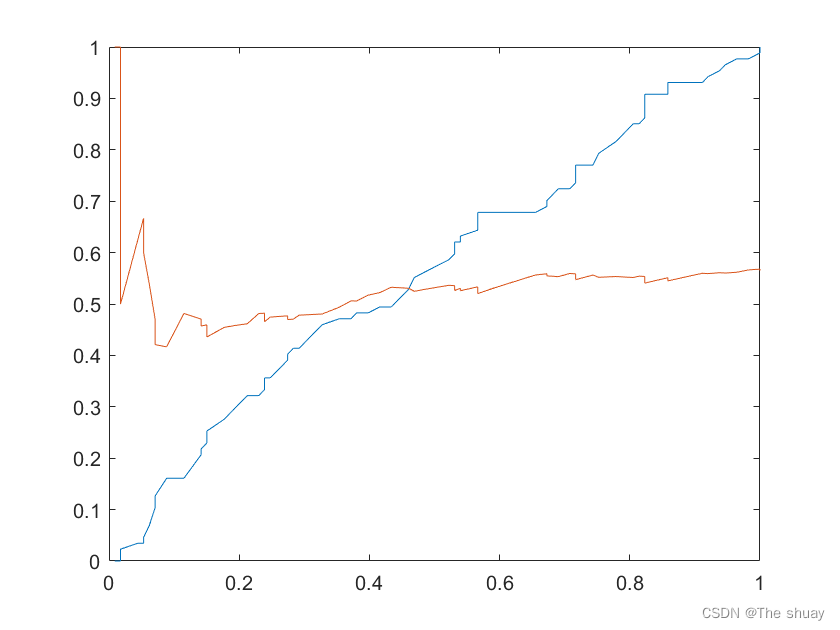

PR curve and ROC curve

clear all

clc

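data=xlsread("C:\Users\19680\Desktop\作业1数据.xlsx"); % column 2: true label (0/1), column 3: predicted score

n=size(data,1); % number of samples

t=0; % t indexes the points recorded for the ROC and PR curves

for j=1:-0.01:0 % j is the decision threshold

% reset the confusion-matrix counts for this threshold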

TP=0;

FP=0;

TN=0;

FN=0;

t=t+1;

for i=1:n % i iterates over the samples (1 to 200 in this dataset)

if data(i,2)==1&&data(i,3)>=j

TP=TP+1;

elseif data(i,2)==0&&data(i,3)>=j

FP=FP+1;

elseif data(i,2)==0&&data(i,3)<j

TN=TN+1;

elseif data(i,2)==1&&data(i,3)<j

FN=FN+1;

end

end

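p(t)=TP/(TP+FP); % precision (NaN when nothing is predicted positive)

r(t)=TP/(TP+FN); % recall

c(t)=TP/(TP+FN); % true positive rate (same quantity as recall)

z(t)=FP/(FP+TN); % false positive rate

end

plot(z,c); % ROC curve: false positive rate on the x-axis, true positive rate on the y-axis

hold on

plot(r,p); % PR curve: recall on the x-axis, precision on the y-axis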
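As a cross-check on the loop above, the same counts can be computed without the inner loop by comparing the whole score column against each threshold at once. The sketch below is only an illustration under stated assumptions: the labels and scores are a small made-up sample (not the homework spreadsheet), and the names `labels`, `scores`, `thr`, `tpr`, `fpr`, `prec` and `auc` are hypothetical. Setting precision to 0 when nothing is predicted positive is one possible convention, chosen here to avoid NaN.

```matlab
% Toy data, made up for illustration (not the homework spreadsheet)
labels = [1 1 0 1 0 0 1 0]';                   % ground truth, 1 = positive
scores = [0.9 0.8 0.7 0.6 0.55 0.4 0.3 0.1]';  % predicted scores

thr  = 1:-0.01:0;                 % same threshold grid as the loop above
nT   = numel(thr);
tpr  = zeros(1,nT);
fpr  = zeros(1,nT);
prec = zeros(1,nT);

for t = 1:nT
    pred = scores >= thr(t);      % predicted positive at this threshold
    TP = sum(pred  & labels==1);
    FP = sum(pred  & labels==0);
    FN = sum(~pred & labels==1);
    TN = sum(~pred & labels==0);
    tpr(t)  = TP/(TP+FN);         % recall, also the true positive rate
    fpr(t)  = FP/(FP+TN);         % false positive rate
    prec(t) = TP/max(TP+FP,1);    % precision; 0 when nothing is predicted positive
end

auc = trapz(fpr, tpr);            % area under the ROC curve (trapezoid rule)
disp(auc)
```

Calling `plot(fpr,tpr)` then draws the ROC curve with the false positive rate on the horizontal axis, and `plot(tpr,prec)` draws the PR curve.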

Gradient descent and AdaGrad

clear all

clc

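x0=[-2 2]; % starting point

f0=yuanhanshu(x0);

g0=tidu(x0);

lr=0.001; % learning rate (step size)

n=20000; % number of iterations

N=zeros(n,2); % iterates, one row per step

M=zeros(n,1); % function values, one per step

for i=1:n

x1=x0-lr*g0; % gradient descent step

g1=tidu(x1);

f1=yuanhanshu(x1);

f0=f1;

x0=x1;

g0=g1;

N(i,:) = x0;

M(i,:) = f0;

end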

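disp(x0) % final iterate

disp(f0) % final function value

plot(N,M) % plots f against each coordinate of x along the path

% Objective: the Rosenbrock function (assumed to be saved in its own file, yuanhanshu.m)

function f=yuanhanshu(x)

f=(1-x(1))^2+100*(x(2)-x(1)^2)^2;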

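% Gradient of yuanhanshu (assumed to be saved in its own file, tidu.m)

function g=tidu(x)

t1=4*100*x(1)^3+(2-4*100*x(2))*x(1)-2; % partial derivative with respect to x(1)

t2=2*100*(x(2)-x(1)^2); % partial derivative with respect to x(2)

g=[t1 t2];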
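A quick way to gain confidence in a hand-derived gradient like `tidu` is to compare it with a central finite-difference approximation. The following is a minimal sketch, assuming the objective and gradient are restated as anonymous functions so the snippet runs on its own: `f` and `g` mirror `yuanhanshu` and `tidu`, while `h`, `gnum` and `relerr` are hypothetical names and the step `h=1e-6` is just a typical choice.

```matlab
% Same formulas as yuanhanshu and tidu, restated as anonymous functions
f = @(x) (1-x(1))^2 + 100*(x(2)-x(1)^2)^2;
g = @(x) [4*100*x(1)^3 + (2-4*100*x(2))*x(1) - 2, 2*100*(x(2)-x(1)^2)];

x = [-2 2];                  % test point (the starting point used in the scripts)
h = 1e-6;                    % finite-difference step (assumed value)
gnum = zeros(1,2);
for k = 1:2
    e = zeros(1,2);
    e(k) = h;                % perturb only coordinate k
    gnum(k) = (f(x+e) - f(x-e)) / (2*h);   % central difference
end

relerr = norm(gnum - g(x)) / norm(g(x));   % relative gap between the two gradients
disp(relerr)                 % a small value suggests the analytic gradient is consistent
```

If `relerr` came out large at several test points, that would point to a mistake in the derivative formulas rather than in the optimizer.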

AdaGrad method

clear all

clc

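% "AdaGrad" attempt: the only change from plain gradient descent is a decay factor on the learning rate

% (a "learning rate for the learning rate"); strictly speaking this is exponential decay, not AdaGrad

x0=[-2 2]; % starting point

f0=yuanhanshu(x0);

g0=tidu(x0);

lr=0.001; % learning rate

llr=0.99; % decay factor applied to the learning rate each iteration

n=20000; % number of iterations

N=zeros(n,2); % iterates, one row per step

M=zeros(n,1); % function values, one per step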

for i=1:n

x1=x0-lr*g0;

g1=tidu(x1);

f1=yuanhanshu(x1);

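lr=llr*lr; % geometric decay: step k uses 0.001*0.99^(k-1), so all learning rates together sum to less than 0.001/(1-0.99)=0.1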

f0=f1;

x0=x1;

g0=g1;

N(i,:) = x0;

M(i,:) = f0;

end

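disp(x0) % final iterate

disp(f0) % final function value

plot(N,M) % plots f against each coordinate of x along the path

Revised AdaGrad version (I still can't get this right; the plot looks off)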

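clear all

clc

% AdaGrad: each coordinate's step is scaled by the root of its accumulated squared gradients

x0=[-2 2]; % starting point

f0=yuanhanshu(x0);

g0=tidu(x0);

lr=0.001; % base learning rate (stays fixed in AdaGrad)

n=200; % number of iterations

epsilon=1e-10; % small constant that avoids division by zero

ssum=zeros(size(x0)); % running sum of squared gradients, one entry per coordinate

N=zeros(n,2); % iterates, one row per step

M=zeros(n,1); % function values, one per step

for i=1:n

ssum=ssum+g0.^2; % accumulate the squared gradient

x1=x0-lr*g0./(sqrt(ssum)+epsilon); % per-coordinate scaled step; each coordinate moves by at most lr

g1=tidu(x1);

f1=yuanhanshu(x1);

f0=f1;

x0=x1;

g0=g1;

N(i,:) = x0;

M(i,:) = f0;

end

disp(x0) % final iterate

disp(f0) % final function value

plot(N,M) % plots f against each coordinate of x along the path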
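For reference, the AdaGrad update as it is usually stated keeps the base learning rate fixed and divides each coordinate of the step by the root of that coordinate's accumulated squared gradients. The notation below is an assumption for illustration: $g_t$ is the gradient at $x_t$ (what `tidu` returns), $G_t$ is the running sum of squared gradients (the role `ssum` plays), $\eta$ corresponds to `lr`, $\epsilon$ is a small positive constant, and the product, square root and division are all taken coordinate by coordinate.

```latex
\begin{aligned}
G_t &= G_{t-1} + g_t \odot g_t, \qquad G_0 = 0,\\
x_{t+1} &= x_t - \eta \, \frac{g_t}{\sqrt{G_t} + \epsilon}.
\end{aligned}
```

Since $|g_{t,i}| \le \sqrt{G_{t,i}}$ for every coordinate $i$, no coordinate moves by more than $\eta$ in a single iteration. With `lr=0.001` and `n=200` that caps the total travel at $0.2$ per coordinate, which is far short of the distance from the start $(-2, 2)$ to the minimizer $(1, 1)$ of this function. Under this rule a flat-looking plot is therefore expected for these settings, and a larger `lr` or more iterations would be needed to see convergence.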
